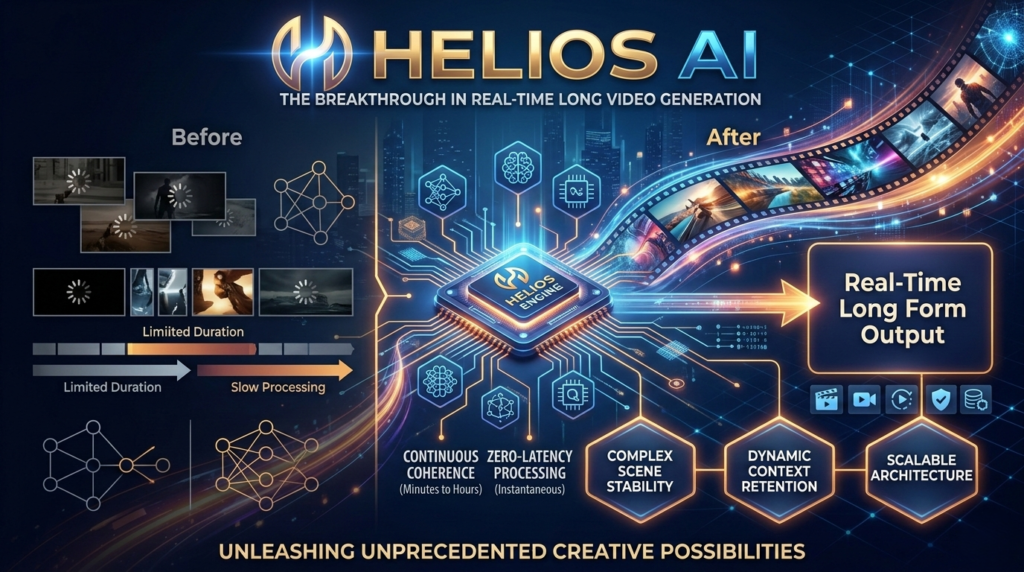

The world of AI video generation is moving at breakneck speed, but one hurdle has remained stubbornly high: generating long, high-quality videos in real time. Most current models are computationally expensive, often requiring massive GPU clusters to produce even a few seconds of footage. When they do attempt longer sequences, they frequently suffer from “drifting,” a phenomenon where the video loses its initial subject or becomes a blurry mess over time.

Enter Helios AI, a 14B-parameter autoregressive diffusion model developed by researchers at Peking University and ByteDance. It is the first model of its scale to reach 19.5 FPS (frames per second) on a single NVIDIA H100 GPU. It doesn’t just make video; it makes minute-scale, high-quality video in real time without the standard “crutches” of modern AI acceleration.

What Makes Helios AI a Game-Changer?

To understand the impact of Helios AI, we have to look at how traditional video models operate. Most rely on heavy optimization techniques like KV-caching, quantization, or sparse attention to speed up inference. While effective, these methods often come with a trade-off in visual fidelity or architectural complexity.

Helios AI takes a different path. It reaches real-time performance through architectural changes and infrastructure-level optimizations rather than these add-on techniques. The same model also supports Text-to-Video (T2V), Image-to-Video (I2V), and Video-to-Video (V2V) tasks through a unified input representation.

Solving the “Long-Video Drifting” Problem

If you have ever used an AI video generator for a sequence longer than 10 seconds, you’ve likely seen the character’s face melt or the background morph into something unrecognizable. This is drifting. Traditionally, researchers used “heuristics” like self-forcing or keyframe sampling to keep the model on track.

Helios AI drops these heuristics. Instead, the team proposed a training strategy that explicitly simulates drifting during the training phase. Because the model learns to correct its own errors as it trains, Helios AI stays consistent even over minute-long generations. The same strategy addresses repetitive motion at its source, so the last second of your video is as coherent as the first.
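To make the idea of simulating drift during training more concrete, here is a minimal toy sketch in Python. It is not the authors' implementation: the function name `simulate_drift`, the Gaussian blending scheme, and the `max_noise` parameter are all assumptions chosen for illustration. The sketch corrupts the clean history frames a model would condition on, while the training target (not shown) would stay clean.

```python
import numpy as np


def simulate_drift(history, rng, max_noise=0.3):
    """Corrupt clean history frames to imitate accumulated generation error.

    history: float array of shape (T, H, W, C) with values in [0, 1].
    Returns the corrupted history and the corruption level that was applied.
    This is a hypothetical helper, not part of any released Helios code.
    """
    # Sample one corruption strength per call; 0 leaves the history clean.
    level = rng.uniform(0.0, max_noise)
    noise = rng.standard_normal(history.shape)
    # Blend clean frames with Gaussian noise and keep values in range.
    corrupted = np.clip((1.0 - level) * history + level * noise, 0.0, 1.0)
    return corrupted, level


rng = np.random.default_rng(0)
clean_history = rng.random((8, 16, 16, 3))  # 8 toy frames of 16x16 RGB
drifted_history, level = simulate_drift(clean_history, rng)

# A training step would condition on drifted_history while still using the
# clean next frames as the target, so the model practices recovering.
print(drifted_history.shape, round(float(level), 3))
```

The design point is that the model sees imperfect context during training, which is the kind of context it will produce for itself during long autoregressive rollouts.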

Technical Specifications and Performance

Helios AI is notably efficient. Despite being a 14B-parameter model, its computational cost is comparable to, or even lower than, that of many 1.3B-parameter models.

| Feature | Helios AI Performance | Traditional 14B Models |
| --- | --- | --- |
| Inference Speed | 19.5 FPS (Single H100) | Typically < 2-5 FPS |
| Video Length | Minute-scale (60s+) | Mostly 5-10s bursts |
| Drift Management | Native Training Simulation | Self-forcing / Error-banks |
| Optimization | Kernel-level & Architecture | KV-Cache / Quantization |
| Hardware Fit | 4 Models on 80GB VRAM | High VRAM per single instance |

By heavily compressing historical and noisy context and reducing the necessary sampling steps, Helios AI maximizes every clock cycle of the GPU. For developers, this means faster iterations; for creators, it means near-instant feedback on their prompts.
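For a rough sense of what 19.5 FPS means in practice, the arithmetic can be sketched in a few lines of Python. Only the 19.5 FPS figure comes from the article; the playback rates of 16 and 24 FPS are assumptions used for illustration, and the helper names are hypothetical.

```python
GENERATION_FPS = 19.5  # throughput the article reports for a single H100


def frame_budget_ms(generation_fps=GENERATION_FPS):
    """Average wall-clock time spent per generated frame, in milliseconds."""
    return 1000.0 / generation_fps


def generation_time_s(clip_seconds, playback_fps, generation_fps=GENERATION_FPS):
    """Seconds needed to generate a clip of the given length and playback rate."""
    total_frames = clip_seconds * playback_fps
    return total_frames / generation_fps


def keeps_up(playback_fps, generation_fps=GENERATION_FPS):
    """True when frames are produced at least as fast as they are played."""
    return generation_fps >= playback_fps


print(f"per-frame budget: {frame_budget_ms():.1f} ms")
for playback_fps in (16, 24):  # assumed playback rates, not from the article
    seconds = generation_time_s(60, playback_fps)
    print(f"60 s clip at {playback_fps} FPS: {seconds:.1f} s to generate, "
          f"keeps up with playback: {keeps_up(playback_fps)}")
```

Whether generation keeps pace with playback depends on the output frame rate, so it is worth checking that number for the released model before planning a live application.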

Actionable Insights: How to Leverage Helios AI

The release of Helios AI isn’t just a win for researchers; it’s a massive leap for the creator economy. Here is how you can start thinking about integrating this technology into your workflow:

- Rapid Prototyping for Filmmakers: Because Helios AI runs in real time, directors can “pre-viz” entire scenes by simply typing descriptions. You can test lighting, camera angles, and pacing in seconds rather than waiting hours for a render.

- Interactive Social Media Content: The model’s speed opens the door for interactive video experiences. Imagine a stream where the audience’s comments directly and instantly change the video being generated.

- High-Fidelity V2V Transformations: Use the Video-to-Video capabilities of Helios AI to reskin existing footage. You can turn a backyard phone recording into a cinematic sci-fi epic while preserving the original movement and timing.

- Cost-Effective Scaling: Since a GPU with 80GB of VRAM can fit four 14B Helios AI models, businesses can scale their video production pipelines at a fraction of the hardware cost previously required.
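For capacity planning around the four-models-per-GPU figure, a back-of-the-envelope calculation helps. The sketch below is plain arithmetic in Python; it uses decimal gigabytes and counts weights only, both of which are simplifying assumptions, and the helper name `weights_gb` is hypothetical.

```python
PARAMS = 14e9   # parameters per model, from the article
VRAM_GB = 80    # GPU memory, from the article
MODELS = 4      # models per GPU, from the article


def weights_gb(num_params, bytes_per_param):
    """Memory for the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


per_model_budget_gb = VRAM_GB / MODELS
budget_bytes_per_param = per_model_budget_gb * 1e9 / PARAMS
sixteen_bit_gb = weights_gb(PARAMS, 2)

print(f"budget per model: {per_model_budget_gb:.0f} GB")
print(f"budget per parameter: {budget_bytes_per_param:.2f} bytes")
print(f"16-bit weights for one model: {sixteen_bit_gb:.0f} GB")
```

Since 16-bit weights for one 14B model take roughly 28 GB, more than the 20 GB per-model share, the four-model figure presumably relies on memory-saving measures that this article does not detail. Treat the hardware estimate as something to confirm against the released code.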

The Three Pillars of Helios AI Innovation

The success of Helios AI rests on three key dimensions that the research team focused on to break the “speed vs. quality” barrier.

1. Robustness to Contextual Drift

By eliminating the need for keyframe sampling, the model treats the video as a continuous, fluid entity. The training process forces the model to recover from “noisy” or “drifted” states, making it naturally resilient to the errors that usually plague long-form AI content.

2. Radical Computational Efficiency

Standard acceleration techniques like quantization often lose the “soul” of the video—the fine textures and subtle movements. Helios AI proves that you can reach 19.5 FPS through smarter infrastructure. By optimizing the scheduler loop and kernels, the developers ensured that the hardware is never idling.

3. Unified Task Support

Most models are specialized. You have one for T2V and another for I2V. Helios AI uses a unified representation. This means the model understands how to transition between text prompts and image anchors seamlessly, leading to better “Image-to-Video” results where the initial frame’s identity is strictly preserved.
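One way to picture a unified input representation is a single request type whose conditioning frames can be empty, a single image, or a whole clip. The Python sketch below is purely illustrative: `GenerationRequest`, `build_request`, and `task_of` are hypothetical names, and the actual Helios interface may look quite different.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class GenerationRequest:
    prompt: str
    context: np.ndarray  # conditioning frames, shape (T, H, W, C); T may be 0


def build_request(prompt, image=None, video=None, height=64, width=64):
    """Pack text, an optional image, or an optional video into one structure."""
    if image is not None and video is not None:
        raise ValueError("pass an image or a video, not both")
    if video is not None:
        context = np.asarray(video, dtype=np.float32)
    elif image is not None:
        # A single image becomes a one-frame clip.
        context = np.asarray(image, dtype=np.float32)[None, ...]
    else:
        # Text-only requests carry an empty clip.
        context = np.zeros((0, height, width, 3), dtype=np.float32)
    return GenerationRequest(prompt=prompt, context=context)


def task_of(request):
    """Infer the task from how many conditioning frames are present."""
    frames = request.context.shape[0]
    if frames == 0:
        return "T2V"
    return "I2V" if frames == 1 else "V2V"


t2v = build_request("a fox running through snow")
i2v = build_request("the fox starts running", image=np.zeros((64, 64, 3)))
v2v = build_request("same scene, anime style", video=np.zeros((12, 64, 64, 3)))
print(task_of(t2v), task_of(i2v), task_of(v2v))
```

With this kind of layout the downstream model code has one input path, and the task is just a property of the data rather than a separate model.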

Why Real-Time Matters for the Future of AI

We are transitioning from “Generative AI as a tool” to “Generative AI as an interface.” For an interface to be effective, it must be instantaneous. When you move your mouse, the cursor moves immediately. When you prompt Helios AI, the video begins to form immediately.

This low-latency generation is the bridge to AI-generated gaming, real-time VR environments, and personalized media. Helios AI is the first 14B model to cross that bridge without stumbling.

Final Thoughts: The Road Ahead

The team behind Helios AI has committed to releasing the code, the base model, and a distilled version for the community. This open-science approach will likely trigger a wave of new applications and fine-tuned versions optimized for specific styles like animation, realism, or 3D world-building.

As we look toward the future of digital content, Helios AI stands as a testament to the fact that we don’t always need “more power”—sometimes, we just need better architecture. By solving the drift problem and shattering speed records, Helios AI has set a new standard for what we should expect from video generation in 2026 and beyond.