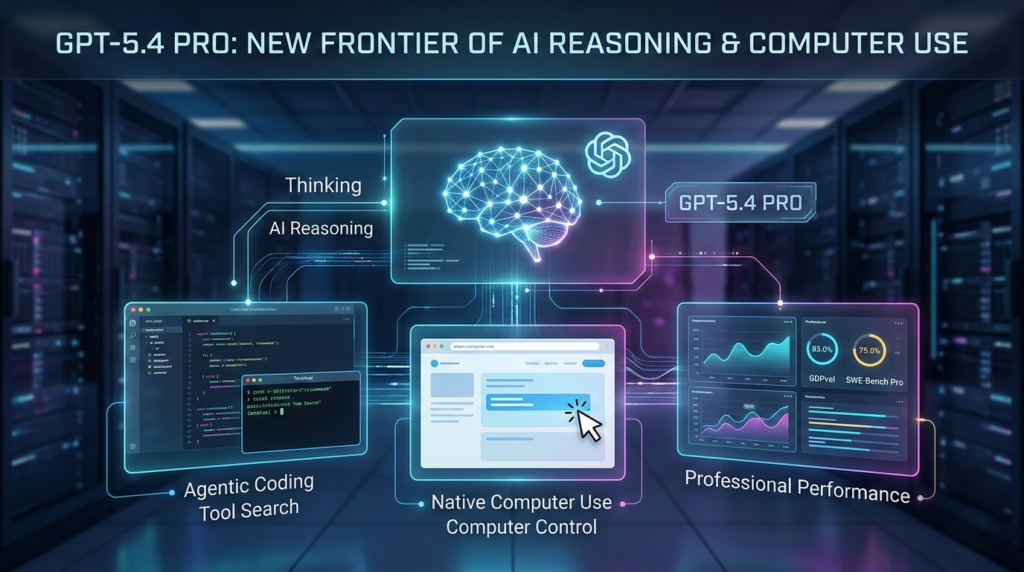

The artificial intelligence landscape just shifted again. OpenAI has officially announced the release of GPT-5.4 Pro, alongside GPT-5.4 Thinking and new integrations within Codex. This isn’t just a minor iteration; it is a specialized leap toward “agentic” workflows where AI moves beyond generating text to actually operating computers and solving professional-grade problems with human-like precision.

If you are a developer, a business leader, or an AI enthusiast, the arrival of GPT-5.4 Pro marks a turning point in how we interact with large language models. This release emphasizes “Thinking” capabilities: a more efficient reasoning engine designed for long, tool-heavy workflows in which previous models tended to lose context.

In this comprehensive guide, we will break down the core features of GPT-5.4 Pro, explore the new native computer-use capabilities, and analyze the pricing structure that defines this high-performance tier.

What is GPT-5.4 Pro?

GPT-5.4 Pro is OpenAI’s most capable frontier model to date, specifically engineered for professional work that requires maximum performance on complex tasks. While the standard GPT-5.4 (available as “Thinking” in ChatGPT) offers a balance of speed and intelligence, the GPT-5.4 Pro version is built for high-stakes environments where accuracy is non-negotiable, as measured by benchmarks like GDPval and SWE-Bench Pro.

The “Thinking” aspect of this model refers to its ability to iterate internally before providing an output. By utilizing advanced reasoning paths, GPT-5.4 Pro can identify the best sequence of actions for a given prompt, making it particularly effective for agentic coding and complex tool search.

Key Technical Specifications

- Context Window: Up to 1M tokens in Codex and the API (experimental/opt-in).

- Standard Context: 272K tokens.

- Performance: 83.0% on GDPval (exceeding industry professionals).

- Computer Use: Native ability to read screenshots and issue keyboard/mouse actions.

Native Computer Use: The Game Changer

Perhaps the most significant leap in GPT-5.4 Pro is its native computer-use capability. Unlike previous iterations that required complex third-party wrappers, GPT-5.4 Pro can now interact with operating systems directly.

According to the latest benchmarks, the model achieves a 75.0% success rate on OSWorld-Verified. In practice, GPT-5.4 Pro can:

- Write Playwright Code: Automate browser testing and web interactions seamlessly.

- Visual Perception: Read screenshots to understand the state of a desktop or application.

- Action Execution: Issue keyboard and mouse commands to navigate software.

- Custom Policies: Developers can set confirmation policies, ensuring the AI asks for permission before taking high-risk actions.

This level of autonomy transforms the model from a “chatbot” into a “digital collaborator.” Imagine a scenario where GPT-5.4 Pro handles your spreadsheet modeling, cross-references data with a web CRM, and drafts a report—all by navigating the software just as a human would.
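To make the confirmation-policy idea concrete, here is a minimal sketch of how an application might gate actions before executing them. Everything in it is an assumption for illustration: the `Action` and `Decision` types, the action names, and the risk sets are hypothetical and are not part of any OpenAI API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    ASK_USER = "ask_user"


@dataclass(frozen=True)
class Action:
    kind: str    # hypothetical action name, e.g. "screenshot" or "delete_file"
    target: str  # human-readable description of what the action touches


# Hypothetical risk classification; adjust to your own application.
READ_ONLY_KINDS = {"screenshot", "read_file", "scroll"}
HIGH_RISK_KINDS = {"delete_file", "send_email", "submit_payment"}


def confirmation_policy(action: Action,
                        default: Decision = Decision.ASK_USER) -> Decision:
    """Decide whether an action may run without human sign-off."""
    if action.kind in READ_ONLY_KINDS:
        return Decision.AUTO_APPROVE
    if action.kind in HIGH_RISK_KINDS:
        return Decision.ASK_USER
    # Unknown actions fall back to the caller's default (ask, unless overridden).
    return default


print(confirmation_policy(Action("screenshot", "desktop")))
print(confirmation_policy(Action("delete_file", "report.xlsx")))
```

Defaulting unknown actions to "ask" is a deliberate fail-safe choice: a newly introduced action type has to be classified explicitly before it can run unattended.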

GPT-5.4 Pro vs. Standard GPT-5.4: A Comparison

Understanding which model to use depends on your specific needs. Below is a comparison table detailing the differences between the new releases and their predecessor.

| Feature | GPT-5.2 | GPT-5.4 (Thinking) | GPT-5.4 Pro |
| --- | --- | --- | --- |
| Primary Use Case | General Knowledge | Efficient Reasoning | Complex Professional Work |
| GDPval Score | 70.9% | ~80% | 83.0% |
| Tool Search | Standard | Optimized | Advanced Agentic Search |
| Context Limit | 128K – 256K | 272K | 1M (Opt-in) |
| Computer Use | Limited | Supported | Best-in-Class |

For most users, the standard GPT-5.4 Thinking model will be the daily driver. However, for enterprise-level data modeling, where the model scored 87.3% on internal spreadsheet benchmarks, GPT-5.4 Pro is the stronger choice.

Tool Search and API Efficiency

Efficiency is a major theme in this release. OpenAI has introduced “Tool Search” for the API, allowing agents to retrieve only the function definitions they need at runtime. This matters most for developers working with large tool surfaces or the Model Context Protocol (MCP), where definitions that are never called would otherwise take up space in the context window.

In testing across 250 MCP Atlas tasks, Tool Search reduced token usage by 47% without sacrificing accuracy. This means that while GPT-5.4 Pro is a more powerful model, it is also designed to be more “token-aware,” helping to keep costs manageable for long-running workflows.
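The idea behind Tool Search can be illustrated with a small client-side sketch: group tool definitions by namespace and pass along only the groups a task needs. This is not the Tool Search API itself. The registry, the tool names, and the four-characters-per-token estimate are all assumptions made for illustration.

```python
import json

# Hypothetical tool definitions grouped by namespace. The names and schemas
# are invented for this example and do not come from any real API.
TOOL_REGISTRY = {
    "crm": [
        {"name": "crm.find_contact",
         "description": "Look up a contact by email address.",
         "parameters": {"email": "string"}},
        {"name": "crm.update_contact",
         "description": "Update fields on an existing contact.",
         "parameters": {"contact_id": "string", "fields": "object"}},
    ],
    "sheets": [
        {"name": "sheets.read_range",
         "description": "Read a rectangular range of cells.",
         "parameters": {"sheet_id": "string", "range": "string"}},
        {"name": "sheets.write_range",
         "description": "Write values into a rectangular range of cells.",
         "parameters": {"sheet_id": "string", "range": "string", "values": "array"}},
    ],
    "billing": [
        {"name": "billing.create_invoice",
         "description": "Create a draft invoice for a customer.",
         "parameters": {"customer_id": "string", "amount": "number"}},
    ],
}


def select_tools(namespaces):
    """Return only the tool definitions that belong to the given namespaces."""
    return [tool for ns in namespaces for tool in TOOL_REGISTRY.get(ns, [])]


def approx_tokens(tools):
    """Crude size estimate: roughly four characters per token."""
    return len(json.dumps(tools)) // 4


all_tools = select_tools(TOOL_REGISTRY.keys())
crm_only = select_tools(["crm"])
print(f"all namespaces: {len(all_tools)} tools, ~{approx_tokens(all_tools)} tokens")
print(f"crm only:       {len(crm_only)} tools, ~{approx_tokens(crm_only)} tokens")
```

The savings in a real deployment depend on how many tools exist and how many a task touches, so treat the printed numbers as a shape of the argument, not a measurement.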

Understanding the Pricing Structure

The power of GPT-5.4 Pro comes at a premium. Rather than a “progressive tax” that rises gradually with context length, OpenAI uses a two-tier system split at the 272K token threshold: one set of rates below it and a higher set above it.

API Pricing (per 1M Tokens)

| Model Tier | Input Cost | Cached Input | Output Cost |
| --- | --- | --- | --- |
| GPT-5.4 (<272K) | $2.50 | $0.25 | $15.00 |
| GPT-5.4 Pro (<272K) | $30.00 | N/A | $180.00 |
| GPT-5.4 (>272K) | $5.00 | $0.50 | $22.50 |
| GPT-5.4 Pro (>272K) | $60.00 | N/A | $270.00 |

Note that the 1M token context window is an experimental feature. To use it, developers must explicitly configure model_context_window and model_auto_compact_token_limit. Without these parameters, the system defaults to the standard 272K window.
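A quick way to see what the tiers mean for a budget is to plug the rates from the pricing table above into a small estimator. The sketch below rests on several assumptions: that a request is billed entirely at the higher tier once its input exceeds 272,000 tokens, that a request at exactly the threshold falls in the lower tier, and that cached-input discounts are ignored. The dictionary keys are labels chosen for this example, not confirmed API model names.

```python
THRESHOLD_TOKENS = 272_000  # ASSUMPTION: "272K" read as 272,000 input tokens

# USD per 1M tokens as (input, output), copied from the pricing table.
# The keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5.4": {"short": (2.50, 15.00), "long": (5.00, 22.50)},
    "gpt-5.4-pro": {"short": (30.00, 180.00), "long": (60.00, 270.00)},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request under the assumed tier rule."""
    # ASSUMPTION: the whole request moves to the higher tier above the threshold.
    tier = "long" if input_tokens > THRESHOLD_TOKENS else "short"
    input_rate, output_rate = PRICES[model][tier]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# 100K input tokens and 10K output tokens on each model:
print(f"{estimate_cost('gpt-5.4', 100_000, 10_000):.2f}")      # 0.40
print(f"{estimate_cost('gpt-5.4-pro', 100_000, 10_000):.2f}")  # 4.80
# 300K input tokens pushes the Pro request into the higher tier:
print(f"{estimate_cost('gpt-5.4-pro', 300_000, 10_000):.2f}")  # 20.70
```

Under these assumptions the same 100K-token request costs twelve times as much on the Pro tier, which is why routing only the hardest tasks to Pro tends to be the sensible default.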

Actionable Insights for Implementing GPT-5.4 Pro

To get the most out of GPT-5.4 Pro, you should refine your implementation strategy. This model responds differently to prompts than the 4-series or early 5-series models.

- Embrace Latency for Logic: The “Thinking” process takes time. Do not use GPT-5.4 Pro for simple Q&A where speed is the priority. Save it for tasks that require multi-step reasoning.

- Optimize Vision Patches: The vision cap has increased to 2500 “patch” tokens (approx. 1600x1600px). If you are sending images for computer-use tasks, resize them to fit within this cap to avoid unnecessary token consumption.

- Use Tool Namespacing: With the new Tool Search feature, group your related functions into namespaces. This allows GPT-5.4 Pro to load only relevant functions, significantly cutting down your API bill.

- Steerable Behavior: Take advantage of the custom confirmation policies. For automated coding tasks, set the model to “High Risk” confirmation for file deletions but “Auto-Approve” for read-only actions.
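For the image-resizing tip above, the arithmetic can be sketched in a few lines. The 1600-pixel limit is the approximate figure quoted in the list. The 32-pixel patch size is an inference from those numbers (a 50 x 50 grid of patches gives 2500) and not a documented value, so treat the token counts as rough estimates.

```python
import math

MAX_SIDE = 1600  # approximate cap quoted above
PATCH_SIZE = 32  # ASSUMPTION: inferred from 2500 patches ~ 1600x1600 (50 x 50)


def fit_within_cap(width: int, height: int, max_side: int = MAX_SIDE):
    """Scale (width, height) down to fit max_side x max_side, keeping aspect ratio."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    # Never upscale: the scale factor is capped at 1.0.
    scale = min(1.0, max_side / width, max_side / height)
    return max(1, round(width * scale)), max(1, round(height * scale))


def approx_patch_tokens(width: int, height: int, patch_size: int = PATCH_SIZE) -> int:
    """Estimate patch tokens as the number of patch_size tiles covering the image."""
    return math.ceil(width / patch_size) * math.ceil(height / patch_size)


original = (3200, 1800)
resized = fit_within_cap(*original)
print(resized)                         # (1600, 900)
print(approx_patch_tokens(*original))  # 5700
print(approx_patch_tokens(*resized))   # 1450
```

The resulting dimensions can be passed to whatever image library you already use for the actual resize step.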

The Future of Coding with Codex and GPT-5.4

The release also brings major updates to Codex. With GPT-5.4 Pro integration, /fast mode now delivers up to 1.5x faster performance. On the SWE-Bench Pro benchmark, which measures the ability of AI to resolve real-world GitHub issues, GPT-5.4 Pro outperformed all previous versions, including the specialized GPT-5.3-Codex.

This suggests that the “Pro” version isn’t just a larger model; it’s a smarter one. Its ability to iterate on longer-running tasks makes it the perfect partner for developers looking to automate the “boring” parts of software engineering—like writing Playwright scripts or debugging complex tool-heavy workflows.

Final Thoughts: Is It Worth the Upgrade?

The arrival of GPT-5.4 Pro signals that we are moving into the era of the “AI Agent.” While the price point for the Pro tier is significantly higher than the standard model, the reported benchmark gains on white-collar work and professional modeling are substantial.

If your workflow involves complex spreadsheets, navigating multiple software interfaces, or managing massive codebases, GPT-5.4 Pro offers a level of competence that finally rivals human professionals. It is no longer just about what the AI can say—it is about what the AI can do.

As OpenAI continues its trend of monthly releases, the window between “cutting edge” and “legacy” is shrinking. Implementing GPT-5.4 Pro today could give your business the competitive edge needed to navigate the rapidly evolving AI economy.