The character of war is changing. Over 2024, the United States Department of Defense (DoD) began a quiet but seismic shift, moving Large Language Models (LLMs) like Claude and GPT-4 from experimentation toward operational planning support. This wasn’t just about drafting memos; it was the dawn of AI in military conflict as a primary strategic asset.

As geopolitical tensions rise from Eastern Europe to the South China Sea, the integration of generative AI into the “OODA Loop” (Observe, Orient, Decide, Act) has moved from science fiction to a battlefield reality. This comprehensive guide explores how sovereign nations are leveraging these models, the technical infrastructure required for “Frontline AI,” and the ethical minefield that follows when algorithms are given the keys to the armory.

1. The Tactical Necessity: Why LLMs are Vital in Modern Warfare

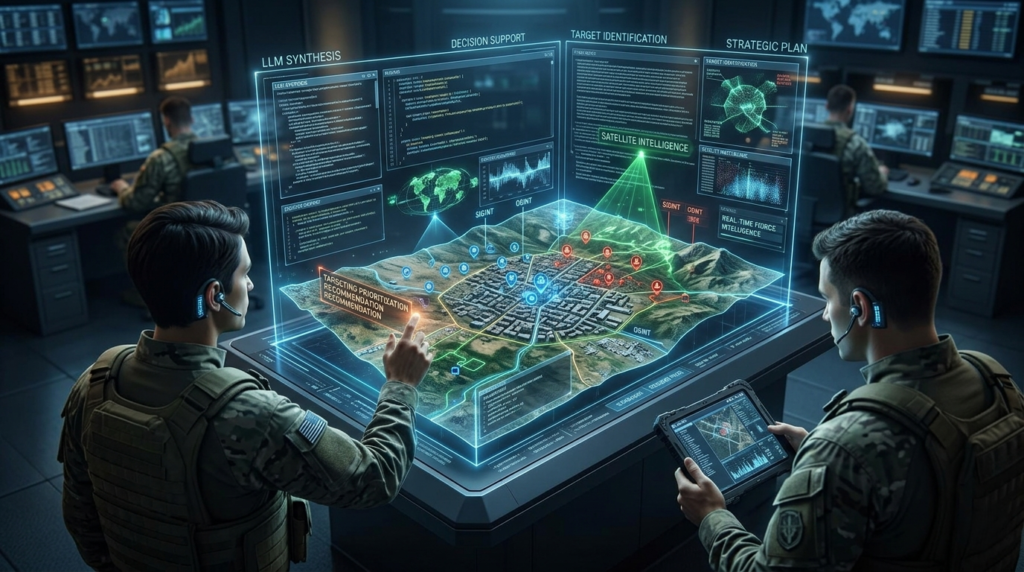

The primary driver for deploying AI in military conflict is the sheer volume of data generated by modern sensors. A single reconnaissance drone can stream gigabytes of high-definition footage every hour. Multiplying that by thousands of assets creates a “data fog” that no human intelligence team can penetrate alone.
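To put the “data fog” in numbers, here is a back-of-the-envelope sketch. The 8 Mbit/s bitrate and the 1,000-drone fleet are illustrative assumptions, not figures from any specific platform.

```python
# Back-of-the-envelope sensor data volume.
# ASSUMPTION: each drone streams HD video at a constant 8 Mbit/s (illustrative only).

def gigabytes_per_hour(bitrate_mbps: float) -> float:
    """Convert a constant bitrate in megabits per second to gigabytes per hour."""
    bits_per_hour = bitrate_mbps * 1_000_000 * 3600
    return bits_per_hour / 8 / 1_000_000_000  # bits -> bytes -> decimal GB


per_drone_gb = gigabytes_per_hour(8)    # 3.6 GB every hour
fleet_tb = per_drone_gb * 1000 / 1000   # 1,000 drones, expressed in TB per hour
print(f"{per_drone_gb:.1f} GB/h per drone, {fleet_tb:.1f} TB/h across the fleet")
```

At that assumed rate, a fleet of a thousand drones produces terabytes of footage every hour, far more than any analyst team can watch in real time.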

From Information Overload to Actionable Intelligence

LLMs excel at synthesizing unstructured data. In a conflict scenario, an LLM can ingest:

- Real-time radio transcripts (SIGINT).

- Satellite imagery descriptions.

- Open-source intelligence (OSINT) from social media.

- Historical tactical reports.

By processing these simultaneously, the AI provides a “summarized battlefield awareness” that allows commanders to see patterns—such as the movement of a specific battalion—long before a human analyst would connect the dots.

Reducing the “Sensor-to-Shooter” Timeline

In traditional warfare, identifying a target and authorizing a strike could take hours. AI-enabled systems such as Palantir’s Maven Smart System fuse feeds from satellites, drones, and other sensors to help analysts assemble targeting recommendations far faster than manual workflows allow. In an era of hypersonic missiles, this speed is not just an advantage; it is a survival requirement.

2. Global Case Studies: Sovereign Strategies for AI Deployment

Different nations view the role of LLMs through the lens of their specific security challenges. The race for “Sovereign AI” is the new space race.

The United States: The Integration of “Agentic” Workflows

The U.S. military is focusing on “Agentic AI”—models that don’t just answer questions but execute multi-step tasks. Through the “Global Information Dominance Experiments” (GIDE), the DoD is testing how LLMs can automate logistics, such as predicting fuel needs for a carrier strike group based on real-time combat maneuvers.

Ukraine: The Proving Ground for OSINT AI

The conflict in Ukraine has become a laboratory for AI in military conflict. Low-cost LLM integrations are being used to scrape Russian Telegram channels to geolocate troop positions. This “democratized intelligence” allows smaller forces to punch significantly above their weight by using AI to filter out propaganda and find tactical truths.

China: The Doctrine of “Intelligentization”

The People’s Liberation Army (PLA) views AI as the core of “Intelligentized Warfare.” Unlike the Western focus on “human-in-the-loop,” Chinese military researchers are exploring more autonomous applications, including AI-driven swarms and cognitive warfare—using LLMs to generate massive amounts of disinformation to confuse an enemy’s civilian population during a strike.

3. The Technical Backbone: RAG and Edge Computing in the Field

Deploying an LLM in a war zone is vastly different from running it in a temperature-controlled data center in Silicon Valley.

Retrieval-Augmented Generation (RAG) for Tactical Accuracy

One of the biggest risks of using AI in military conflict is “hallucination.” A commander cannot afford to have an AI “invent” a nearby fuel depot. To mitigate this, militaries use RAG: the system first retrieves relevant entries from a specific, verified database of tactical maps and intelligence logs, and the LLM is instructed to answer only from that retrieved material. This grounds each output in vetted, citable sources, although it reduces rather than eliminates the risk of fabrication.
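The grounding step can be sketched in a few lines of Python. This is a minimal illustration, not any military system’s implementation: the “verified store” is an in-memory list, relevance is naive keyword overlap (production RAG typically uses vector embeddings), and the actual LLM call is omitted. `Doc`, `retrieve`, and `build_prompt` are hypothetical names.

```python
# Minimal sketch of retrieval-grounded prompting.
# ASSUMPTIONS: an in-memory "verified store", naive keyword-overlap relevance
# (production RAG usually uses vector embeddings), and no actual LLM call.
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str


def tokenize(s: str) -> set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in s.split() if w.strip(".,?!")}


def retrieve(query: str, store: list[Doc], min_overlap: int = 2) -> list[Doc]:
    """Return verified docs sharing at least `min_overlap` words with the query, best first."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d.text)), d) for d in store]
    hits = [(score, d) for score, d in scored if score >= min_overlap]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in hits]


def build_prompt(query: str, docs: list[Doc]) -> str | None:
    """Constrain the model to retrieved sources; None signals 'do not ask the model'."""
    if not docs:
        return None
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return (
        "Answer ONLY from the sources below and cite their IDs. "
        "If they do not contain the answer, reply 'Data not available'.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


store = [
    Doc("LOG-17", "Supply depot Alpha reported full fuel stock at 0600."),
    Doc("LOG-18", "Bridge on Route 4 closed for repairs."),
]
question = "Which depot has fuel stock?"
print(build_prompt(question, retrieve(question, store)))
```

When nothing in the store matches, `build_prompt` returns `None`, so the application can reply “Data not available” without calling the model at all.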

The Move to the “Tactical Edge”

Cloud-based AI is vulnerable; a satellite link can be jammed. Therefore, the goal is “Edge AI”: running smaller, highly efficient LLMs on ruggedized hardware inside tanks, planes, or even handheld devices. This ensures that even if a unit is cut off from headquarters, its AI-driven decision support remains online.
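A rough sizing rule shows why smaller, more efficient models matter at the edge: weight memory is approximately parameter count × bits per weight ÷ 8. The 7-billion-parameter model below is a hypothetical example, and the estimate ignores activations, the KV cache, and runtime overhead.

```python
# Rough weight-memory estimate for an LLM.
# Ignores activations, KV cache, and runtime overhead, so real usage is higher.

def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate storage for model weights in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


# Hypothetical 7-billion-parameter model at different numeric precisions.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(7e9, bits):.1f} GB")
```

Going from 16-bit to 4-bit weights cuts the footprint roughly fourfold (about 14 GB down to 3.5 GB here), which is what brings ruggedized, memory-limited hardware into range.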

4. Economic Impact: The Modern Warfare Market Growth

The surge in AI in military conflict has triggered a massive shift in defense spending. Traditional “kinetic” weapons (tanks and ships) are becoming secondary to “digital” weapons.

Market Projections (2025-2032)

- Total Market Value: Expected to exceed $135 Billion by 2032.

- CAGR: A staggering 38.5%, far outpacing traditional aerospace growth.

- Investment Focus: An estimated 60% of R&D in top defense firms is now directed toward software-defined warfare and AI integration.
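The two headline figures can be sanity-checked against each other. Assuming seven years of compounding from 2025 to 2032 (our reading of the date range), a 38.5% CAGR that ends at $135 billion implies a 2025 starting point of roughly $13.8 billion:

```python
# Back out the starting value implied by an end value and a compound annual growth rate.
# ASSUMPTION: 2025 -> 2032 is treated as 7 compounding years.

def implied_start_value(end_value: float, cagr: float, years: int) -> float:
    """Invert end = start * (1 + cagr) ** years."""
    return end_value / (1 + cagr) ** years


base_2025 = implied_start_value(end_value=135.0, cagr=0.385, years=7)  # $ billions
print(f"Implied 2025 market: ${base_2025:.1f}B")
```

In other words, the projection implies the market grows almost tenfold in seven years, which is a useful reality check when comparing forecasts from different sources.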

The Rise of “Defense-Tech” Unicorns

Silicon Valley startups are increasingly becoming “Prime Contractors.” Companies that specialize in computer vision and LLMs are securing multi-billion-dollar contracts that used to go exclusively to traditional hardware manufacturers.

5. Ethical Paradoxes and the “Governance Gap”

As we integrate AI in military conflict, we face a moral crisis: Can a machine understand the Laws of Armed Conflict (LOAC)?

The Risk of Automation Bias

“Automation bias” occurs when a human operator stops questioning the AI’s suggestions because “the machine is always right.” If an LLM misidentifies a civilian convoy as a military target, and a pressured commander hits “confirm,” who is responsible?
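One common mitigation is to design the review step so that a single click is never enough. The sketch below is hypothetical (the `Recommendation` fields, the 0.9 threshold, and the escalation rule are assumptions for illustration): it requires a written justification for every decision and refuses to let weakly supported recommendations be approved outright. A model’s self-reported confidence is not necessarily calibrated, so the threshold is a speed bump, not a guarantee.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"
    ESCALATED = "escalated"  # sent to a second reviewer instead of being approved


@dataclass
class Recommendation:
    summary: str
    confidence: float  # model-reported score in [0, 1]; ASSUMPTION: not necessarily calibrated
    sources: list[str] = field(default_factory=list)  # IDs of supporting evidence


def review(rec: Recommendation, operator_approves: bool, justification: str,
           min_confidence: float = 0.9) -> Decision:
    """Record a human decision on an AI recommendation; nothing is ever auto-approved."""
    if not justification.strip():
        raise ValueError("A written justification is required for every decision.")
    weakly_supported = not rec.sources or rec.confidence < min_confidence
    if operator_approves and weakly_supported:
        # Weak evidence cannot be approved by a single click: route it to a second reviewer.
        return Decision.ESCALATED
    return Decision.APPROVED if operator_approves else Decision.REJECTED
```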

The Sovereign AI Privacy Dilemma

When a nation uses a commercial LLM (like GPT-4), it risks “data leakage.” Every prompt sent to a commercial server could potentially be used to train the model or be intercepted. This is why nations like India and France are investing heavily in Sovereign LLMs: models built and hosted entirely within their own borders on secure government hardware.

6. How to Implement Military-Grade SEO for AI Content

For defense analysts and tech bloggers, writing about AI in military conflict requires a specific SEO strategy to reach decision-makers.

Logical Hierarchy and Keyword Usage

Using a primary focus keyword like AI in military conflict helps a page rank for high-intent searches. However, the content must also be structured with H2 and H3 tags so that search engines can parse the complex technical data.

Use of Comparison Tables

To make the data “skimmable” for high-level executives, always include comparison tables that break down the capabilities of different nations or technologies.

| Technology | Role in Conflict | Key Advantage |
| --- | --- | --- |
| LLMs | Data synthesis & Planning | Rapid OODA loop |
| Computer Vision | Targeting & Navigation | Precision in strikes |
| Generative AI | Info-ops & Decoys | Mass-scale deception |

7. Actionable Insights for the Future of Defense

If your organization is looking to navigate the era of AI in military conflict, consider these three pillars:

- Prioritize Interoperability: AI is only useful if it can read data from every branch of the military. Break down data silos now.

- Invest in Human-Centric AI: The goal should not be to replace the commander, but to reduce their “cognitive load.” Design interfaces that explain why a decision was reached.

- Secure the Supply Chain: Software is the new ammunition. Ensure your AI models are “clean” from adversarial poisoning or backdoors.
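One basic supply-chain control is to pin a cryptographic hash for every approved model file and refuse to load anything that does not match. This is a minimal sketch using Python’s standard `hashlib`; the function names are hypothetical, and a matching hash only proves the file is the one you approved. It cannot detect poisoning or a backdoor introduced before that hash was recorded.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in 1 MiB chunks so large weight files never sit fully in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_file(path: Path, expected_sha256: str) -> None:
    """Raise if the file's SHA-256 differs from the value pinned at approval time."""
    actual = sha256_of(path)
    # compare_digest avoids leaking how many leading characters matched.
    if not hmac.compare_digest(actual, expected_sha256.strip().lower()):
        raise RuntimeError(f"Checksum mismatch for {path}; refusing to load.")


# Hypothetical usage:
# verify_model_file(Path("model.safetensors"), "<pinned sha256 hex digest>")
```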

8. Conclusion: The Algorithmic Arms Race

The integration of AI in military conflict is no longer optional. As LLMs become faster and more accurate, the “digital divide” between advanced militaries and those relying on legacy systems will widen. The challenge for the coming decade will be to harness this power to enhance national security while establishing international norms that prevent an unbridled algorithmic arms race.

Warfare has always been a contest of wills; it is now becoming a contest of compute.

Frequently Asked Questions: AI in Military Conflict

1. How are Large Language Models (LLMs) specifically used in tactical decision-making?

In the context of AI in military conflict, LLMs serve as high-speed reasoning engines. Unlike traditional software that follows rigid “if-then” logic, an LLM can parse unstructured data—such as intercepted radio chatter or handwritten field reports—and synthesize it into a coherent tactical summary.

Tactically, this allows for “Course of Action” (COA) generation. A commander can prompt the system: “Based on current fuel levels and enemy anti-air positions, suggest three extraction routes for the 4th Battalion.” The LLM then cross-references geographic data with intelligence logs to provide weighted recommendations.

2. What is “Sovereign AI” and why is it critical for national security?

Sovereign AI refers to a nation’s ability to produce, host, and maintain its own artificial intelligence infrastructure without relying on foreign technology providers. For any nation deploying AI in military conflict, using a “public” or “foreign-hosted” LLM is a massive security risk.

If a defense department uses a commercial API, their sensitive prompts—detailing troop locations or strategic vulnerabilities—could be stored on external servers or used to train the next iteration of the model. Sovereign AI ensures that the “data umbilical cord” stays within national borders, utilizing air-gapped data centers and indigenous hardware.

3. Can AI in military conflict operate without an internet connection?

Yes, this is known as Edge AI. In a contested electronic warfare environment, satellite links are often jammed or destroyed. To remain effective, LLMs are being “quantized” (compressed by storing their weights at lower numeric precision, such as 8-bit or 4-bit integers instead of 16-bit floating point) so they can run on local, ruggedized servers embedded in command vehicles or aircraft.

By running AI in military conflict on the edge, units maintain their decision-support capabilities even when totally disconnected from the global web. This is a primary focus for modern systems architects who prioritize “offline-first” software resilience.
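To make “quantized” concrete, here is a minimal symmetric (absmax) int8 scheme for a single tensor in pure Python. It is an illustrative assumption, not how any particular defense system works; production toolchains typically use finer-grained per-channel or per-group scales and 4-bit formats, but the trade of a small rounding error for a much smaller footprint is the same.

```python
# Symmetric per-tensor int8 quantization: store small integers plus one float scale.

def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127], anchoring the scale on the largest magnitude."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats; each value is off by at most about scale / 2."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 0.333]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
print(q)         # one byte per value instead of four for float32
print(restored)  # close to the originals, within half a quantization step
```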

4. What are the primary ethical concerns regarding autonomous weapons?

The integration of AI in military conflict brings up the “Black Box” problem. If an AI suggests a target that results in civilian casualties, the lack of transparency in how the model reached that conclusion creates a legal and moral vacuum.

Key ethical concerns include:

- The Accountability Gap: Who is responsible for an algorithmic error?

- Target Misidentification: LLMs can “hallucinate” or misinterpret context, potentially mistaking a civilian gathering for a military briefing.

- Lowering the Threshold for War: If AI makes warfare “cleaner” or less risky for one’s own soldiers, leaders might be more inclined to engage in kinetic conflicts.

5. How does RAG (Retrieval-Augmented Generation) prevent AI hallucinations in war?

RAG is the “safety rail” for AI in military conflict. Instead of letting an LLM rely on its general training data (which might be outdated or inaccurate), RAG forces the model to look at a specific, verified “Knowledge Base” first.

For example, when asked about enemy positions, a RAG-enabled system can be configured to pull data only from the last 15 minutes of satellite telemetry and scout reports. If the answer isn’t in that verified data, the system is instructed to reply “Data not available” rather than guess.
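The recency rule can be sketched as a filter that runs before anything reaches the model. The `Report` type, source labels, and output format are illustrative assumptions; the 15-minute window and the “Data not available” fallback mirror the example in the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Report:
    source: str            # e.g. "satellite" or "scout"; hypothetical labels
    observed_at: datetime  # timezone-aware observation time
    text: str


def build_context(reports: list[Report], now: datetime,
                  window: timedelta = timedelta(minutes=15)) -> str:
    """Keep only reports observed within `window` of `now`; otherwise refuse."""
    fresh = [r for r in reports if timedelta(0) <= now - r.observed_at <= window]
    if not fresh:
        return "Data not available"
    fresh.sort(key=lambda r: r.observed_at, reverse=True)  # newest first
    return "\n".join(f"{r.observed_at.isoformat()} [{r.source}] {r.text}" for r in fresh)


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
reports = [
    Report("scout", now - timedelta(minutes=5), "Road clear at checkpoint 3."),
    Report("satellite", now - timedelta(hours=2), "Cloud cover over sector B."),
]
print(build_context(reports, now))  # only the 5-minute-old scout report survives
```

Filtering before generation, rather than asking the model to ignore stale data, means outdated reports never enter the prompt in the first place.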

6. Which countries are currently leading in military AI deployment?

While many nations are researching the technology, the United States and China are generally regarded as the leaders in active military AI deployment, with Russia and India also investing heavily.

- The US leads in software integration (Project Maven).

- China leads in “Intelligentized” hardware and autonomous swarm theory.

- India is rapidly catching up with a focus on border surveillance and the “iDEX” (Innovations for Defence Excellence) initiative, aiming for indigenous AI solutions.

7. How does AI impact Electronic Warfare (EW) and Signal Jamming?

AI has revolutionized EW by enabling “Cognitive Radio.” Traditional jamming targets a specific frequency. However, an AI-powered signal processor can detect an enemy’s attempt to jam a frequency and instantly “hop” to a clear channel, or even generate “noise” that mimics friendly signals to confuse enemy sensors. This makes AI in military conflict a shield as much as it is a sword.
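The core decision in a frequency-agile radio can be illustrated with a toy rule, similar in spirit to the adaptive frequency hopping used by Bluetooth: stay on the current channel while it is quiet, and move to the quietest sensed channel when interference rises. The noise readings, the -85 dBm threshold, and the function name are hypothetical; real cognitive radios perform spectrum sensing in the radio hardware and use far richer policies.

```python
def pick_channel(noise_dbm: dict[int, float], current: int,
                 threshold_dbm: float = -85.0) -> int:
    """Return the channel to use next, given the latest per-channel noise readings (dBm)."""
    # Lower dBm means a quieter channel; an unmeasured current channel is treated as unusable.
    if noise_dbm.get(current, float("inf")) <= threshold_dbm:
        return current
    # Current channel is noisy (possibly jammed): hop to the quietest channel sensed.
    return min(noise_dbm, key=noise_dbm.get)


readings = {1: -60.0, 6: -92.0, 11: -88.0}
print(pick_channel(readings, current=1))  # channel 1 is noisy, so it hops to channel 6
```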

8. What is the role of “Vibe Coding” or Natural Language in modern defense?

“Vibe Coding”—the ability to build software using natural language—is reaching the frontlines. It allows non-technical officers to create custom situational awareness tools on the fly. Instead of waiting weeks for a software update, a soldier can use an LLM to write a script that scrapes local weather data and correlates it with drone flight paths, creating a custom tool in minutes.

9. Will AI eventually replace human generals?

The consensus among defense experts is “No.” Current doctrine focuses on Human-Machine Teaming (HMT). While AI can process data orders of magnitude faster than a human, it lacks “strategic intuition” and moral judgment. The AI provides the options, but the human retains the authority.

10. How can defense contractors improve their Rank Math SEO for AI topics?

For professionals at firms like Kalinga.ai, the key to SEO in this niche is Topical Relevance.

- Primary Keyword: Use AI in military conflict in the H1 and the first 100 words.

- Keywords to Include: Focus on “RAG,” “Edge Computing,” and “Sovereign AI.”

- Readability: Break down complex technical jargon with bullet points and comparison tables, as search engines favor content that simplifies complex “How-to” queries.
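A quick self-check for the keyword guidelines above can be scripted. This is a simple heuristic of our own, not Rank Math’s scoring logic; the 100-word window comes from the guideline, and the function names are hypothetical.

```python
import re


def keyword_in_opening(text: str, keyword: str, first_n_words: int = 100) -> bool:
    """True if `keyword` appears within the first `first_n_words` words (case-insensitive)."""
    opening = " ".join(text.split()[:first_n_words]).lower()
    return keyword.lower() in opening


def keyword_count(text: str, keyword: str) -> int:
    """Count non-overlapping, case-insensitive occurrences of `keyword`."""
    return len(re.findall(re.escape(keyword), text, flags=re.IGNORECASE))


draft = "The character of war is changing. AI in military conflict is now a strategic asset."
print(keyword_in_opening(draft, "AI in military conflict"), keyword_count(draft, "AI in military conflict"))
```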

Final Thoughts: The Strategic Imperative of AI in Military Conflict

As we navigate the complexities of 2026, it is clear that the integration of AI in military conflict is not merely a technological upgrade—it is a fundamental shift in the geometry of power. For decades, military superiority was defined by kinetic mass: who had the most tanks, the largest naval fleets, or the most advanced stealth fighters. Today, that paradigm has been superseded by “informational mass.” The victor is no longer the side that fires the most rounds, but the side that can process, synthesize, and act upon data with the greatest velocity.

The transition to an algorithmic defense posture represents the most significant change in warfare since the invention of gunpowder. When we discuss AI in military conflict, we are talking about a system that collapses the time between a sensor detecting a threat and a commander making a decision. In the high-stakes environments of modern border security and regional disputes, a delay of even sixty seconds can be the difference between a successful defense and a catastrophic loss. This “hyper-war” reality is what makes LLMs and RAG-based systems indispensable.

The Dual Challenge: Innovation vs. Sovereignty

For nations like India and organizations like Kalinga AI, the challenge is twofold. First, there is the technical hurdle of building “Tactical AI” that can survive the “fog of war.” This requires moving away from fragile, cloud-dependent models and toward robust, edge-computed solutions that can operate in air-gapped environments. Second, there is the geopolitical necessity of Sovereign AI. As this blog has detailed, relying on foreign-hosted algorithms for national security is a strategic vulnerability. True defense independence in the 21st century is defined by the ownership of one’s own model weights, training sets, and compute infrastructure.

The Human Element in an Automated Age

Despite the rapid advancement of autonomous systems, the “Final Thought” must always return to the human in the loop. The goal of deploying AI in military conflict should never be to outsource the moral burden of war to an algorithm. Instead, the focus must remain on “Augmented Intelligence”—using LLMs to strip away the “cognitive noise” of the battlefield so that human leaders can focus on the ethical, political, and strategic nuances that a machine can never truly grasp.

We are moving toward a future where “Vibe Coding” and natural language interfaces will allow every soldier to become a data analyst. This democratization of tech capability will empower smaller, more agile units to operate with the intelligence of a full headquarters. However, this power must be tempered with rigorous governance and “Explainable AI” (XAI) frameworks to ensure that every algorithmic recommendation is transparent and accountable.

The Road Ahead

The “Algorithmic Arms Race” is already here. Whether it is through the deployment of drone swarms, the use of LLMs for signals intelligence, or the creation of sovereign national security stacks, the nations that master AI in military conflict will set the rules for the next century of global stability. For defense strategists, systems architects, and policymakers, the mandate is clear: innovate with urgency, but govern with caution. The machines are ready for the battlefield; the question remains whether our international legal and ethical frameworks are ready for the machines.

In the end, AI is a tool of immense potential. Used correctly, it can provide a level of precision that reduces collateral damage and saves lives. Used recklessly, it could accelerate conflict beyond human control. As we continue to build and deploy these systems at Kalinga AI and beyond, our commitment must be to a future where technology serves as a guardian of peace, ensuring that even in times of conflict, human judgment remains the ultimate authority.