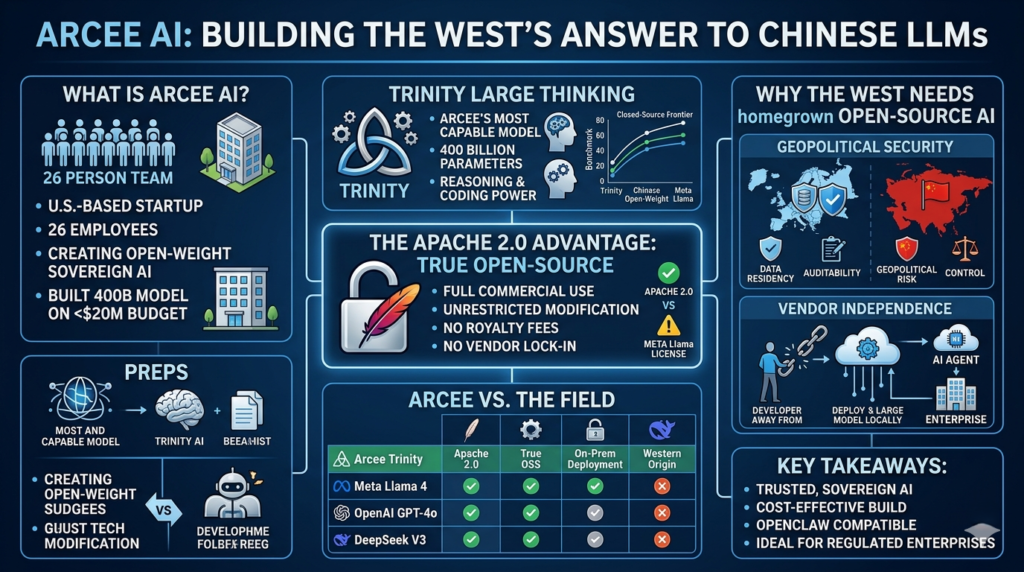

A tiny 26-person startup just released one of the most capable open-weight reasoning models ever built outside China — and it may matter more than any benchmark score suggests.

If you’re a developer, enterprise architect, or AI product builder who wants a powerful open-source AI model without handing control to Big Tech or Beijing, Arcee AI deserves your full attention. The company just launched Trinity Large Thinking, a 400-billion-parameter reasoning model released under the Apache 2.0 license, and the implications go far beyond a benchmark leaderboard.

What Is Arcee AI?

Arcee AI is a 26-person U.S. startup that builds open-source AI models designed for enterprise deployment, on-premises use, and developer customization — without proprietary lock-in.

Founded with a mission to give Western companies a credible, sovereign AI alternative, Arcee has done something few companies its size have attempted: it built a 400B-parameter large language model from scratch on a reported budget of about $20 million. That is a small fraction of what the largest labs spend on frontier training runs. Yet Arcee’s models are competitive with many top-tier open-weight models in the benchmark results the company has shared.

Arcee offers two access modes:

- On-premises download: Companies download the weights and run the model on their own infrastructure, keeping data fully in-house.

- Cloud API: A hosted version for teams that want convenience without full self-hosting.

This dual-access model is especially practical for organizations with strict data governance requirements, which can keep sensitive workloads entirely on hardware they control.

Why the West Needs a Homegrown Open-Source AI Model

The AI landscape in 2026 is bifurcated. On one side, you have the closed-source giants — Anthropic, OpenAI, Google DeepMind — whose models are powerful but whose terms of service, pricing, and product decisions you cannot control. On the other, you have a wave of capable Chinese open-weight models that are genuinely impressive but come with a different kind of risk.

Arcee is trying to claim the middle ground: a fully open, Western-built, permissively licensed open-source AI model that no single gatekeeper controls.

The Risk of Chinese Open-Weight Models

Chinese-developed open-weight models have made remarkable progress and are frequently competitive with Western counterparts on benchmarks. But their adoption among U.S. and European enterprises is constrained by legitimate concerns.

Critics and policy researchers have flagged two concerns. Using Chinese-hosted versions of these models may route sensitive data through infrastructure with ties to the Chinese government, and even self-hosted weights create dependency on a technical ecosystem that could be restricted or modified under political pressure. The Center for European Policy Analysis has specifically flagged the possibility of an “AI kill switch” embedded in Chinese open-source projects as a non-trivial risk.

For enterprises operating in defense, healthcare, finance, or critical infrastructure, this risk profile is disqualifying — regardless of how good the model is.

The Problem with Big Lab Dependency

Closed-source models from the major American labs introduce a different kind of fragility: vendor dependency.

A recent example made this viscerally clear. Claude, Anthropic’s flagship model, had become a popular backbone for the open-source AI agent tool OpenClaw. Then Anthropic abruptly announced that existing Claude Code subscriptions would no longer cover OpenClaw usage — users would need to pay extra. Developers who had built entire workflows on Claude suddenly faced unexpected costs and broken assumptions.

This is exactly the kind of rug-pull that a truly open-source AI model is designed to prevent. When you host and control your own model weights, no one can change the pricing structure on you overnight.

Arcee’s CEO Mark McQuade has pointed out that his company’s models have, in fact, become among the top models used with OpenClaw — precisely because developers want an alternative they can rely on without fearing a policy change from a commercial cloud provider.

Trinity Large Thinking — Arcee’s Most Capable Model Yet

Trinity Large Thinking is Arcee’s new reasoning model, and it’s the company’s most ambitious release to date. According to McQuade, it is the most capable open-weight model ever released by a non-Chinese company, a bold claim that Arcee supports with its own benchmark data.

How Does It Benchmark?

Arcee shared benchmark data with TechCrunch ahead of the launch. The results show Trinity Large Thinking performing comparably to top-tier open-weight models currently available. It does not beat the closed-source frontier models from Anthropic or OpenAI, and it isn’t positioned to — that’s a different race.

What it does do is compete meaningfully with the best fully open models available today, including strong showings on reasoning, coding, and instruction-following tasks. For teams that need a capable, controllable open-source AI model they can actually run and fine-tune, those results suggest it is good enough for many production workloads.

What Makes the Apache 2.0 License Matter

Not all “open source” is created equal.

Meta’s Llama 4, the dominant Western open-weight model by most measures, ships under a custom license that the Open Source Initiative has explicitly stated does not qualify as true open source. It requires a separate license from Meta above a monthly-active-user threshold, requires distributed derivative models to carry Llama naming and attribution, binds users to an acceptable use policy, and adds other conditions that can surprise teams mid-deployment.

Arcee’s Trinity models ship under Apache 2.0 — the gold standard for permissive open-source licensing. This means:

- Commercial use: Fully permitted.

- Modification: Fully permitted.

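- Redistribution: Permitted, provided you include a copy of the license and preserve the required notices.

- No royalty or usage fees.

- No acceptable use policy or user-count thresholds layered on top of the license terms.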

For legal, compliance, or procurement teams evaluating AI infrastructure, this distinction is not a technicality: it can determine whether a model is approved for use at all.

Arcee AI vs. The Field: A Comparison

Understanding where Arcee fits requires comparing it to the alternatives across the dimensions that matter for enterprise and developer adoption.

| Dimension | Arcee Trinity | Meta Llama 4 | OpenAI GPT-4o | DeepSeek V3 |
|---|---|---|---|---|
| License | Apache 2.0 (true OSS) | Custom (not OSS per OSI) | Proprietary API only | MIT (but Chinese-origin) |
| On-Premises Deployment | ✅ Yes | ✅ Yes (with restrictions) | ❌ No | ✅ Yes |
| Geopolitical Risk | Low (U.S.-based) | Low | Low | High (Chinese gov’t exposure) |
| Model Size (total parameters) | 400B | 109B (Scout) / 400B (Maverick), Mixture of Experts | Undisclosed | 671B, Mixture of Experts |
| Vendor Lock-In Risk | None | Low | High | Moderate |
| Fine-Tuning Freedom | Unrestricted | Restricted above scale | Vendor-hosted API fine-tuning only | Permissive but risky |
| Closed-Source Frontier Parity | No | No | Yes | Approaching |
| Best For | Enterprise sovereignty, devs | Scale, ecosystem | Convenience, performance | Raw capability (risk trade-off) |

The table makes the positioning clear: Arcee is not trying to be the most powerful model in the world. It’s trying to be the most trustworthy open-source AI model for organizations that need control, transparency, and a clean legal footing.

Who Should Use Arcee’s Open-Source AI Model?

Not every organization has the same AI requirements. Here’s how to think about fit.

Enterprise Deployments

Arcee is particularly well-suited for regulated industries — banking, insurance, government contractors, healthcare, and defense — where data residency, auditability, and vendor independence are non-negotiable.

If your legal team has flagged concerns about sending sensitive data to a third-party cloud API, or if your procurement process requires open-source licensing documentation, Trinity Large Thinking is one of the few capable open-source AI models that checks all the boxes simultaneously.

Enterprises can download the model, fine-tune it on proprietary internal data, and deploy it in an air-gapped environment, something most closed-source API offerings do not allow.

Developers and Agent Builders

For developers building AI agents, coding assistants, or automation pipelines, Arcee’s growing footprint in the OpenClaw ecosystem is a telling signal. The developer community has begun routing workflows through Arcee models specifically because they offer a stable, policy-resilient foundation.

An open-source AI model running on your own infrastructure won’t suddenly become more expensive because a provider renegotiated its B2B contracts. It won’t restrict which downstream tools you can connect. And it won’t deprecate without warning.

For agent builders in particular, where the entire value of a product depends on reliable model access, this kind of sovereignty is not a luxury. It’s architecture.
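To make the portability argument concrete, here is a minimal Python sketch. It assumes that both a hosted API and a self-hosted server accept OpenAI-style chat-completion requests. Self-hosting stacks such as vLLM expose that format, but whether Arcee’s own Cloud API does is an assumption here. Both base URLs and the model identifier `trinity-large-thinking` are hypothetical placeholders, and the function only builds the request without sending it.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical placeholders: neither URL is a documented Arcee endpoint.
HOSTED_BASE_URL = "https://api.hosted-provider.example/v1"
SELF_HOSTED_BASE_URL = "http://inference.internal:8000/v1"  # e.g. a server inside your VPC


def build_chat_request(
    base_url: str, model: str, prompt: str, api_key: Optional[str] = None
) -> urllib.request.Request:
    """Build, but do not send, an OpenAI-style chat-completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key is not None:
        # Hosted APIs typically need a bearer token; an internal server may not.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Same agent code, two deployment targets; only the endpoint and credentials differ.
# "trinity-large-thinking" is a hypothetical model identifier.
hosted = build_chat_request(HOSTED_BASE_URL, "trinity-large-thinking", "Plan the next step.", api_key="YOUR_KEY")
self_hosted = build_chat_request(SELF_HOSTED_BASE_URL, "trinity-large-thinking", "Plan the next step.")
```

Moving from the hosted API to your own infrastructure then means changing `base_url` and credentials while the rest of the agent code stays the same; `urllib.request.urlopen(request)` would actually send either request.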

Challenges Arcee Still Faces

Rooting for Arcee doesn’t mean ignoring its real constraints.

- Raw capability gap: Trinity Large Thinking does not match frontier closed-source models like Claude 3.7 Sonnet or GPT-4o on complex multi-step reasoning. For the most demanding tasks, this matters.

- Scale: With 26 employees, Arcee faces real limits in model iteration speed, safety research depth, and ecosystem development compared to labs with thousands of staff.

- Distribution: Arcee lacks Meta’s developer network, Hugging Face integration depth, or the brand recognition that drives organic adoption. Growing mindshare in a crowded market requires sustained investment in community and documentation.

- Compute costs: Training and fine-tuning a 400B-parameter model is not cheap, even on a shoestring. As model scale escalates across the industry, staying competitive at the frontier will demand either more capital or smarter efficiency breakthroughs.

These are solvable problems, but they’re real ones. Arcee’s bet is that trust, licensing purity, and geopolitical positioning can be a durable moat even if its raw capability continues to trail the frontier.

The Bigger Picture: What Arcee Means for the Open-Source AI Ecosystem

Here’s the genuinely important thing about Arcee that gets lost in benchmark debates: it represents a structural argument about what AI development should look like.

The concentration of frontier AI capability in three or four large American labs — and the parallel concentration of open-weight capability in Chinese companies and Meta — leaves a dangerous gap. There is very little territory between “pay OpenAI indefinitely” and “use a Chinese model with political risk.” Arcee is trying to occupy that gap.

A healthy open-source AI model ecosystem requires diversity — of origins, of funding structures, of governance models, and of licensing terms. Every capable model released under Apache 2.0 by a small independent lab makes the ecosystem less brittle. It gives enterprises real choices. It gives developers real optionality. And it reduces the leverage that any single provider can exert.

The argument for supporting companies like Arcee is not purely sentimental, though the sentiment is understandable. It’s structural. An open-source AI model ecosystem dominated by a handful of gatekeepers — even well-intentioned ones — is not actually open.

Arcee is not going to dethrone GPT-4o next quarter. But it doesn’t need to. It needs to be good enough, open enough, and trustworthy enough to matter to the organizations for whom those properties are decisive. On that basis, Trinity Large Thinking clears the bar.

Key Takeaways

- Arcee AI is a 26-person U.S. startup that built a 400B-parameter open-source AI model on a reported budget of roughly $20 million, a strikingly small sum for a model of that scale.

- Its new Trinity Large Thinking model is, by Arcee’s claim, the most capable open-weight model released by any non-Chinese company to date.

- All Trinity models use Apache 2.0 licensing — a meaningfully stronger guarantee of freedom than Meta’s Llama 4 license or any proprietary API.

- Arcee occupies a distinct strategic position: not trying to beat closed-source frontier models, but offering sovereign, auditable, vendor-independent AI for enterprises and developers who need it.

- For AI agent builders and regulated enterprises, the combination of capability, licensing purity, and geopolitical safety makes Arcee a serious option — not a charity pick.

Frequently Asked Questions: Arcee AI and the Open-Source Ecosystem

1. What exactly defines an “open-source AI model” in the context of Arcee AI?

In the current AI landscape, the term “open source” is often used loosely. Many models are “open-weight,” meaning you can download the parameters, but they come with restrictive licenses. Arcee AI distinguishes itself by releasing models under the Apache 2.0 license.

A true open-source AI model under Apache 2.0 allows for:

- Commercial Freedom: You can use the model to generate revenue without paying royalties or hitting “user caps.”

- Derivation Rights: You can modify the model, fine-tune it on your own data, and even redistribute those modified versions.

- Legal Protection: Contributors grant users an express patent license (Section 3 of Apache 2.0), which protects users from patent claims by those contributors over their contributions. Unlike Meta’s Llama or some Chinese models that add “acceptable use” policies or commercial restrictions, Arcee provides the same kind of freedom you would expect from Apache-licensed software such as the Apache HTTP Server.

2. How does Arcee AI’s Trinity model compare to Chinese LLMs like DeepSeek or Qwen?

Chinese models like DeepSeek V3 and Qwen 2.5 are currently some of the most powerful open-weight models globally. However, for Western enterprises, the comparison isn’t just about benchmark scores—it’s about sovereignty and risk.

While a Chinese model might offer slightly higher coding scores, Arcee Trinity is built specifically to offer a “geopolitically safe” alternative: the company, its engineering team, and its training infrastructure are based in the West, specifically the U.S. For industries like defense, healthcare, and government, the potential for “backdoors” or future sanctions on Chinese software can make a Western-built open-source AI model the safer long-term choice, even when it trails slightly on some benchmarks.

3. Why is the Apache 2.0 license superior to the Meta Llama license?

The Meta Llama license is a “bespoke” license. While it allows for many open-weight benefits, it is not recognized as “Open Source” by the Open Source Initiative (OSI).

The primary friction points with Meta’s license include:

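- The 700-Million User Rule: If your products or services had more than 700 million monthly active users when a Llama version was released, you must request a separate license from Meta, which Meta can grant or refuse at its discretion.

- Usage and Attribution Rules: You must follow Meta’s acceptable use policy, and any model you train or fine-tune with Llama materials or outputs and then distribute must carry “Llama” at the beginning of its name.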

- Policy Changes: Meta retains significant control over how the “open” community uses its tech.

By choosing an Apache 2.0 open-source AI model like Trinity, a company ensures that its core intellectual property isn’t built on a foundation that Meta could theoretically “revoke” or complicate through legal updates later. It offers a “clean” legal audit trail for venture capital or M&A activities.

4. Can a 26-person startup really compete with OpenAI or Google?

Arcee AI isn’t trying to build a “world-simulator” or a general-purpose assistant that knows every joke and recipe on the internet. The company built its reputation on Small Language Models (SLMs) and domain-specific models, and even its 400B Trinity model is aimed at enterprise reasoning and agent workloads rather than consumer chat.

By reportedly spending much of its roughly $20 million budget on high-quality reasoning data rather than massive web scrapes, Arcee has achieved high efficiency. Its results suggest that in 2026, raw scale is no longer the only lever: data quality and a well-engineered training pipeline can substitute for some of it. You don’t need 5,000 engineers to build a competitive model if your data is curated for enterprise logic rather than social media chatter.

5. What are the hardware requirements for running Arcee’s 400B Trinity model?

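Running a 400-billion-parameter open-source AI model is a significant undertaking. While 7B models run on consumer GPUs and quantized 70B models can fit on a single high-memory workstation such as a Mac Studio, a 400B model typically requires:

- Multi-GPU Clusters: At 16-bit precision (BF16), the weights alone occupy roughly 800 GB, more than the 640 GB of GPU memory on a single node of 8x H100 or A100 (80 GB) GPUs. Serving at that precision typically means larger-memory GPUs such as 8x H200, or spreading the model across more than one node.

- Quantization: Many developers quantize the weights to 8-bit or 4-bit to shrink the model. At 8-bit the weights take about 400 GB, which fits on one 8x H100 node; at 4-bit they take about 200 GB, still more than four 48 GB cards such as the RTX A6000 can hold (192 GB). Quality loss is usually small at 8-bit, but it should be measured on your own reasoning tasks, especially at 4-bit.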

- Cloud Orchestration: For those without the hardware, Arcee offers a Cloud API, but the real power lies in the fact that you could move it to your own private cloud (VPC) at any time.
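The arithmetic behind these figures is simple: weight memory equals parameter count times bytes per parameter. The Python sketch below reproduces it for a 400B-parameter model. The flat 20% allowance for KV cache, activations, and runtime buffers is a hypothetical rule of thumb, not an Arcee figure; real requirements depend on context length, batch size, and the serving framework.

```python
import math


def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * bits_per_param // 8
    return total_bytes / 1e9


def gpus_needed(num_params: int, bits_per_param: int, gpu_memory_gb: int, overhead_pct: int = 20) -> int:
    """Minimum GPU count if weights plus a flat overhead must fit in aggregate GPU memory.

    overhead_pct is a hypothetical allowance for KV cache, activations, and buffers.
    """
    required_gb = weight_memory_gb(num_params, bits_per_param) * (100 + overhead_pct) / 100
    return math.ceil(required_gb / gpu_memory_gb)


PARAMS = 400_000_000_000  # 400B parameters

# Columns: bits per parameter, weight memory (GB), 80 GB GPUs needed, 48 GB GPUs needed.
for bits in (16, 8, 4):
    print(bits, weight_memory_gb(PARAMS, bits), gpus_needed(PARAMS, bits, 80), gpus_needed(PARAMS, bits, 48))
# 16 800.0 12 20
# 8 400.0 6 10
# 4 200.0 3 5
```

The 16-bit row shows why a single 8x 80 GB node falls short at BF16, while 8-bit fits within eight such cards. In practice, tensor-parallel deployments usually round up to a GPU count the framework supports, often a power of two.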

6. How does Arcee AI handle data privacy during fine-tuning?

One of the main reasons to use a Western open-source AI model is the ability to perform On-Premises Fine-Tuning. When you use a closed API (like GPT-4), your data—including proprietary trade secrets or patient records—is sent to a third-party server.

With Arcee, you download the weights, so your training data never leaves your firewall. Arcee also provides tools designed to help enterprises “merge” their internal knowledge into the model’s weights while limiting “catastrophic forgetting,” so the model becomes an expert on your company while that expertise stays entirely private.
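The simplest way to illustrate model merging is a linear merge, which interpolates each weight of a base checkpoint with a fine-tuned checkpoint of the same architecture. Arcee’s open-source mergekit library supports this and more sophisticated methods; the Python sketch below is a toy, not Arcee’s implementation, and the tensor names are hypothetical. Linear merges generally only make sense for checkpoints fine-tuned from the same base model.

```python
from typing import Dict, List

# A checkpoint is modeled as tensor name -> flattened weights (hypothetical, for illustration).
Checkpoint = Dict[str, List[float]]


def linear_merge(base: Checkpoint, tuned: Checkpoint, alpha: float) -> Checkpoint:
    """Interpolate two checkpoints: (1 - alpha) * base + alpha * tuned, tensor by tensor."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    if base.keys() != tuned.keys():
        raise ValueError("checkpoints must contain the same tensor names")
    merged: Checkpoint = {}
    for name, base_values in base.items():
        tuned_values = tuned[name]
        if len(base_values) != len(tuned_values):
            raise ValueError(f"shape mismatch for tensor {name!r}")
        merged[name] = [(1.0 - alpha) * b + alpha * t for b, t in zip(base_values, tuned_values)]
    return merged


base = {"layer0.weight": [1.0, 2.0]}
tuned = {"layer0.weight": [3.0, 4.0]}
print(linear_merge(base, tuned, 0.5))  # {'layer0.weight': [2.0, 3.0]}
```

Lower `alpha` values keep more of the base model’s general behavior and higher values keep more of the fine-tuned specialization. Real checkpoints hold billions of values, so production tools operate on tensors rather than Python lists.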

7. What is “OpenClaw” and why is Arcee’s model popular there?

OpenClaw is a leading open-source framework for building autonomous AI agents. Originally, many OpenClaw users relied on Anthropic’s Claude. However, after Anthropic implemented restrictive pricing for agentic usage, the community looked for a stable alternative.

Arcee’s models have become a popular choice for OpenClaw because:

- Stability: No one can change the API terms on a model you host yourself.

- Reasoning: Trinity’s “Large Thinking” capabilities allow it to handle the complex multi-step planning required for agents.

- Cost: For high-volume agentic tasks, self-hosting an open-source AI model can be significantly cheaper than paying per token on a commercial API.
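Whether self-hosting is actually cheaper depends on volume. A simple break-even sketch in Python divides a fixed monthly hosting cost by the API’s per-token price. Both dollar figures in the example are hypothetical, and the model deliberately ignores engineering time, utilization, and hardware depreciation.

```python
def break_even_tokens_per_month(monthly_hosting_usd: float, api_usd_per_million_tokens: float) -> float:
    """Monthly token volume at which a fixed self-hosting bill equals per-token API spend."""
    if api_usd_per_million_tokens <= 0:
        raise ValueError("API price must be positive")
    return monthly_hosting_usd / api_usd_per_million_tokens * 1_000_000


def self_hosting_is_cheaper(
    tokens_per_month: float, monthly_hosting_usd: float, api_usd_per_million_tokens: float
) -> bool:
    """True when expected volume exceeds the break-even point."""
    return tokens_per_month > break_even_tokens_per_month(monthly_hosting_usd, api_usd_per_million_tokens)


# Hypothetical figures: $10,000/month for self-hosted GPUs vs. $2 per million tokens via an API.
print(break_even_tokens_per_month(10_000, 2.0))  # 5000000000.0
```

In this made-up scenario, the two options cost the same at 5 billion tokens per month; an agent fleet running above that volume favors self-hosting, while light usage favors the API.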

8. Is Trinity Large Thinking suitable for coding and software development?

Yes. Reasoning models tend to be strong at coding because writing software rewards explicit step-by-step planning: tracing control flow, handling edge cases, and structuring changes across files. Arcee says it has optimized Trinity to understand complex codebase structures. Because it is an open-source AI model, developers can also fine-tune it on, or give it retrieval access to, their private repositories to create a local coding assistant that understands their specific style and library dependencies without the risk of leaking code to a competitor’s training set.

9. What is the future of “Sovereign AI”?

Sovereign AI is the idea that a nation or a company should own the intelligence it relies on. Just as countries want to own their energy grids and water supplies, in 2026, they want to own their “intelligence grid.” Arcee AI is at the forefront of this movement. By providing a high-performance open-source AI model that is free from the influence of both “Big Tech” monopolies and foreign geopolitical rivals, Arcee is enabling a future where AI is a public utility rather than a proprietary secret.