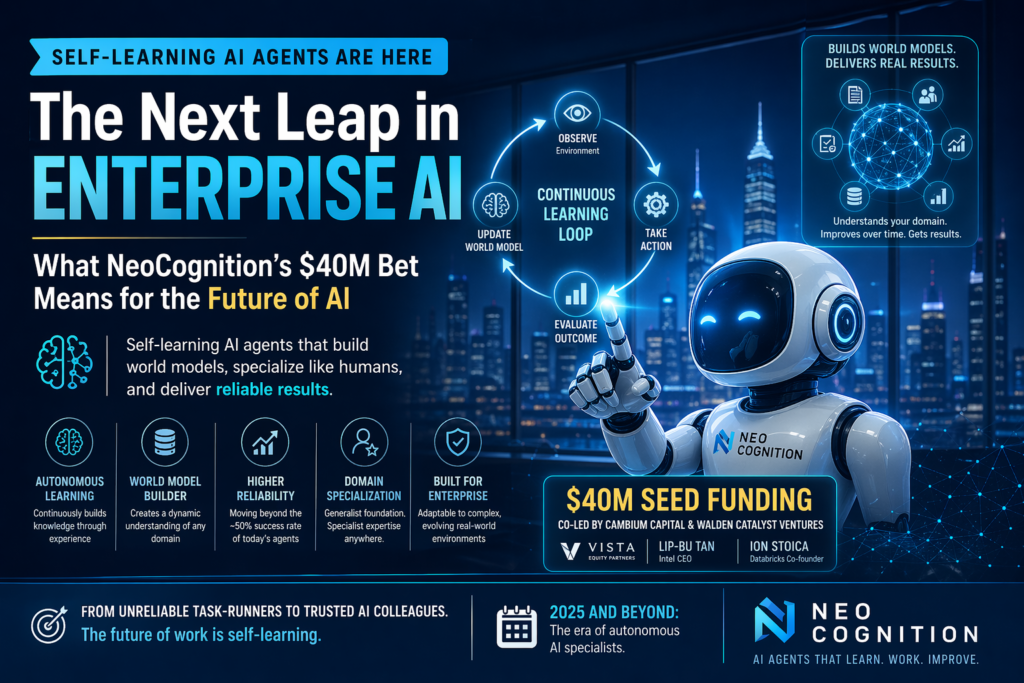

Self-learning AI agents are no longer a theoretical milestone — they’re a funded startup reality. NeoCognition, a research lab spun out of Ohio State University, just secured $40 million in seed funding to build AI agents that specialize and adapt the way humans do, marking one of the most significant early-stage bets on the next generation of enterprise AI.

If you’re wondering why this matters: by founder Yu Su’s estimate, today’s AI agents complete their assigned tasks only about half the time. NeoCognition aims to build the architecture to fix that.

What Are Self-Learning AI Agents?

Definition: Self-learning AI agents are AI systems that autonomously acquire domain-specific knowledge over time — adapting their behavior, building internal world models, and improving task performance without being manually retrained for each new environment.

This is fundamentally different from what most “agents” do today.

Current AI agents — including those powering popular tools like Claude Code, OpenAI’s operator products, and Perplexity’s computer use features — are generalist systems. They approach every task as if for the first time, drawing on broad pre-trained knowledge but carrying no accumulated understanding of a specific environment, workflow, or set of rules.

Self-learning AI agents, by contrast, build up a persistent model of their operating domain. They learn which actions produce which outcomes. They develop a map of cause-and-effect within a specific professional context. And crucially, they get reliably better at the tasks they’re assigned — much like a new employee who starts as a generalist but rapidly becomes a specialist on the job.

NeoCognition’s founding thesis is that this capacity for rapid, autonomous specialization is the critical missing link between today’s unreliable agents and tomorrow’s trusted AI workers.

Why Today’s AI Agents Fail — The 50% Problem

The Consistency Gap

Yu Su, NeoCognition’s founder and a former Ohio State AI agent lab director, puts the problem bluntly: current agents complete tasks as intended only about 50% of the time.

That number should give every enterprise CTO pause. A human employee who failed half their assigned tasks would be let go immediately. Yet organizations are being asked to trust agents with this same failure rate for complex, consequential work — from data retrieval and customer workflows to code execution and scheduling.

The root cause isn’t intelligence. It’s consistency.

Today’s AI agents are highly capable within the boundaries of their training, but they lack persistent knowledge about the specific systems, rules, and relationships that define any given work environment. Every task is approached cold. Every workflow is navigated without memory of what worked before. The agent that helped process invoices yesterday has no retained understanding of how your finance systems actually behave.

Why Generalist Agents Can’t Be Trusted Workers

Think of it this way: a brilliant generalist on their first day at a new job is going to make mistakes, not because they lack intelligence, but because they lack context. They don’t know the internal shorthand, the exception-handling protocols, the quirks of the legacy software, or the unwritten rules of which approvals are actually required.

Self-learning AI agents are designed to solve this. They enter a domain as generalists and earn specialization through experience — building an internal model of the “micro world” they operate in.

As Su told TechCrunch: “For humans, our continued learning process is essentially the process of building a world model for any profession, any environment.”

NeoCognition’s agent architecture mirrors this process by design.

How NeoCognition Is Building Self-Learning AI Agents That Specialize

The World Model Approach

The core of NeoCognition’s technology is the concept of an autonomous world model builder. Rather than fine-tuning a model on labeled examples for a specific domain — which requires expensive, human-curated data — NeoCognition’s agents are designed to build their own domain model through interaction.

This approach draws directly from cognitive science. Human experts don’t memorize a domain — they develop mental models: dynamic, updated representations of how their professional environment works. A seasoned accountant doesn’t look up the tax code every time; they’ve internalized a structured model of rules, precedents, and likely edge cases.

NeoCognition’s self-learning AI agents are engineered to do the same thing, for any domain an enterprise points them at.

The agent observes its environment, takes actions, evaluates outcomes, and updates its internal world model accordingly — continuously. It builds an increasingly accurate map of the relationships, constraints, and consequences within a given professional micro-world. As that model matures, task completion rates improve, and reliability increases toward the kind of consistency that makes autonomous operation genuinely trustworthy.
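The observe, act, evaluate, update cycle described above can be illustrated with a deliberately tiny sketch. This is not NeoCognition's architecture, which has not been published in detail; it is a toy tabular model under stated assumptions. The `WorldModel` class, the `toy_environment` rule, the state and action names, and the 10% exploration rate are all hypothetical and chosen only to show the shape of the loop.

```python
import random
from collections import defaultdict


class WorldModel:
    """Toy tabular estimate of how often each action succeeds in each state."""

    def __init__(self):
        # (state, action) -> [successes, attempts]
        self.stats = defaultdict(lambda: [0, 0])

    def success_rate(self, state, action):
        successes, attempts = self.stats[(state, action)]
        # Laplace smoothing: untried actions start at 0.5 rather than 0.
        return (successes + 1) / (attempts + 2)

    def update(self, state, action, succeeded):
        record = self.stats[(state, action)]
        record[0] += int(succeeded)
        record[1] += 1


def choose_action(model, state, actions, rng, explore=0.1):
    """Mostly pick the action the model rates highest; occasionally explore."""
    if rng.random() < explore:
        return rng.choice(actions)
    return max(actions, key=lambda a: model.success_rate(state, a))


def toy_environment(state, action):
    """Hypothetical hidden rule the agent has to discover through interaction."""
    correct = {"small_invoice": "auto_approve", "large_invoice": "escalate"}
    return correct[state] == action


def run(episodes=500, seed=0):
    rng = random.Random(seed)
    model = WorldModel()
    states = ["small_invoice", "large_invoice"]
    actions = ["auto_approve", "escalate", "reject"]
    outcomes = []
    for _ in range(episodes):
        state = rng.choice(states)                          # observe
        action = choose_action(model, state, actions, rng)  # act
        succeeded = toy_environment(state, action)          # evaluate
        model.update(state, action, succeeded)              # update the model
        outcomes.append(succeeded)
    return model, outcomes


if __name__ == "__main__":
    model, outcomes = run()
    print("estimated success rate for escalating large invoices:",
          round(model.success_rate("large_invoice", "escalate"), 2))
    print("success rate over the last 100 episodes:",
          sum(outcomes[-100:]) / 100)
```

In this toy, the agent is never told the approval rule. It recovers the rule from outcomes alone, and its late-episode success rate is limited mainly by how often it still explores. A real enterprise environment would need a far richer state representation than a lookup table.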

Generalist Foundation + Domain Specialization

A key architectural distinction worth understanding: NeoCognition is not building narrow, vertically-trained agents. The company is building generalist agents that are capable of self-learning specialization in any domain.

This is a significant technical and commercial differentiator. Traditional approaches to reliable AI agents require custom engineering for each vertical — bespoke pipelines, hand-labeled data, and ongoing maintenance specific to one industry or use case. That model doesn’t scale.

NeoCognition’s design flips this. A single agent system can be deployed across different enterprise environments — legal, finance, customer operations, SaaS tooling — and develop domain expertise in each through autonomous learning rather than manual customization.

The result: enterprises don’t need to choose between generalist flexibility and specialist reliability. They get both.

NeoCognition vs. Traditional AI Agent Platforms

Understanding where NeoCognition fits requires comparing it against the existing landscape of AI agent approaches.

| Feature | Traditional AI Agents | NeoCognition Self-Learning AI Agents |
|---|---|---|
| Learning Model | Static (pre-trained, no in-deployment learning) | Dynamic (continuous autonomous learning) |
| Domain Expertise | Generalist; must be fine-tuned per vertical | Generalist that self-specializes in any domain |
| Task Reliability | ~50% success on complex tasks | Targeting significantly higher through world modeling |
| Customization Requirement | High (requires bespoke engineering per use case) | Low (agents learn domain structure autonomously) |
| Memory | No persistent cross-session memory by default | Builds persistent domain-specific world model |
| Enterprise Fit | Works with well-defined, narrow workflows | Designed for complex, dynamic enterprise environments |
| Deployment Model | Point-in-time deployment, periodic retraining | Continuously improving in production |

This comparison reveals why NeoCognition’s approach is compelling to enterprise buyers. The self-learning AI agent model aligns with how real organizations actually operate — with messy, evolving workflows that no static system can keep up with.

Why $40M at Seed Stage? The Investor Signal

A $40 million seed round is unusual by any measure. For context, most seed rounds for AI startups land between $3 million and $15 million. NeoCognition’s raise is roughly 2.7 times the top of that range, and it attracted a distinctive investor mix.

The round was co-led by Cambium Capital and Walden Catalyst Ventures, with participation from Vista Equity Partners and a set of high-profile angels including Intel CEO Lip-Bu Tan and Databricks co-founder Ion Stoica.

Vista Equity Partners’ involvement is particularly notable. As one of the largest private equity firms in enterprise software, Vista’s portfolio spans hundreds of SaaS companies that are actively looking to modernize their products with AI capabilities. Su explicitly called out this relationship as a strategic asset: Vista gives NeoCognition direct access to a vast installed base of potential enterprise customers.

That’s not just capital — it’s a distribution channel built into the cap table.

The angels bring technical validation. Lip-Bu Tan’s presence connects NeoCognition to semiconductor infrastructure. Ion Stoica’s Databricks background — building the data layer that most enterprise AI runs on — signals that serious infrastructure thinkers believe NeoCognition’s architecture is technically credible.

The size of the round also reflects the current market dynamic: experienced AI researchers with novel approaches are in high demand, and investors are writing larger checks earlier to secure access. Su himself noted that he initially resisted pressure from VCs to commercialize, only choosing to spin out NeoCognition when he became convinced that advances in foundation models had created a genuine opening for agents with real personalization and specialization capability.

What Self-Learning AI Agents Mean for Enterprise Software

A New Category of AI Worker

The implications of reliable self-learning AI agents for enterprise software are significant. Right now, most enterprise AI deployments fall into one of two buckets:

- Copilot features — AI that assists humans with discrete tasks (drafting, summarizing, suggesting) but doesn’t operate autonomously.

- Narrow automation — AI bots engineered for specific, repetitive workflows with limited scope for variation.

Self-learning AI agents represent a third category: autonomous domain experts. These are agents that can be onboarded into a complex enterprise environment and develop genuine expertise, without requiring the kind of specialized engineering investment that narrow automation demands.

For SaaS companies — NeoCognition’s primary target market — this opens a compelling product line. Rather than building static AI features, SaaS vendors can embed self-learning agent infrastructure that becomes more valuable the longer it operates within a customer’s environment. The product improves automatically. Customer switching costs rise. Retention strengthens.

Key Enterprise Use Cases for Self-Learning AI Agents

Here are the domains where self-learning AI agents are likely to deliver the most immediate enterprise value:

- Financial operations: Agents that learn an organization’s specific accounting rules, exception patterns, and approval workflows — and apply them consistently without human handholding.

- Legal and compliance workflows: Agents that build domain models around a company’s regulatory environment, contract templates, and risk thresholds.

- Customer success and support: Agents that develop a deep understanding of a product’s edge cases, common failure modes, and customer communication norms.

- Enterprise IT and DevOps: Agents that learn the architecture, dependencies, and deployment protocols of a specific organization’s technical stack.

- Sales and CRM operations: Agents that internalize pipeline stage definitions, deal qualification criteria, and individual rep workflows to provide genuinely contextual guidance.

In each of these cases, the value of the self-learning AI agent increases over time — the opposite of today’s static, one-size-fits-all systems.

The Road Ahead: What Human-Like AI Learning Could Unlock

From Task-Runners to Trusted Colleagues

The long arc that NeoCognition is pursuing points toward something genuinely transformative: AI agents that function as trusted, autonomous domain experts rather than probabilistic task-runners that require constant supervision.

This isn’t just a product vision; it’s a technical paradigm shift. Having to “take a leap of faith every time you run an agent,” as Su puts it, is fundamentally incompatible with enterprise deployment at scale. Organizations cannot build reliable products, processes, or value chains on top of systems that succeed only about half the time.

Self-learning AI agents that build persistent world models close this gap by making the agent’s behavior increasingly predictable, contextual, and aligned with the specific environment it operates in.

The Open Questions

That said, NeoCognition faces real challenges common to any startup operating at this frontier:

- Evaluation: How do you measure whether an agent’s world model is accurate versus confidently wrong? Self-learning systems can encode errors as readily as insights.

- Generalization vs. overfitting: An agent that specializes deeply in one organization’s environment may become brittle if that environment changes — or may fail to transfer learning across similar but distinct contexts.

- Trust and oversight: Autonomy and reliability must be accompanied by transparency. Enterprise buyers will require explainability for decisions made by self-learning agents, especially in regulated industries.

- Compute costs: Continuous autonomous learning has a compute cost profile very different from standard inference. As NeoCognition scales, efficiency in the learning loop will be critical.
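To make the evaluation question concrete, here is a minimal sketch of one standard way to separate "accurate" from "confidently wrong": scoring an agent's predicted success probabilities against what actually happened using the Brier score. Nothing in the source says NeoCognition uses this metric. The two example agents and all of their numbers are invented for illustration.

```python
def brier_score(predicted_probs, outcomes):
    """Mean squared gap between predicted probability and outcome (0 is best)."""
    if not predicted_probs or len(predicted_probs) != len(outcomes):
        raise ValueError("need one outcome per prediction, and at least one")
    total = sum((p - float(o)) ** 2 for p, o in zip(predicted_probs, outcomes))
    return total / len(outcomes)


def accuracy(predicted_probs, outcomes, threshold=0.5):
    """Share of cases where the thresholded prediction matches the outcome."""
    hits = sum((p >= threshold) == bool(o)
               for p, o in zip(predicted_probs, outcomes))
    return hits / len(outcomes)


# Hypothetical data: 1 means the action worked, 0 means it failed.
outcomes = [1, 1, 0, 1, 0]
# Both agents get the last case wrong, but with very different confidence.
cautious = [0.8, 0.7, 0.3, 0.8, 0.6]
overconfident = [0.99, 0.99, 0.01, 0.99, 0.99]

if __name__ == "__main__":
    for name, probs in [("cautious", cautious), ("overconfident", overconfident)]:
        print(name,
              "accuracy:", accuracy(probs, outcomes),
              "brier:", round(brier_score(probs, outcomes), 3))
```

Both hypothetical agents are right equally often, yet the overconfident one scores worse because its single mistake was made with near certainty. Tracking a calibration-style metric alongside raw task success is one plausible way to catch a world model that has encoded an error.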

These are solvable problems — but they’re the problems that will define whether NeoCognition’s $40 million translates into lasting category leadership, or becomes a sophisticated proof-of-concept in a field moving fast in multiple directions.

What to Watch

With a team of about 15 people, most of them PhD holders, and a well-resourced cap table, NeoCognition has the ingredients to build something meaningful. The milestones that will signal genuine traction:

- Published or verifiable benchmarks showing self-learning AI agents outperforming generalist systems on complex, multi-step enterprise tasks.

- First enterprise deployments with publicly referenceable outcomes.

- Reliability metrics that break meaningfully above the 50% baseline Su cites for current agents.

- Expansion of the team as research translates into product.

The broader AI agent market is moving quickly, with well-funded competitors at major labs and a growing number of specialized startups. But NeoCognition’s differentiation — building agents that learn autonomously rather than requiring manual specialization — addresses the most fundamental weakness of today’s agent ecosystem.

If the world model approach works at scale, self-learning AI agents could become the dominant paradigm for enterprise AI deployment within the next few years. The $40 million seed is a large bet. The potential upside is larger still.

Quick Summary: NeoCognition at a Glance

- Founded: 2025 (spun out from Ohio State University)

- Founder: Yu Su, professor and AI agent lab director

- Funding: $40M seed, co-led by Cambium Capital and Walden Catalyst Ventures

- Notable Investors: Vista Equity Partners, Intel CEO Lip-Bu Tan, Databricks co-founder Ion Stoica

- Core Technology: Self-learning AI agents that autonomously build world models for any domain

- Target Market: Enterprise SaaS companies seeking to deploy reliable autonomous AI workers

- Team Size: ~15 employees, majority PhD holders

- Key Problem Addressed: Current AI agents succeed at complex tasks only ~50% of the time

The rise of self-learning AI agents signals a maturation in how the industry thinks about AI reliability. The question is no longer whether AI can complete a task — it’s whether it can be trusted to do so consistently, in your specific environment, without being told exactly how. NeoCognition is betting the answer is yes.

Frequently Asked Questions (FAQ)

1. What are self-learning AI agents?

Self-learning AI agents are advanced artificial intelligence systems that continuously improve their performance by learning from real-world interactions. Unlike traditional AI tools that rely on static training, self-learning AI agents adapt over time, building domain-specific knowledge and refining their decision-making processes. This allows them to handle complex tasks more effectively and become more reliable with continued use in enterprise environments.

2. How do self-learning AI agents differ from traditional AI agents?

The main difference lies in adaptability and learning capability. Traditional AI agents operate based on pre-trained models and often struggle when faced with new or evolving scenarios. In contrast, self-learning AI agents dynamically learn from their environment, develop internal world models, and improve without constant retraining. This makes them more suitable for real-world business applications where workflows are constantly changing.

3. Why are current AI agents considered unreliable?

Many current AI agents fail to complete tasks consistently because they lack persistent memory and contextual understanding. They treat each task independently, without learning from past experiences. Self-learning AI agents are designed to address this issue by storing knowledge, recognizing patterns, and applying what they learned earlier to new tasks, with the goal of improving reliability and consistency over time.

4. What industries can benefit from self-learning AI agents?

Several industries can benefit from adopting self-learning AI agents, including finance, healthcare, legal services, customer support, and IT operations. These agents can learn industry-specific rules, automate repetitive workflows, and provide insights based on accumulated knowledge. Their ability to specialize makes them highly valuable in environments that require accuracy and adaptability.

5. How do self-learning AI agents improve enterprise efficiency?

Self-learning AI agents enhance enterprise efficiency by reducing manual intervention, minimizing errors, and optimizing workflows. As they learn from ongoing operations, they become faster and more accurate, enabling businesses to scale operations without proportionally increasing human resources. This leads to cost savings and improved productivity across departments.

6. Are self-learning AI agents safe and trustworthy?

While self-learning AI agents offer significant advantages, trust and safety depend on proper implementation and monitoring. Organizations must ensure transparency, establish evaluation mechanisms, and maintain human oversight. When deployed responsibly, these agents can become reliable digital workers that consistently deliver accurate results.

7. What challenges do self-learning AI agents face?

Despite their potential, self-learning AI agents face challenges such as model evaluation, risk of incorrect learning, and high computational costs. Ensuring that these agents learn the right patterns without bias is critical. Additionally, maintaining performance while scaling across multiple domains remains a key technical hurdle.

8. What is the future of self-learning AI agents?

The future of self-learning AI agents looks promising as they evolve into autonomous domain experts. With advancements in AI architecture and computing power, these agents are expected to become more reliable, efficient, and widely adopted across industries. They could redefine how businesses operate by transforming AI from a support tool into a fully autonomous workforce component.