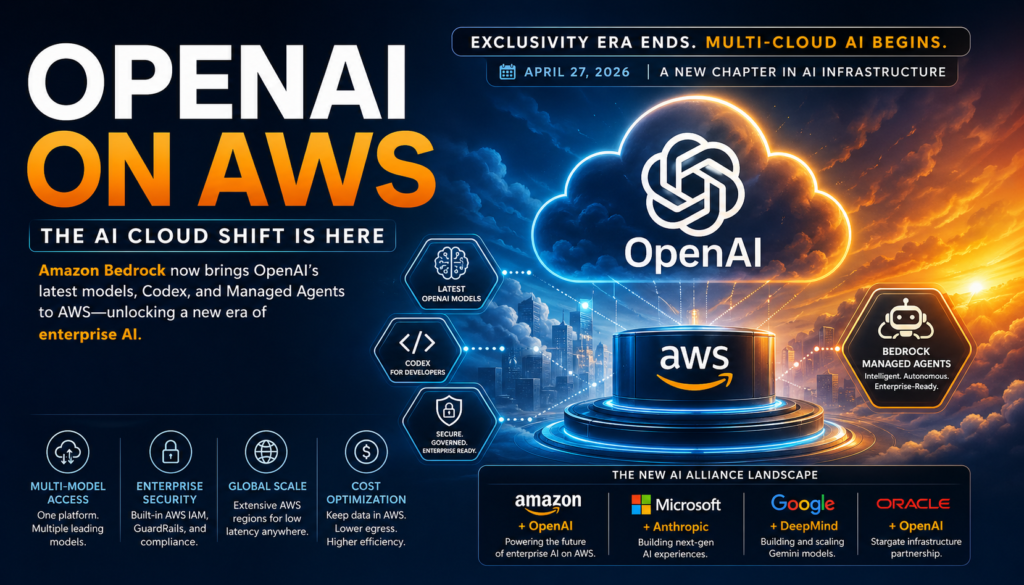

Amazon Web Services (AWS) now offers OpenAI models, Codex, and a brand-new agent service directly through Amazon Bedrock, and it happened almost overnight. The shift became possible after OpenAI and Microsoft revised their agreement on April 27, 2026, ending Microsoft’s exclusive rights to OpenAI’s technology and unlocking the multi-cloud AI era that enterprises have been waiting for.

If you’re a developer, a cloud architect, or a business leader deciding where to run your AI workloads, this is the most consequential infrastructure story of 2026.

What Is OpenAI on AWS?

Definition: OpenAI on AWS refers to the availability of OpenAI’s AI models, tools, and agent services natively within Amazon Web Services — specifically through Amazon Bedrock, AWS’s managed AI platform for building and deploying AI-powered applications.

Until April 2026, this was impossible. A clause in the OpenAI–Microsoft partnership granted Microsoft exclusivity over OpenAI’s commercial products, meaning no other major cloud provider could offer them. That clause is now gone.

As of April 29, 2026, Amazon Bedrock includes:

- OpenAI’s latest reasoning and language models

- Codex, OpenAI’s code-generation service

- Bedrock Managed Agents, a new agent orchestration service built specifically around OpenAI’s reasoning models

OpenAI on AWS is not a third-party workaround or an API relay. It is a first-party, deeply integrated offering that Amazon describes as “the beginning of a deeper collaboration between AWS and OpenAI.”

How the Microsoft–OpenAI Exclusivity Era Ended

To understand why OpenAI on AWS is such a big deal, you need the backstory.

OpenAI and Microsoft have had a deeply intertwined relationship since 2019, with Microsoft investing tens of billions of dollars and receiving exclusivity over OpenAI’s commercial products in return. This meant Azure was the only major cloud where you could officially build with GPT-4, DALL·E, Codex, and other OpenAI tools at enterprise scale.

That changed for a simple reason: money. OpenAI signed a deal with Amazon worth up to $50 billion — one of the largest private funding arrangements in history. The problem? Offering OpenAI products on AWS would violate the Microsoft exclusivity clause.

The resolution came on April 27, 2026, when a revised OpenAI–Microsoft agreement was announced. Microsoft relinquished its exclusive rights to OpenAI’s technology. Within 24 hours, Amazon CEO Andy Jassy tweeted that it was a “very interesting announcement” — and within 48 hours, Bedrock was live with OpenAI’s latest offerings.

The Microsoft–OpenAI relationship had reportedly been deteriorating for some time, with each company quietly strengthening ties with the other’s biggest rival. OpenAI moved toward AWS and Oracle. Microsoft deepened its relationship with Anthropic, including working on a new agent product powered by Claude.

What Amazon Is Actually Offering on Bedrock

OpenAI Models on Amazon Bedrock

Amazon Bedrock is the central platform at play here. Bedrock is Amazon’s fully managed service that lets developers access a range of foundation models from multiple AI providers — including Anthropic, Meta, Cohere, and now OpenAI — through a single, unified API.

With OpenAI on AWS now live, developers can access OpenAI’s latest models through the same Bedrock interface they already use for Claude or Llama. This means:

- No need to manage separate API keys or endpoints for OpenAI

- OpenAI model inference runs on AWS infrastructure, which can lower latency for workloads already hosted on AWS

- Billing consolidated through existing AWS accounts

This multi-model flexibility has always been Bedrock’s core value proposition. Adding OpenAI’s models arguably makes Bedrock the most comprehensive AI model marketplace of any cloud provider.
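To make the "same interface" point concrete, here is a minimal sketch using the Bedrock Runtime Converse API, which accepts one request shape for every provider. It assumes OpenAI’s models on Bedrock are reachable through Converse like the other providers’ models; the model IDs are hypothetical placeholders, since the announcement does not list them. `client` would normally be a boto3 `bedrock-runtime` client, but any object with a compatible `converse` method works, which also keeps the helper easy to test.

```python
from typing import Any

# Hypothetical placeholders: the announcement does not publish Bedrock model IDs
# for the new OpenAI models, so substitute the IDs shown in your Bedrock console.
OPENAI_MODEL_ID = "openai.example-reasoning-model-v1:0"
CLAUDE_MODEL_ID = "anthropic.example-claude-model-v1:0"


def build_converse_request(model_id: str, prompt: str, max_tokens: int = 512) -> dict[str, Any]:
    """Build keyword arguments for the Bedrock Runtime Converse API.

    The request shape is the same for every provider; only modelId changes.
    """
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(client: Any, model_id: str, prompt: str) -> str:
    """Send one prompt through client.converse and return the reply text."""
    response = client.converse(**build_converse_request(model_id, prompt))
    # The assistant reply arrives as a list of content blocks; join the text ones.
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


# Usage with a real client (requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(ask(client, OPENAI_MODEL_ID, "Explain IAM roles in one sentence."))
```

Switching from Claude to an OpenAI model is then a change to `model_id` only; the message format and the response parsing stay the same.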

Codex for Code Generation

Codex, OpenAI’s code-writing AI, is also available through Bedrock. For engineering teams already running CI/CD pipelines, code repositories, and development tooling on AWS, access to Codex natively within their cloud environment is a significant workflow improvement.

Previously, using Codex required routing requests through OpenAI’s API or Azure OpenAI Service. With OpenAI on AWS, those requests can stay entirely within an organization’s AWS environment — which matters for compliance, data residency, and latency.
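One way to keep Codex traffic inside a chosen geography is to pin the Bedrock Runtime Region in code and refuse anything else. This sketch assumes Codex is served through the standard Bedrock Runtime endpoint like other Bedrock models (the source does not say), and the Region allow-list is a hypothetical stand-in for your own residency policy. It returns both the public Regional endpoint and the AWS PrivateLink service name used to create an interface VPC endpoint, so requests can stay on the AWS network rather than the public internet.

```python
# Hypothetical allow-list: which Regions satisfy your residency rules is an
# organizational decision, not something the announcement specifies.
ALLOWED_REGIONS = frozenset({"eu-central-1", "eu-west-1"})


def bedrock_runtime_targets(region: str) -> dict[str, str]:
    """Return Bedrock Runtime connection targets for an approved Region.

    Raises ValueError for any Region outside the allow-list, so a
    misconfigured deployment fails before a request is ever sent.
    """
    if region not in ALLOWED_REGIONS:
        raise ValueError(f"Region {region!r} is not approved for inference traffic")
    return {
        # Public Regional endpoint (usable as boto3's endpoint_url).
        "endpoint_url": f"https://bedrock-runtime.{region}.amazonaws.com",
        # Service name for an interface VPC endpoint (AWS PrivateLink).
        "vpc_endpoint_service": f"com.amazonaws.{region}.bedrock-runtime",
    }
```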

Bedrock Managed Agents

This is arguably the most significant new product in the announcement. Bedrock Managed Agents is a new Amazon service designed specifically to use OpenAI’s reasoning models as its cognitive backbone.

What are Bedrock Managed Agents? They are pre-configured, managed AI agents that can reason through multi-step tasks, call external tools, interact with databases, and operate autonomously — all within the security perimeter of an AWS environment.

Key features include:

- Agent steering: Developers can guide agent behavior through constraints and priorities without fine-tuning

- Built-in security controls: Identity and access management (IAM) integration means agents operate within existing AWS permission structures

- OpenAI reasoning models as the engine: The agents leverage OpenAI’s o-series reasoning models, known for complex multi-step problem solving
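The source does not describe how Bedrock Managed Agents map onto IAM. As a sketch of the underlying mechanism, assuming agents invoke models under an IAM role with standard Bedrock permissions, a least-privilege policy can restrict that role to specific foundation models. The helper name and model ID are hypothetical; the action names and the foundation-model ARN format are the ones Bedrock uses for model invocation today.

```python
import json


def invoke_only_policy(region: str, model_ids: list[str]) -> str:
    """Build an IAM policy that allows inference on the listed foundation models only."""
    # Foundation-model ARNs have an empty account field:
    # arn:aws:bedrock:<region>::foundation-model/<model-id>
    resources = [f"arn:aws:bedrock:{region}::foundation-model/{m}" for m in model_ids]
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedModelsOnly",
                "Effect": "Allow",
                # Converse is authorized by bedrock:InvokeModel and ConverseStream
                # by bedrock:InvokeModelWithResponseStream.
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": resources,
            }
        ],
    }
    return json.dumps(policy, indent=2)


# Hypothetical model ID; the printed JSON would be attached to the agent's IAM role.
print(invoke_only_policy("us-east-1", ["openai.example-reasoning-model-v1:0"]))
```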

For enterprise teams that have been cautious about deploying autonomous AI agents because of security and governance concerns, Bedrock Managed Agents is aimed squarely at those concerns: it wraps OpenAI’s most powerful reasoning capabilities in AWS’s compliance-friendly infrastructure.
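The announcement does not document the Bedrock Managed Agents API, so the following is not it. To illustrate the work a managed agent service takes off your hands, here is a hand-rolled reason-and-act loop built on the existing Converse API’s tool-use support, assuming OpenAI models on Bedrock accept `toolConfig` like other Bedrock models. The steering constraints travel in the `system` prompt rather than through fine-tuning, and the agent can only run tools present in its registry. `run_agent`, `lookup_order`, and the model ID are hypothetical.

```python
from typing import Any, Callable

ToolFn = Callable[[dict], dict]

# Hypothetical read-only tool, declared in the Converse toolSpec format.
LOOKUP_ORDER_SPEC = {
    "toolSpec": {
        "name": "lookup_order",
        "description": "Look up the shipping status of an order by its ID.",
        "inputSchema": {
            "json": {
                "type": "object",
                "properties": {"order_id": {"type": "string"}},
                "required": ["order_id"],
            }
        },
    }
}


def run_agent(client: Any, model_id: str, task: str, tools: dict[str, ToolFn],
              tool_specs: list[dict], rules: str, max_turns: int = 5) -> str:
    """Alternate model turns and tool calls until the model stops requesting tools."""
    messages: list[dict] = [{"role": "user", "content": [{"text": task}]}]
    for _ in range(max_turns):
        response = client.converse(
            modelId=model_id,
            system=[{"text": rules}],  # steering via instructions, no fine-tuning
            messages=messages,
            toolConfig={"tools": tool_specs},
        )
        message = response["output"]["message"]
        messages.append(message)
        if response["stopReason"] != "tool_use":
            return "".join(block.get("text", "") for block in message["content"])
        results = []
        for block in message["content"]:
            if "toolUse" not in block:
                continue
            call = block["toolUse"]
            fn = tools.get(call["name"])
            if fn is None:
                # Refuse tools outside the registry instead of failing open.
                result = {"toolUseId": call["toolUseId"], "status": "error",
                          "content": [{"text": f"unknown tool {call['name']}"}]}
            else:
                result = {"toolUseId": call["toolUseId"],
                          "content": [{"json": fn(call["input"])}]}
            results.append({"toolResult": result})
        messages.append({"role": "user", "content": results})
    raise RuntimeError("agent did not finish within max_turns")
```

A managed service would presumably add what this loop lacks, such as durable state, retries, logging, and audit trails.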

AWS vs Azure: Where to Run Your OpenAI Workloads Now

The central question for most enterprise architects: now that OpenAI on AWS is a reality, how does it compare to running OpenAI on Azure?

Here is a clear comparison:

| Feature | OpenAI on AWS (Bedrock) | OpenAI on Azure (Azure OpenAI Service) |
|---|---|---|
| Model availability | Latest OpenAI models + Codex | Latest OpenAI models + DALL·E, Whisper |
| Agent services | Bedrock Managed Agents (new) | Azure AI Agent Service |
| Multi-model access | Yes: Anthropic, Meta, Cohere, OpenAI | OpenAI-focused; other models via Azure AI Foundry |
| Security & compliance | AWS IAM, Amazon Bedrock Guardrails | Microsoft Entra ID (formerly Azure AD), Responsible AI controls |
| Data residency controls | AWS Regions (extensive global coverage) | Azure Regions (comparable global coverage) |
| Existing AWS integration | Native; no cross-cloud data transfer | Requires cross-cloud setup for AWS workloads |
| Existing Azure integration | Requires cross-cloud setup | Native; ideal for Microsoft-stack enterprises |
| Pricing model | Pay-per-token via AWS billing | Pay-per-token via Azure billing |
| Maturity | Launched April 2026 | Available since 2023 |

The bottom line: For organizations already running significant infrastructure on AWS, OpenAI on AWS now offers a compelling reason to consolidate AI workloads rather than maintain a separate Azure relationship just for OpenAI access. For deeply Microsoft-integrated enterprises (Microsoft 365, Microsoft Entra ID, Teams), Azure OpenAI Service still has native workflow advantages.

Neither is universally superior — your existing cloud footprint is the deciding factor.
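Because the deciding factor is your cloud footprint, which can change, it helps to keep application code independent of either provider. A minimal sketch, assuming a boto3 `bedrock-runtime` client on one side and the `openai` package’s `AzureOpenAI` client on the other: both adapters satisfy one small `ChatBackend` protocol. The class names and the `summarize` function are hypothetical.

```python
from typing import Any, Protocol


class ChatBackend(Protocol):
    def complete(self, prompt: str) -> str: ...


class BedrockBackend:
    """Adapter for a boto3 'bedrock-runtime' client, via the Converse API."""

    def __init__(self, client: Any, model_id: str) -> None:
        self.client = client
        self.model_id = model_id

    def complete(self, prompt: str) -> str:
        response = self.client.converse(
            modelId=self.model_id,
            messages=[{"role": "user", "content": [{"text": prompt}]}],
        )
        blocks = response["output"]["message"]["content"]
        return "".join(block.get("text", "") for block in blocks)


class AzureOpenAIBackend:
    """Adapter for an openai.AzureOpenAI client; `deployment` is your deployment name."""

    def __init__(self, client: Any, deployment: str) -> None:
        self.client = client
        self.deployment = deployment

    def complete(self, prompt: str) -> str:
        response = self.client.chat.completions.create(
            model=self.deployment,
            messages=[{"role": "user", "content": prompt}],
        )
        return response.choices[0].message.content or ""


def summarize(backend: ChatBackend, text: str) -> str:
    # Application code depends only on ChatBackend, so moving clouds means
    # constructing a different adapter, not rewriting callers.
    return backend.complete(f"Summarize in one sentence:\n{text}")
```

Which adapter a deployment receives can then live in configuration rather than in application code.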

Why This Shift Matters for Enterprise AI Strategy

Before OpenAI on AWS became available, many organizations faced an awkward choice: run OpenAI’s models, which many teams considered the strongest, on Azure; or run alternative models such as Anthropic’s Claude and Meta’s Llama on their primary cloud platform, AWS.

This created real costs:

- Data egress fees when sending data cross-cloud for inference

- Compliance complications when regulated data had to leave an AWS environment to reach Azure

- Vendor lock-in risk tied to a single AI provider on a single cloud

- Operational overhead of managing two cloud relationships

For AWS-first organizations, OpenAI on AWS addresses all four problems at once.

Here are the key enterprise implications:

- AI cost optimization: Total inference spend falls when data no longer leaves your primary cloud, because cross-cloud egress fees disappear

- Simplified governance: One cloud environment, one audit trail, one compliance framework

- Model optionality: Bedrock lets teams switch between OpenAI, Anthropic, and other models based on task fit — without replatforming

- Agent deployment confidence: Bedrock Managed Agents give risk-averse enterprises a governed path to agentic AI
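Model optionality in practice can be as simple as a routing table keyed by task, with fallbacks. This sketch is hypothetical throughout: the task names, model IDs, and preference order are placeholders you would replace with results from your own evaluations, and `call` stands in for whatever function actually sends the request (for example, a wrapper around the Converse API).

```python
from typing import Callable

# Hypothetical routing table: model IDs are placeholders, and which model fits
# which task is something to measure rather than assume.
ROUTES: dict[str, list[str]] = {
    "code_review": ["openai.example-codex-v1:0", "anthropic.example-claude-v1:0"],
    "summarize": ["anthropic.example-claude-v1:0", "meta.example-llama-v1:0"],
}


def route(task: str, prompt: str, call: Callable[[str, str], str]) -> tuple[str, str]:
    """Try each model configured for `task` in order; return (model_id, reply).

    Swapping providers becomes an edit to ROUTES rather than a replatforming.
    """
    errors = []
    for model_id in ROUTES[task]:
        try:
            return model_id, call(model_id, prompt)
        except Exception as exc:  # e.g. throttling, or model not enabled in this Region
            errors.append(f"{model_id}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```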

The Bigger Picture: AI’s Cloud Alliance Wars

What we are witnessing with OpenAI on AWS is not just a product launch. It is the opening chapter of a restructured AI ecosystem where model providers and cloud providers form strategic alliances that compete directly against each other.

The current alliance map looks like this:

- Amazon + OpenAI: Bedrock now hosts OpenAI models and agents; Amazon is a major OpenAI investor

- Microsoft + Anthropic: Microsoft is deepening its Claude integration and building new agent products on top of it

- Google + Google DeepMind: Vertically integrated — Google builds and hosts its own Gemini models

- Oracle + OpenAI: Oracle is an OpenAI partner in the Stargate infrastructure consortium

The end of the OpenAI–Microsoft exclusivity is the tipping point. For the past three years, competitive moats in enterprise AI were defined by which cloud you were on. Going forward, the moat will be defined by which models you can access, how quickly, and at what governance level.

OpenAI on AWS signals that the era of AI model exclusivity is over. The era of AI infrastructure competition has just begun.

What Should Developers and Businesses Do Right Now?

If you’re making AI infrastructure decisions today, here is a practical framework:

If you’re an AWS-first organization:

You now have no compelling reason to maintain a separate Azure relationship solely for OpenAI access. Evaluate migrating OpenAI workloads to Bedrock — particularly if you’re already using Bedrock for Anthropic’s Claude or Meta’s Llama models.

Action: Request access to OpenAI models on Amazon Bedrock and run a cost comparison against your current Azure OpenAI spend, factoring in data transfer costs.
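A back-of-the-envelope version of that comparison fits in a few lines. Every number here is a placeholder, not a published OpenAI, AWS, or Azure price, and the example deliberately gives both clouds identical token prices so that the only difference is the cross-cloud egress term. The `Scenario` and `monthly_cost` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    input_tokens: int               # input tokens per month
    output_tokens: int              # output tokens per month
    usd_per_1m_input: float         # placeholder price per 1M input tokens
    usd_per_1m_output: float        # placeholder price per 1M output tokens
    egress_gb: float = 0.0          # data sent cross-cloud per month
    usd_per_egress_gb: float = 0.0  # placeholder egress rate


def monthly_cost(s: Scenario) -> float:
    """Token spend plus cross-cloud data transfer, in USD, rounded to cents."""
    tokens = (s.input_tokens * s.usd_per_1m_input
              + s.output_tokens * s.usd_per_1m_output) / 1_000_000
    return round(tokens + s.egress_gb * s.usd_per_egress_gb, 2)


# Same workload and (placeholder) token prices; only the egress term differs.
azure_from_aws = Scenario(2_000_000_000, 500_000_000, 1.25, 10.0,
                          egress_gb=800, usd_per_egress_gb=0.09)
bedrock_same_region = Scenario(2_000_000_000, 500_000_000, 1.25, 10.0)
print(monthly_cost(azure_from_aws) - monthly_cost(bedrock_same_region))
```

In a real comparison the token prices will likely differ between clouds too, so both terms deserve actual quotes.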

If you’re an Azure-first organization:

The launch of OpenAI on AWS doesn’t immediately change your calculus unless you have significant non-Azure infrastructure. Azure OpenAI Service is mature, deeply integrated with Microsoft 365 and Microsoft Entra ID (formerly Azure Active Directory), and Microsoft isn’t going anywhere as an OpenAI partner.

Action: Monitor Bedrock Managed Agents closely — if AWS ships enterprise agent governance features faster than Azure, it could shift the conversation.

If you’re evaluating agentic AI for the first time:

Bedrock Managed Agents is worth serious evaluation. The combination of OpenAI’s reasoning models and AWS’s security posture makes it one of the most enterprise-friendly autonomous agent offerings currently available.

Action: Start with a limited pilot using Bedrock Managed Agents on a defined internal workflow — not customer-facing — to evaluate the governance controls before wider deployment.

Questions to ask your cloud provider right now:

- Which OpenAI models are available on Bedrock today, and what is the roadmap?

- How does Bedrock Managed Agents pricing compare to building custom agents on Azure AI Agent Service?

- What data residency guarantees exist for inference requests made through Bedrock to OpenAI models?

- How do Amazon Bedrock Guardrails apply to OpenAI model outputs?
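The last question can also be tested directly. This sketch attaches an existing guardrail to a Converse request and reports whether it intervened, assuming OpenAI models on Bedrock accept the Converse `guardrailConfig` parameter the way other Bedrock models do; confirming that assumption is the point of asking. The function name, guardrail ID, and version are hypothetical.

```python
from typing import Any


def guarded_converse(client: Any, model_id: str, prompt: str,
                     guardrail_id: str, guardrail_version: str) -> dict[str, Any]:
    """Call Converse with a guardrail attached and summarize what happened."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        guardrailConfig={
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
            "trace": "enabled",  # ask Bedrock to return the guardrail assessment
        },
    )
    blocks = response["output"]["message"]["content"]
    return {
        # Converse signals a guardrail block through this stop reason.
        "blocked": response["stopReason"] == "guardrail_intervened",
        "text": "".join(block.get("text", "") for block in blocks),
        "assessment": response.get("trace", {}).get("guardrail"),
    }
```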

Key Takeaways

To summarize what the arrival of OpenAI on AWS means:

- The Microsoft exclusivity era is over. OpenAI is now officially available as a first-party service on Amazon’s cloud, not just Microsoft’s.

- Amazon Bedrock is now arguably the most model-diverse AI platform from any major cloud provider, offering Anthropic, Meta, Cohere, and OpenAI under one roof.

- Bedrock Managed Agents is a net-new product — not a repackaging of existing services — designed specifically to run OpenAI’s reasoning models in enterprise-governed environments.

- Multi-cloud AI is now the default reality. Organizations should build AI architectures with model portability in mind, not cloud-native lock-in.

- The alliance wars are just beginning. Microsoft–Anthropic vs Amazon–OpenAI vs Google’s vertical integration will define cloud competition for the next decade.

OpenAI on AWS isn’t just a product update. It is a structural realignment of the enterprise AI market, and it happened in 48 hours. The organizations that adapt their cloud strategy now will have a measurable head start.

Frequently Asked Questions

Q: Is OpenAI on AWS available to all AWS customers? A: Yes, subject to availability in your AWS Region. OpenAI’s models, Codex, and Bedrock Managed Agents are offered through Amazon Bedrock, which any AWS account holder can use; standard Bedrock pricing, model-access settings, and IAM controls apply.
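To see which OpenAI models the Bedrock catalog exposes in a given Region, you can filter the control-plane `ListFoundationModels` response by provider. This is a sketch: it assumes OpenAI’s models appear with `providerName` "OpenAI", and a model appearing in the catalog does not by itself mean your account has been granted access to it. `bedrock_client` would be a boto3 `bedrock` client (not `bedrock-runtime`); the function name is hypothetical.

```python
from typing import Any


def openai_model_ids(bedrock_client: Any) -> list[str]:
    """Return catalog model IDs whose provider is OpenAI, sorted alphabetically.

    Expects a boto3 'bedrock' (control-plane) client; the listing reflects the
    client's Region and does not prove your account has access to each model.
    """
    summaries = bedrock_client.list_foundation_models()["modelSummaries"]
    return sorted(
        s["modelId"] for s in summaries
        if s.get("providerName", "").lower() == "openai"
    )
```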

Q: Does using OpenAI on AWS mean my data goes to OpenAI? A: This depends on the data processing agreements between AWS and OpenAI. Enterprises should review the Bedrock data privacy documentation and any applicable Business Associate Agreements before processing sensitive data.

Q: Can I still use Azure OpenAI Service if I switch some workloads to AWS? A: Yes. Nothing about this announcement prevents organizations from maintaining a multi-cloud strategy. The point is that you no longer have to use Azure to access OpenAI’s models at enterprise scale.

Q: What makes Bedrock Managed Agents different from building my own agent with the OpenAI API? A: Bedrock Managed Agents provides managed infrastructure, built-in AWS security integration (IAM, logging, audit trails), and agent steering features that would otherwise require custom engineering. It is a higher-level abstraction designed for enterprise governance requirements.