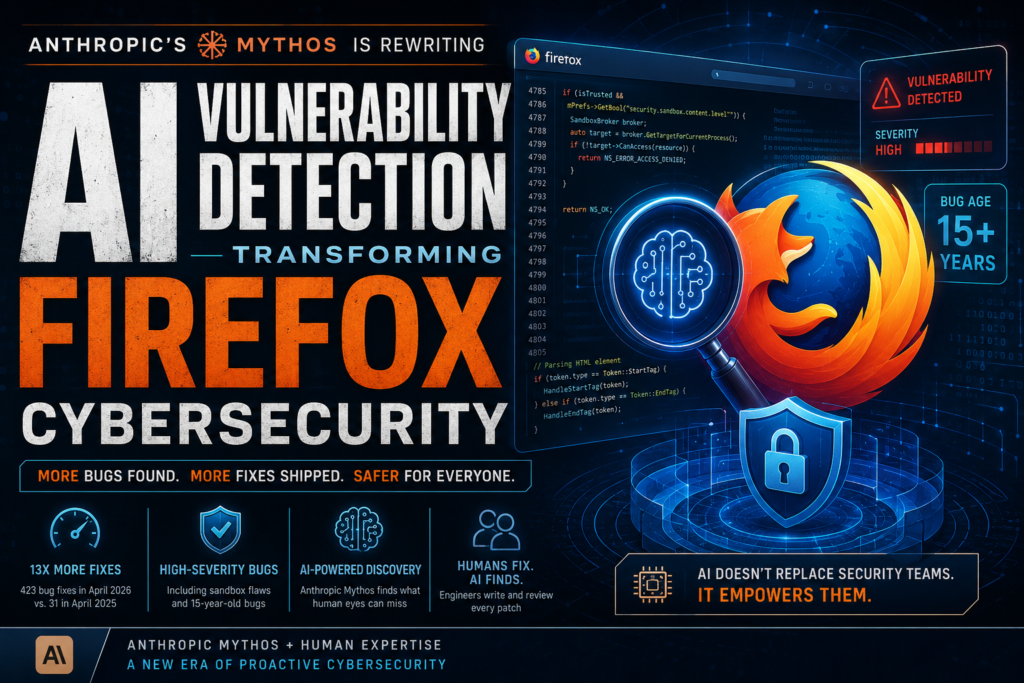

Anthropic’s Mythos model has done something no AI security tool has managed before: it found hundreds of high-severity bugs in Firefox, including some that had been lurking in the codebase for over a decade. AI vulnerability detection has just crossed a threshold that security professionals have been waiting years for.

What Is Anthropic Mythos and Why Does It Matter for Cybersecurity?

Definition: Anthropic Mythos is a large-scale AI model developed by Anthropic, unveiled in April 2026, with a demonstrated specialization in identifying software security vulnerabilities at a speed and depth that surpasses prior AI tools — and, in some domains, human researchers.

When Anthropic previewed Mythos, it came with a striking warning: the model was so effective at sniffing out vulnerabilities that thousands of high-severity bugs had to be fixed before the model could be made public. That isn’t a marketing line — it’s a preview of what AI vulnerability detection looks like when it genuinely works.

The real-world validation came from Mozilla, which published a detailed post in May 2026 describing how Mythos had transformed Firefox’s security pipeline. The results were not incremental. They were a step change — and they carry implications for every software team that cares about security.

How AI Vulnerability Detection Is Changing Browser Security

Until recently, AI-powered security tools were a mixed blessing. They could scan code at scale, but they buried security teams in low-quality reports and false positives. Researchers spent more time triaging AI-generated noise than acting on genuine threats. That dynamic has now fundamentally shifted.

Mozilla’s security researchers put it plainly: the models got dramatically more capable, and the techniques for harnessing them improved just as fast. Together, those two factors moved AI vulnerability detection from “interesting experiment” to “core part of the security workflow.”

Firefox’s Numbers Tell the Story

The numbers Mozilla published are hard to ignore. In April 2026, Firefox shipped 423 bug fixes, compared to just 31 in April 2025. That’s a roughly 13x increase in one year (423 / 31 ≈ 13.6). Mozilla documented twelve of those bugs in detail, ranging from a pair of sophisticated sandbox vulnerabilities to a 15-year-old parsing error in how Firefox handles an HTML element.

Brian Grinstead, a distinguished engineer at Mozilla, described the shift as sudden and unmistakable: “These things are actually just suddenly very good. We see that on our own internal scanning, we see that on external bug reports, and we see that in all sorts of signals across the industry.”

AI vulnerability detection is no longer a marginal productivity gain. It is redefining what “thorough” looks like in a security review.

Sandbox Bugs: The Holy Grail of Browser Security

Browser sandboxes are among the hardest targets in software security. Exploiting a sandbox requires writing a compromised patch, then using that patch to attack the most protected layer of the browser — a multi-step, creative, technically precise process.

Mozilla’s bug bounty program reflects this difficulty directly: a Firefox sandbox vulnerability earns up to $20,000, the highest reward available. Despite that incentive, human researchers rarely find sandbox bugs at scale. Mythos found more of them than human researchers ever had, and it did so consistently and at volume rather than occasionally.

That result alone justifies taking AI vulnerability detection seriously, even for organizations that have historically relied on manual audits and traditional fuzzing pipelines.

Traditional Bug Hunting vs. AI Vulnerability Detection

Understanding where AI vulnerability detection outperforms — and where it still needs human support — helps security teams decide where to invest. Here’s a direct comparison:

| Dimension | Traditional Bug Hunting | AI Vulnerability Detection (Mythos-era) |
|---|---|---|
| Speed | Days to weeks per audit cycle | Continuous, near-real-time scanning |
| Coverage | Focused on known vulnerability patterns | Can identify novel and dormant bugs |
| False Positive Rate | Low (human judgment filters noise) | Previously high; now substantially reduced with agentic self-assessment |
| Sandbox Vulnerabilities | Rare finds; requires elite researchers | Found at higher volume than human researchers |
| Bug Age | Typically catches recent regressions | Uncovers bugs dormant for 10–15+ years |
| Patch Generation | Human-written, high quality | AI drafts exist but usually require human rework |
| Cost per Bug Found | High (researcher time + bounty) | Lower per-finding, but tooling investment required |
| Scalability | Hard to scale without more headcount | Scales with compute, not headcount |

The table makes clear that AI vulnerability detection is not a replacement for security engineers — but it is a force multiplier that changes the math on what a security team can accomplish.

What Makes Mythos Different From Previous AI Security Tools?

The history of AI in cybersecurity is littered with tools that promised transformation and delivered noise. What makes the current generation — and Mythos specifically — different?

The False Positive Problem — Now Solved?

Earlier AI bug-finding tools had a structural problem: they were optimized to flag potential vulnerabilities, not to assess whether those flags were worth a human’s time. The result was alert fatigue. Security teams that deployed these tools often found themselves spending more time disproving AI findings than acting on real threats.

Mozilla’s researchers noted that this dynamic has now reversed. The models themselves have become capable enough to evaluate the quality of their own outputs and filter poor-quality findings before they reach a human reviewer.

This is the difference between a tool that finds bugs and a tool that finds bugs worth fixing — a distinction that determines whether AI vulnerability detection is actually useful in production environments.

Agentic Systems and Self-Assessment

The key architectural shift is the move to agentic systems — AI models that don’t just generate a single output, but plan, execute, reflect, and revise across multiple steps. For vulnerability detection, this means a model can write a potential exploit, test it, assess whether it demonstrates a real vulnerability, and discard it if the evidence doesn’t hold up.

This self-correcting loop is what allows Mythos to find sandbox vulnerabilities at scale. The task requires generating a compromised code patch and then attacking the browser’s most hardened layer using that patch. It’s a multi-step chain that requires both creative reasoning and technical precision — exactly the kind of task that benefits from agentic architecture.

AI vulnerability detection powered by agentic systems is a qualitatively different capability from the single-pass scanning tools of two or three years ago.
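The generate, test, assess, and discard loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Mythos's or Mozilla's actual pipeline: `Finding`, `triage`, and the `try_reproduce` callback are hypothetical names, and the callback stands in for whatever step writes and runs a proof-of-concept against the target.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Finding:
    """A candidate vulnerability (hypothetical structure for illustration)."""
    location: str
    hypothesis: str
    attempts: int = 0
    reproduced: bool = False


def triage(
    candidates: Iterable[Finding],
    try_reproduce: Callable[[Finding], bool],
    max_attempts: int = 3,
) -> List[Finding]:
    """Return only the candidates whose proof-of-concept reproduces.

    `try_reproduce` is an assumed callback: it represents the agent writing
    and running a test case, and returns True if the bug was demonstrated.
    """
    confirmed: List[Finding] = []
    for finding in candidates:
        for _ in range(max_attempts):
            finding.attempts += 1
            if try_reproduce(finding):
                finding.reproduced = True
                confirmed.append(finding)
                break
        # A candidate that never reproduces is discarded here, so it
        # never reaches a human reviewer.
    return confirmed


if __name__ == "__main__":
    # Stub standing in for a real test harness: only one candidate "reproduces".
    def fake_harness(finding: Finding) -> bool:
        return finding.location == "html_parser"

    candidates = [
        Finding("html_parser", "out-of-bounds read on malformed element"),
        Finding("css_loader", "possible use-after-free"),
    ]
    for confirmed in triage(candidates, fake_harness):
        print(confirmed.location, confirmed.attempts)
```

The design point is that the filter sits before the human: a report is forwarded only when there is evidence behind it, which is how a self-assessing system keeps triage load down.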

The Human-AI Partnership: Why Engineers Still Fix the Bugs

One of the most practically important findings from Mozilla’s experience is this: AI finds the bugs; humans fix them.

Despite well-documented progress in AI coding tools, Mozilla’s team found that AI-generated patches for the bugs Mythos discovered could not be deployed directly. The AI-written code served as a useful model — a starting point that illustrated the fix — but every single patch shipped to users was written by a human engineer and reviewed by another.

Grinstead was direct about this: “For the bugs we’re talking about in this post, every single one is one engineer writing a patch and one engineer reviewing it. We have not found it to be automatable.”

This is a useful corrective to the narrative that AI will fully automate software security. The current reality is a human-AI division of labor: AI vulnerability detection dramatically expands the surface area that can be audited, while human judgment remains essential for producing deployable, trustworthy fixes.

Security teams evaluating AI tools should plan their workflows accordingly. The ROI from these tools comes from finding more bugs faster — not from eliminating the engineering work of fixing them.
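A workflow that follows this division of labor could encode it as a simple merge gate. The sketch below is one hypothetical way to express "one engineer writing a patch and one engineer reviewing it"; `PatchSubmission` and `can_ship` are illustrative names, not part of any Mozilla or Anthropic tooling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PatchSubmission:
    """Hypothetical record of a fix proposed for an AI-reported bug."""
    bug_id: str
    author: str
    author_is_human: bool
    reviewer: Optional[str] = None
    reviewer_is_human: bool = False


def can_ship(patch: PatchSubmission) -> bool:
    """Allow a patch only if a human wrote it and a different human reviewed it."""
    if not patch.author_is_human:
        # AI-drafted code is treated as reference material, not as a commit.
        return False
    if patch.reviewer is None or not patch.reviewer_is_human:
        return False
    # The reviewer must be a second person, not the author.
    return patch.reviewer != patch.author
```

For example, `can_ship(PatchSubmission("BUG-1", "alice", True, "bob", True))` passes the gate, while the same patch with no reviewer, or with an AI listed as author, does not.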

What This Means for Attackers and Defenders

The emergence of powerful AI vulnerability detection is not a one-sided advantage. The same capabilities that allow Mythos to find bugs in Firefox are available — in some form — to threat actors. The question security professionals are now grappling with is: who benefits more?

The Responsible Disclosure Challenge

Anthropic followed responsible disclosure norms after Mythos found its cache of vulnerabilities, working with affected software vendors before making findings public. But the disclosure window creates an uncomfortable reality: in the month or more between discovery and patch, organizations with unpatched software are exposed.

As AI vulnerability detection tools proliferate, this window is likely to narrow — but the transition period carries real risk. Sophisticated threat actors are almost certainly running similar techniques, even with models less capable than Mythos.

Mozilla’s Grinstead offered a measured take: “It’s useful for both attackers and defenders, but having the tool available shifts the advantage a little bit to defense.”

Anthropic CEO Dario Amodei was more optimistic, arguing that the total number of exploitable bugs is finite: “If we handle this right, we could be in a better position than we started, because we fixed all these bugs. There are only so many bugs to find.”

The honest answer is that nobody knows yet how this balance will settle. What is clear is that AI vulnerability detection has permanently changed the speed and scale at which the security landscape can shift.

Key Takeaways for Security Teams

Whether you manage security for a major browser, an enterprise application, or an open-source project, the Mozilla-Mythos case study offers concrete lessons:

- AI vulnerability detection has crossed a quality threshold. The false positive problem that plagued earlier tools is substantially reduced in agentic, self-assessing systems. The cost-benefit calculation has changed.

- Start with the highest-value targets. Sandbox vulnerabilities and other high-severity bug classes are exactly where AI tools now outperform human researchers at scale. Prioritize these areas for AI-assisted audits.

- Plan for human-in-the-loop patch development. AI finds bugs; humans fix them. Build workflows that treat AI output as a bug report, not a code commit.

- Legacy code is not safe from scrutiny. Mythos found bugs that had been dormant in Firefox for 15 years. AI vulnerability detection does not respect the assumption that “old and stable” means “secure.”

- Responsible disclosure processes need to scale. As AI tools surface more vulnerabilities faster, disclosure and remediation pipelines must keep pace. Teams that haven’t stress-tested their disclosure workflows should do so now.

- Benchmark your current tooling. If your AI security tools are still generating high false positive rates, the industry has moved on. The current generation of agentic AI vulnerability detection tools sets a new baseline for what’s acceptable.
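To act on the benchmarking point, a team needs a number to track. One simple option, sketched here as an assumption rather than an industry standard, is the share of triaged AI reports that turned out not to be real bugs. The function name `triage_noise` and the labels `"confirmed"` and `"invalid"` are hypothetical.

```python
from collections import Counter
from typing import Iterable


def triage_noise(outcomes: Iterable[str]) -> float:
    """Share of triaged reports that were not real bugs.

    `outcomes` holds one label per triaged report: "confirmed" or "invalid".
    Any other label is ignored. Strictly speaking this ratio is the false
    discovery rate (invalid / all triaged reports), which is usually what
    teams mean when they talk about a tool's "false positive rate".
    """
    counts = Counter(outcomes)
    total = counts["confirmed"] + counts["invalid"]
    if total == 0:
        raise ValueError("no triaged reports to measure")
    return counts["invalid"] / total
```

Tracking this value per tool and per month gives a baseline for comparing an existing scanner against a newer agentic one on the same codebase.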

The Road Ahead for AI Vulnerability Detection

The Firefox case study is early evidence of a broader shift. AI vulnerability detection is not replacing security teams — it is expanding what those teams can accomplish. One engineer with access to a Mythos-tier tool can now audit code that would previously have required a team of specialists months to review.

This cuts both ways. On the positive side, software that has historically been under-audited (legacy systems, small open-source projects, embedded firmware) becomes auditable at a cost that organizations can actually absorb. On the challenging side, the window of safety for unpatched software is shrinking, and threat actors with access to similar tools will shrink it further.

The organizations best positioned for this transition are those that treat AI vulnerability detection as a core capability rather than a supplement — integrating it into their development pipelines, their bug bounty processes, and their incident response planning now, before the rest of the industry catches up.

Firefox’s 13x increase in monthly bug fixes is not an anomaly. It is a preview.