TokenSpeed is the open-source LLM inference engine you need if you’re running coding agents at scale — and it already outperforms TensorRT-LLM, NVIDIA’s state-of-the-art serving stack, by up to 11% on throughput benchmarks. Released in May 2026 by the LightSeek Foundation under the MIT license, TokenSpeed closes one of the most consequential gaps in modern AI infrastructure: the missing piece between raw model capability and production-grade agentic deployment.

What Is TokenSpeed?

Definition: TokenSpeed is an open-source LLM inference engine developed by the LightSeek Foundation, designed specifically to meet the throughput and latency demands of agentic workloads — multi-turn, long-context tasks like those performed by coding agents such as Claude Code, Codex, and Cursor.

Expansion: Most inference engines were optimized for short-form chatbot interactions. TokenSpeed takes a different stance. It is engineered around the reality that agentic systems routinely handle contexts exceeding 50,000 tokens across dozens of sequential turns, which requires optimizing per-GPU throughput and per-user perceived responsiveness at the same time. As of its public release, TokenSpeed is in preview status, and its GitHub repository is available under the permissive MIT license.

Why Agentic Inference Demands a New Kind of Engine

The inference bottleneck in AI deployment is not a new problem — but agentic workloads have made it dramatically harder. Traditional LLM serving was optimized for short prompts, single-turn responses, and relatively uniform batch sizes. Coding agents break every one of those assumptions.

The Dual-Metric Problem

Question: What makes agentic inference uniquely difficult to optimize?

Direct Answer: Agentic systems must simultaneously optimize two competing metrics:

- Per-GPU TPM (Tokens Per Minute): How many total tokens a single GPU can serve across all users — determining infrastructure cost and scalability.

- Per-User TPS (Tokens Per Second): How fast an individual user perceives the model responding — determining whether the agent feels usable in real time.

Most public benchmarks don’t capture this dual pressure at all. A system that maximizes throughput by batching aggressively will tank per-user TPS. A system that prioritizes latency will leave GPU capacity severely underutilized. TokenSpeed is designed to maximize per-GPU TPM while enforcing a per-user TPS floor — typically 70 TPS, and sometimes 200 TPS or higher on demanding deployments.

This is not a marginal engineering refinement. It is the core insight behind TokenSpeed’s entire architecture.
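The trade-off can be made concrete with a small constrained-selection sketch. It assumes a hypothetical sweep of batch sizes on a single GPU with made-up per-user TPS measurements; `OperatingPoint` and `best_operating_point` are illustrative names, not part of TokenSpeed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    """One measured serving configuration (all numbers below are invented)."""
    batch_size: int
    per_user_tps: float  # tokens per second seen by each concurrent user

    @property
    def per_gpu_tpm(self) -> float:
        # Aggregate tokens per minute: concurrent users * TPS * 60 seconds.
        return self.batch_size * self.per_user_tps * 60


def best_operating_point(points, tps_floor):
    """Return the highest-TPM point whose per-user TPS meets the floor, or None."""
    feasible = [p for p in points if p.per_user_tps >= tps_floor]
    if not feasible:
        return None
    return max(feasible, key=lambda p: p.per_gpu_tpm)


# Hypothetical sweep: larger batches raise total throughput but slow each user.
sweep = [
    OperatingPoint(batch_size=1, per_user_tps=220.0),
    OperatingPoint(batch_size=8, per_user_tps=140.0),
    OperatingPoint(batch_size=32, per_user_tps=75.0),
    OperatingPoint(batch_size=64, per_user_tps=45.0),
]

choice = best_operating_point(sweep, tps_floor=70.0)
print(choice.batch_size, choice.per_gpu_tpm)
```

In this invented sweep, batch size 64 has the highest raw throughput, but it falls below the 70 TPS floor, so the selection settles on batch size 32. Raising the floor to 200 TPS would push the choice all the way down to batch size 1.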

Inside TokenSpeed’s Architecture: Five Pillars That Change the Game

TokenSpeed’s design is structured around five interlocking subsystems. Each subsystem addresses a specific failure mode in existing inference engines when applied to agentic workloads.

1. Compiler-Backed Modeling with SPMD

What is SPMD?

SPMD (Single Program, Multiple Data) is a parallel execution pattern where all compute processes run the same program, but each operates on a different shard of data. It is the dominant paradigm in distributed deep learning because it enables clean, predictable parallelism across multiple GPUs.

In TokenSpeed, developers do not need to write the communication logic between GPU processes manually. Instead, they specify I/O placement annotations at module boundaries. A lightweight static compiler then automatically generates the required collective operations during model construction — eliminating an entire class of implementation errors that arise in hand-written distributed LLM code.

This local SPMD approach means less boilerplate, fewer bugs at initialization, and faster iteration when porting new model architectures.
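To illustrate the idea of deriving collectives from placement annotations, here is a minimal sketch. The placement vocabulary (`replicated`, `sharded`, `partial`), the `Module` class, and `plan_collectives` are assumptions made for this example; they are not TokenSpeed's actual annotation API, which the source does not show.

```python
from dataclasses import dataclass

# Placement vocabulary used in this sketch (hypothetical):
#   "replicated": every rank holds the full tensor
#   "sharded":    every rank holds one slice of the tensor
#   "partial":    every rank holds a full-shape partial sum
BOUNDARY_OP = {
    ("sharded", "replicated"): "all_gather",
    ("partial", "replicated"): "all_reduce",
    ("partial", "sharded"): "reduce_scatter",
    ("replicated", "sharded"): "local_slice",  # no communication, just indexing
}


@dataclass(frozen=True)
class Module:
    name: str
    input_placement: str
    output_placement: str


def plan_collectives(modules):
    """Walk adjacent modules and list the operation needed at each boundary."""
    plan = []
    for producer, consumer in zip(modules, modules[1:]):
        src, dst = producer.output_placement, consumer.input_placement
        if src == dst:
            continue  # placements already agree, nothing to insert
        op = BOUNDARY_OP.get((src, dst))
        if op is None:
            raise ValueError(
                f"no rule for {src} -> {dst} between {producer.name} and {consumer.name}"
            )
        plan.append((producer.name, consumer.name, op))
    return plan


# A tensor-parallel MLP: column-parallel up projection, row-parallel down
# projection, then a norm that expects the full (replicated) activation.
layers = [
    Module("up_proj", input_placement="replicated", output_placement="sharded"),
    Module("down_proj", input_placement="sharded", output_placement="partial"),
    Module("norm", input_placement="replicated", output_placement="replicated"),
]

print(plan_collectives(layers))
```

The only mismatch in this example is between `down_proj` (partial sums) and `norm` (replicated input), so the plan contains a single `all_reduce`. The model author declares placements; the communication follows mechanically.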

2. A Scheduler That Encodes Correctness in the Type System

The scheduler is where TokenSpeed makes its most architecturally distinctive bet. It makes a hard structural separation between two planes:

- Control Plane (C++): Implemented as a finite-state machine (FSM) that governs KV cache state transfer, resource ownership, and request lifecycle. Crucially, correctness constraints are enforced at compile time via the type system — not at runtime via convention or documentation.

- Execution Plane (Python): Handles the actual computation steps, keeping the development experience fast and accessible.

Why does this matter?

KV cache management is one of the most error-prone areas in LLM serving. Incorrect reuse, race conditions, or resource leaks in the KV cache can cause silent correctness failures — the kind that show up as subtly wrong model outputs rather than clear crashes. By encoding the valid state transitions for KV resources into a verifiable FSM, TokenSpeed catches these errors at compile time rather than discovering them in production.

This is a meaningful safety bet, and it’s one that distinguishes TokenSpeed from most Python-first inference frameworks.
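The shape of such a state machine can be sketched in a few lines. Note the important difference: TokenSpeed is described as enforcing these rules in C++ at compile time, whereas this Python sketch can only check them at runtime. The states and transitions below are a hypothetical lifecycle chosen for illustration, not the engine's real one.

```python
from enum import Enum, auto


class KVState(Enum):
    FREE = auto()       # block is unowned and may be handed out
    ALLOCATED = auto()  # block is reserved for a request but not yet written
    IN_USE = auto()     # block is being read or written by an active request
    CACHED = auto()     # block holds a reusable prefix and no active writer


# Allowed transitions (assumed for this example).
ALLOWED = {
    KVState.FREE: {KVState.ALLOCATED},
    KVState.ALLOCATED: {KVState.IN_USE, KVState.FREE},
    KVState.IN_USE: {KVState.CACHED, KVState.FREE},
    KVState.CACHED: {KVState.IN_USE, KVState.FREE},
}


class KVBlock:
    def __init__(self, block_id):
        self.block_id = block_id
        self.state = KVState.FREE

    def transition(self, new_state):
        """Move to new_state, rejecting any transition not listed in ALLOWED."""
        if new_state not in ALLOWED[self.state]:
            raise RuntimeError(
                f"block {self.block_id}: illegal transition "
                f"{self.state.name} -> {new_state.name}"
            )
        self.state = new_state


block = KVBlock(block_id=0)
for step in (KVState.ALLOCATED, KVState.IN_USE, KVState.CACHED,
             KVState.IN_USE, KVState.FREE):
    block.transition(step)

try:
    block.transition(KVState.IN_USE)  # using a block that was never allocated
except RuntimeError as err:
    print(err)
```

A compile-time version expresses the same table in the type system, so that code attempting the last call would fail to build instead of raising in production.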

3. A Pluggable, Hardware-Agnostic Kernel Layer

The kernel layer in TokenSpeed is not baked into the engine’s core. Instead, it is treated as a first-class modular subsystem with three key properties:

- A portable public API that abstracts hardware-specific implementations.

- A centralized kernel registry and selection model that dynamically routes to the best available kernel at runtime.

- An extensible plugin mechanism that supports heterogeneous accelerators — meaning TokenSpeed is not architecturally locked to NVIDIA hardware.

This hardware-agnostic design has significant long-term implications for teams running mixed GPU fleets or planning migrations between accelerator generations.
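A registry-and-selection model of this kind might look roughly like the sketch below. `KernelRegistry`, the backend names, and the priority scheme are all assumptions for illustration; the source does not document TokenSpeed's actual registry interface.

```python
from collections import defaultdict


class KernelRegistry:
    """Maps an operation name to candidate implementations per backend."""

    def __init__(self):
        # op name -> list of (priority, backend, function)
        self._kernels = defaultdict(list)

    def register(self, op, backend, priority=0):
        """Decorator that records fn as an implementation of op on backend."""
        def decorator(fn):
            self._kernels[op].append((priority, backend, fn))
            return fn
        return decorator

    def select(self, op, available_backends):
        """Return (backend, fn) with the highest priority among usable backends."""
        candidates = [
            entry for entry in self._kernels[op] if entry[1] in available_backends
        ]
        if not candidates:
            raise LookupError(f"no kernel for {op!r} on {sorted(available_backends)}")
        _, backend, fn = max(candidates, key=lambda entry: entry[0])
        return backend, fn


registry = KernelRegistry()


@registry.register("dot", backend="cpu", priority=0)
def dot_reference(xs, ys):
    # Portable fallback implementation.
    total = 0
    for x, y in zip(xs, ys):
        total += x * y
    return total


@registry.register("dot", backend="accel_x", priority=10)
def dot_accel_x(xs, ys):
    # Stand-in for a plugin-provided kernel on a hypothetical accelerator.
    return sum(x * y for x, y in zip(xs, ys))


backend, kernel = registry.select("dot", available_backends={"cpu", "accel_x"})
print(backend, kernel([1, 2], [3, 4]))
```

Callers only name the operation. Which implementation runs depends on what hardware is present, and a new accelerator plugs in by registering kernels without touching call sites.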

4. The MLA Kernel — Already Adopted by vLLM

The highest-performance kernel in TokenSpeed’s repertoire targets MLA (Multi-head Latent Attention), the attention variant used in models like DeepSeek. This is not an incremental improvement — the TokenSpeed MLA kernel has already been adopted by vLLM, one of the most widely used open-source inference frameworks in production.

Two specific optimizations drive its performance:

Decode Kernel: The query-sequence axis (q_seqlen) and head axis (num_heads) are grouped together to fully utilize NVIDIA Tensor Cores. This matters because coding-agent workloads often use models with fewer attention heads, which would otherwise leave Tensor Core utilization low during decode.

Prefill Kernel: A binary-version prefill kernel uses fine-tuned softmax implementation knobs, outperforming TensorRT-LLM’s MLA across all five typical prefill workload types measured in the benchmarks.

Combined, these two optimizations nearly halve decode latency relative to TensorRT-LLM on speculative decoding workloads at batch sizes 4, 8, and 16 with long prefix KV cache.
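One way to see why grouping the two axes helps is to count how many rows of a matrix-multiply tile carry real work. The sketch below assumes, for illustration only, a tile height of 64 rows, 16 attention heads per GPU, and 4 query tokens per decode step (as could occur with speculative decoding). None of these values come from the TokenSpeed benchmarks, and `tile_row_utilization` is a hypothetical helper. It also assumes the heads can be stacked as rows of one query matrix, which is plausible for MLA decode because all heads attend over the same compressed latent cache.

```python
import math


def tile_row_utilization(rows, tile_m):
    """Fraction of tile rows doing useful work when `rows` is padded to tiles."""
    tiles = math.ceil(rows / tile_m)
    return rows / (tiles * tile_m)


TILE_M = 64     # assumed tile height in rows (illustrative)
NUM_HEADS = 16  # assumed heads per GPU after tensor parallelism
Q_SEQLEN = 4    # assumed query tokens per decode step

# Heads alone form the row dimension: 16 useful rows in a 64-row tile.
heads_only = tile_row_utilization(NUM_HEADS, TILE_M)

# q_seqlen and num_heads grouped into one row dimension: 4 * 16 = 64 rows.
grouped = tile_row_utilization(Q_SEQLEN * NUM_HEADS, TILE_M)

print(heads_only, grouped)
```

Under these assumed numbers, the heads-only layout fills a quarter of each tile and the grouped layout fills it completely. Real kernels have more factors in play, so this is a rough intuition for the direction of the effect and not a performance model.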

5. SMG Integration for Low-Overhead CPU Orchestration

The final subsystem is SMG — a PyTorch-native component integrated for the CPU-side request entrypoint. Its role is to minimize handoff latency between CPU orchestration and GPU execution, reducing the overhead that accumulates across the many short CPU-side operations that happen during multi-turn agentic conversations.

For single-turn chatbots, this overhead is negligible. For a coding agent managing dozens of turns over a long context, it compounds quickly. SMG is TokenSpeed’s answer to that compounding cost.

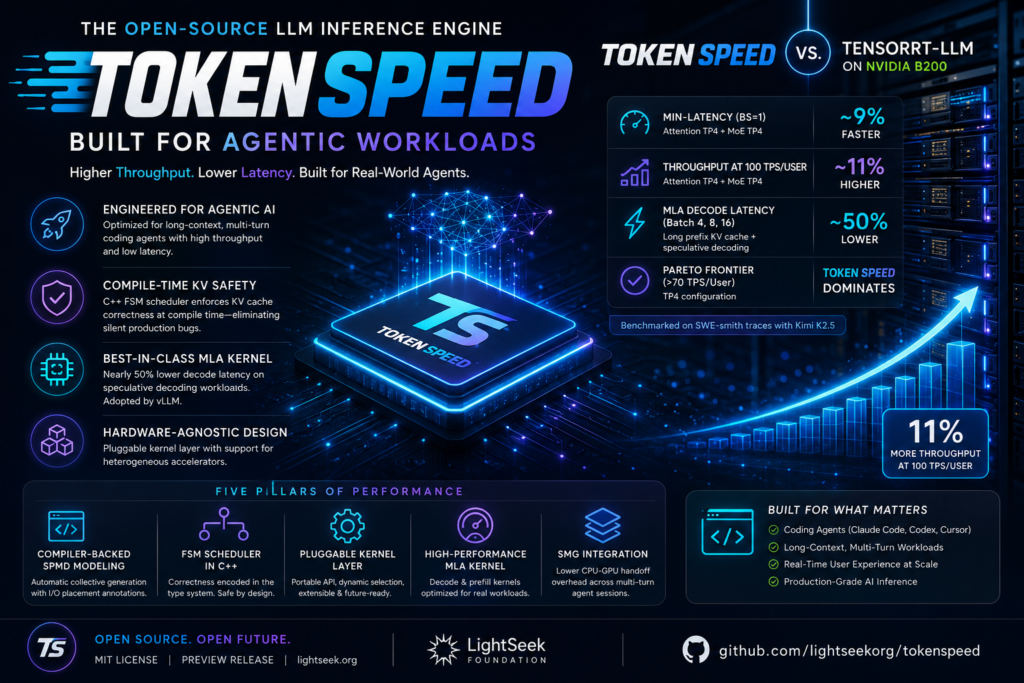

TokenSpeed vs. TensorRT-LLM: Benchmark Results on NVIDIA B200

The LightSeek Foundation, in collaboration with the EvalScope team, benchmarked TokenSpeed against TensorRT-LLM — the current performance standard on NVIDIA Blackwell — using SWE-smith traces as the workload. SWE-smith traces are specifically designed to mirror real production traffic from coding agents, making them significantly more representative than synthetic uniform-batch benchmarks.

The test model was Kimi K2.5, evaluated on NVIDIA B200 hardware in single (non-disaggregated) deployment mode.

| Metric | Configuration | TokenSpeed vs. TensorRT-LLM |
|---|---|---|
| Min-Latency (Batch Size 1) | Attention TP4 + MoE TP4 | ~9% faster |
| Throughput at 100 TPS/User | Attention TP4 + MoE TP4 | ~11% higher |
| MLA Decode Latency (Batch 4, 8, 16) | Long prefix KV cache + speculative decoding | ~50% lower (nearly half) |
| MLA Prefill Performance | All 5 typical coding-agent workload types | TokenSpeed wins across the board |
| Pareto Frontier (>70 TPS/User) | TP4 configuration | TokenSpeed dominates end-to-end |

Note: TP4 refers to tensor parallelism across 4 GPUs — a technique that shards model weights across multiple devices to reduce per-device memory pressure and latency. These benchmarks cover single deployment only; PD (prefill-decode) disaggregation support is undergoing cleanup and will be covered in a dedicated follow-up.

The results are not marginal. At 100 TPS/user, a realistic operational target for responsive coding agents, TokenSpeed delivers about 11% more throughput per GPU. At the min-latency end, it is about 9% faster than TensorRT-LLM, the strongest vendor-maintained alternative on Blackwell hardware. For teams paying NVIDIA B200 rental rates, that throughput improvement translates directly into infrastructure cost savings at scale.
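A quick back-of-the-envelope calculation shows what an 11% per-GPU throughput gain means for fleet size. The baseline of 100,000 TPM per GPU and the 100 million TPM target load are invented round numbers; only the 11% figure comes from the benchmark table.

```python
import math


def gpus_needed(total_tpm, per_gpu_tpm):
    """Smallest whole number of GPUs that can serve total_tpm."""
    return math.ceil(total_tpm / per_gpu_tpm)


TARGET_LOAD_TPM = 100_000_000    # hypothetical fleet-wide demand
BASELINE_PER_GPU_TPM = 100_000   # hypothetical baseline throughput per GPU
IMPROVED_PER_GPU_TPM = 111_000   # baseline plus the reported ~11% gain

baseline_gpus = gpus_needed(TARGET_LOAD_TPM, BASELINE_PER_GPU_TPM)
improved_gpus = gpus_needed(TARGET_LOAD_TPM, IMPROVED_PER_GPU_TPM)

# Serving the same load with 11% more throughput per GPU needs 1/1.11 of the fleet.
fraction_saved = 1 - 1 / 1.11

print(baseline_gpus, improved_gpus, round(fraction_saved, 4))
```

Note that an 11% throughput gain removes roughly 9.9% of the GPUs for a fixed load, not 11%, because the saving is 1 - 1/1.11. In this hypothetical fleet that is 99 of 1,000 GPUs.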

Key Features of TokenSpeed at a Glance

For quick reference, here is what TokenSpeed delivers out of the box:

- MIT License: Fully open-source, commercially usable without restrictions.

- Agentic-First Design: Explicitly optimized for long-context, multi-turn workloads — not retrofitted from chatbot-era engines.

- Compile-Time KV Safety: C++ FSM scheduler enforces KV cache correctness at compile time, eliminating a major source of silent production bugs.

- SPMD Compiler Integration: Automatic generation of collective operations eliminates manual distributed communication code.

- Hardware-Agnostic Kernel Layer: Pluggable kernel system supports heterogeneous accelerators beyond NVIDIA.

- Best-in-Class MLA Kernel: Already adopted by vLLM; nearly halves decode latency vs. TensorRT-LLM on speculative decoding workloads.

- SMG Integration: Reduces CPU-GPU handoff overhead that compounds over multi-turn agent sessions.

- Python Execution Plane: Keeps the developer-facing surface fast to iterate on, despite the C++ control plane.

- Benchmarked on SWE-smith Traces: Performance validated on workloads that actually reflect production coding-agent traffic.

- Preview Status: Currently in active preview with the team signaling ongoing development, including PD disaggregation support.

Who Should Care About TokenSpeed?

TokenSpeed is not a general-purpose inference engine competing with vLLM for the average use case. It is a precision tool aimed at a specific and growing segment of the AI infrastructure market. Here is who benefits most:

AI Infrastructure Engineers running coding agents at scale — particularly those using models with MLA attention (DeepSeek family, Kimi) — will see the most immediate benefit from TokenSpeed’s kernel optimizations and scheduler design.

Platform Teams at AI-native Companies that operate agent fleets for software development automation will find the dual-metric optimization (per-GPU TPM + per-user TPS floor) directly relevant to their SLA design.

Researchers and Open-Source Contributors interested in production-grade inference engine design will find TokenSpeed’s architectural choices — especially the FSM-based control plane and compiler-backed SPMD modeling — a meaningful reference implementation.

Organizations Evaluating Post-NVIDIA Hardware benefit from the hardware-agnostic kernel layer, which means TokenSpeed is architecturally positioned to support alternative accelerators as the GPU landscape evolves.

Frequently Asked Questions

What is TokenSpeed?

TokenSpeed is an open-source LLM inference engine released by the LightSeek Foundation in May 2026 under the MIT license. It is designed to serve agentic workloads (long-context, multi-turn inference tasks) and aims to match or exceed TensorRT-LLM performance on NVIDIA Blackwell hardware.

How does TokenSpeed compare to vLLM?

TokenSpeed and vLLM are complementary rather than direct competitors. TokenSpeed focuses on agentic workload optimization and has contributed its MLA kernel to vLLM, which that project has already adopted. vLLM remains a general-purpose inference framework; TokenSpeed targets the specific performance envelope of coding agents.

Is TokenSpeed production-ready?

As of its May 2026 release, TokenSpeed is in preview status. The core engine and benchmarks are public, but some features — notably PD (prefill-decode) disaggregation — are still being cleaned up. It is suitable for evaluation and staging environments; production deployments should monitor the official roadmap.

Does TokenSpeed only work on NVIDIA GPUs?

No. TokenSpeed’s kernel layer is designed to be hardware-agnostic via a plugin mechanism. While the published benchmarks focus on the NVIDIA B200 (a Blackwell-generation GPU), the architecture is designed to support heterogeneous accelerators.

What models work best with TokenSpeed?

The current MLA kernel optimizations are most impactful for models using Multi-head Latent Attention — including the DeepSeek family and Kimi K2.5 (the model used in benchmarking). Broader model support continues to expand as the project matures.

Final Verdict: TokenSpeed Sets a New Bar for Agentic Inference

The AI infrastructure landscape is entering a new phase. For the last few years, most optimization efforts in large language model deployment focused on a relatively narrow use case: chatbot-style interactions. These workloads were predictable, often short, and easier to batch efficiently. A user would submit a prompt, receive a response, and end the interaction. Inference systems were built around this assumption.

That world is changing quickly.

The rise of coding assistants, autonomous agents, research copilots, workflow orchestration systems, and multi-step reasoning tools has fundamentally changed what production inference looks like. These systems no longer operate as simple request-response applications. Instead, they maintain persistent context, manage long conversational histories, call external tools, generate intermediate outputs, revise prior steps, and often continue execution over dozens of turns.

This shift introduces an entirely different operational challenge.

In traditional deployments, throughput alone was often enough to evaluate infrastructure quality. If an engine could process more tokens per second or reduce GPU idle time, it was considered efficient. But agentic systems force teams to think beyond aggregate metrics.

What matters now is balancing two priorities that naturally compete with each other: system-wide utilization and user-perceived responsiveness.

A highly optimized batch scheduler may improve hardware efficiency but degrade user experience by increasing latency. On the other hand, aggressively prioritizing individual response speed may leave GPU resources underutilized, dramatically increasing infrastructure costs. Finding equilibrium between these two forces is no longer a nice engineering bonus—it is becoming a business requirement.

That is why this release feels strategically important.

Rather than retrofitting a chatbot-era engine to support modern workloads, this project appears to start from a different premise entirely: agentic inference is not simply “more tokens.” It is a distinct serving problem that deserves dedicated architectural decisions.

That distinction matters.

Long-context, multi-turn systems create bottlenecks in places many traditional inference stacks do not handle well. KV cache correctness becomes increasingly fragile as requests span longer sessions and more complex resource sharing. CPU orchestration overhead compounds over repeated scheduling cycles. Decode efficiency becomes a larger factor, especially when speculative execution or prefix caching is involved. Even small inefficiencies become amplified across production-scale deployments.

Addressing these problems requires more than kernel tuning.

The most compelling aspect of this system is that its design choices seem aligned with the real-world operational behavior of coding agents and autonomous workflows. The architecture reflects awareness that correctness, scalability, and developer ergonomics must coexist.

The compiler-backed modeling approach is especially notable. Distributed model serving is notoriously difficult to maintain, and manual communication logic introduces significant implementation risk. By abstracting this complexity into placement annotations and automated collective generation, the system reduces engineering overhead while also lowering the likelihood of subtle distributed bugs.

This is not merely a developer convenience. Faster model integration and safer architecture experimentation can materially improve iteration speed for infrastructure teams.

The scheduler design is arguably even more interesting.

Separating the control plane from the execution plane introduces a cleaner mental model for resource management. More importantly, encoding correctness rules through a finite-state machine and type constraints shifts critical failure detection earlier in the lifecycle.

This matters because inference bugs are rarely obvious.

A crashing service is inconvenient, but a silently incorrect service is dangerous. Invalid KV reuse, race conditions, or resource lifecycle issues may not produce explicit errors. Instead, they can degrade output quality or produce inconsistent behavior that is difficult to trace. Catching these issues before runtime is a meaningful architectural advantage.

Another strong signal is the hardware abstraction strategy.

The AI infrastructure market is still heavily NVIDIA-centric, but assuming permanent hardware homogeneity would be shortsighted. Accelerator diversity is increasing. Organizations are actively evaluating alternative compute providers, custom silicon, and mixed-cluster deployments. A serving engine designed with kernel portability and plugin extensibility is better positioned for that future.

This design choice may become more valuable over time than current benchmark numbers alone.

Of course, benchmarks still matter.

Published results showing improvements in throughput, latency, and decode efficiency against a leading vendor serving stack are difficult to ignore, particularly when tested on realistic coding-agent traces rather than synthetic workloads.

This benchmarking methodology deserves attention.

Many infrastructure announcements showcase performance gains using unrealistic or overly simplified scenarios. Uniform prompt lengths, predictable batching, or synthetic latency tests often fail to represent production traffic. Real coding-agent workloads are messy. They involve bursty requests, long prefixes, varied output lengths, and inconsistent interaction patterns.

Testing against traces that reflect actual production behavior makes the results substantially more relevant.

If those gains hold under broader adoption, the infrastructure cost implications are meaningful.

A modest percentage improvement in throughput may sound small in isolation, but at scale, even single-digit efficiency gains translate into major operational savings. GPU costs remain one of the most expensive components of AI deployment. Improving utilization while preserving responsiveness directly impacts margins, pricing flexibility, and system capacity.

This is particularly relevant for companies operating coding agents as core products rather than experimental features.

Better serving economics can enable lower prices, higher concurrency limits, or more generous user plans. In highly competitive markets, infrastructure efficiency becomes product strategy.

That is why releases like this matter beyond engineering circles.

This is not just another open-source repository or benchmark claim. It represents a broader maturation of the AI tooling ecosystem. The industry is moving from model obsession toward systems excellence.

Model quality remains important, but raw capability alone is no longer sufficient. A highly capable model running on inefficient infrastructure is commercially disadvantaged. Deployment architecture increasingly determines real-world product quality.

Fast models feel smarter.

Responsive systems encourage deeper engagement.

Stable infrastructure enables trust.

These are product realities, not just technical metrics.

For teams building coding assistants, internal automation systems, developer agents, or research copilots, evaluating infrastructure choices is no longer optional. The serving layer is becoming a strategic decision with direct impact on performance, cost, and user retention.

This project enters the market at an ideal moment.

Demand for long-context reasoning, autonomous execution, and persistent AI workflows is growing rapidly. Existing inference systems were not originally designed for these conditions. A purpose-built alternative with strong architectural opinions, open licensing, and early ecosystem relevance is likely to attract serious interest.

There is still work ahead. Preview-stage software always carries operational caution, and production teams should evaluate maturity, roadmap consistency, observability tooling, and deployment ergonomics before large-scale adoption.

But directionally, the signal is clear.

The future of AI deployment will not be won solely by larger models or marginal benchmark improvements. It will be shaped by the infrastructure layers that make advanced systems economically and operationally viable.

This release demonstrates a strong understanding of that reality.

For organizations building the next generation of AI-native products, this is a project worth watching closely, and potentially testing sooner rather than later.