AI agents are no longer a research curiosity. They are booking flights, managing cloud infrastructure, executing financial trades, and writing production code — autonomously, at scale, with minimal human supervision. The frameworks to build these agents — LangChain, AutoGen, CrewAI, Microsoft Agent Framework, Azure AI Foundry — have made it almost trivially easy to spin one up in an afternoon.

But here is the uncomfortable truth the industry has been dancing around: we have been far better at building AI agents than governing them.

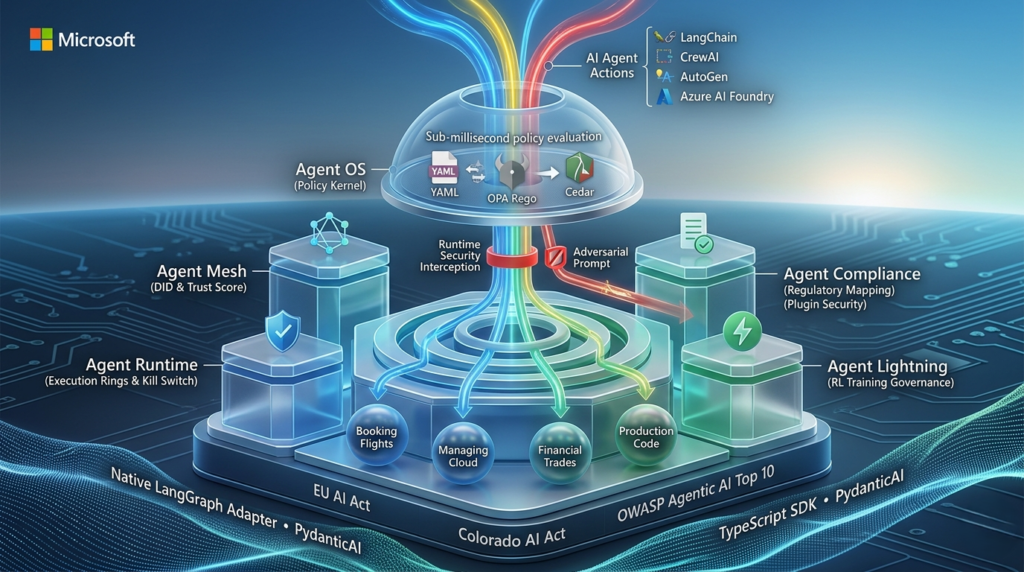

On April 2, 2026, Microsoft officially answered that gap with the release of the Agent Governance Toolkit — an open-source, MIT-licensed project that brings deterministic, sub-millisecond runtime security to autonomous AI agents. For developers who are shipping agents into production, this is arguably the most important infrastructure release of the year. Let’s unpack what it does, why it matters, and how you can start using it today.

The Governance Gap: Why Now?

Before diving into what the Agent Governance Toolkit does, it is worth understanding the problem it is solving — because the urgency is real and the regulatory clock is ticking.

In December 2025, OWASP published its Top 10 for Agentic Applications for 2026 — the first formal taxonomy of risks specific to autonomous AI agents. The list includes threats that sound almost science-fictional until you think about them carefully: goal hijacking, memory poisoning, identity abuse, rogue agents, and cascading failures. These aren’t edge cases. They are the predictable consequences of deploying systems that reason, plan, and act in the real world with limited oversight.

Regulators have noticed. Two major frameworks are on the horizon:

- EU AI Act — High-risk AI obligations take effect in August 2026

- Colorado AI Act — Becomes enforceable in June 2026

The infrastructure to govern autonomous agents, however, has not kept pace with the speed of building them. Developers are assembling powerful agentic pipelines using battle-tested frameworks, then shipping them into production environments where a single misconfiguration — or a single adversarial prompt — could trigger a cascade of irreversible real-world actions.

The Agent Governance Toolkit exists precisely to close that gap.

What Is the Agent Governance Toolkit?

The Agent Governance Toolkit is an open-source project released under the Microsoft GitHub organization, licensed under MIT. It is designed to provide runtime security governance for AI agents — intercepting, evaluating, and enforcing policy on every action an agent attempts to take, before that action executes.

The toolkit’s conceptual DNA comes from three proven engineering disciplines:

- Operating system kernels — privilege rings, process isolation, and syscall interception

- Service meshes — mutual TLS, cryptographic identity, and traffic policy enforcement

- Site Reliability Engineering (SRE) — SLOs, error budgets, and circuit breakers

The team at Microsoft asked a deceptively simple question: what if we took these proven, battle-tested patterns and applied them to AI agents? The result is a seven-package toolkit available in Python, TypeScript, Rust, Go, and .NET.

The Seven Packages: A Deep Dive

1. Agent OS — The Policy Kernel

At the heart of the Agent Governance Toolkit is Agent OS, a stateless policy engine that intercepts every agent action before execution at sub-millisecond latency (under 0.1ms at p99). Think of it as the kernel for your AI agents.

It supports three policy languages:

- YAML — for simple, human-readable rules

- OPA Rego — for complex, programmable logic

- Cedar — AWS’s purpose-built policy language for fine-grained authorization

The stateless architecture is not an accident. Because the policy engine holds no state between calls, horizontal scaling, containerized deployment, and auditability all come naturally. Every governance decision is observable and reproducible.
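To make the idea of a stateless policy check concrete, here is a minimal sketch in Python. It is not the Agent OS API: the `Rule`, `Action`, and `evaluate` names and the rule shape are hypothetical, chosen only to illustrate first-match, default-deny evaluation with no state kept between calls.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase

# Hypothetical rule and action shapes for illustration; not the Agent OS schema.
@dataclass(frozen=True)
class Rule:
    tool_pattern: str  # glob matched against the tool name
    effect: str        # "allow" or "deny"

@dataclass(frozen=True)
class Action:
    agent_id: str
    tool: str

def evaluate(rules: tuple[Rule, ...], action: Action) -> str:
    """Return the effect of the first matching rule, or "deny" if none match.

    The function is pure: the same rules and action always produce the same
    decision, which is what makes a decision reproducible and easy to audit.
    """
    for rule in rules:
        if fnmatchcase(action.tool, rule.tool_pattern):
            return rule.effect
    return "deny"

RULES = (
    Rule("db.read*", "allow"),
    Rule("db.*", "deny"),
    Rule("email.send", "allow"),
)

print(evaluate(RULES, Action("agent-1", "db.read_rows")))   # allow
print(evaluate(RULES, Action("agent-1", "db.drop_table")))  # deny
print(evaluate(RULES, Action("agent-1", "shell.exec")))     # deny (no rule matches)
```

Because `evaluate` reads nothing but its arguments, any number of copies can run side by side and a logged decision can be replayed later with the same inputs.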

2. Agent Mesh — Cryptographic Identity and Trust

Agent Mesh solves the identity problem in multi-agent systems. It provides:

- Decentralized Identifiers (DIDs) using Ed25519 cryptographic keys

- Inter-Agent Trust Protocol (IATP) for secure, authenticated agent-to-agent communication

- Dynamic trust scoring on a 0–1000 scale with five behavioral tiers

This last point deserves emphasis. The binary “trusted vs. untrusted” model breaks down in agentic systems where agents are constantly changing their behavior. A trust score with behavioral decay and dynamic privilege assignment is a fundamentally more accurate model of reality — and the Agent Governance Toolkit gets that right.
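A rough sketch of what a graded trust score might look like in Python follows. The tier names, tier boundaries, starting value, and penalty sizes are all assumptions made for illustration; the only details taken from the description above are the 0–1000 scale and the five tiers.

```python
from dataclasses import dataclass

# Tier floors and names are assumptions; only the 0-1000 range and the
# count of five tiers come from the toolkit description.
TIERS = (
    (800, "trusted"),
    (600, "standard"),
    (400, "limited"),
    (200, "probation"),
    (0, "untrusted"),
)

def tier_for(score: int) -> str:
    """Map a 0-1000 score to the first tier whose floor it meets."""
    for floor, name in TIERS:
        if score >= floor:
            return name
    raise ValueError("score must be non-negative")

@dataclass
class TrustScore:
    value: int = 500  # hypothetical starting score

    def _clamp(self) -> None:
        self.value = max(0, min(1000, self.value))

    def record_violation(self, penalty: int = 150) -> None:
        """Lower the score after a policy violation."""
        self.value -= penalty
        self._clamp()

    def record_success(self, reward: int = 10) -> None:
        """Raise the score slightly after a compliant action."""
        self.value += reward
        self._clamp()

score = TrustScore()
print(score.value, tier_for(score.value))  # 500 limited
score.record_violation()
print(score.value, tier_for(score.value))  # 350 probation
```

The asymmetry between a large penalty and a small reward is a design choice in this sketch: trust is slow to earn and quick to lose.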

3. Agent Runtime — Execution Rings and Kill Switch

Agent Runtime brings CPU-inspired privilege levels to agent execution. Agents operate within dynamic execution rings, constraining what they can access and modify based on their current trust state.

Key capabilities:

- Saga orchestration for multi-step transactions (with compensating actions on failure)

- Emergency kill switch for immediate agent termination when behavior goes out of bounds

- Resource limits enforced per ring level
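The saga pattern mentioned in the list above is easy to show in miniature. This is a generic Python sketch of the pattern, not Agent Runtime code; `run_saga` and the step names are hypothetical.

```python
from typing import Callable

# Each step pairs an action with the compensation that undoes it.
Step = tuple[Callable[[], None], Callable[[], None]]

def run_saga(steps: list[Step]) -> bool:
    """Run each action in order; on failure, undo completed steps in reverse."""
    completed: list[Callable[[], None]] = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensate)
    return True

log: list[str] = []

def fail_payment() -> None:
    raise RuntimeError("payment declined")

ok = run_saga([
    (lambda: log.append("reserve seat"), lambda: log.append("release seat")),
    (lambda: log.append("hold funds"), lambda: log.append("release funds")),
    (fail_payment, lambda: log.append("refund")),
])
print(ok)   # False
print(log)  # ['reserve seat', 'hold funds', 'release funds', 'release seat']
```

The failed step's own compensation never runs, because the step never completed; only the two earlier steps are rolled back, newest first.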

4. Agent SRE — Production Reliability for Agents

This is where the Agent Governance Toolkit starts to feel less like a security tool and more like a complete production operations platform. Agent SRE brings canonical SRE practices to agent systems:

- Service Level Objectives (SLOs) and error budgets

- Circuit breakers to prevent cascading failures

- Chaos engineering capabilities to test agent resilience

- Progressive delivery for phased rollouts of agent behavior changes

For teams that are accustomed to running reliable distributed systems, this is a familiar and welcome toolkit.
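For readers who have not met a circuit breaker before, here is a small, generic Python sketch of the pattern. It is not the Agent SRE implementation; the class name, thresholds, and injectable clock are assumptions made so the example is easy to follow and test.

```python
import time

class CircuitBreaker:
    """Open after `max_failures` consecutive failures; allow a retry
    once `reset_after` seconds have passed."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def allow(self) -> bool:
        """Return True if a call may proceed right now."""
        if self._opened_at is None:
            return True
        # Half-open: permit a trial call once the cool-down has elapsed.
        return self._clock() - self._opened_at >= self.reset_after

    def record_success(self) -> None:
        self._failures = 0
        self._opened_at = None

    def record_failure(self) -> None:
        self._failures += 1
        if self._failures >= self.max_failures:
            self._opened_at = self._clock()

# A fake clock makes the behavior deterministic for the demonstration.
now = [0.0]
breaker = CircuitBreaker(max_failures=2, reset_after=10.0, clock=lambda: now[0])
breaker.record_failure()
breaker.record_failure()
print(breaker.allow())  # False: the circuit is open
now[0] = 10.0
print(breaker.allow())  # True: cool-down elapsed, a trial call is allowed
```

In an agent setting, the "call" being guarded would typically be a downstream tool or another agent, so one failing dependency stops receiving traffic instead of dragging the whole pipeline down.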

5. Agent Compliance — Regulatory Mapping and Evidence Collection

Agent Compliance automates governance verification, producing compliance grades and mapping agent behavior to specific regulatory frameworks:

- EU AI Act (high-risk obligations)

- HIPAA

- SOC 2

- OWASP Agentic AI Top 10 — with evidence collection covering all 10 risk categories

This is the package that transforms the Agent Governance Toolkit from a developer security tool into an enterprise audit artifact. Automated compliance reporting means your security team and legal counsel can verify governance posture without manually reviewing logs.

6. Agent Marketplace — Plugin Supply Chain Security

The Agent Marketplace package addresses a risk that is easy to overlook: the supply chain. As agents become more capable, they rely increasingly on plugins, tools, and external services. Each one is a potential attack surface.

Agent Marketplace provides:

- Ed25519 signing and verification for plugins

- Trust-tiered capability gating — plugins only access what their trust level permits

- Full plugin lifecycle management
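Trust-tiered capability gating can be pictured as a simple set intersection. The Python sketch below uses made-up tier and capability names and is not the Agent Marketplace API; it only shows the principle that a plugin receives the overlap between what it asks for and what its tier allows.

```python
# Hypothetical tiers and capability names, for illustration only.
TIER_CAPABILITIES = {
    "unverified": frozenset(),
    "community": frozenset({"read_files"}),
    "verified": frozenset({"read_files", "network"}),
}

def granted(plugin_tier: str, requested: set[str]) -> set[str]:
    """Return only the requested capabilities that the plugin's tier permits.

    Unknown tiers get nothing, so the default is deny.
    """
    return requested & TIER_CAPABILITIES.get(plugin_tier, frozenset())

print(granted("community", {"read_files", "network"}))  # {'read_files'}
print(granted("unknown-tier", {"read_files"}))          # set()
```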

7. Agent Lightning — Governance During RL Training

The most forward-looking package in the toolkit, Agent Lightning applies the Agent Governance Toolkit’s policy enforcement to reinforcement learning training. Policy-enforced runners and reward shaping are designed to ensure zero policy violations during RL training, so governance starts during training rather than at deployment.

Framework Compatibility: Works With What You Already Use

A governance toolkit that forces you to rewrite your agent stack is a governance toolkit no one will use. Microsoft built the Agent Governance Toolkit to be framework-agnostic from day one.

Each integration hooks into native framework extension points:

| Framework | Integration Method |
|---|---|
| LangChain | Callback handlers |
| CrewAI | Task decorators |
| Google ADK | Plugin system |
| Microsoft Agent Framework | Middleware pipeline |
| LangGraph | Native adapter (published on PyPI) |
| OpenAI Agents SDK | Native adapter (published on PyPI) |
| Haystack | Upstream integration |
| PydanticAI | Working adapter |
| LlamaIndex | TrustedAgentWorker |
| Dify | Governance plugin in marketplace |
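As a flavor of what a decorator-style integration could look like, here is a framework-neutral Python sketch. The `governed` decorator, `PolicyViolation` exception, and deny list are hypothetical and do not come from the toolkit or from any of the frameworks in the table; the point is only that the check wraps an existing function without changing its body.

```python
import functools

class PolicyViolation(Exception):
    """Raised when a tool call is blocked before it runs."""

BLOCKED_TOOLS = {"delete_database"}  # hypothetical deny list

def governed(tool_name: str):
    """Decorator that consults the deny list before running the wrapped tool."""
    def wrap(func):
        @functools.wraps(func)
        def inner(*args, **kwargs):
            if tool_name in BLOCKED_TOOLS:
                raise PolicyViolation(f"{tool_name} is not permitted")
            return func(*args, **kwargs)
        return inner
    return wrap

@governed("search_flights")
def search_flights(origin: str, dest: str) -> str:
    return f"flights {origin}->{dest}"

@governed("delete_database")
def delete_database() -> str:
    return "deleted"

print(search_flights("BLR", "DEL"))  # flights BLR->DEL
try:
    delete_database()
except PolicyViolation as exc:
    print(f"blocked: {exc}")         # blocked: delete_database is not permitted
```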

The TypeScript SDK is available via @microsoft/agentmesh-sdk on npm. The .NET SDK is available as Microsoft.AgentGovernance on NuGet. Python teams can install the full toolkit with a single command:

```bash
pip install agent-governance-toolkit[full]
```

How the Agent Governance Toolkit Addresses OWASP’s Top 10

One of the most significant aspects of this release is the explicit, deliberate mapping to the OWASP Agentic AI Top 10. Every risk on the list has a corresponding mitigation in the toolkit:

| OWASP Risk | Toolkit Mitigation |
|---|---|
| Goal hijacking | Semantic intent classifier in policy engine |
| Tool misuse | Capability sandboxing + MCP security gateway |
| Identity abuse | DID-based identity with behavioral trust scoring |
| Supply chain risks | Plugin signing with Ed25519 + manifest verification |
| Code execution risks | Execution rings with resource limits |
| Memory poisoning | Cross-Model Verification Kernel (CMVK) with majority voting |
| Insecure communications | IATP encryption layer |
| Cascading failures | Circuit breakers and SLO enforcement |
| Human-agent trust exploitation | Approval workflows with quorum logic |
| Rogue agents | Ring isolation, trust decay, and automated kill switch |

This is defense in depth by design — multiple independent layers, each addressing different threat categories.

Open Source by Design: More Than a License

The MIT license is table stakes. What makes the Agent Governance Toolkit genuinely open-source by design is its architecture and stewardship philosophy.

The project ships as a monorepo with seven independently installable packages, meaning teams can adopt governance incrementally. Start with Agent OS for basic policy enforcement. Add Agent Mesh when multi-agent scenarios emerge. Layer in Agent SRE as your systems scale. You are never forced to buy the whole platform at once.

The quality standards are enterprise-grade:

- 9,500+ tests across all packages, with continuous fuzzing through ClusterFuzzLite

- SLSA-compatible build provenance

- OpenSSF Scorecard tracking

- CodeQL and Dependabot for automated vulnerability scanning

- Pinned dependencies with cryptographic hashes

- 20 step-by-step tutorials covering every package and feature

Microsoft has explicitly stated its aspiration to move the project into a foundation home — engaging with OWASP’s agentic AI community and foundation leaders to enable broader community governance. This is not a Microsoft product with an open-source license. It is intended to be community infrastructure.

Getting Started in Under 5 Minutes

If you want to try the Agent Governance Toolkit today, here is the fastest path:

```bash
git clone https://github.com/microsoft/agent-governance-toolkit
cd agent-governance-toolkit
pip install -e "packages/agent-os[dev]" -e "packages/agent-mesh[dev]" -e "packages/agent-sre[dev]"

# Run the governance demo
python demo/maf_governance_demo.py
```

The toolkit requires Python 3.10+ and runs at sub-0.1ms governance latency at p99. Individual packages are available on PyPI for incremental adoption.

Deploying on Azure

For production deployments, three Azure integration paths are supported:

- Azure Kubernetes Service (AKS) — Deploy Agent OS as a sidecar container alongside your agents for transparent, zero-code-change governance

- Azure AI Foundry Agent Service — Use the built-in middleware integration for agents built on Foundry

- Azure Container Apps — Run governance-enabled agents in a serverless container environment

Why This Matters for the Indian AI Developer Community

India’s AI developer ecosystem is maturing rapidly. Startups building on LangChain, teams deploying AutoGen workflows, and enterprises integrating AI agents into core business processes are all operating in an environment where the regulatory landscape is shifting globally.

The EU AI Act and Colorado AI Act are not hypothetical threats for Indian teams building for global markets. Enterprises procuring AI solutions increasingly require documented governance posture as a condition of vendor selection. The Agent Governance Toolkit gives Indian AI teams the same infrastructure that enterprise security teams in the US and Europe will be demanding — without the cost of proprietary tooling.

More importantly, the open-source nature of this toolkit means the community can contribute. Framework adapters, policy templates, regional compliance mappings — these are meaningful contribution opportunities for developers who want to shape how agentic AI governance evolves globally.

The Bigger Picture: Governing What We’ve Built

There is a pattern in technology where the capability to build something races ahead of the infrastructure to govern it. The internet needed firewalls and TLS before it could carry financial transactions safely. Microservices needed service meshes before they could run at the scale of modern cloud applications. Containers needed Kubernetes security policies before enterprises would trust them with sensitive workloads.

AI agents are at exactly this inflection point. The Agent Governance Toolkit is Microsoft’s argument that the time to build governance infrastructure is now — proactively, in the open, as shared community infrastructure — rather than reactively, after incidents force the industry’s hand.

That argument is correct. The toolkit is mature, thoughtfully designed, and built by a team that clearly understands both the threat landscape and the operational realities of production systems.

If you are building AI agents in any capacity — whether you are a solo developer experimenting with LangChain or an engineering team shipping agentic workflows to enterprise customers — the Agent Governance Toolkit belongs in your stack.

Quick Reference: Agent Governance Toolkit at a Glance

| Package | Core Function | Key Tech |
|---|---|---|
| Agent OS | Policy enforcement kernel | YAML, OPA Rego, Cedar |
| Agent Mesh | Cryptographic identity + trust | DIDs, Ed25519, IATP |
| Agent Runtime | Execution rings + kill switch | Saga orchestration |
| Agent SRE | Production reliability | SLOs, circuit breakers, chaos |
| Agent Compliance | Regulatory mapping | EU AI Act, HIPAA, SOC 2 |
| Agent Marketplace | Plugin supply chain security | Ed25519 signing |
| Agent Lightning | RL training governance | Policy-enforced runners |

GitHub: github.com/microsoft/agent-governance-toolkit

License: MIT

Languages: Python, TypeScript, Rust, Go, .NET

Have you started integrating agent governance into your stack? Share your experience in the comments — or join the Kalinga.ai community to connect with developers navigating the agentic AI landscape in India.

1. Governance & Technical Fundamentals

What exactly is “Sub-millisecond Runtime Security”?

In the world of autonomous agents, latency is the enemy of usability. The Agent Governance Toolkit includes a Policy Kernel (Agent OS) written in high-performance Rust and Go. It intercepts agent “calls” (requests to use a tool, access a database, or send an email) and evaluates them against your security policies in under 0.1ms at p99. This ensures that your agent remains secure without the “lag” that typically plagues middleware security layers.

Is this toolkit a replacement for frameworks like LangChain or AutoGen?

No. Think of LangChain or AutoGen as the engine and the Agent Governance Toolkit as the brakes and dashboard. It is designed to be framework-agnostic. It wraps around your existing agents using native adapters or middleware pipelines. You keep your favorite orchestration logic but gain a centralized layer for identity, trust, and kill-switches.

What are “Execution Rings” and how do they work?

Inspired by CPU privilege levels (Ring 0, Ring 3, etc.), the toolkit’s Agent Runtime assigns agents to specific rings based on their current Trust Score.

- Ring 0: Full system access (reserved for administrative agents).

- Ring 3: Restricted sandbox with no external network access and “read-only” database permissions.

If an agent’s behavior becomes erratic, the toolkit can automatically “demote” it to a more restrictive ring in real-time.

2. Security & Risk Mitigation

How does the toolkit handle the “Rogue Agent” problem?

A “Rogue Agent” is one that deviates from its original intent due to prompt injection or cascading logic errors. The toolkit addresses this via three layers:

- Semantic Intent Classifiers: It uses a small, local model to check if the action matches the agent’s declared goal.

- Trust Decay: If an agent attempts three unauthorized actions, its trust score drops, triggering an Emergency Kill Switch.

- Human-in-the-loop Quorum: For high-stakes actions (like trades > $10,000), the toolkit pauses execution until a human or a “quorum” of supervisor agents provides a signed approval.
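The quorum step in the list above reduces to two small checks, sketched here in Python. The function names and supervisor IDs are hypothetical, the $10,000 threshold is simply the example figure from the text, and signature verification of each approval is deliberately left out to keep the sketch short.

```python
def needs_approval(amount_usd: float, threshold_usd: float = 10_000) -> bool:
    """High-stakes actions are those strictly above the threshold."""
    return amount_usd > threshold_usd

def quorum_met(approvals: set[str], supervisors: set[str], required: int) -> bool:
    """True when at least `required` distinct, recognized supervisors approved.

    Approvals from IDs outside the supervisor set are ignored.
    """
    return len(approvals & supervisors) >= required

SUPERVISORS = {"alice", "bob", "risk-agent"}  # hypothetical approver IDs

print(needs_approval(25_000))                                  # True
print(quorum_met({"alice"}, SUPERVISORS, required=2))          # False
print(quorum_met({"alice", "mallory"}, SUPERVISORS, 2))        # False
print(quorum_met({"alice", "risk-agent"}, SUPERVISORS, 2))     # True
```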

Does it protect against “Memory Poisoning”?

Yes. The Cross-Model Verification Kernel (CMVK) compares the agent’s current reasoning path against historical “golden” paths. If the agent’s long-term memory appears to have been manipulated to favor a specific adversarial outcome, the CMVK flags the anomaly and reverts the agent to a “known-good” state.

3. Compliance & Regulation

How does this help with the EU AI Act and Colorado AI Act?

The Agent Compliance package automatically generates “Evidence Bundles.” If a regulator asks how you are managing “high-risk” AI obligations (as required by August 2026), you can provide an automated report showing:

- Deterministic logs of every decision made.

- Mapping of agent actions to OWASP Agentic AI Top 10 risks.

- Cryptographic proof of identity for every agent involved in a transaction.
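One common way to make a decision log tamper-evident is to chain entries together with hashes. Whether Agent Compliance does this is an assumption, not something stated in the release; the Python sketch below just shows the technique, with hypothetical function and field names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def append_entry(log: list[dict], decision: dict) -> None:
    """Append a decision, chaining it to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(decision, sort_keys=True)
    digest = hashlib.sha256((prev + payload).encode()).hexdigest()
    log.append({"decision": decision, "prev": prev, "hash": digest})

def verify(log: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        payload = json.dumps(entry["decision"], sort_keys=True)
        expected = hashlib.sha256((prev + payload).encode()).hexdigest()
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True

audit_log: list[dict] = []
append_entry(audit_log, {"agent": "agent-1", "tool": "db.read", "effect": "allow"})
append_entry(audit_log, {"agent": "agent-1", "tool": "db.drop", "effect": "deny"})
print(verify(audit_log))  # True

audit_log[0]["decision"]["effect"] = "deny"  # simulate tampering
print(verify(audit_log))  # False
```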

Can I use this for HIPAA or SOC 2 compliance?

Yes. While the toolkit doesn’t “make” you compliant on its own, it provides the audit artifacts you need. Using the Agent Marketplace to verify plugin signatures and Agent Mesh to encrypt inter-agent communication via IATP helps you meet the technical safeguards for data privacy and integrity that HIPAA and SOC 2 call for.

4. Implementation & Ecosystem

How difficult is the migration for existing agent stacks?

Microsoft has prioritized “Incremental Adoption.” You don’t have to implement all seven packages at once.

- Day 1: Install `agent-os` to start logging and observing agent actions.

- Day 15: Implement basic YAML policies to block “dangerous” tool calls.

- Day 30: Roll out Agent Mesh for cryptographic identity once you scale to multiple agents.

Is the toolkit truly Open Source?

Yes. It is licensed under the MIT License, meaning you can use, modify, and distribute it even in commercial, closed-source applications. Microsoft has also stated its aspiration to move the project to a neutral foundation home so that it becomes a community-governed standard rather than a vendor-locked product.

What is “Agent Lightning” and why is it used during training?

Most governance happens at inference (when the agent is running). Agent Lightning is unique because it applies these same policies during the Reinforcement Learning (RL) phase. This ensures the agent never “learns” to bypass security protocols to achieve a reward, effectively “baking” safety into the agent’s weights before it ever touches production.

5. Regional Impact (India & Global)

Why is this release significant for Indian AI startups?

For Indian developers building for a global market, the biggest hurdle is often “Trust.” By using an enterprise-grade governance stack like this, Indian startups can provide their US and EU clients with documented proof that their agents are secure, governed, and compliant with international laws. It levels the playing field, allowing smaller teams to compete with global tech giants on security posture.