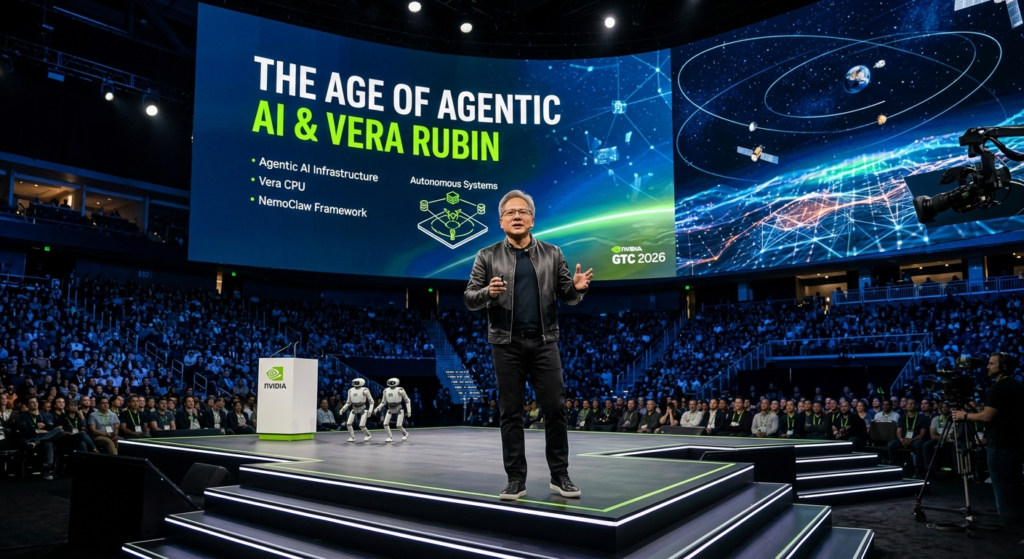

The world of technology moves fast, but NVIDIA CEO Jensen Huang moves faster. At the GTC 2026 keynote, the atmosphere was electric as Huang, donning his signature black leather jacket, laid out a vision that extends from the silicon in our data centers to satellites in orbit. This wasn’t just a product launch; it was a roadmap for the next decade of human-machine interaction.

The core message was clear: we have reached an “inference inflection point.” The industry is shifting from training massive models to deploying them at a scale that will touch every facet of global infrastructure. If you are an enterprise leader, a developer, or an investor, understanding these shifts is no longer optional—it is a requirement for survival in the Agentic AI era.

1. The Pivot to Agentic AI and the Vera CPU

The headline act of the keynote was the introduction of the Vera CPU and the broader Vera Rubin architecture. For years, NVIDIA has been synonymous with GPUs, but Jensen Huang is now positioning the company as a “full-stack AI infrastructure platform.”

Agentic AI—autonomous systems capable of reasoning, planning, and executing multi-step tasks—requires a different kind of horsepower. While GPUs excel at parallel processing for training, the sequential logic required for autonomous agents demands low-latency, highly efficient CPUs.

Key Specifications of the Vera CPU

- Efficiency: Delivers results with 2x the efficiency of traditional data center CPUs.

- Speed: Offers 50% faster processing for sequential AI tasks.

- Integration: Designed to work in perfect tandem with NVIDIA’s GPU clusters, creating a unified “AI Factory.”
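To put the speed figure in context, here is a back-of-the-envelope sketch using Amdahl's law. It assumes "50% faster" means the sequential part of an agent step runs 1.5x faster while everything else stays the same. The sequential fractions are illustrative guesses, not NVIDIA figures, and `overall_speedup` is a hypothetical helper name.

```python
def overall_speedup(sequential_fraction: float, sequential_speedup: float) -> float:
    """Amdahl's law: speedup of a whole task when only one part of it gets faster."""
    if not 0.0 <= sequential_fraction <= 1.0:
        raise ValueError("sequential_fraction must be between 0 and 1")
    if sequential_speedup <= 0:
        raise ValueError("sequential_speedup must be positive")
    unchanged = 1.0 - sequential_fraction  # share of time that is not accelerated
    return 1.0 / (unchanged + sequential_fraction / sequential_speedup)


# Assumed shares of an agent step spent in sequential CPU-bound logic.
for fraction in (0.2, 0.5, 0.8):
    gain = overall_speedup(fraction, 1.5)
    print(f"{fraction:.0%} sequential -> {gain:.2f}x overall")
```

The takeaway: the more of an agent's loop that is sequential reasoning rather than parallel number crunching, the closer the end-to-end gain gets to the full 1.5x.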

Huang’s strategy is brilliant: by owning the CPU, NVIDIA captures the entire compute cycle of an agent, from the initial reasoning to the final action.

2. NVIDIA NemoClaw: Democratizing AI Agents

One of the most actionable announcements for developers was the launch of NVIDIA NemoClaw. Inspired by the viral success of the OpenClaw project, NemoClaw is a reference stack designed to help enterprises build and deploy secure, autonomous agents.

NemoClaw addresses the biggest hurdle to Agentic AI adoption: security. By providing a “sandbox” environment and integrated privacy layers, it is designed to let companies give AI agents access to sensitive internal data while limiting the risk of data leakage.

How NemoClaw Changes the Game:

- Single-Command Deployment: Developers can stand up a “claw” (a long-running agent) with one command.

- Enterprise Security: Built-in safeguards ensure that agents operate within strict ethical and operational boundaries.

- Hardware Agnostic: While optimized for NVIDIA, it is designed to run across various environments, ensuring flexibility for the Agentic AI ecosystem.
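What might an "operational boundary" look like in practice? The sketch below is a generic illustration of the idea and is not NemoClaw's API. Every name in it (`SandboxedAgent`, `ToolNotAllowed`, the tool names) is hypothetical. It shows one simple pattern: an agent can only call tools that appear on an explicit allowlist.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet


class ToolNotAllowed(PermissionError):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass
class SandboxedAgent:
    # Hypothetical wrapper: only tools named in allowed_tools may run.
    allowed_tools: FrozenSet[str]
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def call_tool(self, name: str, argument: str) -> str:
        if name not in self.allowed_tools:
            raise ToolNotAllowed(f"tool '{name}' is outside this agent's boundary")
        if name not in self.tools:
            raise KeyError(f"tool '{name}' is allowed but not registered")
        return self.tools[name](argument)


agent = SandboxedAgent(
    allowed_tools=frozenset({"search_docs"}),
    tools={
        "search_docs": lambda query: f"results for {query}",
        "delete_records": lambda query: "deleted",  # registered but never allowed
    },
)

print(agent.call_tool("search_docs", "vera"))
try:
    agent.call_tool("delete_records", "all")
except ToolNotAllowed as err:
    print(f"blocked: {err}")
```

A production sandbox would also need process isolation, audit logging, and data-access controls. The allowlist is only the first layer.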

3. The $1 Trillion Vision: AI Infrastructure as the New Utility

Jensen Huang didn’t just talk about chips; he talked about money—specifically, $1 trillion in revenue potential through 2027. This figure represents the massive buildout of AI factories globally. Huang noted that computing demand has increased 1 million times in just the last two years.
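To get a feel for what a millionfold increase in two years implies, the quick calculation below converts the quoted figure into a doubling time. It only restates the number from the keynote as arithmetic and assumes smooth exponential growth over exactly 24 months. `implied_growth` is a hypothetical helper name.

```python
import math
from typing import Tuple


def implied_growth(factor: float, months: float) -> Tuple[float, float, float]:
    """Return (doublings, months per doubling, yearly factor) for smooth exponential growth."""
    doublings = math.log2(factor)
    months_per_doubling = months / doublings
    yearly_factor = factor ** (12 / months)
    return doublings, months_per_doubling, yearly_factor


doublings, months_per_doubling, yearly_factor = implied_growth(1_000_000, 24)
print(f"doublings: {doublings:.1f}")
print(f"months per doubling: {months_per_doubling:.2f}")
print(f"growth per year: {yearly_factor:,.0f}x")
```

That works out to roughly 20 doublings, or demand doubling about every 1.2 months, which is a 1,000x increase per year.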

To meet this demand, NVIDIA is expanding its reach into “Physical AI.” This includes everything from the Disney-powered “Olaf” robots that walked across the stage to autonomous vehicle platforms now being adopted by giants like BYD and Hyundai.

Comparison: The Generational Leap in AI Infrastructure

| Feature | Blackwell Generation (2024-25) | Vera Rubin / Feynman Generation (2026+) |
| --- | --- | --- |
| Primary Focus | Model Training & High-Performance Compute | Agentic AI & Real-time Inference |
| Core Hardware | GB200 Systems | Vera CPU + LP40 (LPU) |
| Interconnects | Traditional Copper/Optical | Co-packaged Optics (Light-based) |
| Scale | Global Data Centers | Earth-to-Space Infrastructure |
| Logic Mode | Massive Parallelism | Sequential Reasoning & Autonomy |

4. Reaching for the Stars: AI in Orbit

Perhaps the most surprising announcement was NVIDIA’s move into space. The Vera Rubin architecture, named after the astronomer who provided evidence for dark matter, is literally heading into orbit.

Partnering with companies like Starcloud, NVIDIA is designing the “Space-1” computer. Building a data center in space poses unique challenges, primarily cooling. Because there is no air in orbit to carry heat away by convection, NVIDIA is relying on advanced radiative cooling systems to run Agentic AI workloads in satellite constellations. This allows for real-time data processing in space, reducing the need to beam massive datasets back to Earth.

5. The Inference Inflection Point: Actionable Insights for Businesses

If your business is still only thinking about chatbots, you are missing the bigger picture. The move toward Agentic AI means shifting from tools that answer questions to tools that solve problems.

Strategic Recommendations:

- Audit Your Infrastructure: The transition to Agentic AI will require a mix of GPU power for training and high-efficiency CPU power for execution. Plan your hardware refreshes around architectures like Vera Rubin.

- Focus on Privacy Early: Use tools like NemoClaw to build “internal-first” agents. The value of AI is highest when it can safely interact with your proprietary data.

- Shift to Inference: As training costs stabilize, the real competitive advantage will lie in how fast and cheaply you can run your models. Optimize for inference latency today to be ready for the Agentic AI wave of tomorrow.
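If you want to start optimizing for inference latency, the first step is measuring it. The sketch below is a minimal, framework-agnostic timing harness that uses only the Python standard library. `fake_inference` is a stand-in for whatever model call you actually make, and `measure_latency` is a hypothetical helper, not part of any NVIDIA tool.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(call: Callable[[], object], runs: int = 50, warmup: int = 5) -> Dict[str, float]:
    """Time repeated calls and report median, 95th percentile, and worst case in ms."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    for _ in range(warmup):  # warm caches before timing
        call()
    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    samples_ms.sort()
    p95_index = min(len(samples_ms) - 1, int(0.95 * len(samples_ms)))
    return {
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": samples_ms[p95_index],
        "max_ms": samples_ms[-1],
    }


def fake_inference() -> str:
    # Placeholder for a real model call; sleeps about 2 ms.
    time.sleep(0.002)
    return "ok"


if __name__ == "__main__":
    print(measure_latency(fake_inference))
```

Track the 95th percentile and not just the median. An agent that chains ten calls together will hit the slow tail far more often than a single chatbot reply does.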

The Future is Autonomous

GTC 2026 proved that NVIDIA is no longer just a chipmaker; it is the architect of a new digital reality. By bridging the gap between digital reasoning and physical action through Agentic AI, Jensen Huang is ensuring that NVIDIA remains the heartbeat of the modern world. Whether it’s a robot snowman on stage or a data center orbiting the planet, the era of autonomous intelligence is here.