Imagine waking up one morning to discover that more than half the people “reading” your website aren’t people at all. No eyes. No hands. No coffee cups. Just relentless, tireless software agents crawling your pages at machine speed, thousands of times a second.

That future isn’t a dystopian fantasy — it’s a forecast. According to Cloudflare CEO Matthew Prince, AI bot traffic will exceed human traffic on the internet by 2027. Speaking at the SXSW conference in Austin in March 2026, Prince laid out a vision of the web that is being fundamentally transformed by the rise of generative AI — and it has enormous implications for every business, developer, marketer, and website owner on the planet.

This isn’t just a statistic to bookmark and forget. It’s a tectonic shift in how the internet works, who — or what — is using it, and how organizations need to prepare. In this article, we’ll unpack what this means, why it’s happening, what the real-world consequences are, and what you can do about it right now.

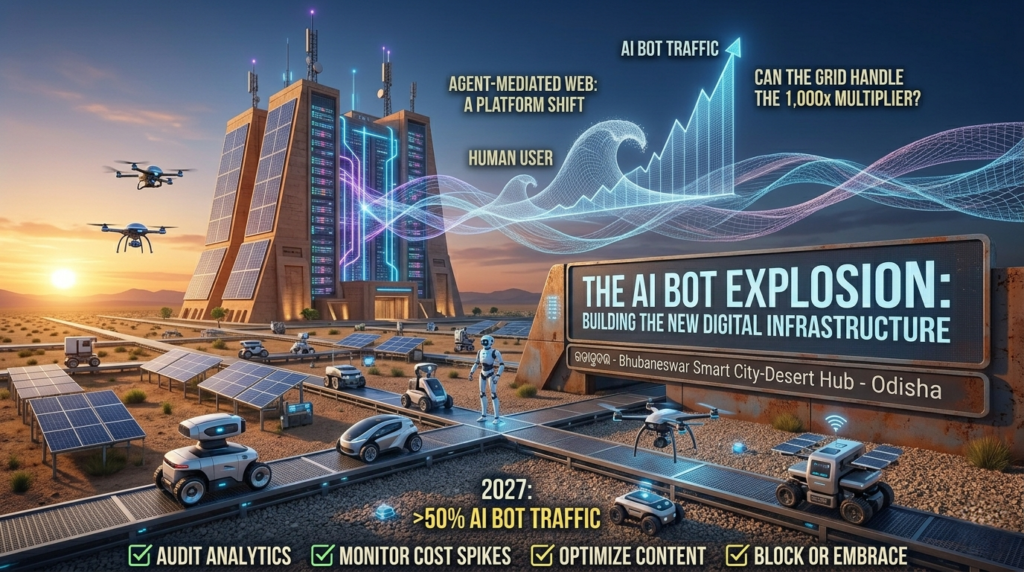

Understanding the surge in AI bot traffic is now critical for digital survival. As generative AI tools move from simple chat interfaces to proactive assistants, the volume of bot requests hitting your servers can grow far faster than human clicks: by Prince’s estimate, an agent may visit around 1,000 times as many sites as a person doing the same task.

This shift means that managing AI bot traffic is no longer just a technical task for IT. It is a core business strategy. If the forecast holds and AI bot traffic overtakes human traffic by 2027, websites that haven’t prepared their infrastructure will face rising costs and skewed data.

To stay ahead, you must implement specialized tools to identify, filter, and leverage AI bot traffic effectively. This ensures your most valuable content remains accessible to both humans and the machines that now serve them.

From 20% to Over 50%: The Explosive Rise of AI Bot Traffic

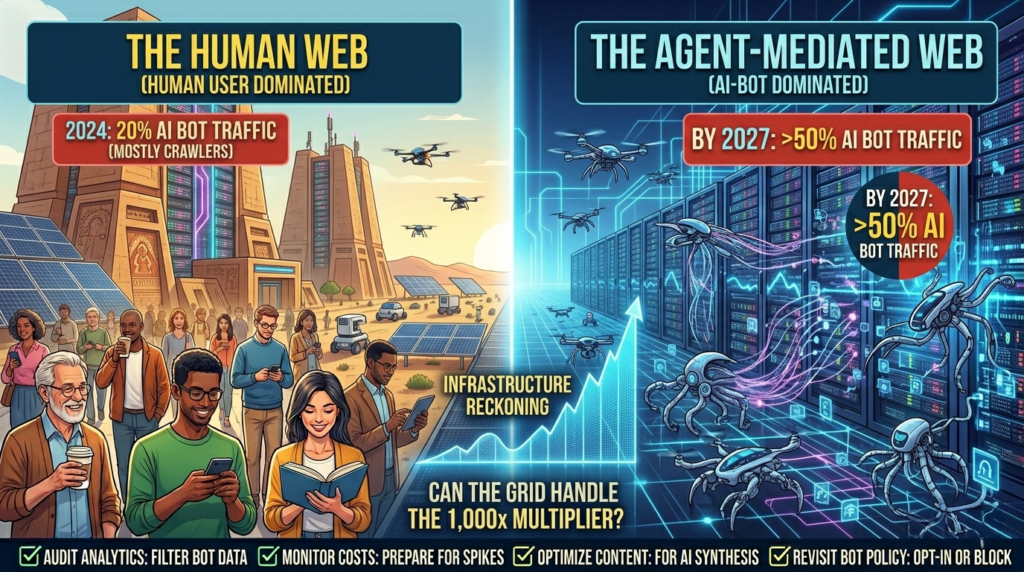

Before generative AI became a mainstream phenomenon, the internet was already no stranger to bots. Prince noted that, historically, roughly 20% of all internet traffic was bot-generated. The biggest player? Google’s web crawler, tirelessly indexing billions of pages so that search results could be served to human users.

Beyond Google and a handful of other reputable crawlers from companies like Bing and Apple, the bot landscape was relatively contained — mostly scammers, scraping operations, and the occasional security scanner. It was manageable. It was understood. IT teams knew what to expect.

Then generative AI changed everything.

With the explosion of large language model (LLM)-powered chatbots, AI assistants, and autonomous AI agents, the demand for fresh, relevant, up-to-date web content has gone parabolic. Every time a user asks an AI chatbot a question, whether it’s about comparing laptop specs, planning a trip, or understanding a medical symptom, the AI agent behind that query may be crawling not five websites, but five thousand.

“If a human were doing a task, let’s say you were shopping for a digital camera, and you might go to five websites. Your agent or the bot that’s doing that will often go to 1,000 times the number of sites that an actual human would visit,” Prince explained. The implications of that multiplier are staggering when you think about the millions of AI-powered queries being processed every single minute of every day.

This is what’s driving the surge in bot traffic, and it’s not slowing down.

Why AI Agents Browse So Differently from Humans

To understand why AI bot traffic is scaling so dramatically, it helps to understand how AI agents actually work when they’re browsing the web on a user’s behalf.

Human web browsing is, by nature, selective and deliberate. We read headlines, skim articles, follow a handful of links, and call it a day. We’re constrained by time, attention, and cognitive bandwidth. Even the most enthusiastic researcher rarely visits more than 20–30 websites on a single topic in one sitting.

AI agents operate under an entirely different paradigm:

- They don’t get tired. An AI agent can crawl continuously for hours, visiting thousands of pages without diminishing returns.

- They prioritize breadth. To give a well-informed answer, AI models benefit from synthesizing information across many sources, not just a few.

- They move fast. Automated bots can make HTTP requests at speeds completely impossible for human users — milliseconds per page versus the many seconds it takes a person to read and process information.

- They’re insatiable. Generative AI has what Prince described as an “insatiable need for data,” constantly seeking to ground its outputs in the most current and accurate information available.

The result? By Prince’s estimate, an agent visits roughly 1,000 times as many sites per task as a human would. When you scale that across millions of daily AI interactions, the math becomes overwhelming in a hurry. Cloudflare, which serves as infrastructure for roughly one-fifth of all websites globally, is in a unique position to observe this trend in real time, and what it is seeing is traffic growing relentlessly with no sign of a plateau.
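To make the multiplier concrete, here is a back-of-the-envelope sketch in Python. The function name and the input figures (five sites per human task, the share of tasks handed to agents) are illustrative assumptions, not Cloudflare data; only the 1,000x ratio comes from Prince’s remarks. The sketch also ignores the roughly 20% of traffic that was already bot-generated.

```python
def agent_share_of_visits(delegated_fraction: float,
                          sites_per_human_task: int = 5,
                          agent_multiplier: int = 1000) -> float:
    """Return the fraction of all site visits that are made by agents.

    delegated_fraction: share of tasks handed to an AI agent (0.0 to 1.0).
    All defaults are hypothetical values chosen for illustration.
    """
    if not 0.0 <= delegated_fraction <= 1.0:
        raise ValueError("delegated_fraction must be between 0 and 1")
    # Visits made directly by people for the tasks they keep.
    human_visits = (1 - delegated_fraction) * sites_per_human_task
    # Visits made by agents, which fan out across many more sites per task.
    agent_visits = delegated_fraction * sites_per_human_task * agent_multiplier
    total = human_visits + agent_visits
    return agent_visits / total if total else 0.0


if __name__ == "__main__":
    for fraction in (0.0001, 0.001, 0.01):
        share = agent_share_of_visits(fraction)
        print(f"{fraction:.2%} of tasks delegated: {share:.1%} of visits from agents")
```

Under these assumptions, agents account for about half of all visits once roughly one task in a thousand is delegated, which is why a small change in user behavior can produce a large change in traffic mix.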

The Infrastructure Reckoning: Can the Internet Handle It?

The surge in AI bot traffic isn’t just a theoretical problem. It’s a physical one. Every page crawled, every API called, every data request fulfilled requires real servers, real bandwidth, and real electricity. The internet is not infinite — and we’ve seen what happens when traffic spikes beyond what the infrastructure can absorb.

Prince drew a direct comparison to the COVID-19 pandemic, when internet usage surged almost overnight as billions of people shifted to remote work, streaming video, and online education simultaneously. Platforms like Netflix, YouTube, and Disney+ reported unprecedented loads. Parts of the internet buckled under the strain, and some European ISPs actually asked streaming platforms to reduce their quality settings to prevent network collapse.

“This growth is more gradual, but unlike Covid, where it spiked over two weeks and then it kind of plateaued at the new high, we’re seeing internet traffic grow and grow and grow, and we don’t see anything that’s going to slow it down or stop it,” Prince warned.

The key difference is that the AI-driven surge isn’t a temporary shock — it’s a permanent structural shift. And that has serious implications across several dimensions:

Data Centers and Power Consumption

More bot traffic means more server load, which means more data centers, more cooling systems, and more energy. The AI boom is already straining global electricity grids and accelerating investment in data center construction. The shift from human traffic to bot traffic will only intensify these pressures.

Network Latency and Page Performance

As bot traffic saturates bandwidth, legitimate human users could experience slower page load times — particularly on sites that don’t have robust caching and content delivery network (CDN) infrastructure in place. This has direct consequences for SEO, user experience, and conversion rates.

Security Challenges

The rise of generative AI web crawlers creates new attack surfaces. Distinguishing a benign AI agent from a malicious scraping bot, or from a bot designed to conduct denial-of-service attacks, becomes much harder when bot traffic is the majority. Traditional CAPTCHA systems and IP-based blocking strategies are increasingly inadequate.

The New Architecture: Agent Sandboxes and On-Demand Infrastructure

One of the most forward-thinking parts of Prince’s SXSW presentation was his vision of how the internet’s infrastructure needs to evolve to support AI agents.

Today, when an AI agent performs a task on your behalf — say, planning a vacation or comparing insurance quotes — it’s doing so using relatively primitive tools: making web requests, parsing HTML, and relaying information back to the user. The underlying infrastructure wasn’t designed for this use case.

Prince envisions a future where specialized, ephemeral computing environments — essentially “sandboxes” — are spun up dynamically to host AI agents for the duration of a task and then torn down the moment the task is complete. Think of it like opening a new tab in your browser: the operation should be instantaneous, the environment isolated, and the cleanup automatic.

“What we’re trying to think about is, how do we actually build that underlying infrastructure where you can — as easily as you open a new tab in your browser — you can actually spin up new code, which can then run and service the agents that are out there,” Prince explained.

He envisions a world where millions of these sandboxes are created every second. That’s not hyperbole — it’s a natural consequence of AI agents handling millions of concurrent user requests globally, each requiring its own isolated, secure execution environment.

This represents a fundamental rethinking of web architecture:

| Traditional Web Infrastructure | AI-Agent-First Infrastructure |
|---|---|
| Static servers responding to human requests | Dynamic sandboxes serving autonomous agents |
| Optimized for low-volume, high-engagement sessions | Optimized for high-volume, rapid-fire queries |
| Security via authentication and CAPTCHA | Security via agent identity verification and behavioral analysis |
| CDN for caching static content | Real-time content delivery for context-aware AI queries |
| Bot detection as a defensive measure | Bot management as a core infrastructure layer |

What This Means for Website Owners and Businesses

If you manage a website, run digital marketing campaigns, or operate any kind of online business, the shift toward AI bot traffic exceeding human traffic should be front and center in your strategic planning. Here’s what it means practically:

1. Your Analytics Data Is Already Compromised

Traditional web analytics tools measure sessions, page views, and user journeys. But if AI bots are crawling your site at scale, those numbers are increasingly noisy. Bot traffic inflates page view counts, distorts bounce rates, and can skew session duration metrics — all of which can lead to bad business decisions if taken at face value.

Action item: Audit your analytics setup to filter known bot traffic. Implement bot detection tools and examine your server logs for unusual crawl patterns.
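As a starting point for that log audit, the sketch below counts requests per crawler in a web server access log. It assumes the common "combined" log format, where the user agent is the last quoted field, and it matches against a short, non-exhaustive list of user-agent substrings used by some publicly documented AI crawlers. The function names and sample log lines are hypothetical, and user-agent strings can be spoofed, so treat the result as a first pass rather than proof of identity.

```python
import re
from collections import Counter

# Illustrative, non-exhaustive substrings found in the user-agent
# strings of some publicly documented AI crawlers.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "CCBot", "PerplexityBot", "Bytespider")

# In the combined log format the user agent is the last quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def classify(user_agent: str) -> str:
    """Return the matching crawler token, or 'other' if none matches."""
    lowered = user_agent.lower()
    for token in AI_CRAWLER_TOKENS:
        if token.lower() in lowered:
            return token
    return "other"


def count_by_crawler(log_lines):
    """Count requests per crawler token across an iterable of log lines."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if match:  # lines without a trailing quoted field are skipped
            counts[classify(match.group(1))] += 1
    return counts


if __name__ == "__main__":
    # Made-up sample lines using documentation-reserved IP addresses.
    sample = [
        '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
        '198.51.100.7 - - [01/Mar/2026:10:00:01 +0000] "GET /about HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    ]
    print(count_by_crawler(sample))
```

In practice you would pass an open log file to `count_by_crawler` and compare the totals against what your analytics tool reports for the same period.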

2. Server Costs May Rise Unexpectedly

If your hosting plan bills by bandwidth or compute usage, aggressive AI bot crawling could spike your costs without generating any corresponding revenue. This is especially true for smaller sites that don’t have robust bot-blocking configurations in place.

Action item: Review your hosting contracts and set up traffic monitoring alerts. Consider working with a CDN provider that offers intelligent bot management.
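A traffic alert does not need to be elaborate to be useful. The sketch below flags the most recent hour when its request count is several times the median of the preceding hours. The function name, the 3x threshold, and the minimum history length are all assumptions picked for illustration; a hosted monitoring service would normally handle this for you.

```python
from statistics import median


def is_traffic_spike(hourly_requests, threshold=3.0, min_history=6):
    """Return True if the latest hour exceeds threshold x the median of earlier hours.

    hourly_requests: request counts per hour, oldest first, latest last.
    """
    if len(hourly_requests) <= min_history:
        return False  # not enough history to establish a baseline
    *history, latest = hourly_requests
    baseline = median(history)
    return baseline > 0 and latest > threshold * baseline


if __name__ == "__main__":
    quiet_day = [100, 110, 95, 105, 98, 102, 120]
    crawl_burst = [100, 110, 95, 105, 98, 102, 900]
    print(is_traffic_spike(quiet_day), is_traffic_spike(crawl_burst))
```

The median is used instead of the mean so that one earlier burst does not raise the baseline and hide the next one.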

3. Content Strategy Needs to Evolve

As generative AI web crawlers increasingly mediate the relationship between content and users, the question of how your content is discovered and represented by AI systems becomes as important as traditional SEO. AI agents don’t just rank pages — they synthesize them. That means content quality, accuracy, and structured data markup are going to matter more than ever.

Action item: Invest in well-structured, authoritative, factually accurate content. Use schema markup to help AI systems understand your content’s context and intent. Think beyond keywords — think about how your content answers questions comprehensively.
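For the schema markup step, one common approach is to embed a schema.org description of the page as JSON-LD. The sketch below builds such a block for an article. The helper name is hypothetical, the field values you pass in are placeholders, and the handful of properties shown is a minimal selection rather than a complete or recommended set.

```python
import json


def article_json_ld(headline, author_name, date_published, description):
    """Build a schema.org Article description wrapped in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2026-03-15"
        "description": description,
    }
    # Escape "</" so the JSON cannot prematurely close the script tag.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(article_json_ld(
        headline="Example headline",
        author_name="Jane Doe",
        date_published="2026-03-15",
        description="A one-sentence summary of the page.",
    ))
```

The resulting tag goes in the page’s HTML, typically inside `<head>`.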

4. You Have the Power to Opt Out (For Now)

Cloudflare and other providers now offer tools that allow website owners to block AI bot traffic they don’t want. This is a meaningful choice. Some site owners may welcome the data contribution to AI training or the referral traffic that AI assistants can generate. Others — particularly publishers and content creators concerned about intellectual property — may prefer to opt out entirely.

Action item: Research and implement appropriate bot access policies using your CDN or hosting provider’s bot management tools. Revisit your robots.txt file to specify which AI crawlers, if any, are welcome.
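Before publishing a robots.txt change, it helps to check that the rules do what you intend. The sketch below uses Python’s standard `urllib.robotparser` module to test a policy against specific user agents. The policy itself is a hypothetical example that blocks one AI crawler and allows everything else, and `is_allowed` is an illustrative helper name. Keep in mind that robots.txt is advisory: well-behaved crawlers honor it, but it does not enforce anything on its own.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block one AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("GPTBot", "Googlebot"):
        allowed = is_allowed(ROBOTS_TXT, agent, "https://example.com/articles/post")
        print(agent, "allowed" if allowed else "blocked")
```

Pairing a check like this with the bot management tools your CDN provides covers both the crawlers that follow the rules and the ones that do not.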

The Bigger Picture: AI as a Platform Shift

Prince was emphatic that the rise of AI bot traffic isn’t just a technical curiosity — it’s a fundamental platform shift on par with the transition from desktop computing to mobile.

“I think the thing that people don’t appreciate about AI is it’s a platform shift,” Prince said, drawing parallels to earlier transformative moments in the web’s history. “The way that you’re going to consume information is completely different.”

Every major platform shift in internet history has reshuffled the winners and losers. The transition from desktop to mobile forced businesses to rethink their entire user experience, distribution strategy, and monetization model. Companies that adapted — Uber, Instagram, Airbnb — thrived. Those that didn’t — countless once-dominant desktop-first businesses — faded.

The shift from a human-first internet to an AI agent-mediated internet is no different. The businesses that will thrive are those that understand how AI agents discover, evaluate, and relay information — and optimize accordingly.

This is already reshaping industries:

- Publishing and media are grappling with AI systems consuming their content without generating traditional page views or ad revenue.

- E-commerce is beginning to see AI agents conduct comparative shopping on users’ behalf, changing how product discovery works.

- SEO and content marketing are being upended as AI-generated answers increasingly reduce click-through rates from search results.

- Cybersecurity is scrambling to build detection systems capable of distinguishing malicious bots from benign AI agents.

Preparing for a Bot-Majority Internet: An Action Plan

Whether you’re a solopreneur running a personal blog or a CTO managing enterprise infrastructure, here’s a practical framework for navigating the shift:

For Content Creators and Marketers:

- Prioritize content depth and accuracy over keyword density alone

- Implement structured data (schema.org) to help AI systems parse your content correctly

- Monitor for AI-generated summaries of your content and assess whether they’re driving traffic or cannibalizing it

- Consider whether AI licensing agreements or opt-out policies align with your business model

For Developers and IT Professionals:

- Audit your current bot detection and filtering capabilities

- Implement rate limiting to protect server resources from overly aggressive crawlers

- Explore CDN solutions with built-in AI traffic management features

- Plan for increased infrastructure costs as bot traffic continues to grow
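To illustrate the rate limiting item above, here is a minimal token bucket sketch in Python. It is one common technique among several, not something Prince or Cloudflare prescribed. The class names, the per-client key, and the rate and capacity values are assumptions for illustration, and the clock is injectable so the behavior can be tested without waiting.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start with a full bucket
        self.updated = clock()

    def allow(self):
        """Consume one token if available and report whether the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class ClientRateLimiter:
    """Keep one bucket per client key, for example an IP address or user agent."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets = {}

    def allow(self, client_key):
        bucket = self.buckets.get(client_key)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[client_key] = bucket
        return bucket.allow()


if __name__ == "__main__":
    limiter = ClientRateLimiter(rate=1.0, capacity=2)
    print([limiter.allow("crawler-a") for _ in range(3)])
```

A request that is refused would typically receive an HTTP 429 response. This sketch keeps every bucket in memory indefinitely, so a production version would need to evict idle clients and share state across servers.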

For Business Leaders and Strategists:

- Treat AI agent discoverability as a core strategic concern alongside traditional SEO

- Revisit your analytics infrastructure to ensure decision-making data is clean and bot-filtered

- Monitor the regulatory environment, as governments are beginning to scrutinize AI bot behavior and data usage practices

- Invest in understanding how your target customers are using AI assistants to research, compare, and purchase products and services

Conclusion: The Web Is Changing — Is Your Strategy?

The headline — that AI bot traffic will surpass human traffic by 2027 — might sound alarming. And in some ways, it is. But it’s also an invitation.

The companies and creators that treat this shift as a threat will spend the next few years playing defense, reactive and scrambling. The ones that treat it as an opportunity will be asking smarter questions: How do I become the source AI agents trust? How do I build content and infrastructure that serves the agent-mediated internet as well as it serves human users? How do I thrive in a world where the first “reader” of everything I publish is a machine?

Matthew Prince’s prediction isn’t a warning siren — it’s a weather forecast. The storm is coming regardless. The question is whether you’re building shelter or standing in an open field.

Start building.