The factory floor is undergoing its most radical transformation in decades — and it has nothing to do with faster conveyor belts or bigger machines. Physical AI, the convergence of advanced artificial intelligence with real-world robotic systems, is quietly dismantling the boundary between digital intelligence and physical action. For the first time in industrial history, machines are not just executing instructions — they are learning, adapting, and reasoning.

If you run a manufacturing operation, manage a supply chain, or build automation systems, you are about to face a pivotal question: Are you prepared for a world where every industrial company becomes, by necessity, a robotics company?

This guide breaks down exactly what physical AI means for industrial automation, how the underlying technology actually works, which sectors stand to gain the most, and what practical steps forward-thinking organizations should take right now.

What Is Physical AI? A Clear Definition for Industrial Decision-Makers

Before examining its industrial impact, it is worth establishing a precise definition.

Physical AI refers to artificial intelligence systems that perceive, reason about, and act within the physical world — not just in software environments, but through robotic bodies, sensors, and actuators. Unlike conversational AI or data analytics AI, physical AI must contend with the unpredictability of the real world: variable lighting, irregular objects, imprecise surfaces, and the unforgiving consequences of errors.

The distinction from traditional robotics automation is critical:

| Feature | Traditional Industrial Robotics | Physical AI-Powered Robotics |
|---|---|---|
| Task flexibility | Fixed, single-task | Generalized, multi-task |
| Programming approach | Hand-coded routines | Learned policies from data |
| Adaptation to change | Requires reprogramming | Self-adjusts in real time |
| Simulation dependency | Limited | Deep integration with digital twins |
| Deployment timeline | Weeks to months | Hours to days |
| Suitable workforce scale | Large enterprise only | SMEs to enterprise |

Traditional robotic arms on assembly lines are extraordinary at repetitive precision — but break the routine and they fail immediately. Physical AI systems, by contrast, are built to handle variability. They generalize skills from training data, use world models to anticipate outcomes, and improve through deployment experience.

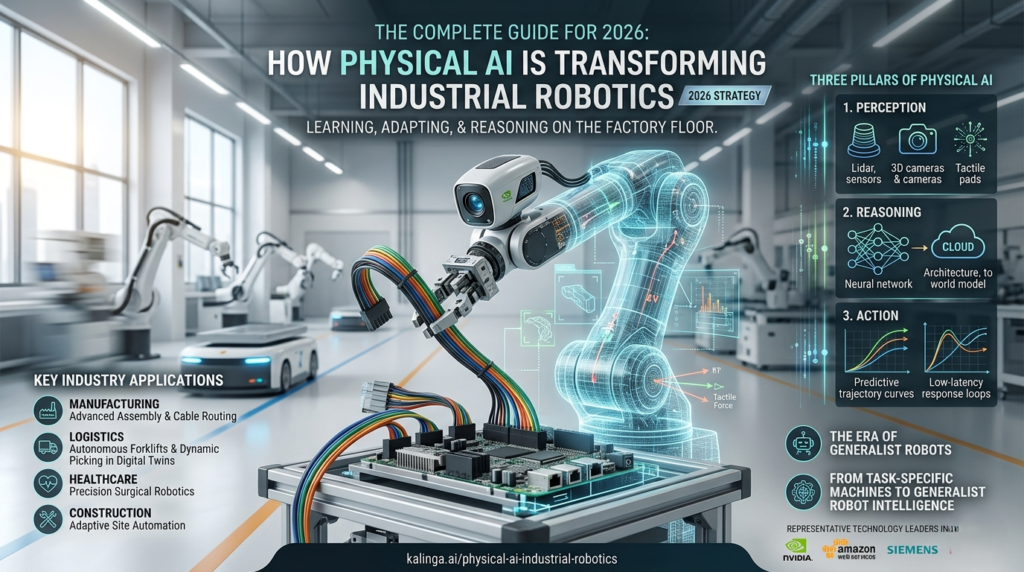

The Three Pillars of Physical AI

Understanding physical AI requires grasping three interdependent components:

- Perception: The robot’s ability to sense and interpret its environment using cameras, lidar, depth sensors, and tactile feedback.

- Reasoning: AI models that process sensory data and decide on actions, drawing on world models trained on vast simulation and real-world datasets.

- Action: Low-level control systems that translate decisions into precise physical movements with the reliability required for industrial deployment.

The breakthrough happening right now is that all three pillars are maturing simultaneously — and being integrated into unified platforms capable of deployment at scale.
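The three pillars can be pictured as one repeating loop. The sketch below is a toy illustration only, assuming a one-dimensional positioning task; the function names (`perceive`, `reason`, `act`), the proportional rule standing in for a learned policy, and all numeric values are hypothetical and not taken from any specific platform.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the (hypothetical) sensor reports on each cycle."""
    position_mm: float
    target_mm: float


def perceive(true_position_mm: float, target_mm: float) -> Observation:
    # Perception: a real system would fuse camera, lidar, and tactile data.
    # Here the "sensor" simply reports the simulated state.
    return Observation(position_mm=true_position_mm, target_mm=target_mm)


def reason(obs: Observation, gain: float = 0.5) -> float:
    # Reasoning: decide how far to move. A learned policy would sit here;
    # this stand-in is a proportional rule on the remaining error.
    return gain * (obs.target_mm - obs.position_mm)


def act(position_mm: float, command_mm: float, max_step_mm: float = 20.0) -> float:
    # Action: low-level control clamps the command to the actuator's limit.
    step = max(-max_step_mm, min(max_step_mm, command_mm))
    return position_mm + step


def run_loop(start_mm: float, target_mm: float,
             tolerance_mm: float = 0.5, max_cycles: int = 100):
    """Repeat perceive -> reason -> act until within tolerance of the target."""
    position = start_mm
    for cycle in range(max_cycles):
        obs = perceive(position, target_mm)
        if abs(obs.target_mm - obs.position_mm) <= tolerance_mm:
            return position, cycle
        position = act(position, reason(obs))
    return position, max_cycles


final_position, cycles = run_loop(start_mm=0.0, target_mm=100.0)
print(f"stopped at {final_position} mm after {cycles} cycles")
```

The point of the structure is that each pillar can be upgraded independently: a better sensor model, a learned policy, or a tighter controller slots into its own function without changing the loop.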

The Shift from Task-Specific Machines to Generalist Robot Intelligence

For decades, industrial robotics operated on a simple principle: specialize deeply, deploy narrowly. A welding robot welds. A pick-and-place arm picks and places. This approach delivered measurable efficiency gains but imposed enormous rigidity on manufacturing operations.

The era of the generalist-specialist robot has arrived.

The most significant development in physical AI is the emergence of foundation models for robotics — AI architectures analogous to large language models, but trained on physical interaction data. These models give robots a general understanding of manipulation, locomotion, and object interaction that can be fine-tuned to specific industrial tasks with minimal additional training.

Why Generalist Robot Brains Change Everything

Consider the traditional deployment challenge for a mid-sized electronics manufacturer. Introducing a new product line historically required weeks of robotic reprogramming, physical reconfiguration, and validation testing. With physical AI:

- A generalist robot brain can be fine-tuned to a new assembly task using a small number of demonstrations

- Simulation environments validate performance before a single real-world test is run

- Updates propagate across robot fleets without halting production

This compresses deployment timelines from months to days and opens automation to dynamic production environments that were previously off-limits.
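To make "fine-tuned using a small number of demonstrations" concrete, here is a deliberately tiny sketch of the idea, often called behavior cloning. It assumes the policy is a single gain that maps a position error to a motion command, which is far simpler than a real robot foundation model; `fine_tune_gain`, the pretrained value, and the demonstration data are all hypothetical.

```python
def fine_tune_gain(pretrained_gain, demos, learning_rate=0.01, epochs=200):
    """Adjust a one-parameter policy (action = gain * error) to match demos.

    demos is a list of (error, demonstrated_action) pairs. Training starts
    from the pretrained gain rather than from scratch, which is the sense
    in which this is fine-tuning.
    """
    gain = pretrained_gain
    for _ in range(epochs):
        # Gradient of the mean squared error between the policy's action
        # and the demonstrated action, taken with respect to the gain.
        grad = sum(2 * (gain * error - action) * error
                   for error, action in demos) / len(demos)
        gain -= learning_rate * grad
    return gain


# Four hypothetical demonstrations in which the operator moved 0.8x the error.
demos = [(10.0, 8.0), (5.0, 4.0), (-4.0, -3.2), (2.0, 1.6)]
tuned_gain = fine_tune_gain(pretrained_gain=0.5, demos=demos)
print(round(tuned_gain, 4))
```

Real systems fine-tune millions of parameters on image and joint-state data, but the shape of the process is the same: start from a general policy, then nudge it toward what the demonstrations show.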

Key Takeaway: The value of physical AI is not just smarter individual robots — it is the ability to scale intelligence across entire robotic fleets with dramatically reduced engineering overhead.

How Physical AI Platforms Actually Work: A Technical Breakdown

Physical AI does not emerge from a single innovation. It is the product of a technology stack working in concert. Here is how the core components fit together.

World Foundation Models: Teaching Robots About Physical Reality

World models are AI systems trained to predict how the physical world responds to actions. When a robot arm reaches for an object, a world model anticipates how that object will move, deform, or respond. This predictive capability is foundational to robust robot behavior.
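A minimal sketch of how a robot might use such a prediction: try each candidate action in the model first, then execute the one whose predicted outcome lands closest to the goal. The `world_model` here is a hand-written stand-in with an assumed friction factor, not a learned model, and every name and number is illustrative.

```python
def world_model(position_mm: float, push_mm: float, friction: float = 0.8) -> float:
    # Stand-in for a learned predictor: the object is assumed to travel
    # only a fraction of the commanded push distance.
    return position_mm + friction * push_mm


def choose_action(position_mm: float, goal_mm: float, candidates):
    # Simulate every candidate in the model and keep the one whose
    # predicted end position is closest to the goal.
    return min(candidates,
               key=lambda push: abs(world_model(position_mm, push) - goal_mm))


best_push = choose_action(position_mm=0.0, goal_mm=40.0,
                          candidates=[10.0, 25.0, 50.0, 75.0])
print(best_push)
```

Note that the naive choice, pushing 40 mm to move the object 40 mm, is not even the best option once the model accounts for friction. That gap between the commanded action and the physical result is what a world model is there to capture.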

The most advanced world foundation models now unify three previously separate capabilities:

- Synthetic data generation: Creating photorealistic virtual training environments at scale

- Vision reasoning: Understanding spatial relationships, object categories, and scene context

- Action simulation: Predicting the physical consequences of robot movements

Training robots on purely real-world data is slow and expensive. World models enable the generation of vast synthetic datasets that capture the diversity of real industrial environments — different lighting, varied object positions, edge-case scenarios — without the cost or risk of real-world collection.
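One common way to get that diversity is to randomize scene parameters before each synthetic sample is rendered, a technique usually called domain randomization. The sketch below only samples the parameters; rendering is out of scope. The `SceneConfig` fields and their ranges are assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneConfig:
    """Parameters for one synthetic training scene (hypothetical fields)."""
    light_lux: float
    object_x_mm: float
    object_y_mm: float
    object_yaw_deg: float
    occluded: bool


def sample_scene(rng: random.Random) -> SceneConfig:
    # Each call draws a new combination of lighting and object pose.
    return SceneConfig(
        light_lux=rng.uniform(200.0, 1500.0),
        object_x_mm=rng.uniform(-150.0, 150.0),
        object_y_mm=rng.uniform(-100.0, 100.0),
        object_yaw_deg=rng.uniform(0.0, 360.0),
        occluded=rng.random() < 0.1,  # roughly 1 in 10 scenes is an edge case
    )


def generate_dataset(n_scenes: int, seed: int = 0):
    # A fixed seed makes the dataset reproducible for later audits.
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n_scenes)]


scenes = generate_dataset(1000)
print(len(scenes), "scene configurations sampled")
```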

Simulation Frameworks and Digital Twins

Before any physical AI system touches real hardware, it must be validated exhaustively in simulation. Modern simulation frameworks built on physically accurate rendering engines create digital twins of entire production lines — not just individual robots, but their interactions with conveyor systems, human workers, variable materials, and environmental conditions.

This approach, known as virtual commissioning, is becoming standard practice among leading industrial robot manufacturers. Vendors with a combined global install base exceeding 2 million industrial robots are already moving toward AI-driven virtual commissioning workflows, in which physics-accurate digital twins let engineers identify failure modes, optimize robot paths, and validate safety protocols before deployment.

The economic impact is substantial. Virtual commissioning reduces physical setup time, eliminates costly hardware damage during testing, and enables parallel development — engineering teams can run thousands of simulation iterations simultaneously.
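As a rough picture of what running many simulation iterations at once looks like, the sketch below fans a batch of seeded trials out to a worker pool and tallies the outcomes by failure mode. `simulate_trial` is a hypothetical stand-in for a call into a real simulator, and its thresholds are invented. Python threads are used only to keep the example self-contained; a production setup would more likely distribute trials across processes, machines, or GPUs.

```python
import random
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def simulate_trial(seed: int) -> str:
    """Run one randomized pick attempt and return its outcome label."""
    rng = random.Random(seed)  # per-trial seed keeps every run reproducible
    part_offset_mm = rng.gauss(0.0, 1.0)
    conveyor_speed = rng.uniform(0.8, 1.2)
    if abs(part_offset_mm) > 2.5:
        return "missed_grasp"
    if conveyor_speed > 1.15 and abs(part_offset_mm) > 1.5:
        return "late_pick"
    return "ok"


def run_campaign(n_trials: int, workers: int = 8) -> Counter:
    # Submit all trials to the pool and count outcomes as they complete.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return Counter(pool.map(simulate_trial, range(n_trials)))


report = run_campaign(2000)
for outcome, count in report.most_common():
    print(f"{outcome}: {count}")
```

Because each trial is tied to a seed, any failure found in the report can be replayed exactly, which is what makes this kind of campaign useful for diagnosing failure modes rather than just counting them.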

Edge AI Inference: Intelligence at the Machine

Cloud-based AI inference is inadequate for real-time industrial robotics. When a robotic arm is assembling a circuit board at speed, latency measured in milliseconds matters. Physical AI systems require edge computing platforms embedded directly in robot controllers — compact, high-performance modules capable of running complex AI inference locally with the functional safety guarantees industrial environments demand.

This embedded intelligence allows robots to respond to their immediate environment in real time, without round-trip communication to a data center. It also enables operation in environments with limited connectivity — construction sites, remote logistics hubs, or high-electromagnetic-interference factory floors.
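A quick back-of-the-envelope calculation shows why milliseconds matter. The figures below (a tool moving at 1 m/s, 2 ms for on-device inference, 60 ms for a cloud round trip) are assumptions chosen for illustration, not measurements from any particular system.

```python
def travel_during_latency_mm(tool_speed_m_per_s: float, latency_ms: float) -> float:
    # 1 m/s sustained for 1 ms covers exactly 1 mm, so the product of
    # speed in m/s and latency in ms is already a distance in millimeters.
    return tool_speed_m_per_s * latency_ms


edge_blind_travel = travel_during_latency_mm(1.0, 2.0)    # assumed edge latency
cloud_blind_travel = travel_during_latency_mm(1.0, 60.0)  # assumed cloud round trip

print(f"edge:  tool moves {edge_blind_travel} mm before the decision arrives")
print(f"cloud: tool moves {cloud_blind_travel} mm before the decision arrives")
```

Under these assumptions the arm travels 60 mm on stale information in the cloud case, which is far outside the tolerance of a sub-millimeter assembly task.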

Physical AI Across Industries: Real-World Applications and Impact

Manufacturing and Assembly

High-precision electronics assembly represents one of the most demanding environments for physical AI. Operations involving intricate component placement, cable routing, and quality inspection at speed push the limits of what traditional automation can achieve.

Physical AI systems trained in simulation can now perform dexterous manipulation tasks — handling flexible cables, aligning sub-millimeter components, adapting grip force to material variation — that were previously relegated to human workers. The ability to simulate cable handling physics before deploying on real assembly lines is enabling manufacturers to achieve precision and speed that neither pure automation nor human labor can match independently.

Key applications in manufacturing:

- High-precision component assembly with dexterous dual-arm manipulation

- Adaptive quality inspection that identifies defects across product variations

- Flexible automation that reconfigures for new product lines without reprogramming

- Human-robot collaborative workflows where AI-guided robots assist rather than replace workers

Warehouse and Logistics Automation

The logistics sector faces a compounding challenge: rising order volumes, labor shortages, and increasingly variable SKU catalogs that defeat rigid automation. Physical AI is addressing this through autonomous forklifts, picking robots, and fleet management systems trained in large-scale warehouse digital twins.

Physics-accurate digital twin environments allow logistics operators to simulate entire warehouse configurations — rack layouts, traffic patterns, loading dock schedules — and train autonomous vehicle fleets before a single unit is deployed. This approach dramatically reduces the integration timeline for new facilities and allows rapid reconfiguration when warehouse layouts change.

For large-scale contract logistics operations, this translates to measurable gains in throughput, energy efficiency, and safety incident reduction.

Healthcare and Surgical Robotics

Surgical robotics represents the most safety-critical application of physical AI — and arguably the most consequential. The deployment of AI-driven robotic systems in surgery, diagnostic imaging, and hospital logistics demands an entirely different standard of validation than industrial settings.

Simulation-based training workflows are now enabling surgical robotic systems to be validated in virtual clinical environments before clinical deployment. Robotic surgical platforms are using simulation and AI post-training workflows to prepare systems for urological procedures, while next-generation surgical computing platforms explore delivering mission-critical precision with embedded functional safety hardware.

Physical AI in healthcare is not about replacing surgical expertise. It is about augmenting precision, reducing procedural variability, and extending the reach of specialist capabilities to underserved healthcare settings.

Key Takeaway: In healthcare robotics, the simulation-first approach is not just an efficiency measure — it is a patient safety imperative. Physical AI systems must demonstrate validated performance in thousands of simulated edge cases before they approach a clinical environment.

Construction and Infrastructure

Autonomous construction deployment is emerging as a significant application domain for physical AI. Construction sites are among the most unstructured, variable, and hazardous environments imaginable — precisely the kind of challenge that defeats traditional automation but suits adaptive physical AI systems.

Robotics platforms equipped with physical AI are beginning to automate tasks including site surveying, material handling, structural inspection, and precision assembly. The ability to operate in GPS-degraded environments, navigate uneven terrain, and collaborate safely with human workers makes physical AI a strong fit for construction automation.

Humanoid Robots: From Research Projects to Industrial Deployment

No discussion of physical AI is complete without addressing the rapid maturation of humanoid robots. For years, bipedal robots existed primarily as research demonstrations. The combination of physical AI, advanced simulation training, and edge computing platforms has changed the calculus.

Why humanoids, specifically?

The industrial world is built for human bodies. Tools, workspaces, vehicle interiors, staircases — the entire built environment assumes a bipedal operator with two dexterous hands. Purpose-built robots require costly facility modifications. Humanoid robots can, in principle, operate existing infrastructure without modification.

What Makes Humanoid Deployment Challenging

Deploying humanoids industrially is not simply a matter of scaling up existing robot software. The challenges are fundamental:

- Whole-body coordination: Every movement requires simultaneous optimization of balance, gait, arm motion, and task execution

- Dexterous manipulation: Human-level hand dexterity involves dozens of degrees of freedom with fine-grained force control

- Safe human collaboration: Humanoids must detect and respond to human proximity in real time

- Reliable locomotion: Walking on irregular surfaces, ascending stairs, and recovering from perturbations requires robust learned policies

Foundation models specifically designed for humanoid control — trained on large-scale simulation data and fine-tuned with real-world demonstrations — are now enabling humanoids to succeed at new tasks in new environments at rates more than double what previous vision-language-action models achieved. The performance gap between humanoids and task-specific industrial robots on defined tasks is closing rapidly.

The near-term humanoid deployment roadmap:

- 2025-2026: Early industrial pilots in structured environments (electronics assembly, warehouse picking)

- 2026-2028: Broader deployment in semi-structured settings with AI-guided task adaptation

- 2028-2032: Generalized deployment across diverse industrial and logistics environments

Enabling Every Manufacturer to Automate: Democratizing Physical AI

One of the most important — and least discussed — dimensions of the physical AI revolution is accessibility. Enterprise-scale manufacturers have long had the engineering resources and capital to deploy sophisticated automation. Small and medium-sized manufacturers have not.

Physical AI is changing this equation in two significant ways.

No-Code Robot Deployment

AI platforms that integrate with widely deployed industrial and collaborative robotic systems are enabling manufacturers to extend automation into dynamic, variable applications without writing task-specific code. A manufacturer can deploy a robotic workforce with standard industrial robots by leveraging a shared AI intelligence layer — without dedicated robotics engineers.

This is not a marginal improvement. For the tens of thousands of small and medium manufacturers who have historically been priced out of advanced automation, no-code physical AI deployment represents a fundamental shift in competitive dynamics.

Open-Source Robotics Ecosystems

The physical AI field is also benefiting from deep integration between commercial AI infrastructure and open-source development communities. Connecting millions of robotics developers with the broader AI builder community accelerates the development of shared tools, pre-trained models, and validated deployment patterns.

The result is a growing library of open-source robot policies, simulation assets, and training frameworks that lower the barrier to entry for new robotics companies and research teams.

Key Takeaway: Physical AI is not exclusively an enterprise technology. The combination of no-code deployment platforms and open-source ecosystems is putting industrial-grade robotic intelligence within reach of organizations of all sizes.

Common Mistakes Organizations Make When Adopting Physical AI

Despite the clear opportunity, many organizations stumble in their approach to physical AI adoption. Understanding these failure modes helps avoid costly detours.

Mistake 1: Treating physical AI as a drop-in replacement for traditional automation. Physical AI is not just “better robots.” It requires rethinking workflows, data collection strategies, and validation processes. Organizations that approach it as a direct swap for legacy systems miss its transformative potential.

Mistake 2: Skipping simulation validation. The temptation to move quickly from procurement to deployment is understandable, but physical AI systems that skip thorough simulation validation encounter costly failures in production environments. The simulation-first methodology exists for good reason.

Mistake 3: Neglecting data infrastructure. Physical AI systems learn from data. Organizations that lack structured processes for capturing, labeling, and managing robot interaction data will find their systems plateauing quickly. Data infrastructure is not a secondary concern; it is foundational.

Mistake 4: Underestimating the integration challenge. Physical AI platforms require integration with existing manufacturing execution systems, ERP platforms, and safety frameworks. Organizations that treat this as an IT afterthought, rather than a core engineering workstream, will face delays and reliability issues.

Mistake 5: Deploying without change management. Physical AI changes how workers interact with their environment. Workforce training, role redefinition, and communication about automation timelines are critical to successful adoption, and they are frequently underinvested.
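On the data infrastructure point in Mistake 3, a structured process can start very small: agree on one record format for every robot episode and store it in an append-friendly file. The sketch below shows one possible shape using JSON Lines. The `EpisodeRecord` fields are hypothetical; a real schema would be driven by the sensors and tasks involved.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class EpisodeRecord:
    """One robot task attempt, captured in a consistent shape."""
    robot_id: str
    task: str
    policy_version: str
    success: bool
    duration_s: float
    labels: List[str] = field(default_factory=list)  # human-added annotations


def to_jsonl(records) -> str:
    # One JSON object per line, so new episodes can simply be appended.
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


def from_jsonl(text: str):
    return [EpisodeRecord(**json.loads(line))
            for line in text.splitlines() if line]


episodes = [
    EpisodeRecord("arm-07", "cable_insert", "v1.3", True, 4.2),
    EpisodeRecord("arm-07", "cable_insert", "v1.3", False, 6.8, ["connector_bent"]),
]
serialized = to_jsonl(episodes)
restored = from_jsonl(serialized)
print(serialized)
```

Recording the policy version alongside each outcome is the design choice that matters most here: without it, a drop in success rate cannot be traced back to a specific model update.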

Future Trends in Physical AI: What to Watch Over the Next Three Years

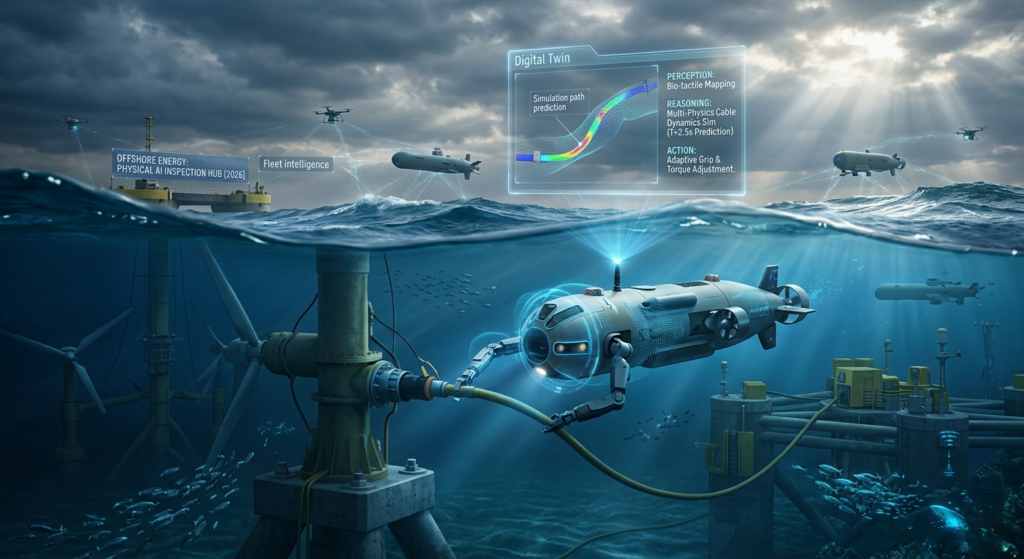

Multi-Physics Simulation

Current simulation platforms model rigid body dynamics well, but real-world manufacturing involves flexible materials, fluids, thermal effects, and complex contact mechanics. Next-generation simulation engines with multiphysics capability — handling deformable objects, complex material interactions, and high-fidelity tactile simulation — will unlock robot deployment in categories currently beyond reach.

Shared Intelligence Across Robot Fleets

As more robots deploy with physical AI, the potential for shared learning becomes significant. Experience gathered by one robot performing a task can, with appropriate architecture, accelerate training for other robots across a fleet or even across organizations. This “fleet intelligence” model could compress skill acquisition timelines dramatically.
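One simple architecture for this kind of sharing is to have each robot tune its policy locally and then merge the results, weighting each robot by how much experience it contributed. This mirrors the weighted-averaging idea used in federated learning. The sketch assumes every robot's policy is a flat list of numbers of the same length, and the function name and values are hypothetical.

```python
def merge_fleet_updates(updates):
    """Combine per-robot parameters into one fleet-wide set.

    updates is a list of (parameters, n_episodes) pairs. Robots that
    contributed more episodes carry proportionally more weight.
    """
    total_episodes = sum(n for _, n in updates)
    if total_episodes == 0:
        raise ValueError("no episodes to merge")
    n_params = len(updates[0][0])
    return [
        sum(params[i] * n for params, n in updates) / total_episodes
        for i in range(n_params)
    ]


merged = merge_fleet_updates([
    ([1.0, 0.0], 100),  # robot A: 100 episodes
    ([3.0, 2.0], 300),  # robot B: 300 episodes
])
print(merged)
```

Sharing parameters rather than raw sensor logs is also one way the cross-organization case could work, since the underlying production data never has to leave the site that generated it.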

Physical AI in Extreme Environments

Deep-sea infrastructure inspection, nuclear facility maintenance, and space-based manufacturing represent frontiers where human presence is hazardous or impossible. Physical AI systems capable of operating in unstructured, extreme environments with minimal human oversight are advancing rapidly and will open entirely new categories of robotic deployment.

Convergence of Humanoid and Specialized Platforms

The current distinction between humanoid robots and purpose-built industrial robots may blur as shared AI foundations — trained on the same world models and simulation frameworks — power increasingly diverse embodiments. The intelligence layer becomes platform-agnostic, deployable across wheeled, bipedal, and custom-form-factor robots.

FAQ: Physical AI and Industrial Robotics

What is the difference between physical AI and traditional industrial automation? Traditional industrial automation executes fixed, pre-programmed tasks with high precision but zero adaptability. Physical AI enables robots to perceive their environment, reason about it, and adapt their behavior — handling variability that would cause traditional systems to fail or require manual reprogramming.

How long does it take to deploy a physical AI robotic system? Deployment timelines vary significantly by complexity, but physical AI platforms with simulation-validated policies and no-code deployment tools are reducing timelines from months to days for many standard industrial tasks. Complex, novel applications may still require extended development and validation cycles.

Do physical AI robots require constant internet connectivity? No. Edge computing platforms embedded in robot controllers allow physical AI inference to run locally in real time. Cloud connectivity is used for training, updates, and fleet management — not for real-time operation.

Is physical AI suitable for small and medium-sized manufacturers? Increasingly, yes. No-code deployment platforms and shared AI intelligence layers are making physical AI accessible to manufacturers who lack large robotics engineering teams. Startup incubation programs and open-source frameworks are further lowering barriers.

What safety standards apply to physical AI in industrial settings? Physical AI systems must comply with the industrial safety standards that apply to their deployment, including standards governing collaborative robot operation, ISO 10218 for industrial robots, IEC 62061 for the functional safety of machinery control systems, and any sector-specific requirements. Simulation-based validation is increasingly recognized as a component of safety case development, though regulatory frameworks are still evolving.

How does physical AI affect manufacturing employment? Physical AI automates repetitive, hazardous, and precision tasks — but also creates demand for new roles in robot operations, AI training, simulation engineering, and system integration. The net employment impact varies by sector and deployment approach, with evidence suggesting that automation-intensive manufacturers often grow their workforces in higher-skill roles over time.

What is a world model in robotics? A world model is an AI system that learns to predict how the physical environment responds to robot actions. Rather than relying purely on reactive control, a robot with a world model can simulate the probable consequences of candidate actions and choose the one most likely to achieve its goal — enabling more robust performance in variable environments.

Conclusion: Preparing Your Organization for the Physical AI Era

The convergence of generalist robot intelligence, high-fidelity simulation, and accessible deployment platforms is compressing a decade of gradual robotics progress into a few transformative years. Physical AI is not an incremental upgrade to industrial automation — it is a foundational shift in what machines can do and who can deploy them.

The organizations best positioned to benefit are those that act now: investing in simulation infrastructure, building data pipelines for robot learning, and developing the internal expertise to evaluate, deploy, and iterate on physical AI systems.

Those that treat this as a future concern will find themselves competing with peers who have already built AI-fluent robotic operations — and discovering that catching up is considerably more expensive than leading.