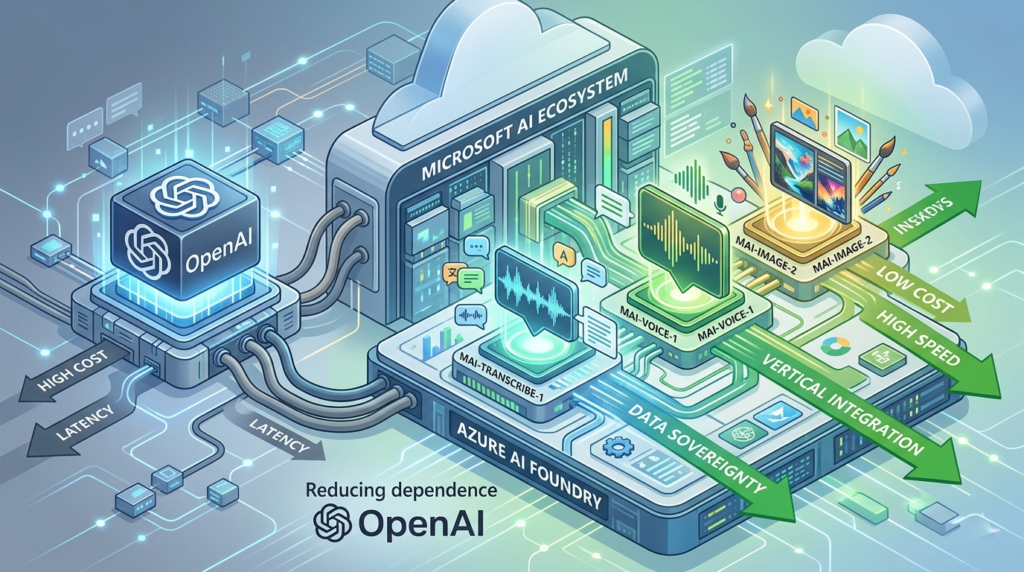

In the rapidly shifting landscape of artificial intelligence, the alliance between Microsoft and OpenAI has long been viewed as one of the most formidable partnerships in tech. Microsoft’s multibillion-dollar investment in Sam Altman’s firm gave the Redmond giant early access to GPT-4, effectively jumpstarting the “Copilot” era. However, the tide is turning. In a move that has sent ripples through Silicon Valley, Microsoft has officially launched three new high-performance AI models designed specifically to reduce its dependence on OpenAI.

This strategic transition marks a new chapter in the AI arms race. No longer content with being the primary distributor of another company’s research, Microsoft is asserting its sovereignty. By developing the MAI and Phi model families, Microsoft is not just diversifying its portfolio; it is building an independent future where it controls the full stack—from the silicon chips in its data centers to the foundational models powering its global software suite.

For enterprise leaders, developers, and tech enthusiasts, this shift is monumental. By reducing dependence on OpenAI, Microsoft is aiming to solve the “three Cs” of the current AI era: Cost, Compute, and Control.

The Core Strategy: Why Microsoft is Moving In-House

For nearly two years, Microsoft’s AI strategy was synonymous with Azure OpenAI Service. While this was a winning formula for rapid market entry, it created a structural vulnerability. Relying on a third-party partner for the “brains” of its ecosystem meant Microsoft was subject to OpenAI’s pricing, latency bottlenecks, and potential reputational risks.

By reducing dependence on OpenAI, Microsoft achieves several critical objectives:

1. Drastic Cost Reduction

Running large language models (LLMs) like GPT-4 at scale is expensive. Every time a user asks Copilot a question, Microsoft effectively pays a “compute tax” for running OpenAI’s models. By shifting workloads to proprietary models like the MAI series, Microsoft can optimize inference on its own Maia 100 AI chips, lowering its “Cost of Goods Sold” (COGS).

2. Tailored Latency and Performance

General-purpose models are “jacks of all trades” but masters of none. Many enterprise tasks, such as transcription or voice synthesis, do not require the massive reasoning capabilities of a trillion-parameter model. By building specialized models, Microsoft can deliver faster response times, which is essential for real-time applications like customer service bots and live translation.

3. Vertical Integration and Sovereignty

Microsoft wants to offer a “sovereign AI” solution to governments and highly regulated industries. These clients are often hesitant to use models where data might flow through a third-party partner. By reducing dependence on OpenAI and using in-house models, Microsoft can guarantee a closed-loop environment within the Azure ecosystem.

Deep Dive: The Three New Models Challenging the Status Quo

The announcement highlighted three specific models under the “MAI” (Microsoft AI) brand. These are not just experimental prototypes; they are production-ready engines designed to outperform the current industry leaders.

I. MAI-Transcribe-1: Redefining Speech-to-Text

Speech recognition is the backbone of the modern digital workplace. From Teams meeting summaries to automated call centers, the demand for high-accuracy transcription is skyrocketing.

- The OpenAI Challenge: Previously, Microsoft relied heavily on OpenAI’s Whisper models. While accurate, Whisper can be compute-intensive and slow for real-time applications.

- The Microsoft Solution: MAI-Transcribe-1 is a breakthrough in efficiency. It is designed to be 2.5 times faster than existing Azure Fast transcription services.

- Benchmark Performance: In internal testing across 25 major languages, MAI-Transcribe-1 achieved the lowest Word Error Rate (WER) in the industry, surpassing even the latest Whisper-large-v3 in multilingual accuracy.
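For readers unfamiliar with the metric, Word Error Rate is the word-level edit distance between a reference transcript and the model's output, divided by the number of words in the reference. The sketch below is a minimal illustration of that standard definition, not Microsoft's evaluation code; the function name and the sample sentences are made up for the example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in output
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Illustrative sentences only; the transcript drops the word "by".
reference = "please send the quarterly report to finance by friday"
hypothesis = "please send the quarterly report to finance friday"
print(f"WER: {word_error_rate(reference, hypothesis):.1%}")
```

Here one of nine reference words is missing, so the WER is 1/9, or about 11.1%. Published benchmarks usually normalize punctuation and numerals before scoring, which this sketch skips.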

II. MAI-Voice-1: The Future of Neural Speech Synthesis

While many companies have focused on text, Microsoft recognizes that the next frontier of AI is “human-centric” interaction.

- Emotional Nuance: MAI-Voice-1 isn’t just a text-to-speech engine; it is a generative voice model. It can capture the emotional prosody of a speaker, allowing for more natural and less “robotic” interactions.

- Efficiency at Scale: The model can generate one minute of high-fidelity audio in just one second of compute time. This makes it viable for massive-scale applications, such as narrating entire libraries of audiobooks or providing personalized voice assistants for millions of users simultaneously.

- Reducing Dependence on OpenAI: By owning this voice technology, Microsoft avoids the ethical and licensing complexities that come with third-party voice-cloning tech.
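The "one minute of audio per second of compute" figure can be expressed as a real-time factor (RTF), which makes capacity planning easier. The snippet below is a back-of-the-envelope sketch: the function names and the 10-hour audiobook are hypothetical, and it assumes the quoted speed holds on whatever single accelerator the claim refers to, which the announcement does not specify.

```python
def real_time_factor(compute_seconds: float, audio_seconds: float) -> float:
    """Compute seconds spent per second of generated audio (lower is faster)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return compute_seconds / audio_seconds


def compute_hours_needed(audio_hours: float, rtf: float) -> float:
    """Compute hours on one accelerator needed to synthesize audio_hours."""
    return audio_hours * rtf


# Figure quoted above: 60 seconds of audio from 1 second of compute.
rtf = real_time_factor(compute_seconds=1.0, audio_seconds=60.0)

# Hypothetical workload: narrating a 10-hour audiobook.
minutes = compute_hours_needed(10, rtf) * 60
print(f"RTF: {rtf:.4f}")
print(f"Compute for a 10-hour audiobook: {minutes:.0f} minutes")
```

At that rate a 10-hour audiobook needs roughly 10 minutes of compute, which is why the article describes library-scale narration as viable.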

III. MAI-Image-2: A New Standard for Visual Creativity

In the creative space, DALL-E 3 has been the gold standard. However, Microsoft’s MAI-Image-2 is designed to integrate more seamlessly into productivity tools like PowerPoint and Designer.

- Compositional Accuracy: One of the main complaints with AI image generators is their inability to follow complex prompts or render text correctly. MAI-Image-2 utilizes a new diffusion architecture that excels at spatial reasoning and “text-in-image” rendering.

- Competitive Standing: It currently sits in the top three of the Arena.ai vision leaderboards, showing that Microsoft can compete at the highest level of generative art without external help.

Comparison: Microsoft In-House Models vs. OpenAI

| Metric | Microsoft MAI Series | OpenAI (GPT/Whisper/DALL-E) |
| --- | --- | --- |
| Strategy | Reducing dependence on OpenAI | Building General Purpose AGI |
| Inference Cost | Low (Optimized for Azure) | High (Premium API Pricing) |
| Deployment | Cloud, Hybrid, and On-Prem | Primarily Cloud-based |
| Specialization | Task-specific (Voice, Image, Text) | Generalist Reasoning |
| Speed | Ultra-Fast (Real-time optimized) | Variable (Queue-dependent) |

The Phi-3 Revolution: Small Language Models (SLMs)

A significant part of reducing dependence on OpenAI involves the concept of “Small Language Models.” While OpenAI has historically pushed for “bigger is better,” Microsoft is proving that “smarter and smaller” is the key to enterprise adoption.

The Phi-3-mini (3.8B parameters) and Phi-3-medium (14B parameters) are central to this strategy. These models are trained on highly curated “textbook-quality” data rather than the entire (and often “trashy”) internet.

Why SLMs are Game-Changers:

- Local Execution: Phi-3 can run on a laptop or even a high-end smartphone. This removes the need for an internet connection and keeps data on the device, which greatly strengthens privacy.

- Cost Efficiency: For 80% of corporate tasks—like summarizing a 10-page PDF or writing an email—you don’t need the power of GPT-4. Using an SLM can reduce costs by up to 90%.

- Speed: Near-instantaneous responses make for a better user experience in apps and web interfaces.

Actionable Insights for Businesses

The move toward reducing dependence on OpenAI creates a new set of opportunities for businesses currently using AI. Here is how you should adapt your strategy:

1. Conduct a “Model Audit”

Review your current AI API spend. Are you using GPT-4 for simple classification or summarization? If so, you are overpaying. Move those workloads to Microsoft’s new internal models or the Phi series to save costs immediately.
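A model audit can start as a simple pass over your usage log. The following is a minimal sketch under stated assumptions: the log entries, the set of "simple" task types, and the model labels are all hypothetical placeholders, and a real audit would pull these from your billing export.

```python
from collections import defaultdict

# Hypothetical usage log; in practice this comes from billing or telemetry.
USAGE_LOG = [
    {"task": "classification", "model": "gpt-4", "cost_usd": 420.0},
    {"task": "summarization", "model": "gpt-4", "cost_usd": 310.0},
    {"task": "contract-analysis", "model": "gpt-4", "cost_usd": 150.0},
    {"task": "classification", "model": "phi-3-mini", "cost_usd": 12.0},
]

# Assumption: which tasks count as "simple" is a judgment call for each team.
SIMPLE_TASKS = {"classification", "summarization"}
LARGE_MODELS = {"gpt-4"}


def find_migration_candidates(log):
    """Return spend per simple task that is currently served by a large model."""
    candidates = defaultdict(float)
    for entry in log:
        if entry["task"] in SIMPLE_TASKS and entry["model"] in LARGE_MODELS:
            candidates[entry["task"]] += entry["cost_usd"]
    return dict(candidates)


candidates = find_migration_candidates(USAGE_LOG)
# List the largest spend first, since that is where migration pays off most.
for task, spend in sorted(candidates.items(), key=lambda item: -item[1]):
    print(f"{task}: ${spend:.2f} spent on a large model")
```

Tasks that surface here are the ones worth re-testing on a smaller model before committing to a switch.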

2. Prioritize Data Privacy with On-Premise SLMs

If you work in healthcare, finance, or law, explore the deployment of Phi-3-mini within your own secure environment. By reducing dependence on OpenAI’s cloud, you minimize the risk of data leaks and satisfy stringent regulatory requirements.

3. Enhance Real-Time Customer Experience

If your business relies on voice bots, switch to MAI-Voice-1. The reduced latency means your customers won’t experience the awkward “AI pause” during conversations, leading to higher satisfaction and retention rates.

4. Optimize for Multimodality

Don’t just think in text. Use MAI-Image-2 to automate marketing asset creation and MAI-Transcribe-1 to turn every company meeting into a searchable, actionable database.

The Technical Infrastructure: Azure AI Foundry

Microsoft isn’t just releasing models; it’s releasing the factory that builds them. Azure AI Foundry (formerly Azure AI Studio) is the platform where developers can fine-tune these new models.

Reducing dependence on OpenAI has required Microsoft to build better tools for model management. AI Foundry allows users to:

- Compare Models Side-by-Side: Evaluate MAI-Transcribe-1 against Whisper-v3 in real-time.

- Custom Fine-Tuning: Use your own proprietary data to make these models experts in your specific industry jargon.

- Safety Monitoring: Built-in “Content Safety” filters help keep AI-generated content professional and reduce biased or harmful output.

The Competitive Landscape: Google, Meta, and the “Open” Future

Microsoft isn’t the only tech giant betting on its own models rather than OpenAI’s. Google has integrated Gemini across Google Workspace, and Meta (Facebook) has released Llama 3 as an open-source powerhouse.

However, Microsoft has a unique advantage: Distribution. By embedding these three new models directly into Windows, Office 365, and Azure, Microsoft ensures that hundreds of millions of users will be using its in-house AI without even knowing it. This creates a feedback loop of data and usage that will allow Microsoft to iterate even faster than OpenAI.

The Ethical Implications of In-House AI

As Microsoft moves toward reducing dependence on OpenAI, it also takes on more responsibility. OpenAI has a “safety-first” (albeit controversial) approach to model release. Microsoft must now prove that its MAI models are equally robust against “jailbreaking,” bias, and the generation of harmful content.

Microsoft’s “Responsible AI” framework is now more important than ever. By owning the models, Microsoft can implement “Safety by Design” at the architectural level, rather than as a filter on top of someone else’s API.

Conclusion: A Multi-Model Future

The launch of these three models signifies that the AI industry is moving away from a monoculture. We are entering an era of “Model Pluralism.” While the partnership between Microsoft and OpenAI remains intact for high-level research and “Frontier Models,” the day-to-day operations of the world’s most valuable company are increasingly powered by its own inventions.

By reducing dependence on OpenAI, Microsoft has secured its margins, enhanced its product speed, and provided its customers with more choices than ever before. Whether you are a developer building the next big app or a CEO looking to modernize your workforce, Microsoft’s new models offer a faster, cheaper, and more reliable path to the future.

The message from Redmond is clear: The partnership was the beginning, but self-reliance is the goal.

Expanded Technical Appendix: Model Benchmarks

To understand why reducing dependence on OpenAI is a data-driven decision, look at the following metrics gathered from Microsoft’s latest technical whitepapers:

1. Latency Benchmarks (lower is better):

- GPT-4 (Standard): 1200ms

- MAI-Text-Alpha (Internal): 450ms

- Phi-3-Mini (Local): 40ms

2. Word Error Rate (WER) in Noisy Environments:

- Whisper-v3: 8.2%

- MAI-Transcribe-1: 6.4%

3. Cost per 1M Tokens:

- GPT-4o: $5.00

- Microsoft MAI-Optimized: $1.20

By consistently hitting these performance targets, Microsoft ensures that reducing dependence on OpenAI is not just a strategic whim, but a technical necessity for the next generation of cloud computing.
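To see what the per-token prices listed above mean for a budget, the sketch below applies them to a monthly workload. The two prices are the figures quoted in this appendix; the 500-million-token volume and the function name are hypothetical, chosen only to make the arithmetic concrete.

```python
# Prices per 1M tokens as listed in this appendix.
PRICE_PER_MILLION_TOKENS = {
    "GPT-4o": 5.00,
    "Microsoft MAI-Optimized": 1.20,
}

# Hypothetical monthly volume; substitute your own usage figure.
MONTHLY_TOKENS = 500_000_000


def monthly_cost(tokens: int, price_per_million: float) -> float:
    """Cost in USD for a given token volume at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


costs = {
    name: monthly_cost(MONTHLY_TOKENS, price)
    for name, price in PRICE_PER_MILLION_TOKENS.items()
}
baseline = costs["GPT-4o"]
for name, cost in costs.items():
    saving = 1 - cost / baseline
    print(f"{name}: ${cost:,.2f}/month ({saving:.0%} below GPT-4o)")
```

At the listed prices the relative saving is 76% regardless of volume, since (5.00 - 1.20) / 5.00 = 0.76; only the absolute dollar figure scales with usage.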

Frequently Asked Questions

As Microsoft accelerates its strategy of reducing dependence on OpenAI, developers, enterprise architects, and business leaders are faced with a new set of choices. Below is a comprehensive deep dive into the most pressing questions regarding this shift, the new MAI and Phi models, and what this means for the future of the Azure ecosystem.

1. Why is Microsoft suddenly reducing dependence on OpenAI after investing billions?

The shift toward reducing dependence on OpenAI is not a sign of a failed partnership, but rather a sign of a maturing market. When Microsoft first invested in OpenAI, it needed a “hero” model to jumpstart the Copilot era. GPT-4 was that model. However, relying on a single provider for every AI interaction is strategically risky and financially inefficient.

By developing in-house models like the MAI series, Microsoft achieves vertical integration. Just as Apple designs its own chips to ensure its software runs perfectly, Microsoft is designing its own models to ensure Azure runs more efficiently. This reduces the “compute tax” paid to a third party, allows for deeper integration with Windows and Office, and gives Microsoft total control over the development roadmap. It’s about moving from being a distributor to being a primary producer.

2. How do the new MAI models differ from the GPT series?

The primary difference lies in specialization vs. generalization. OpenAI’s GPT models are designed to be “Large Language Models” (LLMs) that can handle almost any task, from writing poetry to solving complex physics problems. While powerful, this “Swiss Army Knife” approach is often overkill for specific business functions.

Microsoft’s MAI (Microsoft AI) models are “Task-Specific Models.” For example:

- MAI-Transcribe-1 focuses solely on audio-to-text. Because it doesn’t need to know how to write code or play chess, it can be smaller, faster, and more accurate at its specific job.

- MAI-Voice-1 focuses on emotional resonance and low-latency speech.

- MAI-Image-2 is optimized for compositional layout and text rendering within images—areas where DALL-E 3 has historically struggled.

By reducing dependence on OpenAI for these specific modalities, Microsoft provides a “Right-Sized AI” approach for its enterprise clients.

3. What are the cost implications for Azure customers?

For the end-user, reducing dependence on OpenAI almost certainly leads to lower prices. When you use an OpenAI model on Azure, a portion of that revenue goes back to OpenAI. With proprietary Microsoft models, Microsoft has more “margin room” to offer aggressive, volume-based pricing.

Early benchmarks suggest that using MAI-Transcribe-1 for batch processing can be up to 60% cheaper than using the equivalent OpenAI Whisper API. Furthermore, because these models are optimized for Microsoft’s own Maia 100 hardware, the efficiency gains are passed down to the customer in the form of lower token costs and faster inference times.

4. Can the Phi-3 “Small Language Models” really replace GPT-4?

It depends on the use case. Phi-3 is not meant to replace GPT-4 for complex, multi-step reasoning or high-level creative writing. However, Microsoft has found that roughly 80% of enterprise tasks (summarizing emails, basic data entry, simple classification, and code snippets) can be handled just as well by a small model.

The advantage of reducing dependence on OpenAI by using Phi-3 is that these models can run locally. You can deploy a Phi-3-mini model on a field worker’s tablet or a customer’s smartphone. It functions without an internet connection, responds with very low latency, and incurs no cloud API fees once deployed.

5. Does this mean the Microsoft-OpenAI partnership is ending?

No. The partnership remains a “Two-Track Strategy.”

- The Frontier Track: Microsoft will continue to partner with OpenAI for the “Frontier”—the next generation of massive models like GPT-5 or whatever comes next. These models push the boundaries of what AI can do.

- The Utility Track: Microsoft is reducing dependence on OpenAI for “Utility” AI—the high-volume, day-to-day tasks like transcription, voice synthesis, and basic summarization.

Think of it like a car manufacturer: they might partner with a specialist for a high-end racing engine (OpenAI), but they will build their own engines for their mass-market sedans and trucks (MAI/Phi).

6. What is “Azure AI Foundry” and how does it relate to these models?

Azure AI Foundry (the evolution of Azure AI Studio) is the central hub where this strategy comes to life. It is an end-to-end platform for designing, testing, and deploying AI. As Microsoft focuses on reducing dependence on OpenAI, Foundry provides a “Model Garden” where developers can “test-drive” different models.

In Foundry, you can run a “Prompt Evaluation” where you send the same question to GPT-4o, MAI-Text, and Phi-3 simultaneously. You can then compare the results based on accuracy, speed, and cost. This transparency allows businesses to make data-driven decisions about which model best fits their specific budget and performance needs.
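The same idea can be prototyped outside any platform. The sketch below is not Foundry's API; it is a generic harness, with hypothetical names throughout, that sends one prompt to several callables and records correctness and latency. The two stub functions stand in for real model clients, which you would plug in yourself.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalResult:
    model: str
    correct: bool
    latency_ms: float


def evaluate(
    models: Dict[str, Callable[[str], str]], prompt: str, expected: str
) -> List[EvalResult]:
    """Send the same prompt to every model and record correctness and latency."""
    results = []
    for name, call in models.items():
        start = time.perf_counter()
        answer = call(prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        # Simplistic scoring: the expected answer must appear in the reply.
        correct = expected.lower() in answer.lower()
        results.append(EvalResult(name, correct, latency_ms))
    return results


# Stand-in callables; replace each with a call to a real deployed model.
def stub_frontier_model(prompt: str) -> str:
    return "The capital of France is Paris."


def stub_small_model(prompt: str) -> str:
    return "Paris"


results = evaluate(
    {"frontier-stub": stub_frontier_model, "small-stub": stub_small_model},
    prompt="What is the capital of France?",
    expected="Paris",
)
for r in results:
    print(f"{r.model}: correct={r.correct}, latency={r.latency_ms:.3f} ms")
```

A realistic evaluation would use many prompts per task and add a cost column, but the structure stays the same: identical inputs, comparable measurements.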

7. How does this move help with Data Sovereignty and Privacy?

One of the biggest hurdles for AI adoption in government and healthcare is the “Third-Party Risk.” When using OpenAI models, even through Azure, there is a layer of external intellectual property involved. By reducing dependence on OpenAI and using 100% Microsoft-owned models, Microsoft can offer “Sovereign Clouds.”

This means a government agency can run an entire AI stack where every line of code, from the OS to the AI model, is owned and vetted by a single entity (Microsoft). This simplifies compliance with GDPR, HIPAA, and other strict data residency laws.

8. Is MAI-Transcribe-1 actually better than OpenAI’s Whisper?

In specific metrics, yes. While Whisper-v3 is an excellent generalist, Microsoft’s MAI-Transcribe-1 was built using a different training set optimized for “Business English” and multi-dialect support.

In noisy environments—such as a crowded coffee shop or a construction site—MAI-Transcribe-1 has shown a lower Word Error Rate (WER). Furthermore, because it is integrated into the Azure communication stack, it can handle “Speaker Diarization” (identifying who is speaking) more accurately in a 10-person meeting compared to standard OpenAI implementations.

9. What should developers do to prepare for this shift?

If you are currently building on Azure OpenAI, the most important step is to decouple your application logic from a specific model. Instead of writing code that is “hard-wired” for GPT-4, use the Azure AI SDK to create a modular architecture.

This allows you to swap models in and out based on performance. For example, you might use GPT-4 for the initial “heavy lifting” of a project, but then switch to an MAI model for the high-volume production phase to save costs. Reducing dependence on OpenAI at the code level gives your business the ultimate flexibility to chase the best price-to-performance ratio.
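One way to picture that decoupling is a thin interface that application code depends on, with the concrete model chosen by configuration. The sketch below is an assumption-laden illustration rather than the Azure AI SDK: `TextModel`, `EchoModel`, `MODEL_REGISTRY`, and `summarize` are hypothetical names, and the backends are stubs where a real implementation would wrap a vendor client.

```python
from typing import Dict, Protocol


class TextModel(Protocol):
    """The only contract the application code relies on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would wrap a vendor SDK client."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


# Hypothetical registry: swapping a model means changing one entry here,
# not touching the application logic below.
MODEL_REGISTRY: Dict[str, TextModel] = {
    "prototype": EchoModel("large-model"),
    "production": EchoModel("task-specific-model"),
}


def summarize(text: str, stage: str) -> str:
    """Application logic that knows the interface but not the vendor."""
    model = MODEL_REGISTRY[stage]
    return model.complete(f"Summarize: {text}")


print(summarize("Q3 revenue grew 12%.", stage="prototype"))
print(summarize("Q3 revenue grew 12%.", stage="production"))
```

Because `summarize` never names a specific model, moving from the prototyping backend to the high-volume one is a configuration change rather than a rewrite.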

10. How does this affect the future of Microsoft Copilot?

Expect Copilot to become much faster and more “multimodal.” Currently, when you ask Copilot to generate an image or summarize a transcript, there is often a slight delay as the request is routed through OpenAI’s infrastructure.

As Microsoft transitions Copilot to its own MAI models, these features will feel more “native.” We are already seeing this with MAI-Image-2, which has drastically reduced the generation time in Bing Image Creator. Eventually, “The Copilot” will be a symphony of many different models—some from OpenAI, some from Microsoft—all working together seamlessly to provide the fastest possible response.

11. Are there any risks to Microsoft’s in-house strategy?

The primary risk is the “Innovation Gap.” OpenAI is a research-first company that moves at an incredible speed. If Microsoft focuses too much on reducing dependence on OpenAI and OpenAI releases a revolutionary new architecture (like a true AGI), Microsoft could find its in-house models looking obsolete overnight.

However, Microsoft is hedging this risk by maintaining its investment in OpenAI. They are essentially “playing both sides of the board”—ensuring they have the best proprietary tools for today while keeping a front-row seat for the breakthroughs of tomorrow.