In 2026, Artificial Intelligence has moved far beyond being a simple “answer engine.” Large Language Models (LLMs) have become active conversational partners in the boardroom, often taking the lead by asking the questions that shape our strategic decisions. This level of integration promises efficiency, but a study by Arnaud Chevallier, Frédéric Dalsace, and José Parra-Moyano highlights the risks of letting AI direct conversations.

As leaders, we often focus on the quality of AI’s answers, but we frequently overlook the bias inherent in the questions it chooses to ask. When we hand over the “steering wheel” of a dialogue to an algorithm, we inadvertently surrender control over the direction of our thinking.

The Shift from Answer Engine to Conversational Partner

The modern executive no longer just “prompts” an AI; they collaborate with it. This shift has introduced a subtle but profound change in organizational dynamics. By determining which questions are worth asking, AI systems prioritize certain types of information while completely ignoring others.

Research comparing over 1,600 human executives with 13 leading AI models reveals a startling disparity in “question mixes.” Humans and machines don’t just think differently—they inquire differently. This is where the risks of letting AI direct conversations become most apparent, as the machine’s “blind spots” quickly become the organization’s blind spots.

Understanding the “Question Gap”

To understand why this matters, we must look at the five types of strategic questions identified by the HBR researchers:

- Investigative: Clarifying the facts and current state.

- Speculative: Exploring “what if” scenarios and creative possibilities.

- Productive: Focusing on execution—the “how” and “when.”

- Interpretive: Synthesizing meaning—the “so what?”

- Subjective: Probing the unsaid—emotions, ethics, and stakeholder concerns.

The study found that AI models are heavily skewed toward interpretive analysis. They are excellent at synthesizing data and offering “sense-making” perspectives. However, they are significantly weaker in productive and subjective areas. Because AI lacks a physical body and a personal stake in the outcome, it often fails to ask the “messy” questions about human emotion, office politics, or the granular difficulties of execution.

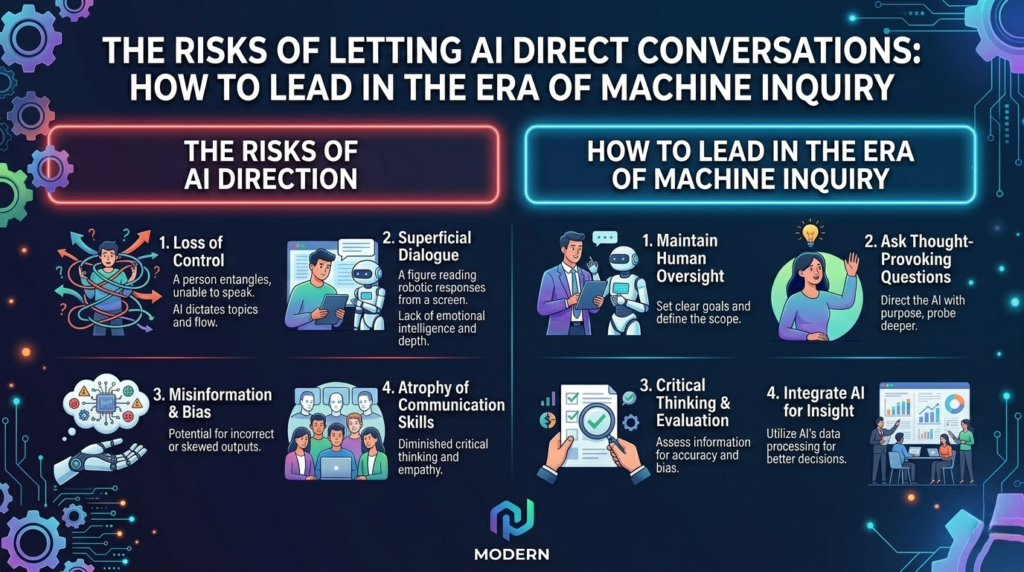

3 Major Dangers of Algorithmic Inquiry

1. The Erosion of Human Judgment

When a leader relies on an AI to facilitate a brainstorming session or a strategy meeting, the AI’s questioning logic begins to frame the reality of the problem. If the AI doesn’t ask about the ethical implications of a move, the team might forget to discuss them. One of the primary risks of letting AI direct conversations is that it narrows our field of vision to only what the algorithm can process.

2. Implementation Blindness

AI is “all strategy and no soul.” Because models underweight productive questions, they rarely challenge a team on whether it actually has the bandwidth, the culture, or the technical infrastructure to pull off a plan. If you let the AI direct the flow, you may reach consensus on a brilliant strategy that is impossible to execute.

3. The Illusion of Objectivity

We often view AI as an objective third party. In reality, every model has its own “personality” and inquiry style. Some models are more aggressive; others are more cautious. If you aren’t aware of the risks of letting AI direct conversations, you might mistake a machine’s programmed bias for an objective strategic direction.

How to Mitigate the Risks of Letting AI Direct Conversations

Leading effectively in 2026 requires a new kind of “AI literacy.” It’s not just about knowing how to code or prompt; it’s about knowing when to take back the microphone.

- Audit the Question Mix: When using an AI partner, actively look for what is missing. Is the machine ignoring the “how” (Productive) or the “who” (Subjective)? If so, interject with your own human-centric questions.

- Compare Multiple Models: Don’t rely on a single LLM to direct your strategy. Different models will ask different questions. By rotating tools, you can identify the gaps in each one’s logic.

- Retain Final Authority: The AI should be a consultant, never the chairperson. Always ensure that the final decision-making process is insulated from the AI’s conversational framing.

- Focus on the “Unsaid”: AI cannot read the room. It cannot sense the tension between two executives or the morale of a tired department. To counter the risks of letting AI direct conversations, leaders must double down on empathy and social intuition—the two things AI cannot replicate.
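
One way to make the “Audit the Question Mix” step concrete is to tally the AI’s questions by type and see which types barely appear. The sketch below is a minimal illustration in Python, not a method taken from the study. It assumes a person has already labeled each question the AI asked with one of the five types; the function names (`question_mix`, `underrepresented`), the sample labels, and the 15% cutoff are all hypothetical.

```python
from collections import Counter

# The five question types named in the article.
QUESTION_TYPES = (
    "investigative", "speculative", "productive", "interpretive", "subjective",
)


def question_mix(labels):
    """Return each question type's share of the labeled questions (0.0 to 1.0)."""
    counts = Counter(labels)
    unknown = set(counts) - set(QUESTION_TYPES)
    if unknown:
        raise ValueError(f"unknown question types: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return {t: 0.0 for t in QUESTION_TYPES}
    return {t: counts[t] / total for t in QUESTION_TYPES}


def underrepresented(mix, threshold=0.10):
    """List the types whose share falls below the threshold.

    The threshold is an arbitrary, illustrative cutoff, not a research finding.
    """
    return [t for t in QUESTION_TYPES if mix[t] < threshold]


# Hypothetical labels a person assigned to ten questions an AI asked in one session.
session_labels = [
    "interpretive", "interpretive", "investigative", "interpretive",
    "speculative", "interpretive", "investigative", "interpretive",
    "speculative", "productive",
]

mix = question_mix(session_labels)
gaps = underrepresented(mix, threshold=0.15)
print(gaps)  # the question types a human should raise
```

The labeling itself stays a human judgment call in this sketch. The tally only makes the gaps visible, so a leader knows which kinds of questions to bring into the room.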

The Future of Human-AI Collaboration

The goal isn’t to banish AI from our conversations but to govern its role within them. We must treat AI’s questions as inputs, not instructions. When we are aware of the risks of letting AI direct conversations, we can use these tools to broaden our horizons without losing our way.

In the end, the most powerful tool in any boardroom isn’t the most advanced LLM—it’s the human ability to ask the “wrong” question at the right time. The “messy,” “emotional,” and “difficult” inquiries are often where the real breakthroughs happen.

As we navigate this new era, remember: the person who asks the questions controls the room. Don’t let that person be a machine. Stay vigilant, and technology will serve your vision rather than dictate it.

Conclusion

The integration of AI into our professional lives is inevitable, but its dominance is not. By understanding the risks of letting AI direct conversations, leaders can maintain their strategic edge. Use the machine to synthesize, use it to speculate, but never let it decide which parts of the human experience are worth talking about.

Stay curious, stay human, and always be the one to ask the final, most important question.