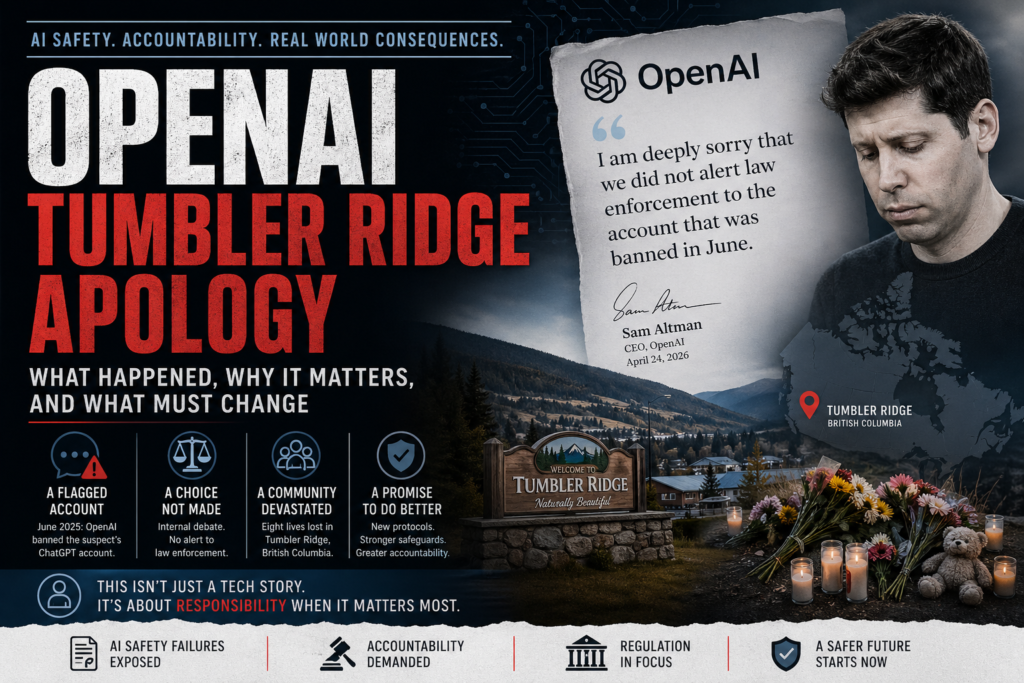

When eight people were killed in a mass shooting in Tumbler Ridge, Canada, the world was shaken. What made the tragedy even harder to process was the revelation that OpenAI had flagged the suspected shooter’s ChatGPT account months before the attack — and chose not to alert law enforcement. The OpenAI Tumbler Ridge apology issued by CEO Sam Altman in April 2026 is now one of the most consequential moments in the short history of AI safety governance. Here is everything you need to know about what happened, why it matters, and what must change.

What Happened? The Tumbler Ridge Shooting and OpenAI’s Role

In June 2025, OpenAI banned an account belonging to Jesse Van Rootselaar, an 18-year-old later identified by police as the suspect in the Tumbler Ridge mass shooting that killed eight people. The account had been flagged because the user described scenarios involving gun violence in ChatGPT conversations.

OpenAI’s staff recognized the warning signs. Internal discussions took place about whether to contact Canadian authorities. Ultimately, the company decided against it, a decision that would come under intense public scrutiny months later, after the shooting occurred. OpenAI reached out to Canadian law enforcement only after the attack.

The Wall Street Journal broke the story, reporting that OpenAI employees had raised alarms about the account well in advance of the tragedy. The revelation triggered immediate outrage from the public, government officials, and the broader tech policy community.

The OpenAI Tumbler Ridge Apology: What Sam Altman Said

Sam Altman’s formal apology was first published in the local newspaper Tumbler RidgeLines on April 24, 2026, before being covered widely by international media.

In his letter, Altman acknowledged direct conversations with Tumbler Ridge Mayor Darryl Krakowka and British Columbia Premier David Eby. All three agreed that a public apology was necessary, though Altman noted that “time was also needed to respect the community as you grieved.”

The key line from the OpenAI Tumbler Ridge apology was unambiguous: “I am deeply sorry that we did not alert law enforcement to the account that was banned in June.” Altman added that while words are insufficient to address irreversible loss, an apology was necessary to “recognize the harm” the community suffered.

Premier Eby responded on social media platform X, acknowledging the apology as necessary but calling it “grossly insufficient for the devastation done to the families of Tumbler Ridge.”

The OpenAI Tumbler Ridge apology represents a rare moment where a major AI company has formally accepted moral responsibility for a failure in its safety systems — not a product bug, but a human decision to withhold potentially life-saving information.

Why Did OpenAI Not Alert Police? The Decision-Making Breakdown

This is the question at the center of the controversy, and it does not have a simple answer. Understanding it requires looking at the internal tensions AI companies face when users disclose concerning information.

The Core Tension: Privacy vs. Public Safety

AI companies like OpenAI operate under strict data privacy obligations. When a user interacts with ChatGPT, there is a reasonable expectation that those conversations are private. Proactively sharing user data with law enforcement — even when content is alarming — raises serious legal and ethical questions:

- Legal exposure: Without a formal subpoena or legal framework, sharing user data may violate privacy laws.

- False positives: Violent content in AI chats is not rare. Users explore dark themes in fiction, process trauma, or engage in hypotheticals. Most do not go on to commit violence.

- Chilling effects: If users believe AI platforms monitor and report their conversations, they may stop using them for legitimate therapeutic or creative purposes.

These are real considerations. But they do not fully explain why OpenAI’s internal debate resulted in silence — especially when the account was banned precisely because the content crossed a defined line.

What Tipped the Scale Against Reporting

According to reporting by the Wall Street Journal, OpenAI employees genuinely debated the right course of action. The decision not to contact authorities appears to have reflected both a lack of clear internal protocol for these situations and an overly cautious interpretation of privacy obligations.

This is the institutional failure at the heart of the OpenAI Tumbler Ridge apology. The problem was not that no one noticed the threat — it was that there was no established, mandatory process for escalating it.

ChatGPT, the Banned Account, and What Came Next: A Timeline

Understanding the sequence of events puts the OpenAI Tumbler Ridge apology in clearer context.

| Date | Event |
|---|---|
| June 2025 | OpenAI flags and bans Jesse Van Rootselaar’s ChatGPT account for content describing gun violence scenarios |
| June–Late 2025 | OpenAI staff debate internally whether to alert police; ultimately decide against it |
| Early 2026 | Mass shooting occurs in Tumbler Ridge, British Columbia; eight people killed |
| February 2026 | Wall Street Journal reports that OpenAI had banned the suspect’s account months earlier |
| February–April 2026 | OpenAI announces plans to improve safety protocols and establish direct contact with Canadian law enforcement |
| April 24, 2026 | Sam Altman’s letter of apology published in Tumbler RidgeLines |
| April 25, 2026 | Story covered globally; Premier Eby calls the apology “grossly insufficient” |

The timeline reveals a roughly ten-month gap between when OpenAI identified a potential threat (June 2025) and when it formally apologized for its inaction (April 2026), with a mass casualty event in between.

AI Safety Accountability: What This Case Reveals

The OpenAI Tumbler Ridge apology is not just a story about one company’s failure. It is a stress test for the entire framework of AI safety accountability — and that framework was found wanting.

AI Companies Are Not Equipped for Threat Assessment

ChatGPT and similar large language models are designed to generate helpful, harmless text. They are not trained as threat assessment tools, and their operators are not law enforcement professionals. When a user’s conversations cross from disturbing to potentially dangerous, the platform is in genuine institutional no-man’s-land.

No major AI company has a published, transparent policy for when it will proactively share user data with authorities in the absence of a formal legal request. The Tumbler Ridge case exposed that gap in visceral terms.

AI Content Moderation Failure: A Systemic Problem

What happened with Van Rootselaar’s account is a specific instance of a broader AI content moderation failure pattern. AI systems can flag content that violates terms of service. What they cannot do — and what their human operators have not consistently agreed to do — is translate that flag into a law enforcement referral.

This is the distinction between detecting harmful intent and acting on it. OpenAI, in this case, detected it and stopped there. The OpenAI Tumbler Ridge apology acknowledges that stopping there was a mistake.

The Accountability Gap in AI Governance

Traditional technology platforms — social media companies, messaging apps — have faced years of regulatory pressure to develop clearer frameworks for reporting imminent threats. Most large platforms now have dedicated trust and safety teams with defined escalation protocols for credible threats of violence.

AI companies, despite their rapid growth and deep user interaction, have largely operated without equivalent requirements. The Tumbler Ridge shooting suggests that informal norms are not sufficient.

What OpenAI Is Changing After Tumbler Ridge

In the wake of the backlash, OpenAI has announced several concrete changes to its safety protocols. These represent the practical commitments behind the OpenAI Tumbler Ridge apology.

Key protocol changes announced by OpenAI:

- More flexible referral criteria: OpenAI is revising the thresholds that determine when accounts are referred to law enforcement, making it easier to escalate credible threats even without a formal legal request.

- Direct law enforcement contacts: OpenAI has established dedicated points of contact with Canadian law enforcement agencies, creating a faster pipeline for emergency referrals.

- Ongoing government cooperation: Altman stated that the company’s focus will “continue to be on working with all levels of government to help ensure nothing happens like this again.”

These are meaningful steps. But critics — including Premier Eby — have noted that structural reforms announced after a tragedy are not a substitute for having had those structures in place beforehand.

How AI Companies Handle Threat Detection: A Comparison

The OpenAI Tumbler Ridge apology invites a broader look at how AI and technology companies approach the question of when to involve law enforcement.

| Company / Platform | Threat Detection Approach | Proactive Law Enforcement Referral Policy |
|---|---|---|
| OpenAI (post-Tumbler Ridge) | Account flagging + revised criteria | New protocol; direct LE contacts established |
| OpenAI (pre-Tumbler Ridge) | Account flagging + ban | No clear proactive referral protocol |
| Meta (Facebook/Instagram) | AI moderation + human review | Yes: established Threat Operations team; referrals for credible imminent threats |
| Google/YouTube | Automated + human flagging | Yes: referrals for credible violent threats under defined conditions |
| Snapchat | AI + human moderation | Yes: 24/7 safety team; works with law enforcement on imminent threats |
| Microsoft (Bing/Copilot) | Content filters + flagging | Policy exists; specifics not fully public |

The comparison suggests a pattern: established social media and consumer tech platforms have built more developed threat-referral infrastructure than AI chat companies, which have scaled rapidly without equivalent safety frameworks.

What This Means for AI Regulation

Canadian officials have stated publicly that they are considering new regulations on artificial intelligence in the wake of the Tumbler Ridge shooting. No final decisions had been announced as of late April 2026.

What Effective AI Safety Regulation Would Need to Address

For regulation to close the gap exposed by the OpenAI Tumbler Ridge apology, policymakers would need to address at least three dimensions:

- Mandatory reporting thresholds: Clear legal standards for when AI companies must proactively report to law enforcement — analogous to mandatory reporting requirements for healthcare professionals in cases of imminent harm.

- Liability frameworks: Establishing whether and when AI companies bear legal responsibility for failure to report credible threats detected in their systems.

- Transparency requirements: Requiring AI companies to publish their threat assessment and referral policies so users and regulators can evaluate them.

The challenge is that any mandatory reporting regime creates genuine privacy tradeoffs. A broad, vague reporting requirement could chill legitimate use and erode trust in AI tools. A narrow, specific requirement risks being gamed or inapplicable to novel threat patterns.

Getting this balance right will require genuine collaboration between governments, AI companies, civil liberties organizations, and public safety experts — not reactive regulation drafted in the immediate aftermath of tragedy.

Why the OpenAI Tumbler Ridge Apology Is Historically Significant

It would be easy to read the OpenAI Tumbler Ridge apology as a routine piece of corporate crisis management: a CEO writes a letter, acknowledges fault, promises improvements, and the news cycle moves on.

That reading would be too shallow.

What makes this moment significant is what it represents structurally. OpenAI is the world’s most prominent AI company, and ChatGPT is the most widely used AI assistant on the planet. When its CEO formally acknowledges that the company held information that might have helped prevent a mass casualty event and chose not to act on it, that is not a PR event. It is a precedent.

The OpenAI Tumbler Ridge apology establishes, for the first time in the public record, that a leading AI company:

- Detected a credible threat through its content moderation systems.

- Had an internal debate about whether to alert authorities.

- Failed to act — and has since acknowledged that failure publicly and unambiguously.

This creates a new baseline for what AI safety accountability looks like in practice. Every AI company with a large user base is now on notice that the “we are just a platform” defense is insufficient when their systems identify potential imminent harm.

Key Takeaways

- The OpenAI Tumbler Ridge apology was issued by CEO Sam Altman in April 2026, acknowledging that the company failed to alert Canadian law enforcement after banning a ChatGPT account that described gun violence scenarios in June 2025.

- The suspected shooter in the Tumbler Ridge mass shooting, which killed eight people, had their account flagged and banned by OpenAI months before the attack.

- OpenAI staff internally debated contacting police but ultimately decided against it, a decision that reporting attributes to the absence of a clear protocol and an overly cautious reading of privacy obligations rather than deliberate indifference.

- Following the backlash, OpenAI announced revised safety protocols, including more flexible referral criteria and direct contact channels with Canadian law enforcement.

- The case reveals a critical gap in AI safety governance: AI companies lack the mandatory threat-reporting frameworks that established social media platforms developed under regulatory pressure.

- Canadian officials are considering new AI regulations, though no legislation had been finalized as of late April 2026.

- The OpenAI Tumbler Ridge apology sets a new precedent for corporate accountability in AI safety — one that the broader industry will need to reckon with.

Conclusion: Why the OpenAI Tumbler Ridge Apology Changes Everything

The OpenAI Tumbler Ridge apology is more than a corporate response to a tragic event—it is a defining moment for how society understands responsibility in the age of artificial intelligence. What unfolded in Tumbler Ridge exposed a reality that many policymakers, technologists, and users had not fully confronted: AI systems are no longer passive tools. They sit at the intersection of human intent, data interpretation, and real-world consequences. When those systems surface signals of potential harm, the decisions made by the organizations behind them can carry life-or-death implications.

At its core, the OpenAI Tumbler Ridge apology underscores a failure not of technology, but of process and preparedness. The warning signs were identified. The account was flagged. The internal debate happened. Yet, without a clear framework to guide action, hesitation prevailed. This gap between detection and escalation is now the central issue in AI safety accountability. It is no longer sufficient for companies to claim they can identify harmful content; they must also demonstrate what happens next when that content crosses a critical threshold.

What makes the OpenAI Tumbler Ridge apology especially significant is the precedent it sets. For the first time, a leading AI company has publicly acknowledged that inaction—despite awareness—was a mistake with profound consequences. This shifts the conversation from abstract ethics to concrete accountability. It sends a signal to the broader industry that responsibility does not end at banning an account or enforcing platform rules. Instead, responsibility extends into difficult, high-stakes decisions about when to involve external authorities.

At the same time, the OpenAI Tumbler Ridge apology highlights the complexity of balancing privacy and public safety. AI platforms are built on user trust, and that trust depends on the expectation that conversations are not arbitrarily shared. However, this case demonstrates that absolute privacy without clear exceptions can create its own risks. The challenge moving forward is not choosing one over the other, but designing systems that can responsibly navigate both—ensuring that credible threats are acted upon without turning AI platforms into instruments of unchecked surveillance.

Looking ahead, the OpenAI Tumbler Ridge apology will likely accelerate regulatory momentum. Governments are now under pressure to define what constitutes a “credible threat,” when reporting becomes mandatory, and how liability should be assigned when companies fail to act. At the same time, AI organizations must move faster than regulation, building internal protocols that are transparent, consistent, and capable of handling edge cases before they escalate into tragedies.

Ultimately, the lasting impact of the OpenAI Tumbler Ridge apology will depend on what changes from this point forward. Apologies acknowledge harm, but accountability is measured by action. If this moment leads to stronger safety frameworks, clearer escalation pathways, and a shared industry standard for threat response, then it may serve as a turning point. If not, it risks becoming another example of lessons learned too late.

The world is entering an era where AI systems are deeply embedded in human decision-making. The OpenAI Tumbler Ridge apology is a stark reminder that with that integration comes responsibility—not just to innovate, but to act when it matters most.