Real-time human-AI collaboration has a new benchmark. Mira Murati’s Thinking Machines Lab just released a research preview of interaction models — a native multimodal AI architecture that replaces the clunky request-response loop with continuous, bidirectional perception across audio, video, and text. If you’ve ever wished your AI assistant could react while you’re still talking, this is what that looks like at a systems level.

What Are Interaction Models?

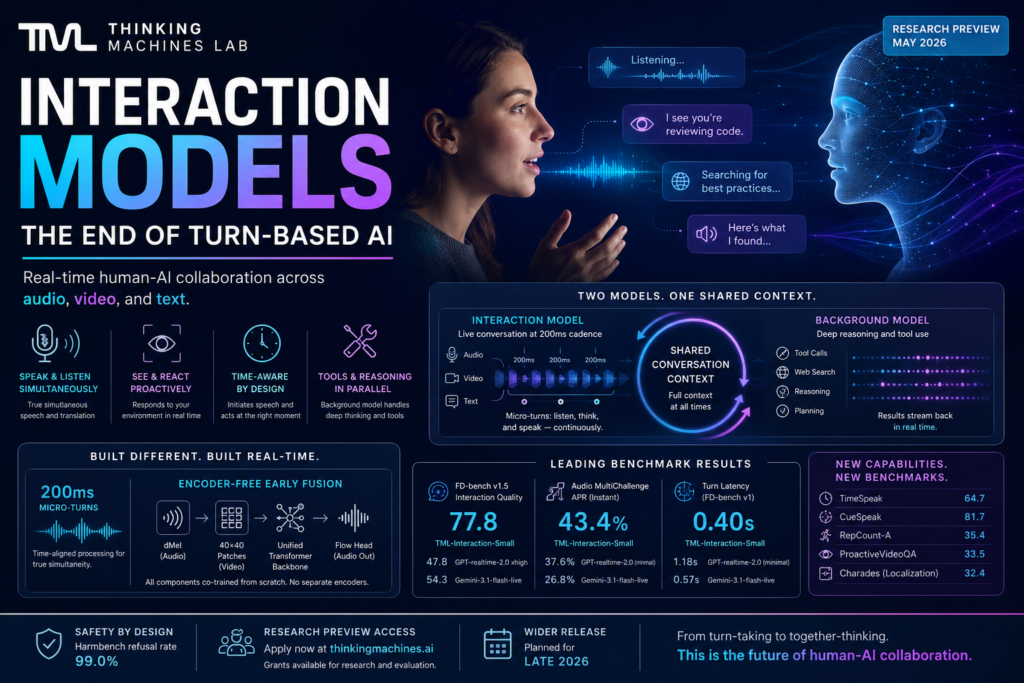

Definition: Interaction models are a new class of AI system in which real-time interactivity is built natively into the model itself — not simulated through external scaffolding components stitched around a standard language model.

Most AI systems you interact with today — including leading voice APIs — are fundamentally turn-based. You complete a thought, the model processes it, and then it responds. The exchange is sequential and discrete. Interaction models break this pattern entirely. Rather than waiting for your turn to end, the model continuously perceives incoming audio, video, and text while simultaneously generating a response — operating in synchronized 200-millisecond chunks.

Think of it less like an AI assistant waiting at a desk and more like a fluent conversation partner who listens, thinks, and speaks all at once.

The concept was introduced by Thinking Machines Lab, the AI research company founded by former OpenAI CTO Mira Murati. Their May 2026 research preview describes interaction models as a scalable approach to human-AI collaboration — one where making the model smarter also makes it a better real-time collaborator, by design.

The Problem With Turn-Based AI

Why the Standard Model Falls Short

The current paradigm — request in, response out — creates a fundamental bottleneck for human-AI collaboration. The model has no awareness of what’s happening while you’re still typing or speaking. It can’t see you pause mid-sentence, react to something happening on your camera feed, or respond to a visual cue that you never verbalized.

The situation is equally blind during generation: while the model is producing output, perception freezes. The only way to interrupt is through an external mechanism that detects the user’s intent to speak — mechanisms the model itself has no part in.

The VAD Harness Problem

To simulate responsiveness in real-time applications, most systems use what Thinking Machines Lab calls a harness — a collection of separate components built around the core model. The most common is voice-activity detection (VAD), which predicts when a user has stopped speaking so the turn-based model knows when to begin generating.

The problem is structural. These harness components are meaningfully less intelligent than the model itself. They can’t catch a facial expression, a self-correction, or a pause that signals thinking rather than turn-yielding. They preclude capabilities like:

- Speaking while listening (simultaneous speech)

- Reacting to something in the user’s environment without being told verbally

- Making a tool call while the conversation is still in progress

- Responding to context that was never explicitly stated

Thinking Machines Lab frames this as a version of the “bitter lesson” in machine learning: hand-crafted scaffolding will always be outpaced by scaling general capabilities. For interactivity to improve alongside intelligence, it has to be part of the model itself.

How Interaction Models Work: The Architecture

Two Models, One Shared Context

The system Thinking Machines Lab has built runs two components in parallel, both sharing the same context at all times:

The Interaction Model stays live with the user continuously. It ingests audio, video, and text in real time and generates responses in the same stream. It handles all aspects of conversation flow — including interruptions, backchanneling, pauses, and yield signals — without a separate VAD component.

The Background Model handles deeper cognitive work asynchronously: tool calls, web search, extended reasoning, and longer-horizon planning. Crucially, when the interaction model delegates a task, it sends a rich context package containing the full conversation — not a stripped-down query. Results stream back as the background model produces them. The interaction model weaves those updates into the conversation at a contextually appropriate moment, rather than delivering them as an abrupt switch.

The analogy Thinking Machines Lab offers: one person keeping you engaged while a colleague in the background looks something up and passes notes forward in real time.

200ms Micro-Turns Explained

The architectural key that makes all of this possible is what the team calls time-aligned micro-turns. Instead of consuming a complete user turn and generating a complete response, both input and output are treated as streams divided into 200-millisecond chunks.

The model interleaves processing of each 200ms input chunk with generation of a corresponding 200ms output chunk. This means:

- The model can speak while listening

- It can react to visual cues without being explicitly prompted

- It can handle genuine simultaneous speech (e.g., live Spanish-to-English translation as you talk)

- Tool calls and web searches happen while the conversation is still unfolding — results arrive woven into the dialogue, not interrupting it

This is not a workflow optimization. It’s a structural change in how the model processes time itself.

Encoder-Free Early Fusion

What is encoder-free early fusion? It’s the design choice that allows multimodal processing to happen at 200ms cadence without the latency overhead of large, separately pretrained encoders.

Standard multimodal systems route audio through something like a Whisper-style ASR model and video through a dedicated vision encoder — each a large pretrained component with its own inference cost. Thinking Machines Lab’s architecture eliminates these separate encoders entirely. Instead:

- Audio input is ingested as dMel and transformed via a lightweight embedding layer

- Video frames are split into 40×40 patches encoded by a small hMLP module

- Audio output uses a flow head decoder

- All components — including the transformer backbone — are co-trained from scratch together

There is no separately pretrained encoder or decoder at any stage. Because all modalities share the same training objective from the beginning, the model develops integrated representations rather than patching modalities together post-hoc. The result is faster, tighter, and more contextually aware.

What Interaction Models Can Actually Do

Because interactivity is native rather than bolted on, the following are built-in model behaviors — not features added by a harness:

- Simultaneous speech — The model speaks and listens at the same time. Live translation (e.g., Spanish input to English output, bidirectionally) is a natural use case.

- Verbal interjections — The model can jump in mid-sentence when it has relevant information, based on conversational context — not just when you pause.

- Visual proactivity — The model reacts to what it sees on your camera without you saying anything. Examples include counting repetitions of a physical exercise, flagging a bug it spots in your code on screen, or noting a change in your environment.

- Time-awareness — The model tracks elapsed time and can initiate speech at user-specified moments — e.g., “remind me in 3 minutes.”

- Concurrent tool use — Web search, tool calls, and UI generation happen while conversation is ongoing. Results arrive naturally in context, not as separate events.

- Native dialog management — Pauses, self-corrections, and conversational yield signals are handled by the model itself, with no separate VAD component required.

Benchmark Results: How TML-Interaction-Small Compares

The model Thinking Machines Lab released for the research preview is named TML-Interaction-Small — a 276B parameter Mixture-of-Experts (MoE) architecture with 12B active parameters at inference time.

The benchmarks below compare it against leading real-time AI systems. Models are categorized as Instant (no extended reasoning) or Thinking (extended reasoning enabled). TML-Interaction-Small is an Instant model.

| Benchmark | TML-Interaction-Small | GPT-realtime-2.0 (minimal) | GPT-realtime-1.5 | Gemini-3.1-flash-live (minimal) |

|---|---|---|---|---|

| Audio MultiChallenge APR (Instant) | 43.4% | 37.6% | 34.7% | 26.8% |

| FD-bench v1.5 Interaction Quality (avg) | 77.8 | 47.8 | 48.3 | 54.3 |

| FD-bench v1 Turn Latency | 0.40s | 1.18s | 0.59s | 0.57s |

| FD-bench v3 Response Quality | 82.8% | — | — | — |

| FD-bench v3 Tool Use Pass@1 | 68.0% | — | — | — |

| TimeSpeak (model-initiated speech at correct moment) | 64.7 | 4.3 | — | — |

| CueSpeak (verbal cue response accuracy) | 81.7 | 2.9 | — | — |

| RepCount-A (visual action counting, off-by-one) | 35.4 | 1.3 | — | — |

Note: GPT-realtime-2.0 (xhigh) — a Thinking model — scores 48.5 on Audio MultiChallenge APR using extended reasoning. On FD-bench v1.5, it scores 47.8, below TML’s 77.8 despite the reasoning advantage.

The interaction quality gap on FD-bench v1.5 is the most significant result in the table. This benchmark specifically evaluates how a model handles user interruption, backchanneling (brief affirmative signals like “uh-huh”), talking-to-others scenarios, and background speech. These are precisely the scenarios where harness-based systems collapse — and where a natively interactive architecture has structural advantages.

New Benchmarks for Capabilities That Didn’t Exist Before

Because no existing real-time AI can meaningfully perform time-aware or visually proactive tasks, Thinking Machines Lab introduced four new internal benchmarks to measure these capabilities:

- TimeSpeak — Does the model initiate speech at a user-specified time with correct content? TML: 64.7 macro-accuracy. GPT-realtime-2.0 (minimal): 4.3.

- CueSpeak — Does the model respond to verbal cues at the correct conversational moment? TML: 81.7. GPT-realtime-2.0 (minimal): 2.9.

- RepCount-A — Can the model count repeated physical actions in a streaming video? TML: 35.4 off-by-one accuracy. GPT-realtime-2.0 (minimal): 1.3.

- ProactiveVideoQA — Does the model answer a question at the exact moment the visual answer becomes available in a stream? TML: 33.5 PAUC vs. 25.0 (the no-response baseline).

- Charades (temporal localization) — Can the model say “start” and “stop” as an action begins and ends in a streamed video? TML: 32.4 mIoU. GPT-realtime-2.0 (minimal): 0.

The zero score from GPT-realtime-2.0 on Charades is not a small performance gap — it reflects that the architecture has no mechanism to perform temporal localization in a continuous stream at all. The task category itself is outside what turn-based interaction models can address. These benchmarks define what the next generation of real-time AI will be measured against.

Why This Matters for the Future of Human-AI Collaboration

The framing Thinking Machines Lab uses — that interactivity must scale with intelligence rather than be managed separately — has significant implications for how developers, researchers, and product teams think about building on top of AI.

Right now, most real-time AI products spend significant engineering resources managing the gap between model intelligence and interaction quality. VAD pipelines, streaming state machines, context truncation logic, interruption handling — these are all workarounds for the fact that the underlying model doesn’t understand time, continuity, or simultaneity.

Interaction models shift that burden into the model layer. If the architecture proves out at scale, developers building voice products, live collaboration tools, assistive technology, and real-world AI agents could reduce infrastructure complexity substantially while gaining capabilities — like visual proactivity and concurrent tool use — that no amount of harness engineering can replicate.

The safety implications are also notable. Thinking Machines Lab reports a Harmbench refusal rate of 99.0% for TML-Interaction-Small — a deliberately high bar, given that real-time interaction introduces new alignment challenges that batch-mode systems don’t face.

Limitations to Know Before You Build

Thinking Machines Lab has been transparent about where the current system falls short:

- Long sessions — Continuous audio and video accumulate context quickly. Very long sessions still require careful context management and are an active area of development.

- Network dependency — Streaming in 200ms chunks requires reliable low-latency connectivity. Poor connections degrade the experience significantly.

- Model size — Larger pretrained variants exist but are currently too slow to serve in real-time. Larger real-time models are planned for later in 2026.

- Active alignment research — Real-time interaction at this fidelity opens genuinely new safety and alignment questions. Feedback collection is ongoing.

How to Get Access to the Research Preview

As of May 2026, Thinking Machines Lab is opening limited research preview access to collect structured feedback ahead of a wider 2026 release.

- Apply for early access via thinkingmachines.ai — contact details are on the research blog post.

- Research grant program — The lab is offering grants for work focused on interaction model benchmarks, evaluation frameworks, and human-AI collaboration research. The community is explicitly invited to develop new measurement frameworks for interactivity quality, which they consider an underserved area.

- Wider release — A broader public release is planned for later in 2026.

Key Takeaways

- Interaction models are a new class of AI system where real-time interactivity is native — not simulated through external scaffolding.

- The architecture runs two parallel models: an interaction model for continuous live exchange and a background model for deep reasoning and tool use, sharing full conversation context throughout.

- 200ms micro-turns replace the request-response loop, enabling simultaneous speech, visual proactivity, and concurrent tool use without waiting for a user turn to complete.

- On interaction quality benchmarks, TML-Interaction-Small (77.8 on FD-bench v1.5) significantly outperforms GPT-realtime-2.0 xhigh (47.8) and Gemini-3.1-flash-live (54.3).

- Competing systems score near zero on time-awareness and visual proactivity benchmarks (TimeSpeak, CueSpeak, Charades) — tasks that turn-based architectures have no mechanism to perform.

- A limited research preview is open now via Thinking Machines Lab, with a broader release planned for later in 2026.