Recursive self-improving AI is no longer a science-fiction concept — it’s a $650 million startup bet made in May 2026. Here’s everything you need to understand about what this technology is, how it works, and why it could be the most consequential shift in AI development since the transformer architecture.

What Is Recursive Self-Improving AI?

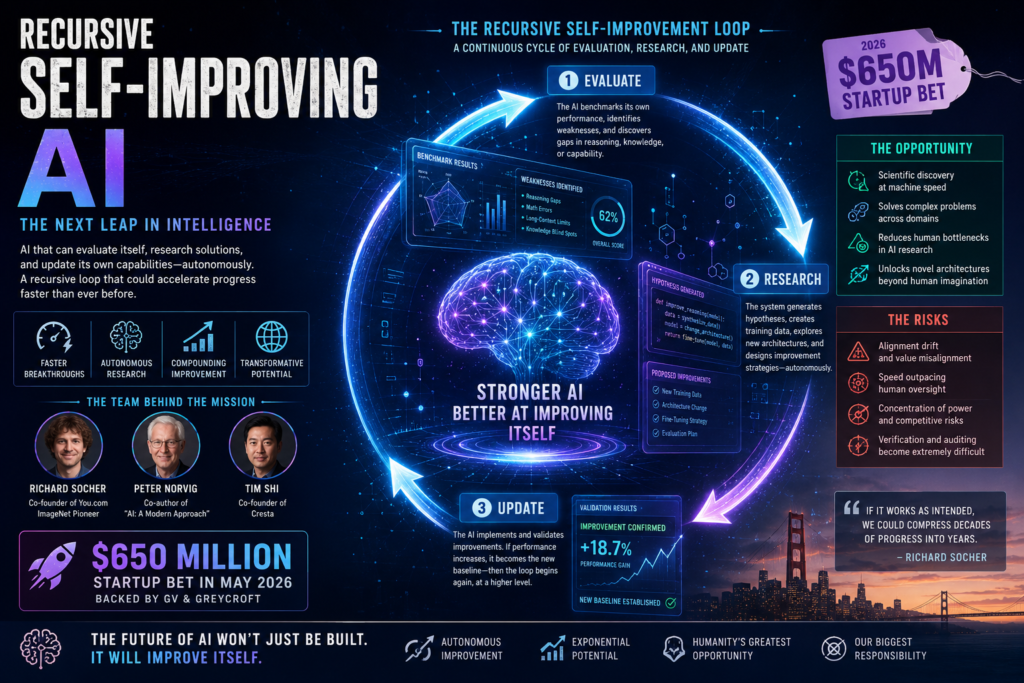

Definition: Recursive self-improving AI refers to an artificial intelligence system that can autonomously identify its own limitations, conduct research to address those limitations, and then update itself — without requiring a human engineer to make each improvement. The term “recursive” describes the self-referential loop: the AI improves itself, and the improved version is better at improving itself still further.

This is fundamentally different from how AI has been built so far. Today, a team of researchers trains a model, evaluates it, tweaks the architecture or training data, and retrains. That cycle takes months and enormous compute resources — and humans sit at the center of every decision. In a recursive self-improving system, the AI sits at the center instead.

Why does this matter? Because the speed of AI progress would no longer be bottlenecked by the rate at which human researchers can work. A recursive self-improving AI could, in theory, compress decades of research into a fraction of the time.

The Recursive Superintelligence Startup Explained

The concept moved from whiteboard to wire transfer on May 14, 2026, when a San Francisco-based startup called Recursive Superintelligence came out of stealth, backed by $650 million in funding from investors including GV and Greycroft.

Who Is Behind It?

The company was founded by Richard Socher, a prominent AI researcher previously known for co-founding You.com and for his contributions to the ImageNet project — foundational work that helped launch the modern deep learning era. He is joined by Peter Norvig, co-author of the field’s most widely used textbook Artificial Intelligence: A Modern Approach, and Tim Shi, co-founder of the enterprise AI company Cresta.

This is not a team of newcomers making bold promises. These are researchers who have built systems that are already shaping how AI works today.

The $650M Funding Bet

The size of the funding round signals something important: this is not speculative side-project capital. $650 million is the kind of money that gets committed when sophisticated investors believe a category is real, the timing is right, and the team has the credibility to execute. For context, that figure is comparable to early-stage funding rounds raised by OpenAI and Anthropic before they became household names.

Socher has also been explicit that Recursive Superintelligence will ship products — not just publish research papers. That distinguishes it from purely academic efforts and puts it in direct tension with the product roadmaps of every major AI lab.

How Does Recursive Self-Improving AI Actually Work?

At its core, recursive self-improving AI operates on a feedback loop that has three stages running continuously: evaluate, research, and update.

The Feedback Loop Explained

Stage 1 — Evaluate: The AI system runs benchmarks on its own performance. It identifies tasks where it underperforms: reasoning gaps, knowledge blind spots, mathematical errors, or limitations in long-context understanding. This self-evaluation is itself powered by AI — the model assesses itself the same way it would assess any other problem.

Stage 2 — Research: Having identified a weakness, the system autonomously generates hypotheses about how to address it. It may synthesize new training data, propose architectural changes, or design new fine-tuning strategies. This is the step that has historically required teams of PhD researchers working for months.

Stage 3 — Update: The system implements its proposed improvements, validates them against held-out benchmarks, and integrates the changes. If the update improves performance, it becomes the new baseline. The new, stronger model then returns to Stage 1 — and because it is more capable, it can find and fix even more sophisticated weaknesses.

This is why the term “recursive” is apt. Each generation of the model is more capable of improving the next generation. The loop does not plateau at the same level — it compounds.

Recursive Self-Improving AI vs. Traditional AI Development

Understanding the difference between recursive self-improvement and conventional model development is essential for grasping what is genuinely new here.

| Dimension | Traditional AI Development | Recursive Self-Improving AI |

|---|---|---|

| Who drives improvement | Human researchers | The AI system itself |

| Improvement cycle time | Months to years | Potentially hours to days |

| Bottleneck | Researcher bandwidth, compute budgets | Compute and safety constraints |

| Predictability | High — humans control each update | Lower — emergent behaviors are possible |

| Scalability | Linear (more researchers = more progress) | Potentially exponential |

| Safety oversight | Built into each iteration manually | Must be baked into the recursive loop itself |

| Current stage | Mature, industry-standard | Early research and development |

| Example organizations | OpenAI, Google DeepMind, Anthropic | Recursive Superintelligence (2026) |

The most striking contrast is in the improvement cycle time and scalability columns. Traditional AI development improves roughly linearly with the resources invested. Recursive self-improving AI, if it works as intended, could improve exponentially — because a smarter AI is better at making itself smarter.

Why This Matters: Opportunities and Risks

Recursive self-improving AI is not merely a technical curiosity. Its implications span scientific discovery, economic competitiveness, geopolitics, and existential safety. Here is a grounded assessment of both sides.

The Upside: What Becomes Possible

The optimistic case for recursive self-improving AI is genuinely compelling. Consider what becomes achievable if AI systems can autonomously accelerate their own capabilities:

- Scientific discovery at machine speed. Drug discovery, climate modeling, materials science, and genomics all require iterating over enormous solution spaces. An AI that improves its own reasoning could compress decades of research.

- Democratized expertise. If recursive self-improving AI produces dramatically more capable systems at lower cost, access to high-quality AI tools could expand well beyond large corporations.

- Reduced human bottleneck in AI research itself. There are not enough AI researchers in the world to meet the demand for AI development. Systems that can partially replace researcher time could relieve that constraint.

- Novel architectures. Human researchers tend to iterate within the paradigms they already know. A self-improving system might discover architectural innovations that humans would never think to try.

The Risks: What Could Go Wrong

The pessimistic case is equally serious — and gets more attention the longer you think about it.

Alignment drift. Each time a recursive self-improving AI updates itself, it is changing its own values and objectives, not just its capabilities. If its optimization target is even slightly misspecified, small errors compound with each iteration. This is the core concern articulated by AI safety researchers for decades: an AI that is good at self-improvement but misaligned with human values becomes more misaligned as it improves.

Speed outpacing oversight. If a recursive loop runs faster than human auditors can evaluate its outputs, meaningful human oversight becomes practically impossible. This is not a hypothetical — it is a structural feature of the technology.

Concentrated power. The organization that controls a genuinely recursive self-improving AI would have an enormous advantage over every competitor. That asymmetry creates incentives for secrecy, competitive shortcuts on safety, and geopolitical conflict.

Verification difficulty. How do you know a self-modified AI is still doing what you want? The model that ships products next quarter may be architecturally and behaviorally different from the model that was evaluated last month. Current interpretability tools are nowhere near adequate for this challenge.

Key Questions Recursive Self-Improving AI Raises

These are the questions researchers, policymakers, and builders are grappling with right now:

- Can safety constraints be made self-reinforcing? Can you design a recursive self-improving AI that is constitutionally resistant to modifying its own safety guardrails?

- What benchmarks should govern autonomous self-improvement? The AI decides what to improve based on its evaluation metrics. Whoever designs those metrics has enormous power over what the system becomes.

- How do you audit something that changes itself? Standard model evaluation assumes a static model. Recursive self-improving AI breaks that assumption entirely.

- Is there a safe stopping point? If you can turn off the recursive loop, when should you? And who decides?

What Comes Next: The Road Ahead for Recursive Self-Improving AI

Recursive Superintelligence’s emergence is not an isolated event — it reflects a broader shift in how the AI industry is thinking about progress. Several converging trends make 2026 a plausible inflection point:

Compute availability. The infrastructure required to run iterative self-improvement loops — running benchmarks, generating training data, fine-tuning — is now commercially accessible in a way it was not five years ago.

Better evaluation frameworks. The field has developed increasingly sophisticated ways to measure AI capabilities across dimensions that matter: reasoning, long-context performance, factual accuracy, and code generation. These benchmarks are good enough to serve as the “objective function” for recursive self-improvement.

Interpretability progress. While current interpretability tools are still inadequate for overseeing recursive self-improvement, the field is moving quickly. Anthropic, DeepMind, and academic labs have published significant work on mechanistic interpretability that makes model internals more legible.

Institutional pressure. Governments and regulators are paying attention. The EU AI Act, U.S. executive orders on AI safety, and emerging international frameworks all contemplate risks from highly autonomous AI systems. How Recursive Superintelligence navigates that regulatory environment will be a major story to watch.

The honest forecast is this: recursive self-improving AI in its full form remains more promise than product as of mid-2026. Socher’s insistence that Recursive Superintelligence will ship real products — not just papers — is the right instinct. The field needs to demonstrate practical, bounded, safe self-improvement before it can credibly claim to be building something transformative.

But the direction of travel is clear. Every major AI lab is working on some version of AI-assisted AI research. The question is not whether AI systems will begin improving themselves — some already do in narrow ways. The question is how fast, how autonomous, and how safe that process will be.

Summary: What You Need to Know About Recursive Self-Improving AI

Recursive self-improving AI is an AI system that autonomously researches and implements improvements to itself, creating a compounding feedback loop that could dramatically accelerate the pace of AI development. In May 2026, Recursive Superintelligence — founded by Richard Socher, Peter Norvig, and Tim Shi — became the highest-profile attempt to build this technology, raising $650 million to do so. The opportunity is enormous: faster scientific discovery, reduced research bottlenecks, and capabilities that compound over time. The risks are proportionally serious: alignment drift, oversight gaps, and the concentration of transformative power in a single organization. The coming years will determine whether the field can capture the upside while building adequate safeguards around one of the most consequential ideas in the history of computing.