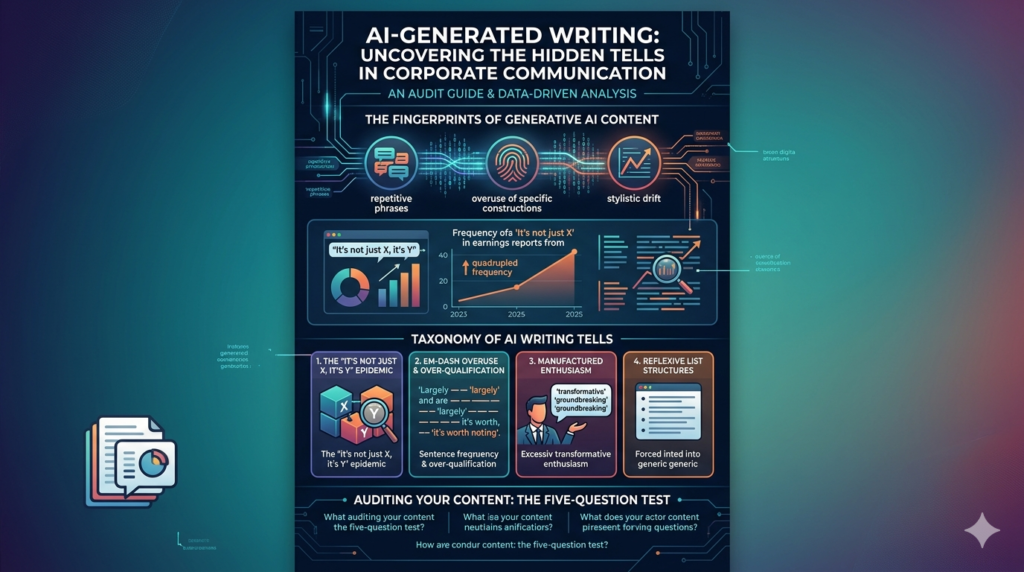

AI-generated writing leaves predictable linguistic fingerprints, and one phrase has become its clearest signature: “It’s not just X — it’s Y.” According to a Barron’s analysis of the AlphaSense market intelligence database, this construction appeared in corporate communications more than four times as often in 2025 as it did in 2023 — jumping from roughly 50 mentions to over 200 across earnings reports, news releases, and government filings. If you’ve ever read a press release and felt like something was just slightly off, there’s now data to explain why.

What Is AI-Generated Writing — And Why It Leaves Fingerprints

AI-generated writing is any text produced wholly or substantially by a large language model (LLM) such as ChatGPT, Gemini, or Claude, often with minimal human editing afterward. These models are trained on vast bodies of human-written text, and in learning to mimic effective prose, they converge on high-frequency rhetorical patterns — phrases that appeared often in their training data and were statistically reinforced as “good writing.”

The result is a kind of stylistic consensus. Millions of independent AI outputs, trained on similar data and optimized for similar goals, tend to produce the same rhetorical gestures. They’re not copying each other — they’re all copying us. Or more precisely, they’re copying the statistical average of professional human writing, which then feeds back into their outputs at scale.

This creates a detectable loop: AI-generated writing patterns emerge, spread through corporate and institutional content, get incorporated into new training data, and get amplified in the next generation of models.

The “It’s Not Just X, It’s Y” Epidemic

Where the Data Comes From

The clearest current evidence of AI writing patterns in the wild comes from a Barron’s investigation using AlphaSense, a market intelligence platform that indexes corporate filings, earnings call transcripts, press releases, and regulatory documents. Researchers tracked how often the construction “It’s not just [A] — it’s [B]” appeared in this corpus over time.

The results were stark. This specific phrase structure, designed to elevate a claim from the merely interesting to the supposedly transformative, quadrupled in frequency between 2023 and 2025. The timing coincides with the mass adoption of generative AI tools in enterprise content workflows following the release of ChatGPT in late 2022.
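A count of this kind can be illustrated with a short Python sketch. It is an illustration under stated assumptions, not the method Barron’s or AlphaSense used: the regular expression, the `count_by_year` helper, and the three-item sample corpus are all hypothetical.

```python
import re
from collections import Counter

# Matches "it's not just A <sep> it's B" within one sentence, where <sep> is a
# comma, semicolon, em-dash (\u2014), en-dash (\u2013), or hyphen.
# Accepts straight or curly (\u2019) apostrophes. Illustrative pattern only.
NOT_JUST = re.compile(
    r"\bit[\u2019']s not just\b[^.!?]{1,80}?[,;\u2014\u2013-]\s*it[\u2019']s\b",
    re.IGNORECASE,
)

def count_by_year(documents):
    """Count matches per year; `documents` is an iterable of (year, text) pairs."""
    counts = Counter()
    for year, text in documents:
        counts[year] += len(NOT_JUST.findall(text))
    return counts

# Hypothetical toy corpus, not real filings.
sample = [
    (2023, "Revenue grew 4% on higher volumes."),
    (2025, "It's not just a product \u2014 it's a platform."),
    (2025, "It\u2019s not just faster, it\u2019s smarter. Margins held steady."),
]
counts = count_by_year(sample)
```

On this toy corpus, `counts[2025]` is 2 and `counts[2023]` is 0. A real analysis would also need to normalize by the number of documents indexed each year, since a growing corpus raises raw counts on its own.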

Real examples identified in the past year include statements from Cisco, Accenture, Workday, McKinsey, and even a widely circulated Microsoft blog post attributed to CEO Satya Nadella, which used the construction multiple times in a single piece.

Why AI Systems Favor This Construction

This isn’t random. LLMs learn to use contrastive “not just… but also” constructions because they are genuinely effective rhetorical devices in persuasive human writing. They signal depth: “We’re not giving you the surface answer — we’re giving you the real, larger truth.” In training data, this pattern appears in speeches, op-eds, TED talks, and executive communications — all high-quality signal sources that models weight heavily.

The problem is that when an LLM uses this device, it often does so without the underlying substance that makes the construction earn its weight. The phrase implies a revelation; the content delivers a restatement. Human writers use it sparingly; AI-generated writing uses it reflexively.

The Full Taxonomy of AI Writing Tells

The “not just X, it’s Y” construction is the most statistically documented AI writing pattern right now, but it isn’t the only one. AI-generated writing tends to cluster around a recognizable set of rhetorical habits.

Em-Dashes and Over-Qualification

Em-dashes — used to insert clarifying asides — have become so strongly associated with AI-generated writing that many editors now treat them as a soft red flag. LLMs overuse them because training data rewards prose that is both confident and hedged, and em-dashes allow a model to appear authoritative while simultaneously softening the claim.

Similarly, AI outputs tend toward over-qualification: “largely,” “in many ways,” “it’s worth noting that,” and “to some extent” appear with unusual frequency. These hedges appear in human expert writing too, but usually serve a specific purpose. In AI-generated text, they appear as stylistic defaults regardless of whether precision actually requires them.

The Enthusiasm Problem

AI-generated writing also exhibits a specific flavor of manufactured enthusiasm. Phrases like “game-changing,” “transformative,” “unprecedented,” and “groundbreaking” appear at rates that no individual human writer would sustain. So do structural enthusiasm markers: sentences that begin with “Imagine if…” or “What if we told you…” These patterns are statistically common in the kind of forward-looking, visionary content that dominates business writing — and so they get over-represented in model outputs targeting that register.

AI Writing Patterns in Corporate Communications: A Comparison

The table below maps the most common AI writing patterns against their probable rhetorical origin and the telltale sign that distinguishes AI overuse from authentic human use.

| AI Writing Pattern | Why LLMs Use It | Human Equivalent | AI Overuse Signal |
|---|---|---|---|
| “It’s not just X, it’s Y” | Signals depth and revelation | Used once for genuine contrast | Appears multiple times per document |
| Em-dash asides | Balances confidence with hedging | Sparingly, for emphasis | Every other sentence |
| “Transformative / game-changing” | High reward in training data | Reserved for genuine inflection points | Applied to routine updates |
| “Imagine if…” openings | Mimics visionary speech patterns | Occasionally, in creative contexts | Appears in technical or policy docs |
| Numbered lists for everything | Optimized for readability scores | When content is genuinely enumerable | Replaces prose even for nuanced ideas |
| Passive voice hedges (“it can be said that”) | Avoids assertive claims | Never in good writing | Common as a way to appear balanced |
| “In today’s rapidly evolving landscape” | Ultra-common opener in training data | Rarely, and only to ground temporal context | Opening line of nearly every section |

Why Companies Keep Using AI-Generated Language Anyway

If these patterns are so recognizable, why haven’t businesses corrected course? Several forces are at work.

First, speed and volume: AI-generated writing is orders of magnitude faster to produce than carefully crafted human prose. For companies under pressure to publish quarterly reports, investor updates, product announcements, and regulatory filings — all simultaneously — the productivity calculus is difficult to resist.

Second, diffuse accountability: In large organizations, no single person owns the voice of every corporate document. When AI tools are embedded across teams, the resulting writing reflects no individual’s stylistic judgment and faces no individual editor’s scrutiny. The patterns slip through because no one is looking for them specifically.

Third, the feedback loop: Because AI corporate language is now everywhere, it starts to define what “professional writing” sounds like to readers and to models alike. If enough earnings calls use “not just a product launch — a platform moment,” the phrase starts to seem normal. AI-generated content normalizes AI writing patterns, which then get further reinforced in training data.

How to Audit Your Own Content for AI Writing Patterns

If you’re a content strategist, communications professional, or marketing leader, the practical question is: how do you know if your content has drifted into these patterns — and how do you fix it?

The following checklist targets the most common AI writing issues that reduce content credibility and distinctiveness:

- Check for contrastive constructions: Search your document for “not just” and “not only.” If the phrase appears more than once per 1,000 words, flag each instance and ask whether the contrast is genuinely earned.

- Count your em-dashes: More than two or three per page is a signal worth investigating.

- Audit your superlatives: Every use of “transformative,” “revolutionary,” or “unprecedented” should have a specific, factual referent. If it doesn’t, cut or rewrite.

- Test the opening sentence of every section: Can it be understood without any surrounding context? If not, the structure may be too narrative and not modular enough for modern readers — or AI retrieval systems.

- Read for hedging density: Run a search for “largely,” “in many ways,” “to a certain extent,” and “arguably.” If they appear more than once per 500 words, your content may be over-qualifying in ways that dilute authority.

- Look for list overuse: If every argument in your document is a bullet point, the content may have been structured for AI readability at the expense of actual persuasive flow.
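The quantitative checks in the list above lend themselves to a quick script. The following is a minimal Python sketch, not a validated detector: the function name `audit`, the whitespace-based word count, and the regular expressions are illustrative assumptions, and only the thresholds (once per 1,000 words for contrastive phrases, once per 500 words for hedges) come from the checklist. It reports the em-dash count without flagging it, because “per page” has no fixed word count.

```python
import re

# Search terms mirror the checklist; the patterns themselves are assumptions.
CONTRAST = re.compile(r"\bnot (?:just|only)\b", re.IGNORECASE)
HEDGES = re.compile(
    r"\b(?:largely|in many ways|to a certain extent|arguably)\b", re.IGNORECASE
)
SUPERLATIVES = re.compile(
    r"\b(?:transformative|revolutionary|unprecedented)\b", re.IGNORECASE
)
EM_DASH = "\u2014"

def audit(text):
    """Return raw counts, per-1,000-word rates, and checklist flags for `text`."""
    words = len(text.split())  # crude word count: whitespace-separated tokens
    per_k = 1000 / words if words else 0.0
    counts = {
        "contrast": len(CONTRAST.findall(text)),
        "hedges": len(HEDGES.findall(text)),
        "superlatives": len(SUPERLATIVES.findall(text)),
        "em_dashes": text.count(EM_DASH),
    }
    rates = {name: n * per_k for name, n in counts.items()}
    flags = {
        # More than once per 1,000 words.
        "contrast": rates["contrast"] > 1.0,
        # More than once per 500 words, i.e. more than 2 per 1,000.
        "hedges": rates["hedges"] > 2.0,
        # Any superlative needs a human check for a factual referent.
        "superlatives": counts["superlatives"] > 0,
    }
    return counts, rates, flags

# Hypothetical two-sentence draft, used only to exercise the function.
draft = (
    "It's not just a launch \u2014 it's a platform moment. "
    "Arguably, this is largely a transformative step."
)
counts, rates, flags = audit(draft)
```

Rates computed on a snippet this short are inflated, so the flags only become meaningful on full-length documents. A flag is a prompt for an editor to look at each instance, not a verdict on how the text was written.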

The Five-Question Passage Test

For any section of content you suspect may have been AI-generated or AI-assisted with insufficient editing, apply these five diagnostic questions:

1. Does the opening line actually answer the question the heading raises, rather than just restating it?

2. Is there a specific, verifiable fact in this section, or only general claims?

3. Does any sentence sound like it could appear verbatim in a completely different article about a completely different industry?

4. Is the enthusiasm level consistent with what a knowledgeable human expert would actually feel about this topic?

5. Could the main point of this section be stated in one plain sentence without using any qualifiers?

If you answered “no” to questions 1, 2, 4, or 5, or “yes” to question 3, the section likely needs substantive human revision, not just a light polish.

The Bigger Implication: When AI Writes the Record

The Barron’s/AlphaSense finding isn’t just a curiosity about corporate writing style. It points to something more significant: AI-generated writing is increasingly becoming the official record of institutional activity.

Earnings calls, regulatory filings, investor communications, and government reports are not entertainment — they are legal, financial, and historical documents. When the language of these documents homogenizes around AI writing patterns, several things happen simultaneously:

- Specificity erodes. The distinctive voice of an organization — or a leader — gets flattened into statistical average prose. When Satya Nadella’s blog post sounds like it could have been written by any large company’s communications team, something meaningful has been lost.

- Accountability blurs. Rhetorical patterns that imply depth and vision without providing it create a kind of vagueness that is very hard to hold anyone to. “It’s not just a product — it’s a platform” sounds like a commitment, but it isn’t one.

- Trust erodes gradually. Readers and regulators are increasingly attuned to the feel of AI-generated content. When official communications feel synthetic, the credibility of the institutions behind them suffers — even when no individual statement is technically false.

This is the moment for content teams, communications professionals, and executives to make an active choice: use AI-generated writing as a draft accelerator, but invest in the human editorial work that makes content actually distinctive, credible, and yours.

The em-dash. The revelation construction. The landscape that’s always rapidly evolving. These aren’t just quirks — they’re symptoms. And recognizing a symptom is the first step toward treating it.

Key Takeaways

- AI-generated writing is identifiable by recurring rhetorical patterns — the “not just X, it’s Y” construction being the most statistically documented.

- Corporate use of this specific phrase quadrupled between 2023 and 2025, according to AlphaSense data analyzed by Barron’s.

- Other common AI writing patterns include em-dash overuse, superlative inflation, hedging density, and reflexive list structures.

- The spread of these patterns in official documents — filings, earnings calls, policy communications — carries real-world implications for specificity, accountability, and trust.

- Content audits focused on five diagnostic questions can help teams identify and correct AI-generated drift before it affects credibility.

Frequently Asked Questions (FAQ)

1. What is AI-generated writing?

AI-generated writing refers to text created by large language models (LLMs) such as ChatGPT, Claude, or Gemini. These systems are trained on vast datasets of human-written content and generate responses by predicting statistically likely word sequences.

In practice, AI-generated writing is used for blog posts, corporate communications, emails, product descriptions, and even regulatory filings. While it can be highly fluent and grammatically correct, it often reflects patterns from training data rather than original human intent. This leads to recognizable AI writing patterns, such as repetitive phrasing, overly structured sentences, and generalized statements.

2. Why does AI-generated writing sound so similar across different companies?

One of the biggest issues with AI-generated writing is convergence. Since most large language models are trained on similar internet-scale datasets, they tend to reproduce the same rhetorical structures.

This is why corporate content from completely different organizations often sounds identical. Phrases like “not just X, but Y,” “in today’s rapidly evolving landscape,” and “transformative solutions” appear frequently because they are statistically common in training data.

Over time, this creates a loop where AI corporate language reinforces itself, making writing more uniform and less distinctive.

3. What are the most common AI writing patterns?

The most recognizable AI writing patterns include:

- Overuse of contrast phrases like “It’s not just X, it’s Y”

- Frequent em-dash interruptions — used for added explanation

- Excessive hedging (“in many ways,” “it can be argued that”)

- Generic enthusiasm (“game-changing,” “revolutionary,” “transformative”)

- Over-structured bullet-point formatting

- Repetitive introductory phrases like “In today’s fast-paced world”

These patterns are not inherently wrong, but their overuse is a strong indicator that a text was generated by AI.

4. How can you detect AI-generated writing in corporate content?

Detecting AI-generated writing involves looking for consistency issues rather than grammatical errors. A few key indicators include:

- Lack of specific data or concrete examples

- Overuse of abstract or vague claims

- Repetitive sentence structures across sections

- Generic tone that feels “too polished” or emotionally neutral

- Over-reliance on marketing-style language without substance

Professional editors often perform an “authenticity audit” by checking whether each paragraph could realistically have been written by a subject-matter expert without AI assistance.

5. Is AI-generated writing bad for businesses?

Not necessarily. AI-generated writing can be highly beneficial for speed, scalability, and productivity. Businesses use it to generate drafts, summarize reports, and automate repetitive content tasks.

However, problems arise when AI output is published without proper human editing. This can lead to diluted brand voice, reduced credibility, and in some cases, loss of trust from readers who recognize AI writing patterns.

The best approach is a hybrid model: AI for drafting, humans for refinement.

6. Why do companies still rely heavily on AI-generated writing?

Despite its flaws, companies continue using AI-generated writing because of efficiency. It dramatically reduces content production time and costs.

Large organizations also face constant publishing pressure: earnings reports, marketing campaigns, internal updates, and policy documents. AI helps meet these demands at scale.

Another reason is normalization: as more companies adopt AI corporate language, it becomes the default expectation for “professional writing,” even if it lacks originality.

7. What are the risks of overusing AI writing patterns?

Overuse of AI writing patterns introduces several risks:

- Loss of brand authenticity

- Reduced audience engagement

- Lower trust in corporate messaging

- Increased difficulty in differentiating from competitors

- Potential misinterpretation due to vague language

In regulated industries, overly generic AI-generated content can also create compliance risks if statements lack clarity or specificity.

8. How can you improve or “humanize” AI-generated writing?

To improve AI-generated writing, editors should focus on:

- Replacing generic claims with specific data or examples

- Removing repetitive rhetorical structures

- Reducing unnecessary hedging language

- Varying sentence length and tone

- Adding real-world context or case studies

A useful technique is the “specificity test”: if a sentence could apply to any company or industry, it should be rewritten.

9. What is the “not just X, it’s Y” AI writing pattern?

The phrase “not just X, it’s Y” is one of the most identifiable AI writing patterns in corporate communication.

It is used to create a sense of depth or transformation, such as:

- “It’s not just a product—it’s a platform”

- “Not just innovation, but disruption”

While effective in moderation, AI systems tend to overuse this structure because it appears frequently in the persuasive human writing they were trained on. This makes it one of the clearest tells of generative AI content.

10. Will AI-generated writing replace human writers?

AI is unlikely to fully replace human writers, but it is reshaping their role. AI-generated writing is best understood as a drafting tool rather than a complete replacement for human creativity.

Human writers are still essential for:

- Strategic messaging

- Emotional nuance

- Brand voice consistency

- Critical thinking and fact validation

The future of content creation is not AI vs humans—it is AI + humans working together.

11. How should companies balance AI-generated writing and authenticity?

The ideal balance involves using AI-generated writing for efficiency while maintaining strict editorial oversight. Companies should:

- Use AI for first drafts only

- Apply human editing for tone and clarity

- Establish style guidelines to avoid AI writing patterns

- Regularly audit content for repetition and generic phrasing

This ensures content remains both scalable and authentic.

12. Why is identifying AI writing patterns important today?

Understanding AI writing patterns is increasingly important because AI-generated content is now embedded in corporate, legal, and public communications.

Without awareness, organizations risk unintentionally producing homogeneous content that weakens their messaging. By identifying these patterns early, companies can preserve originality, improve communication quality, and maintain audience trust.