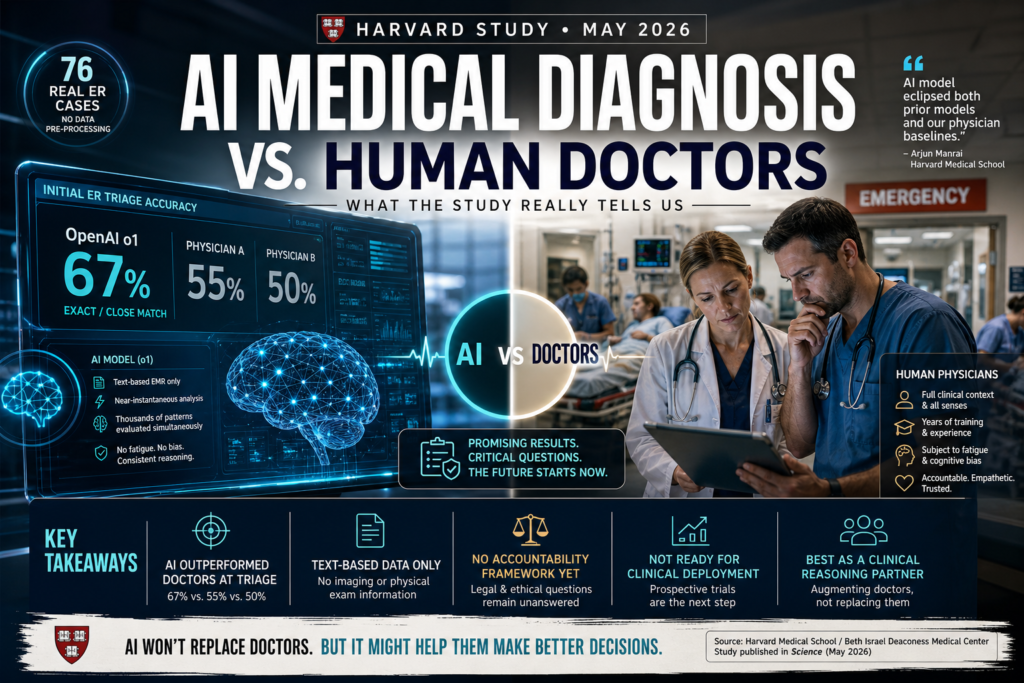

A landmark Harvard study published in May 2026 found that AI medical diagnosis matched or surpassed emergency room physicians at every diagnostic checkpoint — most strikingly at triage, where an AI model correctly identified the diagnosis 67% of the time compared to 55% and 50% for two attending physicians. Here is what that means, what it doesn’t, and why it matters for the future of medicine.

What the Harvard Study Actually Found

Published in the journal Science and led by researchers at Harvard Medical School and Beth Israel Deaconess Medical Center, the study is one of the most rigorous real-world evaluations of AI performance in clinical settings to date. Unlike lab-based benchmarks, this research used actual emergency room cases — not curated or pre-processed datasets.

How the Study Was Designed

Researchers examined 76 real patients who presented at the Beth Israel emergency room. For each patient, two attending physicians recorded their diagnoses. OpenAI’s o1 and GPT-4o models were given the same information available in the electronic medical record at each diagnostic touchpoint. Two additional attending physicians, blinded to whether the diagnoses came from humans or AI, then evaluated the accuracy of all responses.

Critically, the researchers did not pre-process or clean the data before feeding it to the models. The AI operated under exactly the same informational constraints as the human doctors — no special preparation, no curated inputs.

The Numbers: AI vs. Attending Physicians

| Diagnostic Stage | OpenAI o1 (Exact/Close Match) | Physician A | Physician B |

|---|---|---|---|

| Initial ER Triage | 67% | 55% | 50% |

| Overall Performance | At or above physicians | Baseline | Baseline |

| Data Type Used | Text-based EMR only | Full clinical context | Full clinical context |

At every diagnostic touchpoint studied, OpenAI’s o1 model performed at least as well as — and often better than — both human physicians. The gap was widest at the initial triage stage, where time pressure and limited patient information are most acute.

What Is AI Medical Diagnosis?

Definition: AI medical diagnosis refers to the use of artificial intelligence systems — particularly large language models (LLMs) and machine learning algorithms — to analyze patient data and generate differential diagnoses, treatment suggestions, or clinical assessments.

Expansion: Unlike traditional clinical decision support tools that flag specific drug interactions or alert for abnormal lab values, modern LLM-based AI medical diagnosis systems can reason across complex, multi-variable patient presentations in natural language. They ingest clinical notes, symptom histories, lab results, and vital signs — the same text-based content that clinicians document in electronic health records — and produce ranked lists of possible diagnoses with supporting reasoning.

The Harvard study represents a pivotal step in validating this capability in real-world conditions rather than controlled benchmarks.

Why AI Outperformed Doctors at Triage

The Triage Advantage: Less Data, Better Reasoning?

The study’s most striking finding was not just that AI performed well overall — it is that the advantage was sharpest at the moment of least information. At initial triage, clinicians have only a brief patient history, presenting complaint, and basic vitals to work with. This is precisely where cognitive load is highest and diagnostic error rates climb.

Lead researcher Arjun Manrai, who heads an AI lab at Harvard Medical School, noted that the AI model “eclipsed both prior models and our physician baselines” across virtually every benchmark tested. The o1 model’s extended reasoning capabilities — designed to work through problems methodically before producing an answer — may be particularly suited to incomplete, ambiguous clinical scenarios.

There is also a structural explanation. Large language models trained on vast biomedical literature can pattern-match across thousands of disease presentations simultaneously, without the fatigue, cognitive bias, or anchoring effects that affect human clinicians after hours on a busy ER floor.

AI vs. Doctors in the Emergency Room: A Comparison

Understanding where AI medical diagnosis adds value — and where it falls short — requires comparing the two approaches across the dimensions that matter most in clinical settings.

| Dimension | AI (LLM-based) | Human ER Physician |

|---|---|---|

| Diagnostic Accuracy (triage) | ~67% exact/close match | 50–55% exact/close match |

| Speed | Near-instantaneous | Variable (minutes to hours) |

| Data Input | Text-based records only | All senses + clinical intuition |

| Fatigue | None | Significant after long shifts |

| Emotional Intelligence | Limited | High |

| Accountability | No formal framework exists | Legally and ethically defined |

| Non-text Reasoning | Currently limited | Strong (imaging, physical exam) |

| Patient Trust | Early-stage | Established |

This comparison illustrates the core insight from the study: AI medical diagnosis is not a wholesale replacement for physician judgment. It is, right now, a potentially powerful reasoning complement — especially in the narrow but critical window of initial patient assessment.

What This Means — and What It Doesn’t

Is AI Ready to Replace Emergency Room Doctors?

No. The researchers themselves were explicit on this point. The study concludes that its findings demonstrate “an urgent need for prospective trials to evaluate these technologies in real-world patient care settings.” That is scientific language for: these results are promising, but we are not done yet.

Several key limitations apply:

- Text only: The models were tested exclusively on text-based clinical data. They could not interpret imaging, auscultate a chest, observe a patient’s affect, or perform a physical examination. Existing research suggests that current AI systems are considerably weaker when reasoning over non-text inputs.

- Small sample: Seventy-six patients is a meaningful pilot, but it is not the scale needed to draw population-level conclusions or to justify clinical deployment.

- No intervention: The study measured diagnostic accuracy, not patient outcomes. Whether acting on an AI’s suggested diagnosis would lead to better survival rates, shorter hospital stays, or fewer complications remains untested.

AI medical diagnosis, at this stage, should be understood as a research breakthrough — not a clinical protocol.

The Accountability Gap

Perhaps the most important non-medical finding from the study is a legal and ethical one. Adam Rodman, a Beth Israel physician and co-lead author, acknowledged to the Guardian that there is currently “no formal framework for accountability” around AI-generated diagnoses. Patients, he noted, still want humans to guide them through life-or-death decisions.

This accountability gap is not a minor footnote. Healthcare operates within a dense web of regulatory, institutional, and ethical structures. Before AI medical diagnosis can move from research to routine clinical practice, medicine will need to answer hard questions: Who is liable when an AI is wrong? How is informed consent managed when an algorithm contributes to a treatment plan? What audit trails are required?

These questions do not diminish the study’s findings — they frame the work that still needs to happen.

The Road Ahead: From Lab Study to Clinical Reality

The Harvard study lands at an inflection point. AI capabilities in clinical reasoning have clearly advanced beyond what most medical institutions have had time to process. The findings are prompting researchers and policymakers alike to reconsider how AI tools should be evaluated, regulated, and integrated.

Several likely near-term developments follow from this research:

- Prospective clinical trials will be the immediate next step. Retrospective studies like this one analyze past cases; prospective trials embed AI tools in live clinical workflows and measure real outcomes over time.

- Regulatory clarification from bodies like the FDA will be necessary to define the legal status of AI-assisted diagnosis — whether it constitutes a medical device, a decision-support tool, or something entirely new.

- Multimodal expansion is the obvious capability gap to close. Combining LLM-based reasoning with AI-powered imaging analysis (already advanced in radiology) could address the text-only limitation identified in the Harvard study.

- Hybrid clinical models — where AI performs initial diagnostic reasoning and flags cases for physician review — are the most likely near-term deployment pattern. This preserves human accountability while leveraging AI’s pattern-recognition speed.

The study does not answer all these questions. But it significantly raises the stakes for answering them soon.

Key Takeaways

- The Harvard study published in Science (May 2026) found that OpenAI’s o1 model matched or outperformed two attending physicians in diagnosing 76 real emergency room cases — without any pre-processing of clinical data.

- AI medical diagnosis was most accurate at triage, where information is scarce and urgency is highest: o1 achieved a 67% exact or close match rate versus 55% and 50% for human physicians.

- The study used text-based EMR data only. AI performance on non-text inputs (imaging, physical examination findings) remains an open and important question.

- No accountability framework exists for AI-generated clinical diagnoses. Deployment at scale will require legal, regulatory, and ethical infrastructure that does not yet exist.

- The researchers explicitly did not claim AI is ready for clinical deployment. They called for prospective real-world trials as the necessary next step.

- AI medical diagnosis is best understood today as a clinical reasoning augmentation tool — one that may reduce diagnostic error, especially in high-pressure, data-limited environments like the ER triage bay.

- Large language models trained on biomedical literature can pattern-match across thousands of disease presentations simultaneously, without the fatigue or cognitive bias that affects human clinicians in demanding settings.

Frequently Asked Questions

Q: Did the Harvard study prove AI is better than doctors at diagnosing illness?

The study found that OpenAI’s o1 model matched or exceeded two attending physicians in diagnostic accuracy across 76 real emergency room cases, with the largest advantage at initial triage. However, the study was limited to text-based data, involved a relatively small sample, and did not measure patient outcomes — so “better than doctors” is a significant overstatement of what the evidence currently supports.

Q: Which AI model was tested in the Harvard emergency room study?

The study tested two OpenAI models: o1 and GPT-4o. OpenAI’s o1 model consistently performed at or above physician-level accuracy, with the widest gap at the triage stage where it achieved a 67% exact or close match rate.

Q: What are the main limitations of using AI medical diagnosis in real clinical settings?

The primary limitations identified in the study and broader literature include: reliance on text-only inputs (no imaging or physical exam data), absence of a regulatory accountability framework, lack of prospective outcome data, and limited patient and institutional trust. These are solvable problems — but they are not yet solved.

Q: What should hospitals do in response to this study?

The researchers recommend prospective clinical trials as the immediate next step. Hospitals and health systems should begin evaluating how AI reasoning tools can be integrated into clinical workflows in a supervised, accountable way — not deployed wholesale as autonomous decision-makers.

Conclusion

The Harvard study has pushed AI medical diagnosis into a new phase of credibility. For years, discussions around artificial intelligence in healthcare were often dominated by hype, skepticism, or theoretical possibilities. This new evidence changes that conversation. By showing that AI medical diagnosis systems like OpenAI’s o1 could match or outperform attending physicians across real emergency room cases, the study offers one of the clearest signals yet that AI is becoming a serious clinical reasoning tool.

What makes these findings especially important is where the strongest results appeared. The fact that AI medical diagnosis performed best during early triage suggests a practical and immediate opportunity for hospitals. Triage is fast, chaotic, and information-poor—exactly the kind of environment where clinicians are vulnerable to fatigue, bias, and time pressure. In contrast, AI medical diagnosis systems can rapidly evaluate symptom patterns, compare them against vast medical knowledge, and surface likely diagnoses without cognitive exhaustion.

Still, this does not mean hospitals should hand decision-making over to algorithms. The current limitations remain significant. AI medical diagnosis in this study relied entirely on text-based electronic medical records, meaning the system could not perform physical exams, interpret body language, or integrate real-world bedside observations. Human doctors still provide critical judgment, empathy, accountability, and context that no model currently replicates.

The most realistic future is not AI versus doctors, but AI with doctors. In this hybrid model, AI medical diagnosis can function as an intelligent second opinion—catching overlooked conditions, reducing diagnostic error, and helping physicians make faster, better-informed decisions. Rather than replacing clinicians, AI medical diagnosis is more likely to strengthen clinical workflows, especially in high-pressure environments like emergency departments.

The next step is clear: broader prospective trials, stronger regulation, and accountability frameworks. If these are developed responsibly, AI medical diagnosis could become one of the most meaningful healthcare advancements of the decade. The Harvard study does not prove AI is ready to replace doctors, but it does prove something equally important: AI medical diagnosis is no longer an experimental curiosity. It is emerging as a practical force that could reshape modern medicine, improve emergency care, and redefine how hospitals approach diagnosis in the years ahead.