If you’ve ever felt lost when reading about artificial intelligence, you’re not alone — and this guide fixes that. This comprehensive AI terminology reference covers every major term you’ll encounter in 2026, from large language models to hallucinations, written plainly so both beginners and professionals can benefit immediately.

AI Terminology Glossary: Why It Matters in 2026

The AI industry is accelerating faster than most people can keep up with. Researchers coin new phrases, companies release jargon-heavy announcements, and journalists assume baseline fluency. Understanding AI terminology is no longer a nice-to-have skill reserved for engineers — it’s become essential literacy for business leaders, students, marketers, and curious consumers alike.

More practically: AI systems like ChatGPT, Gemini, and Claude are now embedded in tools people use every day. When you know what a “token” or an “inference” actually means, you make better decisions about how to use these tools, how to evaluate their outputs, and how to avoid being misled by them.

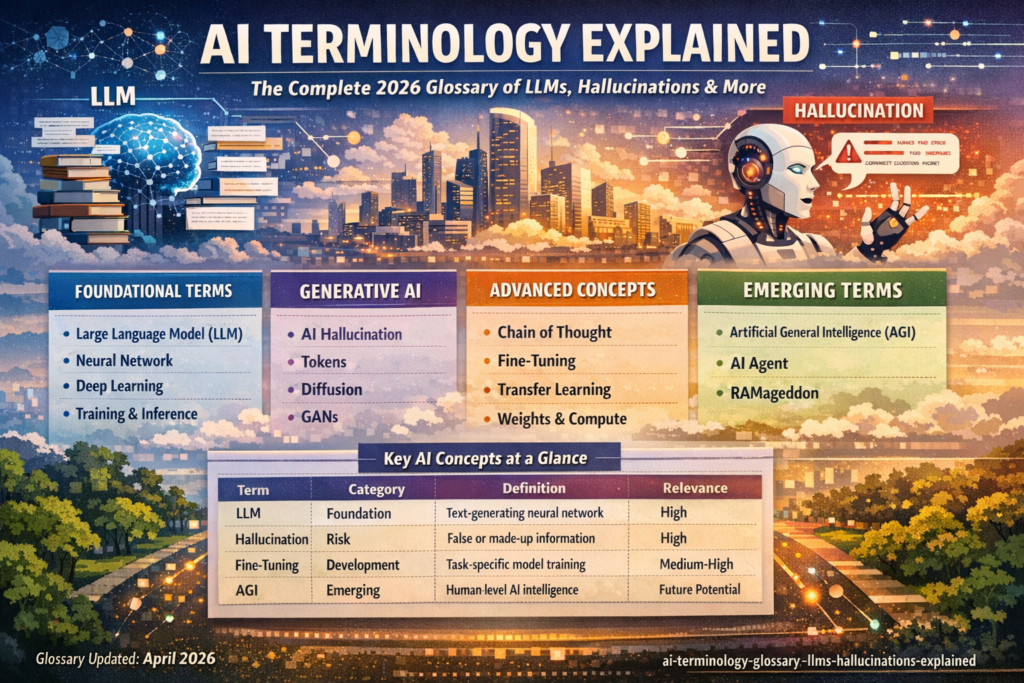

This guide organizes AI terminology into four layers (foundational, generative, advanced, and emerging) so you can navigate it at whatever depth you need.

Foundational AI Terminology: Where It All Starts

These are the bedrock concepts. If you only learn a handful of AI terminology entries, make them these.

Large Language Model (LLM)

What it is: A large language model (LLM) is a deep neural network trained on vast quantities of text — books, articles, code, web pages — that learns to predict and generate language.

Why it matters: LLMs are the engines behind ChatGPT, Claude, Google Gemini, Meta’s Llama models, and Microsoft Copilot. When you type a question into any of these assistants, you’re interacting with an LLM. The model estimates how likely every possible next token (a word or word fragment) is, given everything said before. It then picks one of the likeliest and repeats that process until a complete response is formed.

LLMs are made of billions of numerical parameters called weights that encode relationships between words, phrases, concepts, and context, essentially building a multidimensional map of language itself.
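The generation loop described above can be sketched with a toy stand-in for an LLM. The probability table, vocabulary, and the `generate` function below are hypothetical. A real model computes these probabilities with billions of weights and conditions on the entire context, not only the previous token. Always taking the top token is called greedy decoding. Chat assistants commonly sample instead, which is why the same prompt can produce different answers.

```python
import random

# Hypothetical toy "model": for each previous token, a probability
# distribution over the next token. A real LLM conditions on the whole
# context and computes these probabilities with a neural network.
NEXT_TOKEN_PROBS = {
    "<start>": {"The": 0.9, "A": 0.1},
    "The": {"cat": 0.6, "dog": 0.4},
    "A": {"cat": 0.5, "dog": 0.5},
    "cat": {"sat": 0.7, "ran": 0.3},
    "dog": {"ran": 0.8, "sat": 0.2},
    "sat": {"<end>": 1.0},
    "ran": {"<end>": 1.0},
}

def generate(max_tokens=10, greedy=True, seed=None):
    """Produce tokens one at a time until <end> or max_tokens is reached."""
    rng = random.Random(seed)
    tokens = ["<start>"]
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[tokens[-1]]
        if greedy:
            # Always pick the single most probable next token.
            next_token = max(probs, key=probs.get)
        else:
            # Sample in proportion to probability.
            next_token = rng.choices(list(probs), weights=list(probs.values()))[0]
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return " ".join(tokens[1:])

print(generate())  # greedy: "The cat sat"
print(generate(greedy=False, seed=1))
```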

Neural Network

What it is: A neural network is a multi-layered computational structure designed to loosely mimic the interconnected pathways of neurons in the human brain.

Why it matters: Neural networks are the architectural foundation beneath virtually all modern AI, including LLMs. The concept dates back to the 1940s, but it was the rise of powerful GPU chips (originally developed for video games) that made deep, many-layered networks practical and transformative. Today, neural networks underpin everything from voice recognition to drug discovery to autonomous navigation.
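Here is a minimal sketch of a forward pass through a tiny two-layer network in plain Python, to make “multi-layered computational structure” concrete. The weights are made-up numbers and the layer sizes are arbitrary. The ReLU activation is one common choice, assumed here. Real networks have millions to billions of such weights.

```python
def relu(x):
    """A common activation: pass positive values through, zero out negatives."""
    return max(0.0, x)

def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: each neuron takes a weighted sum of all inputs plus a bias."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(total) if activation else total)
    return outputs

# Made-up weights: 2 inputs -> 3 hidden neurons -> 1 output.
HIDDEN_W = [[0.5, -1.0], [1.5, 0.25], [-0.5, 0.75]]
HIDDEN_B = [0.0, -0.5, 0.25]
OUTPUT_W = [[1.0, -2.0, 0.5]]
OUTPUT_B = [0.1]

def forward(x):
    """Pass the input through the hidden layer, then the output layer."""
    hidden = dense(x, HIDDEN_W, HIDDEN_B, activation=relu)
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]

print(forward([1.0, 2.0]))  # about -2.275
```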

Deep Learning

What it is: Deep learning is a subset of machine learning that uses multi-layered neural networks to find complex patterns in data without requiring human engineers to manually define the features.

Why it matters: Deep learning is what separates modern AI from the rule-based systems of earlier decades. Because the model identifies what’s important in data on its own, it can handle nuance and ambiguity at scale. The tradeoff: deep learning requires enormous volumes of data (often millions of examples) and substantial computing resources, making it expensive to develop.

Training

What it is: Training is the foundational process by which a machine learning model learns. Data is fed into the model so it can detect patterns, adjust its internal parameters (weights), and progressively improve its outputs toward a desired goal.

Why it matters: Before training, an AI model is essentially just a mathematical structure filled with random numbers; training is what gives it useful capabilities. The process can be extraordinarily resource-intensive. Frontier models such as GPT-4 are widely reported to have cost tens of millions of dollars or more in compute to train. This is why training costs, data quality, and energy consumption are central concerns in AI development.
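Gradient descent on a one-input linear model illustrates the idea of starting from random numbers and adjusting weights toward a goal. This is a minimal sketch under simplifying assumptions: the dataset, learning rate, and step count are invented. Real LLM training applies the same principle to billions of weights over enormous text corpora.

```python
import random

# Invented dataset following y = 2x + 1: the "pattern" the model should discover.
DATA = [(x, 2.0 * x + 1.0) for x in [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]]

def train(steps=2000, learning_rate=0.05, seed=0):
    """Fit y = w*x + b by gradient descent on mean squared error."""
    rng = random.Random(seed)
    # Before training, the weights are just random numbers.
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    n = len(DATA)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in DATA) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in DATA) / n
        # Nudge each weight a small step against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train()
print(f"learned w={w:.3f}, b={b:.3f}")  # close to w=2, b=1
```

Each pass measures how the error changes as each weight changes (the gradient). It then moves the weights slightly in the direction that reduces the error. After enough steps, the random starting values settle near the pattern hidden in the data.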

Inference

What it is: Inference is what happens when you actually use a trained AI model. It’s the process of the model generating a response — a prediction, an answer, a piece of text — based on the patterns it learned during training.

Why it matters: Inference happens every time you send a message to an AI chatbot. Training happens once per model version (plus any later fine-tuning), whereas inference runs continuously, for every request from every user. Hardware efficiency during inference is therefore a major engineering challenge, since very large models can be slow or expensive to run without specialized chips.

Generative AI Glossary: The Terms Behind the Tools

Generative AI refers to systems that create new content — text, images, audio, code — rather than just classifying or analyzing existing data. Here are the essential AI terminology entries for this domain.

AI Hallucination

What it is: An AI hallucination occurs when a model generates information that is factually incorrect or entirely fabricated — but presents it with apparent confidence.

Why it matters: Hallucinations are arguably the most consequential problem in modern AI. A model that produces plausible-sounding but false medical advice, legal citations, or news summaries poses real-world risks. Hallucinations arise partly because models are trained to generate statistically likely language, not to verify facts. No training dataset covers every fact. When a model reaches a gap in its knowledge, it does not reliably signal uncertainty and sometimes “fills in” with invented content.

Most AI tools now include disclaimers urging users to verify AI outputs, though these warnings are often far less prominent than the answers themselves. Reducing hallucinations is driving significant investment in domain-specific “vertical” AI models that work within narrower, more verifiable knowledge boundaries.

Tokens

What it is: Tokens are the basic units of data that LLMs process. A token is roughly equivalent to a word or word fragment — for example, “running” might be one token, while “unbelievable” might be split into two or three.

Why it matters: Tokenization is how human language gets translated into something an AI model can compute. When you send a message to an LLM, your words are first converted into tokens; the model then generates output tokens in response. In commercial AI products, tokens are also the unit of pricing — the more tokens your prompts and responses consume, the more you pay.

There are three main token types worth knowing:

- Input tokens: generated from your query or prompt

- Output tokens: generated as the model’s response

- Reasoning tokens: generated internally by reasoning models during complex, multi-step thinking; they are usually hidden from the user but typically billed as output tokens
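The sketch below shows roughly how text becomes tokens and then numbers. It greedily matches the longest piece it knows from a tiny, hypothetical vocabulary and maps each piece to an integer ID. Production tokenizers (for example, byte-pair encoding) learn their vocabularies from data and follow different rules, so real splits for the same word will differ.

```python
# Hypothetical miniature vocabulary. Real tokenizers learn tens of
# thousands of pieces from data (for example with byte-pair encoding).
VOCAB = ["un", "believ", "able", "run", "ning", "the", "cat", " "]
TOKEN_IDS = {piece: i for i, piece in enumerate(VOCAB)}

def tokenize(text):
    """Split text into the longest known vocabulary pieces, scanning left to right."""
    tokens, i = [], 0
    while i < len(text):
        # Try the longest possible piece first, then progressively shorter ones.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in TOKEN_IDS:
                tokens.append(piece)
                i = end
                break
        else:
            # No known piece starts here: fall back to a single-character token.
            tokens.append(text[i])
            i += 1
    return tokens

tokens = tokenize("unbelievable")
print(tokens)                          # ['un', 'believ', 'able']
print([TOKEN_IDS[t] for t in tokens])  # [0, 1, 2]
```

With this vocabulary, “running” splits into two tokens (`run` and `ning`). That shows token boundaries depend entirely on the vocabulary a tokenizer was built with.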

Diffusion

What it is: Diffusion is the underlying technique in many image-, audio-, and video-generating AI systems. It works by progressively adding “noise” to data until the structure is destroyed, then training a model to learn the reverse — reconstructing structured data from noise.

Why it matters: Diffusion models power tools like Stable Diffusion, DALL-E 2 and 3, and Sora. The “reverse diffusion” process is how these tools generate a coherent image from what starts as random noise. This approach has proven remarkably effective for creative generation tasks.
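The forward (noising) half of diffusion has a simple closed form. Given a noise schedule, a noisy sample at step t is a weighted mix of the original data and Gaussian noise. The sketch assumes the linear schedule used in the original DDPM paper (Ho et al., 2020) and a four-number “image”. The `recover` function receives the true noise as an oracle, standing in for the trained network that real systems use to predict noise. Real generators also undo noise gradually over many steps rather than in one jump.

```python
import math
import random

T = 1000
# Linear noise schedule from 1e-4 to 0.02 (the DDPM paper's values; an assumption here).
BETAS = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]

# ALPHA_BAR[t] = product of (1 - beta) up to step t: how much original signal survives.
ALPHA_BAR = []
running = 1.0
for beta in BETAS:
    running *= 1.0 - beta
    ALPHA_BAR.append(running)

def add_noise(x0, t, rng):
    """Forward process: jump straight to noise level t."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    a = ALPHA_BAR[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, noise)]
    return xt, noise

def recover(xt, t, predicted_noise):
    """Remove the noise given a prediction of it. Here the true noise is passed in
    as an oracle; a trained diffusion model would supply the prediction instead."""
    a = ALPHA_BAR[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a) for x, e in zip(xt, predicted_noise)]

rng = random.Random(0)
image = [0.2, -0.5, 0.9, 0.0]  # a tiny made-up "image" of four pixel values
for t in (0, 250, 999):
    print(t, f"signal kept: {math.sqrt(ALPHA_BAR[t]):.3f}")
noisy, true_noise = add_noise(image, 500, rng)
print(recover(noisy, 500, true_noise))  # approximately the original image
```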

Generative Adversarial Network (GAN)

What it is: A GAN is a machine learning framework using two competing neural networks — a generator that creates outputs and a discriminator that evaluates whether those outputs are real or artificial. They train each other through structured competition.

Why it matters: GANs were the dominant generative AI technique before diffusion models rose to prominence. They’re still widely used for producing realistic images and videos, including deepfakes. The adversarial structure allows quality to improve without additional human labeling.
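The generator G and discriminator D compete according to the minimax objective from the original GAN paper (Goodfellow et al., 2014). D tries to push D(x) toward 1 for real data and toward 0 for generated data. G tries to make D(G(z)) as large as possible.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

Here D(x) is the discriminator’s estimated probability that x is real, and G(z) is the generator’s output from random noise z. In practice, the generator is often trained to maximize log D(G(z)) instead, because that gives stronger learning signals early in training.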

Distillation

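What it is: Model distillation is a technique where a smaller “student” model is trained to replicate the behavior of a larger “teacher” model. The student learns to match the teacher’s outputs (often its full probability distribution over possible answers) rather than learning only from raw labeled data.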

Why it matters: Distillation allows companies to create compact, efficient models that approximate the capabilities of much larger ones, often at a fraction of the cost. It is widely believed to be behind several faster model variants (such as GPT-4 Turbo), though vendors rarely confirm their training methods. Importantly, distilling from a competitor’s model without permission typically violates that company’s terms of service.
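One common way to express “matching the teacher’s outputs” follows Hinton et al.’s knowledge distillation. Both models’ scores are softened with a temperature, and KL divergence measures how far the student’s probabilities are from the teacher’s. The scores and temperature below are made up. The code computes only the loss; a real setup would update the student’s weights to minimize it.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature spreads them out."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)  # the teacher's "soft labels"
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Made-up scores over three possible answers.
teacher = [4.0, 2.5, 0.5]
close_student = [3.8, 2.4, 0.7]
far_student = [0.5, 1.0, 4.0]

print(distillation_loss(teacher, close_student))  # small
print(distillation_loss(teacher, far_student))    # much larger
```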

Advanced AI Terminology for Practitioners

If you work in AI, tech, or content, this tier of AI terminology shows up constantly in research papers, product announcements, and strategy discussions.

Chain of Thought Reasoning

What it is: Chain of thought (CoT) is a prompting and training technique that encourages an LLM to break a problem into intermediate reasoning steps before arriving at a final answer.

Why it matters: Just as a human might write out an equation on paper rather than trying to solve it mentally, chain of thought reasoning allows a model to “show its work.” This can substantially improve accuracy on complex logic, math, and coding tasks. Modern reasoning models (like OpenAI’s o-series) are specifically optimized for CoT thinking through reinforcement learning. The tradeoff is speed and cost: CoT responses take longer to generate and consume more tokens.

Fine-Tuning

What it is: Fine-tuning is the process of taking a pre-trained model and continuing to train it on a narrower, domain-specific dataset to improve performance for a specific task or industry.

Why it matters: Many commercial AI products built on top of foundation models use fine-tuning to sharpen the model’s utility. A general-purpose LLM fine-tuned on legal documents typically performs better at legal tasks than its base version. Fine-tuning is one of the main ways AI startups differentiate their products without the cost of training a model from scratch.

Transfer Learning

What it is: Transfer learning involves using a model pre-trained on one task as the starting point for training on a different but related task — allowing prior “knowledge” to carry over.

Why it matters: Transfer learning is what makes fine-tuning possible. Rather than starting from random weights, developers inherit the general capabilities of a powerful base model and adapt it efficiently. The limitation: if the source domain and target domain differ too much, transferred knowledge may be more hindrance than help.
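Here is a toy illustration of inheriting knowledge. The same gradient-descent loop runs twice on a new, related task. One run starts from weights assumed to come from an earlier task; the other starts from an arbitrary point standing in for random initialization. The linear model, data, and thresholds are illustrative assumptions. Real fine-tuning adapts large pre-trained networks, but the head start from inherited weights is the same basic idea.

```python
def mse(w, b, data):
    """Mean squared error of the line y = w*x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, learning_rate=0.05, tolerance=1e-4, max_steps=10_000):
    """Gradient descent from a given start; returns final weights and steps used."""
    n = len(data)
    for step in range(max_steps):
        if mse(w, b, data) < tolerance:
            return w, b, step
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b, max_steps

# New, related task: same slope as an assumed earlier task (y = 2x + 1), shifted intercept.
NEW_TASK = [(x, 2.0 * x + 1.5) for x in [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]]

pretrained = (2.0, 1.0)     # weights inherited from the earlier task (assumed)
from_scratch = (-3.0, 4.0)  # arbitrary start standing in for random initialization

w_t, b_t, steps_transfer = fine_tune(*pretrained, NEW_TASK)
w_s, b_s, steps_scratch = fine_tune(*from_scratch, NEW_TASK)
print(steps_transfer, steps_scratch)  # the pretrained start needs fewer steps
```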

Weights

What it is: Weights are the numerical parameters inside a neural network. Each weight scales the strength of one connection, and together they determine how the network transforms its inputs into its output.

Why it matters: Weights are the “memory” of a trained AI model. They’re what gets saved when you “download” an open model. During training, weights start as random numbers and are adjusted through many rounds of small corrections until the model produces useful, accurate outputs. When people talk about “open weights” AI models, they mean the weights have been publicly released.
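In practice, weights are simply stored numbers. This sketch saves a tiny, hypothetical set of weights to a JSON file, counts them, and loads them back. Real open-weights releases use formats built for large tensors, such as safetensors. The principle of publishing the learned numbers is the same.

```python
import json
import os
import tempfile

# Hypothetical weights for a tiny one-layer model (2 inputs -> 2 outputs).
weights = {
    "layer1.weight": [[0.5, -1.0], [1.5, 0.25]],
    "layer1.bias": [0.0, -0.5],
}

def count_parameters(tensor):
    """Count the individual numbers in a (possibly nested) list."""
    if isinstance(tensor, list):
        return sum(count_parameters(t) for t in tensor)
    return 1

print(sum(count_parameters(t) for t in weights.values()))  # 6

# "Releasing weights" means publishing a file like this; anyone can load it back.
with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, "tiny_model_weights.json")
    with open(path, "w") as f:
        json.dump(weights, f)
    with open(path) as f:
        loaded = json.load(f)

print(loaded == weights)  # True
```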

Compute

What it is: Compute refers to the computational power — hardware resources like GPUs, TPUs, and CPUs — required to train and run AI models.

Why it matters: Compute is the fuel of the AI industry. Access to sufficient compute has become a geopolitical and strategic issue, with governments imposing chip export restrictions and companies spending billions on data center buildouts. Without compute, no model trains; without inference hardware, no product ships.

AI Terminology Comparison Table: Key Concepts at a Glance

| Term | Category | One-Line Definition | Beginner Relevance |
|---|---|---|---|
| LLM | Foundation | Neural network trained on text to generate language | High: powers modern AI chatbots |
| Hallucination | Risk | Model generating false information confidently | High: critical for safe AI use |
| Token | Mechanics | Basic unit of data processed by an LLM | Medium: affects cost and context limits |
| Training | Foundation | Process of teaching a model via data exposure | Medium: explains where model capabilities come from |
| Inference | Foundation | Running a trained model to generate outputs | Medium: what happens when you use AI |
| Fine-tuning | Development | Further training on specialized data | Medium-High: drives domain-specific AI |
| Chain of Thought | Reasoning | Breaking problems into steps for better accuracy | High: key to newer reasoning models |
| Diffusion | Generative | Noise-to-structure technique for image/video AI | Medium: underlies most image AI tools |
| GAN | Generative | Two competing networks that improve each other | Medium: still used for deepfakes, media |
| Distillation | Efficiency | Compressing large model knowledge into a smaller one | Low-Medium: explains model variants |
| Weights | Mechanics | Numerical parameters encoding model knowledge | Medium: what “open weights” means |
| Compute | Infrastructure | Hardware power for training and running models | High: explains AI cost and access issues |
| Transfer Learning | Development | Reusing pre-trained model knowledge for new tasks | Medium: explains fine-tuning foundations |

Emerging AI Terminology in 2026

The AI field evolves fast. Here are the newer AI terminology entries gaining traction this year.

Artificial General Intelligence (AGI)

What it is: AGI refers to AI that matches or exceeds average human capability across a broad range of cognitive tasks — not just specific ones.

What’s contested: There is no consensus definition. OpenAI defines AGI as systems that “outperform humans at most economically valuable work.” Google DeepMind frames it as AI “at least as capable as humans at most cognitive tasks.” Even leading AI researchers disagree on whether AGI has been achieved, is imminent, or remains decades away. The term carries enormous strategic and regulatory weight despite — or perhaps because of — its ambiguity.

AI Agent

What it is: An AI agent is a system that uses AI to autonomously perform multi-step tasks on your behalf — filing expenses, booking appointments, writing and running code, managing workflows — going far beyond what a simple chatbot can do.

Why it matters: AI agents represent the next frontier of practical AI deployment. Rather than answering a single question, agents plan, act, and adapt across a sequence of steps. Infrastructure to support reliable, safe AI agents is still actively being built.
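The plan, act, and adapt cycle can be sketched as a loop. It asks a planner for the next action, runs a tool, and records the result. Everything here is hypothetical: the tools are stubs, and `plan_next_step` is a hard-coded rule standing in for the LLM a real agent would consult. This is not the API of any particular agent framework.

```python
# Hypothetical tools an agent might be allowed to call.
def check_calendar(day):
    busy = {"Monday": ["10:00"], "Tuesday": []}
    return busy.get(day, [])

def book_appointment(day, time):
    return f"Booked {day} at {time}"

TOOLS = {"check_calendar": check_calendar, "book_appointment": book_appointment}

def plan_next_step(goal, history):
    """Stand-in for an LLM planner: choose the next action from the goal and history.
    Returns (tool_name, arguments), or None when there is nothing more to do."""
    if not history:
        return ("check_calendar", {"day": goal["day"]})
    last_tool, _, last_result = history[-1]
    if last_tool == "check_calendar":
        if goal["time"] in last_result:
            return None  # slot taken; a real agent might try another slot or ask the user
        return ("book_appointment", {"day": goal["day"], "time": goal["time"]})
    return None  # booking done

def run_agent(goal, max_steps=5):
    """Loop: plan, act with a tool, observe the result, repeat."""
    history = []
    for _ in range(max_steps):
        action = plan_next_step(goal, history)
        if action is None:
            break
        tool_name, args = action
        result = TOOLS[tool_name](**args)          # act
        history.append((tool_name, args, result))  # observe
    return history

for step in run_agent({"day": "Tuesday", "time": "14:00"}):
    print(step)
```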

RAMageddon

What it is: RAMageddon is an industry term for the severe global shortage of RAM (random access memory) chips caused partly by AI companies buying up memory at massive scale for their data centers.

Why it matters: The RAM shortage has cascading effects: higher prices for gaming consoles, declining smartphone shipments, and constrained enterprise computing. It’s a vivid example of how AI infrastructure demand reshapes the broader technology supply chain.

How to Use This AI Terminology in Practice

Knowing the vocabulary matters, but applying it confidently is the real goal. Here are practical ways to put this AI terminology to work:

- When evaluating AI tools: Ask whether a product uses fine-tuning or just prompting; models fine-tuned for your domain are often more accurate.

- When assessing AI outputs: Remember hallucinations are structural, not random bugs. Always verify AI-generated facts in high-stakes situations.

- When reading AI news: “New reasoning model released” = chain of thought optimization. “Open weights model” = the model’s parameters are publicly downloadable. “Token limits increased” = the model can handle longer documents.

- When managing AI costs: Token usage drives pricing for most API-based AI tools. Shorter, more precise prompts reduce costs; verbose prompts with vague instructions burn tokens inefficiently.

- When hiring or managing AI roles: Familiarity with training vs. inference, fine-tuning, and distillation signals genuine technical fluency versus surface-level familiarity.

Frequently Asked Questions About AI Terminology

What is the difference between an LLM and a chatbot? A large language model (LLM) is the underlying AI model: the trained neural network. A chatbot is the product interface built on top of it. ChatGPT is a chatbot; GPT-4 and its successors are the LLMs it runs on. Claude is Anthropic’s chatbot; models such as Claude Sonnet 4.6 or Claude Opus are the underlying LLMs.

Why do AI models hallucinate? Hallucinations occur because LLMs generate statistically probable language, not verified facts. When a model encounters a knowledge gap, it doesn’t return a blank — it generates a plausible-sounding continuation. This is a known limitation of how current LLMs are architecturally designed.

What does “open weights” mean in AI? An open weights model is one where the trained parameters (weights) have been publicly released, allowing anyone to download and run the model locally. This is narrower than fully open source, which would also include the training code and, ideally, the training data. Having the weights gives you the trained model itself, but not necessarily the means to reproduce how it was built.

How are tokens different from words? Tokens are not identical to words. A single word like “extraordinary” might be split into multiple tokens, while short, common words are often a single token each. On average, 100 tokens correspond to roughly 75 English words, though this varies significantly by language and content type.
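The 100-tokens-to-75-words rule of thumb makes rough budgeting straightforward. The sketch converts word counts to estimated tokens and applies per-million-token prices. The prices are placeholders, not any vendor’s actual rates, though many APIs do charge more for output tokens than for input tokens.

```python
def estimate_tokens(word_count):
    """Rule of thumb for English: 100 tokens per 75 words (varies by language and content)."""
    return round(word_count * 100 / 75)

def estimate_cost(input_words, output_words, input_price_per_million, output_price_per_million):
    """Rough API cost in dollars. Prices are per million tokens and are placeholders."""
    input_tokens = estimate_tokens(input_words)
    output_tokens = estimate_tokens(output_words)
    return (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000

print(estimate_tokens(750))  # 1000
print(estimate_cost(1500, 600, input_price_per_million=3.0, output_price_per_million=15.0))  # 0.018
```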

What’s the difference between fine-tuning and transfer learning? Transfer learning is the broader concept of reusing pre-trained knowledge for new tasks. Fine-tuning is a specific, common method of transfer learning where you continue training a pre-existing model on new, task-specific data. All fine-tuning involves transfer learning, but not all transfer learning involves fine-tuning.

The Bottom Line on AI Terminology

The AI landscape is complex, fast-moving, and full of terms that are sometimes used loosely or inconsistently. But the core AI terminology remains stable: LLMs generate language through pattern recognition; hallucinations are a structural limitation of that approach; tokens are the unit of processing and pricing; training and inference describe building versus using a model; and fine-tuning, distillation, and transfer learning are the tools developers use to make powerful general models practical for specific tasks.

Bookmark this guide. The field will keep producing new AI terminology — RAMageddon won’t be the last novel term — but the foundational vocabulary here gives you the framework to interpret new concepts as they emerge.