DeepSeek V4 is here — and it’s the most cost-efficient frontier AI model ever released. With a Pro version priced at $1.74 per million input tokens versus GPT-5.5 Pro’s $30, the Chinese lab has once again redrawn the economics of large language models overnight.

If you’re building with AI, budgeting for enterprise inference, or simply tracking where the global AI race is headed, DeepSeek V4 is the model you need to understand right now.

What Is DeepSeek V4?

Definition: DeepSeek V4 is a family of open-weight large language models released by Hangzhou-based AI lab DeepSeek on April 24, 2026. It comes in two variants — V4-Pro and V4-Flash — both featuring one million token context windows, MIT licensing, and pricing that significantly undercuts every major Western AI provider.

Expansion: The release arrived just hours after OpenAI launched GPT-5.5, making it one of the most strategically timed drops in AI history. DeepSeek V4 isn’t just a performance update — it’s an architectural rethink of how large models can scale efficiently, even under U.S. chip export restrictions that have limited Chinese labs’ access to high-end Nvidia hardware.

The two models in the DeepSeek V4 family serve very different purposes:

- DeepSeek V4-Pro — 1.6 trillion total parameters, 49 billion active per inference pass. The largest open-source LLM ever released. Designed for complex reasoning, coding agents, and enterprise-scale tasks.

- DeepSeek V4-Flash — 284 billion total parameters, 13 billion active. Optimized for speed and cost. Designed for high-volume, latency-sensitive workloads.

Both are available on Hugging Face under MIT license, meaning they can be run locally for free.

DeepSeek V4 vs GPT-5.5 Pro: The Price Breakdown

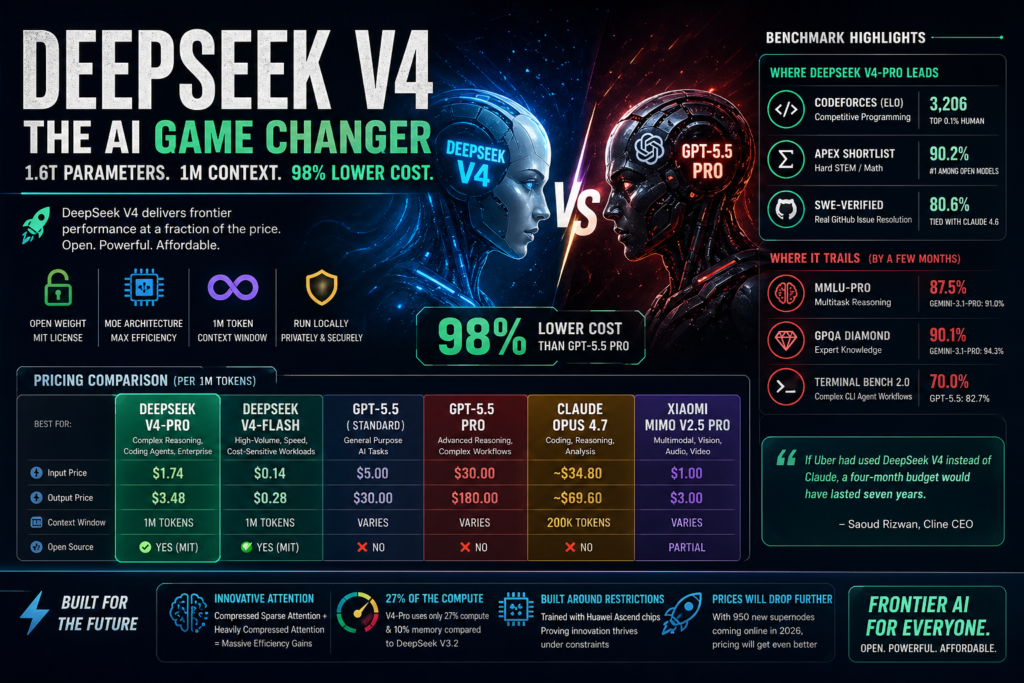

The most headline-grabbing aspect of DeepSeek V4 is its price. The comparison below makes the gap viscerally clear.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window | Open Source |

|---|---|---|---|---|

| DeepSeek V4-Pro | $1.74 | $3.48 | 1M tokens | ✅ Yes (MIT) |

| DeepSeek V4-Flash | $0.14 | $0.28 | 1M tokens | ✅ Yes (MIT) |

| GPT-5.5 (Standard) | $5.00 | $30.00 | Varies | ❌ No |

| GPT-5.5 Pro | $30.00 | $180.00 | Varies | ❌ No |

| Claude Opus 4.7 | ~$34.80 (est.) | ~$69.60 (est.) | 200K tokens | ❌ No |

| Xiaomi MiMo V2.5 Pro | $1.00 | $3.00 | Varies | Partial |

To put those numbers in human terms: Cline CEO Saoud Rizwan calculated that if Uber had used DeepSeek V4 instead of Claude for its AI workloads, a four-month budget would have lasted seven years.

The 98% cost reduction compared to GPT-5.5 Pro isn’t a typo. It’s a fundamental shift in what “frontier AI” means for anyone who actually has to pay for inference at scale.

Under the Hood: How DeepSeek V4 Achieves Efficiency

Mixture-of-Experts Architecture

DeepSeek V4 uses a Mixture-of-Experts (MoE) design, a technique the lab has refined since V3. The concept: instead of activating the entire model for every request, only a relevant “expert” slice of the network wakes up for any given query.

In V4-Pro’s case, that means 1.6 trillion parameters are stored but only 49 billion are activated per inference. The result is a model with the knowledge capacity of a frontier system at a fraction of the compute cost. V4-Flash takes this further — 284 billion total, 13 billion active.

This is what allows DeepSeek V4 to deliver competitive benchmark scores while pricing at a level that would have been considered impossible twelve months ago.

The New Attention Innovations

Standard transformer attention has a brutal scaling problem: every time you double context length, compute costs roughly quadruple. This is why most “long context” models throttle silently after a certain point.

DeepSeek V4 introduces two new attention mechanisms to solve this:

Compressed Sparse Attention works in two stages. First, it compresses groups of tokens — roughly every four tokens — into a single representative entry. Then, a “Lightning Indexer” selects only the most relevant compressed entries for any given query. Instead of attending to a million tokens, the model attends to a small, relevance-filtered subset.

Heavily Compressed Attention is more aggressive: it collapses every 128 tokens into one entry, sacrificing fine-grained detail for an extremely cheap global view of the context. The two attention types alternate across layers, giving the model both granular detail and broad overview simultaneously.

The result: at one million tokens of context, DeepSeek V4-Pro uses only 27% of the compute its predecessor V3.2 needed. KV cache — the memory required to track context — drops to just 10% of V3.2. V4-Flash is even leaner at 10% compute and 7% memory relative to V3.2.

This efficiency is what makes the one-million-token context window a standard feature rather than a premium add-on.

DeepSeek V4 Benchmark Performance: Where It Wins and Where It Trails

One of the most unusual things about DeepSeek V4 is its transparency. Unlike most model releases that cherry-pick winning benchmarks, the DeepSeek technical report openly publishes the gaps.

Where DeepSeek V4-Pro leads or competes:

- Codeforces (competitive programming): V4-Pro scored 3,206 Elo — equivalent to roughly the 23rd-ranked human participant in competitive programming contests.

- Apex Shortlist (hard STEM/math): V4-Pro scored 90.2%, ahead of Claude Opus 4.6 (85.9%) and GPT-5.4 (78.1%).

- SWE-Verified (real GitHub issue resolution): V4-Pro scored 80.6%, matching Claude Opus 4.6.

- GDPval-AA (economic knowledge work — finance, legal, research): V4-Pro-Max ranked first among all open-weight models with 1,554 Elo, compared to Claude Opus 4.6’s 1,619.

Where DeepSeek V4-Pro trails:

- MMLU-Pro (multitask reasoning): Gemini-3.1-Pro hits 91.0%; V4-Pro reaches 87.5%.

- GPQA Diamond (expert knowledge): Gemini-3.1-Pro 94.3% vs. V4-Pro 90.1%.

- Humanity’s Last Exam (graduate-level reasoning): Gemini-3.1-Pro 44.4% vs. V4-Pro 37.7%.

- Terminal Bench 2.0 (complex CLI agent workflows): GPT-5.5 scores 82.7% vs. V4-Pro’s 70.0%.

The honest picture: DeepSeek V4-Pro’s general reasoning lags behind Gemini-3.1-Pro and GPT-5.5 by roughly three to six months. But on coding and agentic tasks — the workloads most developers actually care about — it competes at or near the top of the field, at a price that makes the remaining gaps largely irrelevant.

Why DeepSeek V4 Matters for Developers and Enterprises

For Enterprise: The Math Has Changed

A model that leads open-source benchmarks at $1.74 per million input tokens rewrites the business case for AI-powered workflows. Large-scale document processing, legal review, contract analysis, and code generation pipelines that were expensive six months ago are now dramatically cheaper.

The one-million-token context window — standard on both V4-Pro and V4-Flash — means you can process an entire codebase or regulatory filing in a single request. No chunking, no stitching, no loss of inter-document context.

Being open-source adds another layer of enterprise value: DeepSeek V4 can be fine-tuned for specific domains, deployed on private infrastructure, and customized in ways that closed-source models fundamentally cannot support.

For Developers: V4-Flash Is the One to Watch

At $0.14 input and $0.28 output per million tokens, V4-Flash is cheaper than models that were considered budget options a year ago. DeepSeek has already routed its existing deepseek-chat endpoint to V4-Flash (non-thinking mode) and deepseek-reasoner to V4-Flash (thinking mode), so API users are already running on it.

DeepSeek V4 also runs natively in Claude Code, OpenCode, and other popular AI coding tools. In a developer survey of 85 engineers who used V4-Pro as their primary coding agent, 52% said it was ready to be their default model, and 39% leaned toward yes.

Interleaved Thinking: A Meaningful Agentic Upgrade

DeepSeek V4 introduces a feature called “interleaved thinking” that addresses a meaningful pain point for agentic workflows. In previous models, reasoning context was flushed between tool calls — so in a 20-step agent pipeline, the model would lose its chain of thought at every step and have to rebuild context from scratch.

V4 retains the full reasoning chain across tool calls. For complex automated pipelines involving web search, code execution, and file manipulation in sequence, this is a non-trivial improvement in coherence.

The Geopolitical Angle: Building Around Chip Bans

The United States has restricted high-end Nvidia GPU exports to China since 2022, with the stated goal of slowing Chinese AI development. The DeepSeek V4 story is partly a case study in what happens when that strategy encounters a sufficiently motivated engineering team.

Notably, DeepSeek trained V4 in part on Huawei Ascend chips — domestic Chinese hardware that falls outside U.S. export controls. The architectural innovations in DeepSeek V4, particularly its attention compression techniques, weren’t just research elegance: they were a direct engineering response to having less raw compute than Western competitors.

Once 950 new supernodes come online later in 2026, DeepSeek says V4-Pro’s already-low pricing will drop further.

This mirrors the dynamic that made DeepSeek R1 famous in January 2025, when its release wiped $600 billion from Nvidia’s market cap in a single day by demonstrating that frontier-quality models didn’t require the enormous hardware investments the market had assumed.

Should You Switch to DeepSeek V4?

Q: Is DeepSeek V4 better than GPT-5.5?

Not across the board. GPT-5.5 still leads on complex CLI agent workflows (Terminal Bench 2.0: 82.7% vs. 70.0%) and overall reasoning depth. But on coding, competitive programming, and hard math benchmarks, DeepSeek V4-Pro is competitive or better — at 98% lower cost for the Pro tier.

Q: Is DeepSeek V4 safe for enterprise use?

It’s MIT licensed, which means you can deploy it privately, fine-tune it, and audit it. That’s more transparency than most closed-source alternatives offer. Whether that meets your specific compliance requirements depends on your stack and regulatory context.

Q: What about multimodal capabilities?

DeepSeek V4 is text-only at launch. If your use case requires image, audio, or video understanding, models like Xiaomi MiMo V2.5 Pro or GPT-5.5 currently have an edge. DeepSeek has said multimodal is in development.

Q: When do the old DeepSeek endpoints retire?

The deepseek-chat and deepseek-reasoner endpoints will retire on July 24, 2026. Plan migrations accordingly.

Q: Can I run DeepSeek V4 locally?

Yes. Both V4-Pro and V4-Flash are open-weight and available on Hugging Face. Running V4-Pro locally requires substantial hardware given its 1.6 trillion parameter count; V4-Flash is more practically deployable on enterprise-grade GPU clusters.

The Bigger Picture: What DeepSeek V4 Signals for the AI Industry

DeepSeek V4 doesn’t exist in isolation. April 2026 has been one of the most compressed periods of frontier AI releases on record:

- April 16: Anthropic releases Claude Opus 4.7, strong on coding and reasoning.

- April 22: Xiaomi drops MiMo V2.5 Pro with full multimodal capabilities at $1 input / $3 output per million tokens.

- April 23: OpenAI launches GPT-5.5, topping out at $180 per million output tokens in Pro tier.

- April 24: Tencent releases Hy3, another efficiency-focused open-source MoE model. DeepSeek V4 launches hours later.

The compression of this release cycle — and the fact that Chinese labs are now shipping competitive models faster than most Western labs — signals a structural shift. The idea that frontier AI is exclusively a U.S. domain is no longer supportable.

For buyers of AI services, this is good news. Competition at the frontier almost always drives down prices and accelerates capability improvements. The fact that DeepSeek V4 costs 98% less than GPT-5.5 Pro while matching it on several key benchmarks isn’t a niche finding — it’s a market signal that will pressure pricing across the entire industry.

Conclusion

DeepSeek V4 is the most cost-efficient frontier AI model ever released. Its $1.74 per million input token price point, combined with competitive benchmark performance on coding and reasoning tasks, makes it the default consideration for any developer or enterprise evaluating AI infrastructure costs.

Is it the best model in every category? No. Gemini-3.1-Pro still leads on expert knowledge, and GPT-5.5 wins on complex command-line agentic tasks. But DeepSeek V4 closes those gaps to within months — not years — while pricing at a level that turns the cost math of AI deployment on its head.

Whether you use it directly via API, deploy it locally, or simply use its existence as leverage when negotiating with closed-source providers, DeepSeek V4 has changed the baseline for what “good enough” AI costs in 2026.