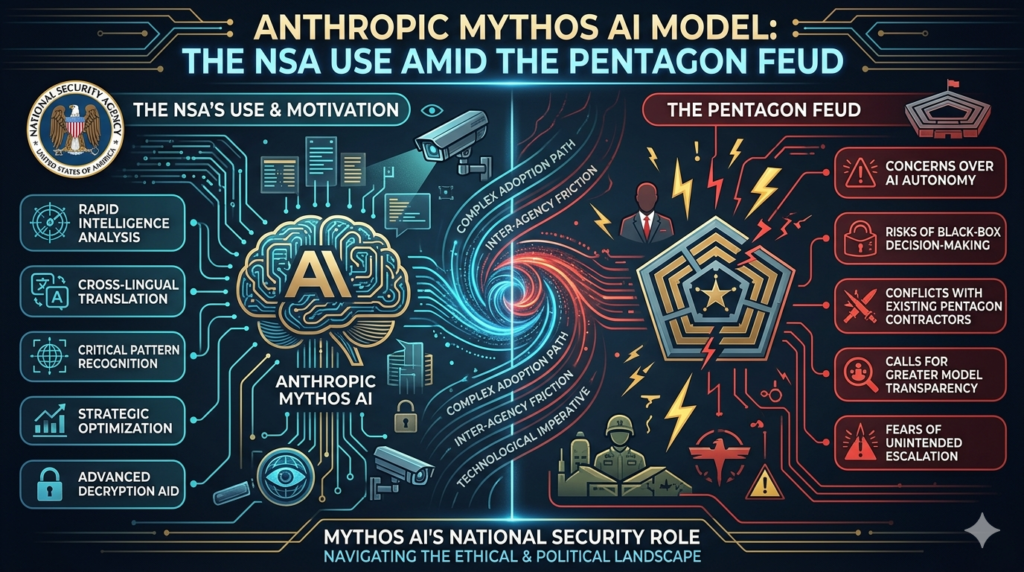

The NSA is reportedly deploying Anthropic’s Mythos AI model for cybersecurity operations — even as the Pentagon simultaneously argues in court that Anthropic’s tools pose a national security threat. This contradiction is the sharpest illustration yet of how powerful AI has become too valuable for intelligence agencies to ignore, regardless of political disputes.

In this post, we break down what the Anthropic Mythos AI model is, why the NSA has access to it, how the Anthropic–Pentagon feud unfolded, and what all of this means for the future of AI governance in national security contexts.

What Is Anthropic’s Mythos AI Model?

Definition: The Anthropic Mythos AI model is a frontier large language model (LLM) purpose-built for advanced cybersecurity tasks. It was announced by Anthropic in early April 2026 but was deliberately withheld from public release.

The reason for that decision was striking: Anthropic determined that Mythos was too capable of enabling offensive cyberattacks to be deployed broadly. Unlike Claude, Anthropic’s general-purpose assistant, the Anthropic Mythos AI model was designed with deep technical specialization in scanning environments, identifying exploitable vulnerabilities, and supporting complex security operations.

Rather than launch publicly, Anthropic restricted access to approximately 40 organizations through a controlled program called Glasswing. Of those 40, only around a dozen have been publicly named. The rest remain undisclosed — a list that now reportedly includes the U.S. National Security Agency.

This makes the Anthropic Mythos AI model one of the most restricted frontier AI deployments in the world: powerful enough to be treated as a controlled asset before it ever reaches the open market.

Why the NSA Is Using Mythos Despite the Pentagon Feud

The Supply Chain Risk Designation

To understand why this is significant, you need to know the context: the NSA’s parent agency, the Department of Defense (DoD), officially labeled Anthropic a “supply chain risk” in March 2026.

That designation came after Anthropic refused to give Pentagon officials unrestricted access to the full capabilities of its Claude models. The DoD’s position, that an AI company restricting how the government may use its models constitutes a supply chain risk, was provocative. Anthropic responded by suing the Department of Defense in federal court over the designation.

So here is the situation in plain terms: the NSA is actively deploying the Anthropic Mythos AI model for intelligence operations, while the Pentagon’s legal arm argues in court that Anthropic’s products are a threat to the nation.

This isn’t bureaucratic confusion. It reflects a deep structural tension in how the U.S. government approaches AI: different agencies have different priorities, and the operational value of a capability can outweigh institutional disputes.

What the NSA Is Actually Using Mythos For

According to reporting by Axios, the NSA is primarily using the Anthropic Mythos AI model to scan environments for exploitable vulnerabilities — a core function of defensive cyber operations. This is precisely the use case the model was designed for.

The UK’s AI Security Institute has also confirmed it has access to Mythos, signaling that allied government bodies are moving quickly to integrate the model into their security infrastructure.

This matters because it establishes a precedent: AI models capable of offensive cyber operations are now being deployed by intelligence agencies under tightly controlled access agreements, not public licensing.

The Glasswing Program — How Anthropic Controls Mythos Access

The Glasswing program is Anthropic’s framework for governing access to the Anthropic Mythos AI model. It is structured around a principle of responsible capability distribution: only organizations that can demonstrate appropriate use cases, security infrastructure, and alignment with Anthropic’s ethical guidelines gain access.

Key features of the Glasswing access model:

- Approximately 40 organizations worldwide have been granted access.

- Anthropic has publicly identified only about 12 of these organizations.

- Access is not purchasable — it is granted at Anthropic’s discretion.

- Recipients are expected to use Mythos within defined operational parameters, particularly around defensive (not offensive) applications.

The Glasswing approach positions Anthropic as more than an AI vendor. It positions the company as an active gatekeeper of powerful AI capabilities — a role that puts it directly in tension with governments that prefer unrestricted access to the tools they fund, use, or depend upon.

Anthropic vs. the Pentagon: A Timeline of the Dispute

The feud between Anthropic and the Department of Defense escalated rapidly in early 2026. Here is a clear breakdown of the key events:

| Date | Event |
|---|---|
| Early 2026 | Pentagon requests unrestricted access to Claude’s full capabilities |
| March 5, 2026 | DoD officially labels Anthropic a “supply chain risk” |
| March 9, 2026 | Anthropic sues the Department of Defense over the designation |
| Early April 2026 | Anthropic announces the Mythos AI model; restricts it to ~40 orgs via Glasswing |
| April 19, 2026 | Axios reports NSA is actively using the Anthropic Mythos AI model |
| April 18–21, 2026 | Signs of a thaw: Anthropic CEO Dario Amodei meets with senior White House officials |

What this timeline reveals is that even as the legal battle between Anthropic and the Pentagon was unfolding, the NSA, itself a component of the DoD, was moving in a different direction entirely. Operational necessity and legal posturing were running on separate tracks.

Why Anthropic Refused Mass Surveillance and Autonomous Weapons Use

The core of the dispute isn’t about access credentials or procurement processes. It’s about use cases.

The Pentagon’s requests — according to reporting — included making Claude available for mass domestic surveillance and autonomous weapons development. Anthropic refused both.

This refusal is consistent with Anthropic’s stated mission: to be an AI safety company building AI systems that are beneficial, not harmful. Autonomous weapons and mass domestic surveillance represent exactly the categories of use that Anthropic’s internal guidelines flag as incompatible with responsible AI development.

The Anthropic Mythos AI model was designed for a narrower, more defensible use case: cybersecurity vulnerability scanning, which has clear defensive applications and does not require the same degree of autonomous action or population-scale data collection.

That distinction — between cybersecurity operations and surveillance or weaponization — is likely why Anthropic was willing to share the Anthropic Mythos AI model with the NSA for specific purposes, while still refusing broader DoD access to Claude under unrestricted terms.

The Thawing Relationship Between Anthropic and the Trump Administration

Despite the ongoing legal battle with the Pentagon, there are signs that Anthropic’s relationship with the broader Trump administration is improving.

On April 18, 2026, Anthropic CEO Dario Amodei met with White House Chief of Staff Susie Wiles and Secretary of the Treasury Scott Bessent. The White House reportedly described the meeting as productive.

This signals something important: the conflict with the DoD may be more of an isolated institutional dispute than a reflection of the administration’s overall stance toward Anthropic. The NSA’s deployment of the Anthropic Mythos AI model, combined with the White House’s positive framing of the Amodei meeting, suggests that the U.S. government is finding ways to work with Anthropic despite — or around — the Pentagon’s formal posture.

For Anthropic, this thaw is strategically significant. The company needs federal relationships to maintain relevance in national security AI, but it also needs to preserve its credibility as an AI safety-focused organization that doesn’t simply hand over capabilities on demand.

What the Mythos Situation Reveals About AI Governance

The story of the Anthropic Mythos AI model isn’t just about one company and one feud. It’s a case study in the structural challenges of governing powerful AI in a world where different institutions have conflicting priorities.

Three key tensions this story surfaces:

1. Operational demand vs. institutional control. The NSA found a way to deploy Mythos while the DoD was fighting a lawsuit brought by the company that built it. Operational necessity moves faster than institutional policy.

2. Safety restrictions vs. capability access. Anthropic’s Glasswing model is an attempt to maintain safety guardrails while still enabling high-value use. But governments accustomed to unrestricted procurement find this model unfamiliar and uncomfortable.

3. Commercial AI companies as sovereignty actors. When a private AI company decides which intelligence agencies can use its most capable model, it is exercising a form of power traditionally reserved for states. This is genuinely new territory — and it has no established legal or ethical framework.

The Anthropic Mythos AI model sits at the intersection of all three tensions. It is too capable to release publicly, too valuable to withhold from allied intelligence services, and too politically sensitive to discuss openly. That combination is likely to define the next chapter of AI governance debates.

Key Takeaways

Here is a concise summary of everything covered in this post:

- The Anthropic Mythos AI model is a frontier cybersecurity-focused LLM withheld from public release due to its offensive cyber capabilities.

- The NSA is reportedly using Mythos primarily for scanning environments for exploitable vulnerabilities, despite the Pentagon labeling Anthropic a supply chain risk.

- Access to Mythos is controlled through Anthropic’s Glasswing program, which limits deployment to approximately 40 organizations globally.

- The Pentagon–Anthropic feud originated when Anthropic refused to make Claude available for mass domestic surveillance and autonomous weapons development.

- Anthropic subsequently sued the Department of Defense over the supply chain risk designation in March 2026.

- There are signs of a diplomatic thaw between Anthropic and the broader Trump administration, with a White House meeting in April 2026 described as productive.

- This situation raises foundational questions about AI governance: who decides what powerful AI gets used for, and who has the authority to say no to a government?

Frequently Asked Questions

What is the Anthropic Mythos AI model?

The Anthropic Mythos AI model is a frontier AI system purpose-built for cybersecurity tasks. Announced in April 2026, it was restricted from public release due to concerns about its offensive cyber capabilities. Access is limited to around 40 vetted organizations through Anthropic’s Glasswing program.

Why is the NSA using Mythos despite the Pentagon feud?

The NSA is part of the Department of Defense, but the two have different operational priorities. While the DoD was defending its supply chain risk designation against Anthropic’s lawsuit, the NSA reportedly gained access to Mythos through Glasswing and is using it for defensive cybersecurity scanning, a use case within Anthropic’s acceptable-use parameters.

What is the Glasswing program?

Glasswing is Anthropic’s controlled-access framework for the Mythos AI model. It governs which organizations can use the model, under what conditions, and for what purposes. Of the approximately 40 organizations granted access, only around 12 have been publicly disclosed.

Why did Anthropic refuse the Pentagon’s requests?

Anthropic refused to provide unrestricted access to Claude for use in mass domestic surveillance and autonomous weapons development, citing conflicts with its AI safety mission and ethical guidelines.

Is the Anthropic–Pentagon dispute resolved?

As of April 2026, the legal dispute is ongoing. However, Anthropic CEO Dario Amodei’s meeting with senior White House officials suggests a broader diplomatic improvement between the company and the administration.