Open-source AI just crossed a line it had never quite reached before. DeepSeek V4 Pro delivers near-frontier performance on coding, math, and agentic tasks at a price point that undercuts every major closed-source competitor by a factor of 7 or more — and it does it with a genuinely new architecture, not just a scaled-up rehash of V3.

If you’re evaluating large language models for production use in 2026, this guide covers everything you need to know: what DeepSeek V4 Pro is, how it works under the hood, what the benchmarks actually reveal, how it compares to GPT-5.4 and Claude Opus 4.6, and whether it belongs in your stack.

What Is DeepSeek V4 Pro?

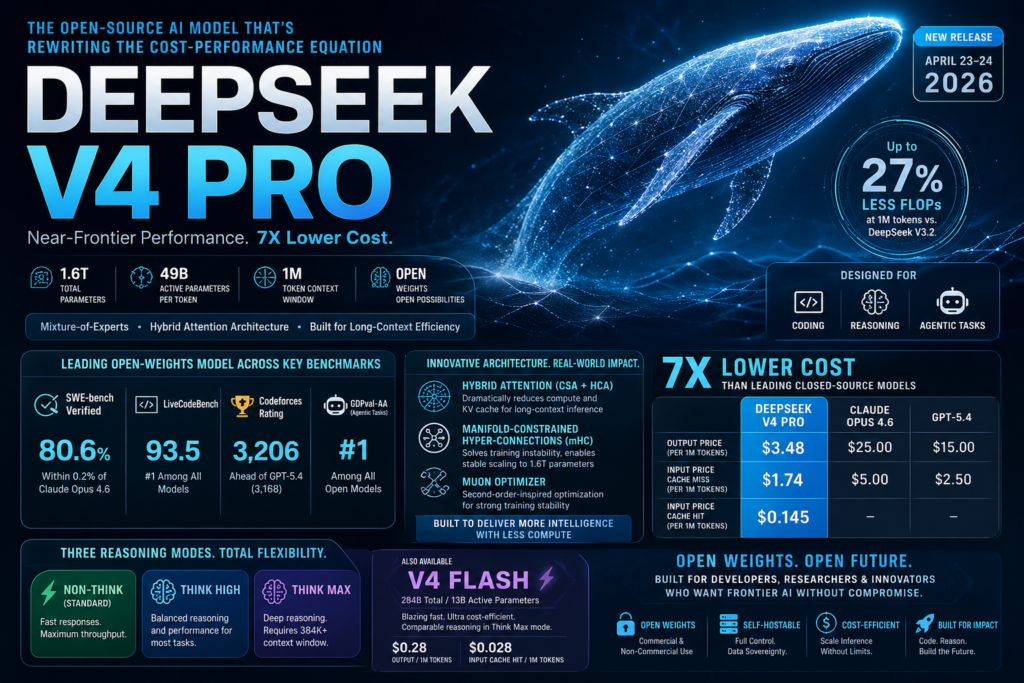

DeepSeek V4 Pro is a 1.6 trillion parameter, open-weights large language model released on April 23–24, 2026 by Chinese AI lab DeepSeek. It is the first entirely new architecture the lab has shipped since DeepSeek V3, and it arrives alongside a smaller companion model, DeepSeek V4 Flash.

Quick Definition: DeepSeek V4 Pro is a Mixture-of-Experts (MoE) language model with 1.6 trillion total parameters and 49 billion activated per token. It supports a 1 million token context window by default and is available under an open-weights license for both commercial and non-commercial use.

The release was delayed nearly four months from its originally anticipated launch. When it finally dropped on Hugging Face, it immediately claimed the #2 spot on the Artificial Analysis Intelligence Index for open-weights reasoning models, trailing only Kimi K2.6. That context matters: DeepSeek V4 Pro is not a marginal improvement. It is a structural rethink of how a frontier-scale model is built and run.

Why This Release Is Different

Previous DeepSeek releases — V3, R1, R1-0528 — were influential primarily because of their pricing and accessibility. V4 Pro adds something new: a ground-up architectural redesign that fundamentally changes the inference cost profile, especially at long context. DeepSeek claims V4 Pro trails state-of-the-art closed models by only 3 to 6 months in capability, while costing a fraction of the price. Based on the benchmark data now available, that claim holds up more than most would have expected.

Architecture Deep Dive: What Makes DeepSeek V4 Pro Tick

Understanding DeepSeek V4 Pro’s design is the key to understanding why its performance-per-dollar ratio is so unusual. Three innovations stand out.

Mixture-of-Experts with Hybrid Attention

At its core, DeepSeek V4 Pro is a Mixture-of-Experts model. MoE architectures activate only a subset of parameters per token rather than running the full network, which is why V4 Pro can have 1.6 trillion total parameters while only using 49 billion of them per token during inference. This keeps compute costs manageable at a scale that would otherwise be prohibitive.

The genuinely new element is the Hybrid Attention Architecture, which combines two complementary mechanisms:

- Compressed Sparse Attention (CSA): Reduces the number of attention operations by selectively attending to relevant tokens, dramatically lowering the compute cost for long sequences.

- Heavily Compressed Attention (HCA): Compresses the KV cache at the representation level, reducing memory bandwidth requirements.

The combined effect is striking: at a 1 million token context window, DeepSeek V4 Pro requires only 27% of single-token inference FLOPs and 10% of the KV cache compared to DeepSeek V3.2. That is not a marginal improvement. Long-context inference — historically one of the most expensive operations in production LLM deployments — becomes dramatically cheaper with this architecture.

Manifold-Constrained Hyper-Connections and the Muon Optimizer

Two additional innovations solve problems that typically appear when scaling MoE models to this parameter count.

Manifold-Constrained Hyper-Connections (mHC) address a training instability discovered during DeepSeek’s own earlier 27B experiments: unconstrained Hyper-Connections caused signal amplification of around 3,000x, crashing training entirely. The mHC variant constrains the residual connections to a geometric manifold, reducing that amplification factor to just 1.6x — enabling stable training at 1.6 trillion parameters.

The Muon optimizer (a second-order-inspired training algorithm) handles overall training stability and gradient flow, complementing mHC to make V4 Pro’s training process tractable at this scale.

Three Reasoning Modes

Both DeepSeek V4 Pro and its smaller sibling V4 Flash ship with three selectable reasoning modes:

- Non-Think (Standard): Fast, direct answers. No internal chain-of-thought. Best for simple queries and high-throughput applications.

- Think High: Enables logical analysis and structured reasoning. Appropriate for most professional use cases.

- Think Max: Full reasoning extent. DeepSeek recommends a minimum context window of 384K tokens in this mode to allow the model to fully develop its chain of thought.

The existence of these modes matters for deployment. You are not locked into a single inference profile; you can tune cost and latency to match the complexity of each task.

Benchmark Performance: What the Numbers Actually Show

Coding Benchmarks

This is where DeepSeek V4 Pro makes its strongest case.

- SWE-bench Verified: 80.6% — within 0.2 percentage points of Claude Opus 4.6, which scores at the frontier of closed-source models.

- LiveCodeBench: 93.5 — ahead of Gemini (91.7) and Claude (88.8), making V4 Pro the top-ranked model on this benchmark as of release.

- Codeforces Rating: 3,206 — ahead of GPT-5.4 (3,168) and Gemini (3,052). Codeforces is a real-world competitive programming measure, not a curated test suite, which makes this result particularly meaningful.

Math and STEM Benchmarks

DeepSeek V4 Pro leads all current open-weights models in math and STEM categories, rivaling top closed-source models. The technical report positions it as “world-class” in reasoning across mathematical and scientific domains, though specific MATH-500 and GPQA scores were not fully published at launch.

Agentic Tasks

DeepSeek V4 Pro leads open-weights models on GDPval-AA, Artificial Analysis’s agentic real-world work tasks benchmark. This benchmark is particularly relevant for teams building autonomous agent pipelines, as it measures performance on tasks that require multi-step planning, tool use, and error recovery — not just single-turn question answering.

On world knowledge, V4 Pro leads all current open models and trails only Gemini-3.1-Pro among all models evaluated.

DeepSeek V4 Pro vs. The Competition: A Direct Comparison

The table below compares DeepSeek V4 Pro against the primary frontier closed-source models across the metrics that matter most for production decision-making.

| Metric | DeepSeek V4 Pro | Claude Opus 4.6 | GPT-5.4 |

|---|---|---|---|

| Total Parameters | 1.6T (MoE) | Undisclosed | Undisclosed |

| Active Parameters | 49B | — | — |

| Context Window | 1M tokens | 200K tokens | 128K tokens |

| SWE-bench Verified | 80.6% | ~80.8% | ~78% |

| LiveCodeBench | 93.5 | 88.8 | ~90.2 |

| Codeforces Rating | 3,206 | — | 3,168 |

| Output Price (per 1M tokens) | $3.48 | $25.00 | $15.00 |

| Input Price — cache miss | $1.74 | $5.00 | $2.50 |

| Input Price — cache hit | $0.145 | — | — |

| Open Weights | Yes | No | No |

| Reasoning Modes | 3 (Standard / High / Max) | Standard only | Standard only |

Two numbers define the competitive position of DeepSeek V4 Pro: $3.48 per million output tokens versus Claude Opus 4.6 at $25.00. That is a 7x price gap at near-identical performance on the benchmark that most closely approximates real-world coding work (SWE-bench). For engineering teams running high-volume inference, that difference is not a rounding error — it is the difference between a project being economically viable and not.

Pricing and Cost Efficiency: The Real Story

DeepSeek V4 Pro’s pricing structure rewards high-cache workflows in a way that most API pricing models do not.

Here is what the pricing tiers look like in practice:

- Cache hit input: $0.145 per million tokens — approaching zero for workflows with stable, repeated system prompts.

- Cache miss input: $1.74 per million tokens.

- Output: $3.48 per million tokens.

The companion model, V4 Flash, goes even lower: $0.028 per million input tokens (cache hit) and $0.28 per million output tokens. V4 Flash is designed for tasks where speed and cost matter more than maximum capability, and it achieves comparable reasoning performance to V4 Pro in Think Max mode — though it falls slightly behind on knowledge-intensive tasks and the most complex agentic workflows.

For teams running agent pipelines with repeated system prompts, the effective cost of DeepSeek V4 Pro input tokens can approach zero on cache hits. This makes it an unusually strong candidate for production deployments where the same instruction set is sent thousands of times per day.

Who Should Use DeepSeek V4 Pro?

DeepSeek V4 Pro is not the right tool for every use case, but it is a strong fit for a well-defined set of them.

Best fits:

- Software engineering teams running automated code review, test generation, or pull request summarization at scale. The SWE-bench and Codeforces numbers make this the strongest argument for V4 Pro over any other model at this price point.

- AI agent pipeline builders who need a model that performs well on multi-step, tool-augmented tasks. V4 Pro’s lead on GDPval-AA makes it the top open-weights choice for this use case.

- Long-context applications — document analysis, large codebase understanding, full-repository indexing — where V4 Pro’s 1M default context window and drastically reduced KV cache requirements provide a structural advantage.

- Research teams and startups who need frontier-class performance but cannot justify the cost of closed-source APIs at scale.

- Organizations with data sovereignty requirements who need open weights they can self-host rather than sending data to a third-party API.

Less ideal fits:

- Multimodal applications — V4 Pro is text-in, text-out only at launch.

- Teams that need a fully finished, post-trained production model immediately. DeepSeek labels V4 as a preview release and notes further post-training refinements are expected.

- Use cases requiring guaranteed uptime SLAs or enterprise support contracts, where closed-source providers have mature infrastructure and support offerings.

Limitations and What to Watch

Transparency about limitations is part of what makes a technical evaluation useful. DeepSeek V4 Pro ships with several caveats worth tracking.

It is a preview release. DeepSeek’s own technical report explicitly notes that further post-training refinements are planned. The model you are evaluating today is not its final form. This is common for major open-weights releases but worth factoring into production adoption timelines.

Modality is text-only. DeepSeek V4 Pro and V4 Flash are text input and output only at launch, placing them behind multimodal leaders like Gemini-3.1-Pro and GPT-5.4 for vision-dependent workflows.

World knowledge trails one model. V4 Pro leads all open-weights models on world knowledge benchmarks but sits behind Gemini-3.1-Pro among all models. If factual recall across broad domains is your primary evaluation criterion, that gap matters.

Think Max mode demands significant context budget. DeepSeek’s own recommendation is a minimum of 384K tokens of context for Think Max reasoning. For API users on tight token budgets, this mode is effectively unavailable without deliberate architecture choices.

Geopolitical and compliance considerations. DeepSeek is a Chinese AI lab. For organizations operating under data residency regulations or government procurement rules that restrict the use of Chinese-origin AI, self-hosting the open weights may be the only viable path to using V4 Pro legally and compliantly.

The Bigger Picture: What DeepSeek V4 Pro Means for the AI Landscape

DeepSeek V4 Pro represents a meaningful inflection point in the open-source AI model ecosystem, not because of any single benchmark number, but because of what the combination of numbers implies.

For two years, the frontier of AI capability was effectively synonymous with expensive closed-source APIs. DeepSeek R1 cracked that assumption in January 2025 by matching OpenAI’s o1 in reasoning at a fraction of the cost — briefly crashing NVIDIA’s stock in the process. V4 Pro extends that pattern to a broader set of capabilities and does so with a genuinely new architectural foundation.

The 7x price gap between DeepSeek V4 Pro and Claude Opus 4.6 on output tokens, at near-identical SWE-bench performance, is the kind of gap that changes business decisions. It changes what is economically viable to build with AI. It changes the competitive dynamics for startups that previously couldn’t afford to put a frontier-class model in their inference loop.

Whether that gap persists as closed-source labs respond is the key question to watch in the second half of 2026.

Frequently Asked Questions

1. What makes DeepSeek V4 Pro different from other AI models?

DeepSeek V4 Pro stands out due to its Mixture-of-Experts (MoE) architecture combined with Hybrid Attention mechanisms. Unlike traditional models that activate all parameters, it uses only a subset per token, drastically reducing computation costs. This allows it to deliver near-frontier performance while being significantly cheaper than closed-source alternatives.

2. Is DeepSeek V4 Pro suitable for production use?

Yes, DeepSeek V4 Pro is suitable for many production use cases, especially those involving coding, long-context processing, and AI agents. However, since it is currently labeled as a preview release, teams should carefully evaluate stability, latency, and post-training updates before deploying it in mission-critical systems.

3. How does DeepSeek V4 Pro achieve lower costs?

The model achieves cost efficiency through sparse activation (MoE), compressed attention mechanisms, and optimized KV cache usage. Additionally, its pricing model rewards cache hits, making repeated workflows significantly cheaper compared to other AI APIs.

4. Can DeepSeek V4 Pro replace GPT-5.4 or Claude Opus 4.6?

In many cases, especially coding and reasoning tasks, DeepSeek V4 Pro performs at a comparable level while being much more cost-effective. However, it may not fully replace these models in areas like multimodal capabilities, enterprise support, or highly refined outputs.

5. Is DeepSeek V4 Pro truly open-source?

DeepSeek V4 Pro is released as open weights, meaning users can download and run the model locally. However, it is not fully open-source since the training data and full pipeline are not publicly available.

6. What are the limitations of DeepSeek V4 Pro?

The model is currently limited to text-based tasks and does not support image or audio processing. It also requires large context windows for maximum reasoning performance, which may increase resource requirements for some users.

7. Is DeepSeek V4 Pro truly open source?

DeepSeek V4 Pro is released as open weights, meaning the model weights are publicly downloadable and can be used for both commercial and non-commercial purposes. The training code and full training data are not open sourced, which is a meaningful distinction from fully open-source AI projects.

8. How does DeepSeek V4 Pro handle very long documents?

The model’s Hybrid Attention Architecture (CSA + HCA) is specifically designed for long-context efficiency. At 1 million tokens, it uses only 27% of the FLOPs and 10% of the KV cache required by DeepSeek V3.2. In practice, this makes V4 Pro one of the most cost-efficient options available for processing large documents, full codebases, or extended conversation histories.

9. What is the difference between V4 Pro and V4 Flash?

DeepSeek V4 Pro (1.6T total / 49B active parameters) is optimized for maximum capability. DeepSeek V4 Flash (284B total / 13B active parameters) is optimized for speed and cost efficiency. V4 Flash achieves comparable reasoning performance to V4 Pro in Think Max mode but falls slightly behind on knowledge-intensive tasks and the most complex agentic workflows. V4 Flash is significantly cheaper, at $0.28 per million output tokens.

10. Can DeepSeek V4 Pro process images or audio?

No. Both V4 Pro and V4 Flash are text-in, text-out only at the time of launch.

Conclusion

DeepSeek V4 Pro is the most capable open-weights model available as of late April 2026, and it is not particularly close. It leads open-source benchmarks in coding, competitive programming, and agentic task performance. It matches closed-source frontier models on SWE-bench Verified at 7x lower output pricing. And it does all of this with a genuinely novel architecture — Hybrid Attention, mHC, Muon optimizer — that solves real engineering problems at scale rather than simply adding more of the same.

For engineering teams, AI researchers, and product builders who have been waiting for open-source AI to close the gap with the frontier, DeepSeek V4 Pro is the clearest signal yet that the wait is effectively over. The remaining gaps — multimodal capability, final post-training polish, enterprise support — are real but shrinking.

The question now is not whether DeepSeek V4 Pro belongs in your evaluation. It does. The question is how quickly your team is ready to act on what the benchmarks are telling you.