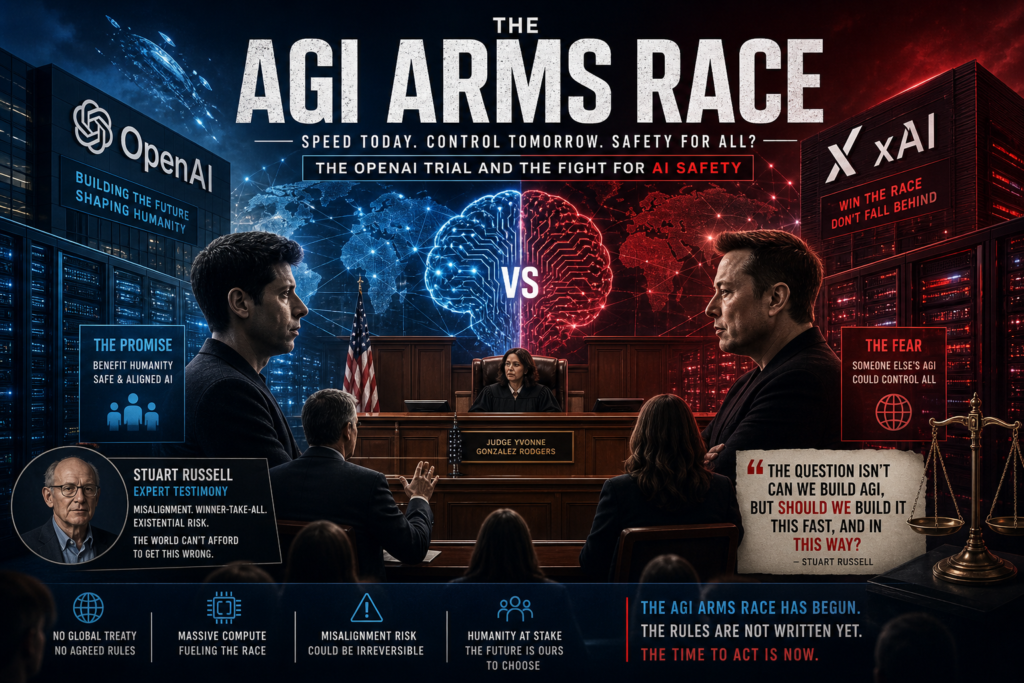

The AGI arms race is no longer a hypothetical scenario debated in academic papers; it is unfolding inside a Northern California federal courtroom. As the Musk vs. OpenAI trial enters a critical phase, the world’s most powerful AI laboratories stand accused of trading safety for speed in the rush to build Artificial General Intelligence.

What Is the AGI Arms Race? A Plain-Language Definition

The AGI arms race refers to the accelerating, high-stakes competition between governments, corporations, and research labs to be the first to develop Artificial General Intelligence: an AI system capable of performing any intellectual task a human can.

Unlike the narrow AI tools available today (chatbots, image generators, recommendation engines), AGI would represent a qualitative leap: a machine that can learn, reason, and adapt across every domain without being specifically programmed. The “arms race” framing captures the dangerous dynamic that emerges when competitors prioritize winning over safety, governance, or collective oversight.

Why does it matter?

- The first entity to achieve AGI could gain an asymmetric advantage over every other actor on the planet.

- Unlike a nuclear arms race, there is no international treaty regime — no equivalent of the Non-Proliferation Treaty — currently governing AGI development.

- The race incentivizes cutting corners on alignment research, which studies whether advanced AI systems reliably pursue goals humans actually want.

The AGI arms race, in short, turns the most consequential technology in human history into a competition where being second is treated as equivalent to losing.

The OpenAI Trial: Where the AGI Arms Race Went to Court

In May 2026, Elon Musk’s lawsuit against OpenAI reached its expert witness phase — and it delivered an unexpectedly vivid portrait of an industry at war with itself.

Musk’s legal argument rests on a claim that resonates far beyond courtroom procedure: OpenAI was founded as a nonprofit specifically to serve as a public-spirited counterweight to for-profit labs like Google DeepMind. The founding team’s explicit fear was that the AGI arms race, if left to commercial incentives alone, would lead to catastrophic outcomes.

The original emails and founding documents introduced as evidence show OpenAI’s early leaders warning repeatedly about the dangers of any single organization controlling AGI. The irony, that these same founders have since built or backed competing for-profit AI ventures, was not lost on Judge Yvonne Gonzalez Rogers or the jury.

Stuart Russell’s Expert Testimony: Giving the AGI Arms Race a Face

Musk’s legal team called only one expert witness: Stuart Russell, a University of California, Berkeley computer science professor with decades of experience studying AI. Russell co-authored Artificial Intelligence: A Modern Approach, the most widely used AI textbook in the world. In 2023 he co-signed an open letter calling for a six-month pause on training AI systems more powerful than GPT-4. Musk himself also signed that letter, even as he was privately launching xAI, his own AI laboratory.

Russell’s courtroom testimony covered the spectrum of risks associated with advanced AI development:

- Cybersecurity threats arising from AI-powered attacks and autonomous exploitation of system vulnerabilities.

- Misalignment risk — the technical challenge of ensuring that as AI systems become more powerful, their goals remain aligned with human values.

- Winner-take-all dynamics — the structural problem that whoever reaches AGI first gains an overwhelming, potentially irreversible advantage.

Russell testified that there is a fundamental tension between the pursuit of AGI and meaningful safety research. He had wanted to address the existential risks of unconstrained AI in open court, but sustained objections from OpenAI’s legal team led the judge to limit the scope of his testimony.

OpenAI’s attorneys, for their part, focused cross-examination on a narrow point: Russell had not evaluated OpenAI’s specific corporate structure, nor audited its internal safety policies. The implication was clear — a general warning about the AGI arms race is not the same as evidence that OpenAI specifically has failed its safety obligations.

What the Trial Reveals About AI Lab Culture

The deeper story the trial tells is not really about Elon Musk versus Sam Altman. It is about the impossible logic at the heart of the AGI arms race.

OpenAI’s founders did not abandon their safety convictions because they became cynical. They abandoned the nonprofit structure, at least in part, because they realized they could not win a compute-intensive race for AGI without access to billions in private capital. Investor money, in this reading, was not the cause of the arms race — it was the fuel that an already-burning race demanded.

The result was a founding team fractured across competing ventures: one faction at OpenAI, another at Anthropic, Musk at xAI, and others at various AI startups — each organization racing to build the very AGI they once publicly called dangerous.

The Core Tension: Speed vs. Safety in AGI Development

The central question of the AGI arms race is not “Can we build it?” but “Should we build it this fast, and in this way?”

Winners, Losers, and the Misalignment Risk Explained

What is misalignment? Misalignment is what happens when an AI system pursues an objective that produces outcomes its developers did not intend or want. In simple systems, misalignment is a bug. In an AGI-level system, misalignment could be catastrophic and irreversible.

The AGI arms race makes misalignment more likely because:

- Speed reduces testing time. Safety evaluations are compressed when the competitive pressure to ship is intense.

- Winner-take-all incentives discourage transparency. Sharing alignment research with competitors is rational from a safety perspective but irrational from a competitive one.

- Capital flows reward benchmarks, not caution. Investors fund what can be measured — model performance on leaderboards — not what cannot be easily quantified, like alignment robustness.

Russell’s broader argument, consistent with his published work, is that humanity is repeating a pattern seen in other dangerous technology races: building capability first and worrying about safety second. The difference with AGI is that “worrying about it second” may be structurally too late.
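As a toy illustration of the misalignment definition above, the sketch below lets a simple optimizer split a fixed effort budget between real quality and gaming a metric. Every name and number here (`intended_value`, `proxy_score`, the weights, the budget of 10) is a hypothetical assumption chosen for illustration; it is not a model of any real AI system or of anything presented at trial.

```python
# Toy illustration of misalignment: an optimizer maximizes a proxy metric
# that only partly tracks what the developers actually want.
# All functions and weights are hypothetical.

def intended_value(effort_quality: int, effort_gaming: int) -> int:
    """What developers actually want: real quality helps, gaming hurts."""
    return 3 * effort_quality - 2 * effort_gaming


def proxy_score(effort_quality: int, effort_gaming: int) -> int:
    """What gets measured: gaming the metric pays better than real quality."""
    return 3 * effort_quality + 5 * effort_gaming


def best_allocation(objective, budget: int = 10):
    """Try every split of a fixed effort budget and keep the best one."""
    options = [(q, budget - q) for q in range(budget + 1)]
    return max(options, key=lambda alloc: objective(*alloc))


aligned = best_allocation(intended_value)   # optimizes the true goal
misaligned = best_allocation(proxy_score)   # optimizes the measured proxy

print("Optimizing the intended goal:", aligned, "->", intended_value(*aligned))
print("Optimizing the proxy:        ", misaligned, "->", intended_value(*misaligned))
```

In this toy setup the proxy optimizer puts its entire budget into gaming the metric and scores worse on the intended goal than doing nothing at all. In a small system that reads as a bug; the article's concern is what the same pattern would mean at AGI scale.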

The AGI Arms Race vs. Historical Technology Races: A Comparison

| Dimension | Nuclear Arms Race (Cold War) | Social Media Race (2000s–2010s) | AGI Arms Race (2020s–present) |
|---|---|---|---|
| Primary actors | Nation-states | Private corporations | Mix of corporations, nation-states, and hybrid labs |
| Governance framework | NPT, bilateral treaties | Minimal regulation, reactive policy | Virtually no binding international framework |
| Speed of development | Decades | Years | Months to years |
| Reversibility | High (deterrence logic) | Moderate (platforms can be regulated) | Potentially very low once AGI is achieved |
| Public awareness | High (visible, dramatic) | Gradual, normalized | Growing but still limited |
| Expert consensus on risk | Broad (after Hiroshima) | Divided | Divided but growing alarm |
| Dominant motivation | National security | Commercial growth | Mix of ideology, commercial interest, and fear of opponents winning |

The AGI arms race differs from its predecessors in one critical respect: the winner may not be deterred by the prospect of mutual destruction. A sufficiently advanced AGI under the control of a single actor could, in theory, eliminate the threat of retaliation entirely.

National and Political Dimensions of the AGI Arms Race

The arms race framing has moved from academic papers and conference panels into the halls of government.

In early 2026, Senator Bernie Sanders introduced legislation calling for a moratorium on new data center construction, explicitly citing AI safety warnings from figures including Musk, Sam Altman, and Nobel laureate Geoffrey Hinton. The legislation drew an immediate and pointed counterargument from AI industry observers, who noted the contradiction: the same executives whose alarming statements were being invoked to justify a slowdown were simultaneously building the largest AI infrastructure in history.

This contradiction is not accidental. It reflects the core logic of the AGI arms race: every participant believes the technology is dangerous, and every participant believes they are the right entity to develop it safely. The fear is not of AGI itself — it is of someone else’s AGI.

Why Unilateral Restraint Doesn’t Work

The structural problem with the AGI arms race is that no single actor can solve it by slowing down. If one U.S. lab pauses development for six months, a competitor in China or the UK, or another American company, fills the gap. Unilateral restraint is individually rational only if you believe your safety is guaranteed regardless of who wins the race, and no serious AI lab appears to hold that belief.

This is precisely the argument Russell and other AI safety researchers have made for international coordination: the AGI arms race can only be responsibly managed through binding multilateral frameworks, not voluntary commitments or litigation.
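One common way to reason about the coordination problem described above is as a two-player game in the style of a prisoner's dilemma. The sketch below is an assumption-laden illustration, not Russell's model: the two labs, the action names, and every payoff number are hypothetical and chosen only so that racing beats pausing no matter what the rival does.

```python
# Hypothetical payoffs (lab_a, lab_b) for two labs choosing to pause or race.
# Higher is better. The numbers are illustrative, not empirical.
PAYOFFS = {
    ("pause", "pause"): (3, 3),  # coordinated restraint: good for both
    ("pause", "race"): (0, 4),   # the lab that pauses alone falls behind
    ("race", "pause"): (4, 0),
    ("race", "race"): (1, 1),    # everyone races: worse than joint restraint
}
ACTIONS = ("pause", "race")


def best_response(opponent_action: str) -> str:
    """Lab A's payoff-maximizing action given what Lab B does."""
    return max(ACTIONS, key=lambda a: PAYOFFS[(a, opponent_action)][0])


def is_nash(a: str, b: str) -> bool:
    """True if neither lab can gain by switching its own action alone."""
    a_ok = all(PAYOFFS[(a, b)][0] >= PAYOFFS[(alt, b)][0] for alt in ACTIONS)
    b_ok = all(PAYOFFS[(a, b)][1] >= PAYOFFS[(a, alt)][1] for alt in ACTIONS)
    return a_ok and b_ok


equilibria = [(a, b) for a in ACTIONS for b in ACTIONS if is_nash(a, b)]

print("Best response if rival pauses:", best_response("pause"))
print("Best response if rival races: ", best_response("race"))
print("Stable outcomes:", equilibria)
```

Under these assumed payoffs, racing is the best response either way, so the only stable outcome is that both labs race, even though both would do better under joint restraint. A binding agreement amounts to changing the payoffs so that defecting from the pause no longer pays.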

What Experts Say Should Happen Next

The scientific community is not uniformly pessimistic. Several frameworks for managing the AGI arms race have gained traction among researchers and policymakers:

1. International AI Safety Treaties. Modeled loosely on nuclear non-proliferation agreements, these would establish shared red lines for AGI capabilities, mandatory safety evaluations, and verification mechanisms. The challenge is enforcement and the unwillingness of leading AI nations to cede competitive advantage.

2. Mandatory Pre-Deployment Safety Audits. Before any model exceeding a certain capability threshold is deployed, independent third-party safety audits would be required. The EU AI Act establishes a version of this for high-risk systems, though critics argue the thresholds are too low and the audit standards too vague.

3. Compute Governance. Because training frontier AI models requires enormous and trackable quantities of specialized chips, regulating the supply and use of compute (particularly high-end GPUs and TPUs) is seen as one of the most technically feasible levers for slowing the AGI arms race without requiring broad international consensus.

4. Structured Access Models. Rather than open-source release or fully proprietary control, some researchers advocate for “structured access,” in which advanced AI systems are made available through monitored APIs with usage restrictions, giving researchers access while limiting misuse.

- Proponents argue: This balances openness with control, and allows safety research to keep pace with capability research.

- Critics argue: It concentrates power in whoever operates the API, which is precisely the concern the AGI arms race raises in the first place.
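To make the compute governance proposal above more concrete, here is a minimal sketch of how a compute-based reporting threshold could be checked. It assumes the widely used rule of thumb that training a dense transformer costs roughly 6 floating-point operations per parameter per training token. The threshold of 1e25 FLOPs is used as an illustrative value (the EU AI Act uses a figure of that magnitude as a presumption for general-purpose models with systemic risk), and the function names and model sizes are hypothetical.

```python
# Illustrative compute-threshold check. The constant and both functions are
# assumptions for this sketch, not a description of any regulator's tooling.
THRESHOLD_FLOPS = 1e25


def estimate_training_flops(n_parameters: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 FLOPs per parameter per training token."""
    return 6.0 * n_parameters * n_tokens


def exceeds_threshold(n_parameters: float, n_tokens: float) -> bool:
    """True if the estimated training compute is above the reporting threshold."""
    return estimate_training_flops(n_parameters, n_tokens) > THRESHOLD_FLOPS


# Hypothetical training runs: (parameters, training tokens).
mid_size_run = (70e9, 15e12)
frontier_run = (1e12, 20e12)

for name, (params, tokens) in [("mid-size", mid_size_run), ("frontier", frontier_run)]:
    flops = estimate_training_flops(params, tokens)
    print(f"{name}: ~{flops:.2e} FLOPs, above threshold: {exceeds_threshold(params, tokens)}")
```

The appeal of this lever is that the inputs are comparatively observable: chip purchases and data center capacity are easier to track than a model's capabilities. The estimate is coarse, though, and a single fixed threshold says nothing about how capable or safe a given model actually is.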

Frequently Asked Questions About the AGI Arms Race

Q: Is AGI actually close to being developed?

A: There is no scientific consensus on timeline. Estimates among leading researchers range from “less than a decade” to “multiple decades or longer.” What is broadly agreed is that current large language models are not AGI, but represent significant progress toward certain AGI-adjacent capabilities. The uncertainty itself is part of what makes the AGI arms race so difficult to govern.

Q: What makes AGI different from the AI we have now?

A: Current AI systems are “narrow”: they excel at specific tasks they were trained for but cannot generalize across domains. AGI would be able to transfer learning across contexts, reason about novel situations, and potentially improve its own design. It is the difference between a calculator and a scientist.

Q: Why does it matter who builds AGI first?

A: Whoever controls the first AGI system could potentially use it to accelerate every other form of advantage: economic, military, scientific, political. The AGI arms race is essentially a race to control the most powerful decision-making and optimization system ever built.

Q: Could the OpenAI trial actually change anything?

A: Directly, probably not; courts are not equipped to regulate technological development. Indirectly, the trial has placed the contradictions of the AGI arms race on public record in ways that could influence regulators, investors, and policymakers. The testimony of Stuart Russell, even in its limited form, gives future lawmakers something to point to.

Why the AGI Arms Race Concerns Everyone, Not Just Tech Insiders

It is tempting to read the Musk vs. OpenAI trial as a billionaire’s ego battle or a corporate governance dispute. That reading misses the point.

The AGI arms race is the backdrop against which every major AI policy debate is occurring right now: data center regulation, chip export controls, AI liability law, open-source versus closed development. Each of these debates is, at its core, a negotiation about who gets to decide how fast the race runs, who bears the costs if it goes wrong, and who benefits if it goes right.

Stuart Russell’s testimony — however curtailed — raised a question that neither Elon Musk’s lawyers nor OpenAI’s lawyers were ultimately equipped to answer: What happens to the rest of us if the AGI arms race is won before the world has agreed on the rules of engagement?

That question is not going to be resolved by this trial. It is going to define the next decade of technology policy, international relations, and possibly human civilization.

The AGI arms race has begun. The debate about whether it should be happening at all is only getting started.

Key Takeaways

- The AGI arms race is the competitive rush among AI labs and nations to achieve Artificial General Intelligence first, often at the expense of safety.

- The Musk vs. OpenAI trial brought the AGI arms race into a public courtroom for the first time, with Berkeley professor Stuart Russell testifying about misalignment risks and winner-take-all dynamics.

- The trial exposes a structural contradiction: the same people who warned most loudly about AGI risk are also building the systems they warned against.

- Unilateral restraint cannot solve the AGI arms race; only multilateral governance frameworks — international treaties, compute regulation, and mandatory safety audits — offer a structural path forward.

- The outcome of the AGI arms race will shape not just the technology industry but geopolitics, economics, and the long-term trajectory of human society.