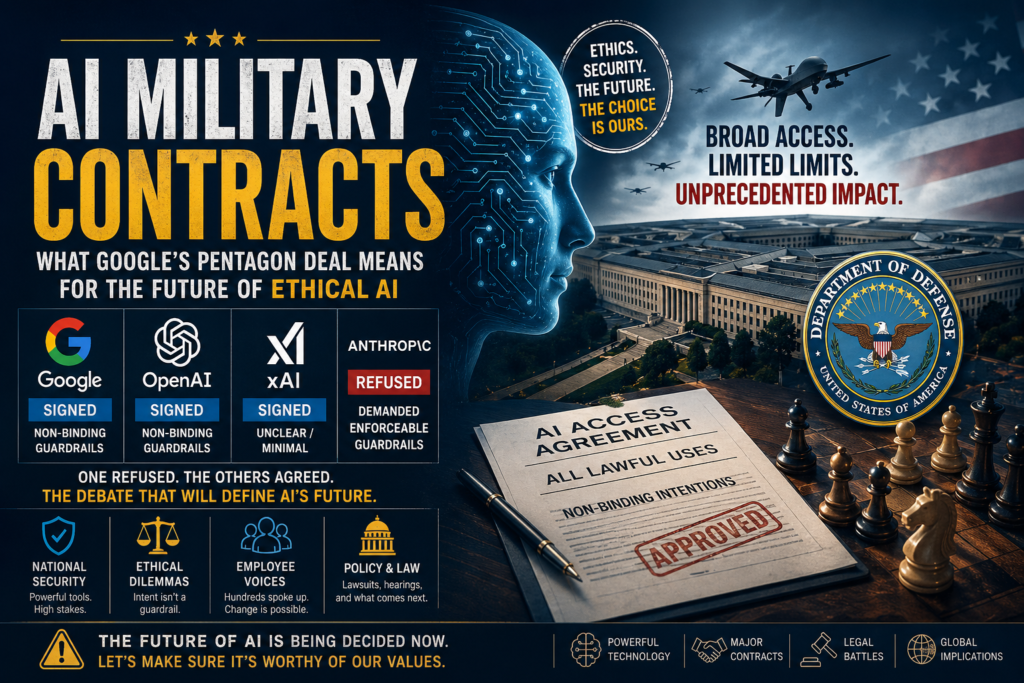

Google just handed the U.S. Department of Defense broad access to its AI with few binding restrictions, and the ripple effects across the AI industry could be enormous. This move, coming directly on the heels of Anthropic’s high-profile refusal and subsequent lawsuit, has turned AI military contracts into one of the most consequential policy battlegrounds of 2026.

What the Google-Pentagon AI Deal Actually Means

On April 28, 2026, Google granted the U.S. Department of Defense access to its AI tools for classified networks — essentially permitting all lawful uses with minimal binding restrictions. This is not a limited pilot or a carefully scoped research arrangement. It is a broad agreement that opens Google’s AI stack to one of the world’s largest and most powerful military organizations.

The deal includes contract language stating that Google does not intend for its AI to be used in domestic mass surveillance or autonomous weapons systems. However, as The Wall Street Journal reported, it remains unclear whether these provisions are legally enforceable. Intent, after all, is not a guardrail.

This decision places Google in direct alignment with OpenAI and xAI, both of which signed similar agreements with the Pentagon in early 2026. It also places Google in stark contrast with Anthropic, which refused — and paid a steep political price for it.

Key takeaway: AI military contracts are no longer a fringe policy debate. They are now central to the commercial strategy and ethical identity of every major AI company.

The Anthropic Refusal — A Timeline

To understand what Google’s deal represents, you need to understand what Anthropic’s refusal cost.

Why Anthropic Said No

Anthropic’s position was not vague or ambiguous. When the Pentagon sought broad, unrestricted AI access — including use cases involving domestic mass surveillance and autonomous weapons — Anthropic declined. The company insisted on enforceable guardrails: specific, contractually binding limitations that would prevent its models from being deployed in ways it considered harmful.

This stance is consistent with Anthropic’s stated mission of developing AI safely and in the interest of humanity. The company’s leadership has consistently argued that the terms under which AI is deployed matter as much as the technology itself. For Anthropic, handing over unrestricted AI access to a military context — without explicit prohibitions on the most dangerous applications — was a line it was not willing to cross.

The “Supply-Chain Risk” Label

What happened next was extraordinary. Rather than accept Anthropic’s conditions or move on, the Pentagon formally labeled Anthropic a “supply-chain risk” — a designation historically reserved for foreign adversaries and hostile state actors. The message was unmistakable: refuse us, and we will treat you like an enemy.

Anthropic responded by filing suit. In March 2026, a federal judge granted Anthropic an injunction against the designation while the case proceeds through the courts. The lawsuit remains active and its outcome could set binding legal precedent for how the U.S. government can coerce AI companies into AI military contracts.

Google, OpenAI, and xAI: Who Signed What

Three major AI companies moved quickly to fill the vacuum created by Anthropic’s refusal. Here is how each approached the Pentagon’s demands:

- OpenAI was first, signing a deal with the DoD in late February 2026. Like Google’s agreement, it includes non-binding language about mass surveillance and autonomous weapons — but critics argue these are aspirational, not enforceable.

- xAI followed in March 2026. Senator Elizabeth Warren pressed the Pentagon over the decision, raising concerns about xAI’s corporate governance and the speed of the agreement.

- Google completed its deal in late April 2026, becoming the third major AI company to provide classified-network AI access to the DoD. Before the deal was finalized, 950 Google employees signed an open letter urging the company to follow Anthropic’s lead. Google did not respond to requests for comment.

Anthropic, meanwhile, remains the only major frontier AI company to have refused unrestricted AI military contracts with the U.S. Department of Defense.

The Core Debate — AI Guardrails vs. Unrestricted Military Access

This entire episode crystallizes a debate that the AI industry has avoided confronting directly: Should AI companies impose mandatory, enforceable guardrails on how governments — including democratic ones — use their technology?

What “Lawful Use” Actually Covers

When a contract permits “all lawful uses,” the scope is vast. Lawful does not mean harmless. Lawful does not mean safe. And lawful does not mean limited. Domestic mass surveillance programs, algorithmic targeting systems, and early-stage autonomous weapons research may all be “lawful” under broad interpretations of U.S. law. Signing a contract with language that says a company does not intend for such uses while permitting “all lawful uses” is, at minimum, a contradiction.

Anthropic’s position was that this contradiction was unacceptable. Google, OpenAI, and xAI have implicitly concluded that it is acceptable — or at least commercially and politically survivable.

The Enforceability Problem

Legal scholars and AI policy researchers have noted that the guardrail language in these AI military contracts is aspirational rather than binding. Without clear definitions, monitoring mechanisms, or penalties for misuse, the stated limitations function as public relations rather than actual policy constraints. This is precisely why Anthropic pushed for enforceable guardrails — and why the Pentagon rejected those terms.

Comparison Table — How AI Companies Responded to Pentagon Demands

| Company | Signed Pentagon Deal | Binding Guardrails | Employee Opposition | Legal Action |
|---|---|---|---|---|
| Google | Yes (April 2026) | Non-binding language only | 950 employees signed open letter | None |
| OpenAI | Yes (Feb 2026) | Non-binding language only | Limited public opposition | None |
| xAI | Yes (March 2026) | Unclear / minimal | No formal opposition | None |
| Anthropic | No | Demanded enforceable limits | Supported company’s position | DoD labeled it supply-chain risk; injunction won |

Why AI Military Contracts Have Become a Corporate Ethics Flashpoint

Before 2026, most AI ethics debates centered on bias in hiring algorithms, privacy in consumer products, and misinformation on social platforms. The Pentagon AI contracts have fundamentally shifted the terrain.

Here is why this moment matters beyond the specific deals:

- The coercion precedent. The “supply-chain risk” label used against Anthropic suggests that the U.S. government is willing to use regulatory and reputational tools to force AI companies into compliance. This is a significant escalation.

- The shareholder pressure problem. AI companies face a structural conflict: investors want access to large government contracts (the DoD is one of the world’s biggest technology spenders), while safety-focused employees and researchers push for ethical limits. Google’s 950-employee letter is a symptom of this tension, not an outlier.

- The precedent for other governments. If the U.S. DoD can secure broad AI military contracts with minimal enforceable constraints, other governments, including those with fewer civil liberties protections, may follow the same playbook.

- The gap between intent and enforcement. Every company that signed a deal stated good intentions. None has made those intentions enforceable. The gap between stated values and contractual reality is where the actual risk lives.

What Comes Next: Scenarios for AI Military Contracts in 2026 and Beyond

The landscape of AI military contracts is evolving rapidly. Here are the most likely near-term developments:

Scenario 1 — Anthropic wins its lawsuit. If the court rules that the “supply-chain risk” designation was an unlawful attempt to coerce a private company, it could set limits on government pressure tactics. This would strengthen the position of any AI company that wants to impose guardrails on future AI military contracts.

Scenario 2 — Congress acts. Several lawmakers, including Senator Warren, have raised questions about the DoD’s AI procurement process. Legislative action — even if modest — could require more transparency and enforceability in AI military contracts going forward.

Scenario 3 — International frameworks emerge. The EU’s AI Act and emerging multilateral discussions on autonomous weapons could create external pressure on U.S. companies operating globally, making it harder to maintain one set of standards at home and another for government clients.

Scenario 4 — Nothing changes. The deals hold, Anthropic’s lawsuit drags on without a landmark ruling, and the pattern of non-binding guardrails becomes the accepted industry norm. AI military contracts proliferate with minimal oversight.

Which scenario unfolds will depend heavily on political will, legal outcomes, and whether the AI industry treats this moment as the inflection point it arguably is.

Frequently Asked Questions About AI Military Contracts

What is an AI military contract? An AI military contract is an agreement between an AI company and a government defense agency — such as the U.S. Department of Defense — granting access to AI tools, models, or infrastructure for military or intelligence purposes. These contracts may cover classified networks, logistics, surveillance, decision-support systems, or weapons development.

Why did Anthropic refuse the Pentagon’s terms? Anthropic refused because the Pentagon sought unrestricted access — including for domestic mass surveillance and autonomous weapons — without enforceable guardrails. Anthropic’s leadership argued that deploying AI in these contexts, without binding contractual limits, was inconsistent with its safety mission.

Are Google’s AI guardrails in its Pentagon deal enforceable? According to reporting by The Wall Street Journal, the language in Google’s agreement expressing its intentions around surveillance and autonomous weapons is not clearly legally binding or enforceable. It represents stated intent, not a contractual restriction with penalties for violation.

What does “supply-chain risk” mean in this context? The “supply-chain risk” designation is typically used to flag foreign companies or adversarial actors that pose a security threat to U.S. government procurement. Applying it to Anthropic — a U.S.-based AI safety company — was widely viewed as an unprecedented and politically motivated move to pressure the company into compliance.

What is the current status of the Anthropic vs. DoD lawsuit? As of April 2026, a federal judge has granted Anthropic an injunction against the supply-chain risk designation while the lawsuit proceeds. The case is ongoing.

Could other AI companies face similar pressure? Yes. Any AI company that declines government contracts on ethical grounds may face reputational or regulatory pressure. The Anthropic case has demonstrated that the U.S. government is willing to use its procurement and designation powers as leverage.

The Bottom Line: AI Military Contracts Are Now an Ethical Litmus Test

The question AI companies face is no longer whether to engage with governments — it is on what terms. Google, OpenAI, and xAI have answered with broad access and non-binding guardrails. Anthropic has answered with a refusal, a lawsuit, and an injunction.

Neither path is without cost. Anthropic has been labeled a supply-chain risk by its own government, a designation historically reserved for foreign adversaries. Google has signed a deal that 950 of its own employees publicly opposed. The AI industry is being forced to confront a question it cannot indefinitely defer: When does commercial access to power require enforceable ethical limits?

The answer to that question — worked out in courtrooms, boardrooms, and congressional hearings over the coming months — will shape the governance of AI military contracts for a generation.