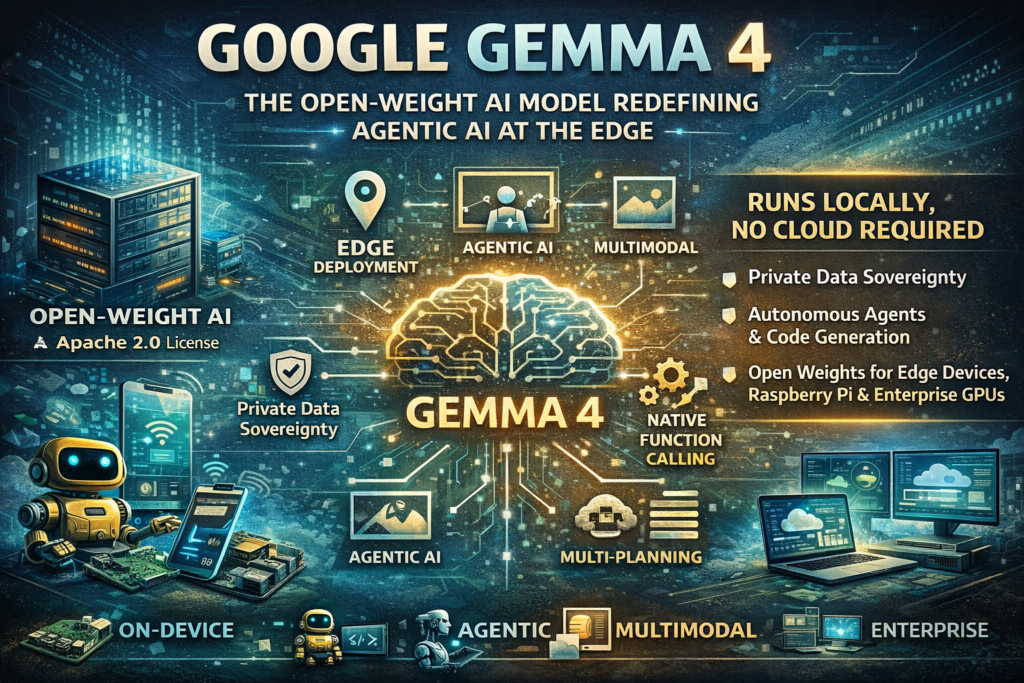

Google Gemma 4 is the most capable open-weight AI model family Google has ever released — and it runs entirely on your own hardware. Whether you’re building autonomous agents, deploying privacy-first applications, or pushing AI to IoT devices and smartphones, Google Gemma 4 delivers frontier-level intelligence without a cloud subscription, per-token fees, or data leaving your network.

Released by Google DeepMind on April 2, 2026, under the commercially permissive Apache 2.0 license, this model family signals a major shift: open-weight AI is no longer a compromise. It’s a competitive strategy.

What Is Google Gemma 4?

Definition: Google Gemma 4 is Google DeepMind’s latest family of open multimodal large language models (LLMs), purpose-built for advanced reasoning, agentic AI workflows, and efficient on-device deployment (source: CometAPI).

The name “Gemma” has always stood for accessible, open AI from Google, but version 4 is a generational leap. Built from the same world-class research and technology as Gemini 3, Google Gemma 4 is the most capable model family you can run on your own hardware (source: Google). The key innovation is what Google calls “intelligence-per-parameter”: packing Gemini-class reasoning into model sizes that fit on consumer devices.

Since the launch of the first generation, developers have downloaded Gemma over 400 million times, building a vibrant Gemmaverse of more than 100,000 variants (source: Google). Google Gemma 4 is the answer to what that developer community has been asking for next: breakthrough capabilities made widely accessible.

The Google Gemma 4 Model Family Explained

Google Gemma 4 isn’t a single model — it’s a carefully designed family of four variants, each targeting a distinct point on the capability-efficiency spectrum.

| Model | Parameters | Architecture | Context Window | Best For |
|---|---|---|---|---|
| E2B | ~2B effective | Dense + PLE | 128K tokens | Mobile, IoT, Raspberry Pi |
| E4B | ~4B effective | Dense + PLE | 128K tokens | On-device assistants, offline apps |
| 26B MoE | 26B total / 4B active | Mixture-of-Experts | 256K tokens | Fast reasoning, agentic workflows |
| 31B Dense | 31B | Dense | 256K tokens | Max quality, fine-tuning, enterprise |

The entire family moves beyond simple chat to handle complex logic and agentic workflows. The 31B model currently ranks as the #3 open model in the world on the industry-standard Arena AI text leaderboard, and the 26B model secures the #6 spot, outcompeting models 20x its size (source: Google).

Edge Models — E2B and E4B

The “E” in E2B and E4B stands for effective, a meaningful distinction. These models use a per-layer embedding (PLE) architecture that keeps memory footprints small while punching well above their weight class in reasoning benchmarks (source: DEV Community).

To support the extended context lengths required by agentic use cases, LiteRT-LM leverages cutting-edge GPU optimizations to process 4,000 input tokens across 2 distinct skills in under 3 seconds. On the Raspberry Pi 5 running on CPU, it reaches 133 prefill and 7.6 decode tokens/s, while NPU acceleration on the Qualcomm Dragonwing IQ8 boosts performance to 3,700 prefill and 31 decode tokens/s (source: Google Developers).
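To make the throughput figures above concrete, the following sketch converts them into rough end-to-end latency. The workload (a 4,000-token prompt with a 100-token reply) and the helper name `estimate_latency` are assumptions chosen for illustration; real latency also depends on model size, quantization, and runtime overhead.

```python
# Throughput figures quoted in the text above (tokens per second).
DEVICES = {
    "Raspberry Pi 5 (CPU)": {"prefill": 133.0, "decode": 7.6},
    "Qualcomm Dragonwing IQ8 (NPU)": {"prefill": 3700.0, "decode": 31.0},
}


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     prefill_tps: float, decode_tps: float) -> float:
    """Rough seconds to read the prompt (prefill) plus generate the reply (decode)."""
    return prompt_tokens / prefill_tps + output_tokens / decode_tps


# Hypothetical workload: a 4,000-token prompt and a 100-token answer.
for name, tps in DEVICES.items():
    seconds = estimate_latency(4000, 100, tps["prefill"], tps["decode"])
    print(f"{name}: ~{seconds:.1f} s")
# Raspberry Pi 5 (CPU): ~43.2 s
# Qualcomm Dragonwing IQ8 (NPU): ~4.3 s
```

Under these assumptions, prefill dominates on the CPU-only Raspberry Pi, which is why short prompts matter more there than on NPU-accelerated hardware.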

These are not toy models. They are production-grade AI running entirely offline.

Powerhouse Models — 26B MoE and 31B Dense

The MoE advantage: the 26B model has 26B total parameters but only 4B active per token, enabling frontier-level knowledge with efficient compute (source: Medium). Per-token compute is therefore closer to that of a 4B model, although all 26B parameters must still be held in memory.

For developers, this new level of intelligence-per-parameter means achieving frontier-level capabilities with significantly less hardware overhead (source: Google). The 31B Dense model, meanwhile, is optimized for maximum quality, making it ideal for fine-tuning and complex enterprise orchestration.
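The difference between total and active parameters can be worked through with simple arithmetic. The sketch below estimates memory for the weights alone at a few common precisions, and the share of parameters a 26B/4B mixture-of-experts model uses per token. The function names are illustrative, and the estimate deliberately ignores the KV cache, activations, and runtime overhead.

```python
def weight_memory_gb(total_params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone (no KV cache, no activations)."""
    total_bytes = total_params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


def active_fraction(active_params_billions: float, total_params_billions: float) -> float:
    """Share of the parameters that take part in computing each token."""
    return active_params_billions / total_params_billions


# 26B MoE: every expert must be resident in memory, but only 4B parameters run per token.
print(f"26B MoE active fraction: {active_fraction(4, 26):.1%}")  # 15.4%

for bits in (16, 8, 4):
    moe = weight_memory_gb(26, bits)
    dense = weight_memory_gb(31, bits)
    print(f"{bits:>2}-bit weights: 26B MoE ~{moe:.1f} GB, 31B Dense ~{dense:.1f} GB")
# 16-bit weights: 26B MoE ~52.0 GB, 31B Dense ~62.0 GB
#  8-bit weights: 26B MoE ~26.0 GB, 31B Dense ~31.0 GB
#  4-bit weights: 26B MoE ~13.0 GB, 31B Dense ~15.5 GB
```

The takeaway is that mixture-of-experts reduces compute per token rather than the memory needed to load the model; quantization is what shrinks the memory footprint.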

What Makes Google Gemma 4 Truly Agentic?

“Agentic AI” is more than a buzzword here — it describes a concrete set of capabilities baked into the architecture of Google Gemma 4 from the ground up.

Native Function Calling

Google Gemma 4 includes native support for function calling, enabling developers to build autonomous agents that plan, navigate apps, and complete tasks on a user’s behalf (source: Google DeepMind). Unlike models that require complex prompt engineering to simulate tool use, Gemma 4 treats function calling as a first-class feature, with structured JSON output and native system instruction support.

Gemma 4 also introduces native handling for the system role, enabling more structured and controllable conversations (source: Google AI), a critical requirement for production agent pipelines.
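A minimal sketch of what the application side of function calling can look like is shown here. It assumes the model emits a JSON object with `name` and `arguments` fields and that tools are described in the JSON-schema style used by OpenAI-format APIs; the exact wire format Gemma 4 produces depends on the runtime and is not confirmed by this article. The `get_weather` tool is hypothetical.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical local tool; a real one would query a weather service."""
    return f"Sunny in {city}"


# Tool description in the JSON-schema style of OpenAI-format APIs (an assumption here).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]

# Maps tool names the model may request to the local functions that implement them.
REGISTRY = {"get_weather": get_weather}


def dispatch(tool_call_json: str) -> str:
    """Parse a structured call emitted by the model and run the matching local function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in REGISTRY:
        raise ValueError(f"model requested unknown tool: {name}")
    return REGISTRY[name](**call["arguments"])


# Example of the kind of structured output a model might emit (format is assumed).
model_output = '{"name": "get_weather", "arguments": {"city": "Lisbon"}}'
print(dispatch(model_output))  # Sunny in Lisbon
```

Routing every call through an explicit registry means the model can only trigger functions the application has chosen to expose.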

Multi-Step Planning and Tool Use

Google Gemma 4 can decompose complex tasks into subtasks, execute steps sequentially, adapt based on intermediate results, and handle errors with retry logic. This enables autonomous agents that don’t need hand-holding for every decision (source: Medium).

This is the defining quality of a true agentic system: not just responding to a prompt, but pursuing a goal. Combined with native tool calling, Google Gemma 4 can query APIs, browse structured data, generate code, and verify its own outputs — all in a single autonomous loop running on your hardware.

The built-in reasoning mode lets the model think step-by-step before answering, with configurable thinking modes across all model sizes (source: LM Studio).
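The plan, execute, and retry pattern described in this section can be sketched as a small control loop. In the example below, `plan` and `execute` are stand-ins for calls to the model and to tools; they are stubbed with hypothetical functions so the loop runs without any model. This is an illustration of the pattern, not Gemma 4’s internal mechanism.

```python
from typing import Callable, List


def run_agent(
    goal: str,
    plan: Callable[[str], List[str]],
    execute: Callable[[str, List[str]], str],
    max_retries: int = 2,
) -> List[str]:
    """Run each planned step in order, retrying a failed step before giving up."""
    results: List[str] = []
    for step in plan(goal):
        for attempt in range(max_retries + 1):
            try:
                # Each step sees earlier results, so it can adapt to them.
                results.append(execute(step, results))
                break  # step succeeded, move on to the next one
            except RuntimeError as err:
                if attempt == max_retries:
                    raise RuntimeError(
                        f"step {step!r} failed after {attempt + 1} attempts"
                    ) from err
    return results


# Hypothetical stubs standing in for the model (planner) and a flaky tool (executor).
attempts = {"fetch": 0}


def fake_plan(goal: str) -> List[str]:
    return ["fetch", "summarize"]


def fake_execute(step: str, previous: List[str]) -> str:
    if step == "fetch":
        attempts["fetch"] += 1
        if attempts["fetch"] == 1:
            raise RuntimeError("transient failure")  # first call fails, retry succeeds
        return "raw data"
    return f"summary of {previous[-1]}"


print(run_agent("weekly report", fake_plan, fake_execute))
# ['raw data', 'summary of raw data']
```

Bounding the number of retries keeps a misbehaving tool from trapping the agent in an endless loop.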

Multimodal Capabilities: Beyond Text

Google Gemma 4 is not just a text model. All models handle a broad range of tasks across text, vision, and audio. Key capabilities include image understanding (object detection, document and PDF parsing, screen and UI understanding, chart comprehension, OCR including multilingual, handwriting recognition), video understanding via sequences of frames, and interleaved multimodal input, which allows text and images to be freely mixed in any order within a single prompt (source: LM Studio).
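As an illustration of interleaved input, the sketch below builds a single user message that mixes text and images in order. It uses the content-part layout of OpenAI-format chat APIs (`text` and `image_url` parts with base64 data URLs); whether a particular Gemma 4 runtime accepts exactly this layout is an assumption, and the helper names are hypothetical.

```python
import base64
from typing import Union


def text_part(text: str) -> dict:
    return {"type": "text", "text": text}


def image_part(image_bytes: bytes, mime: str = "image/png") -> dict:
    """Embed raw image bytes as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}}


def interleaved_message(*parts: Union[str, bytes]) -> dict:
    """Build one user message; str becomes a text part, bytes an image part, order kept."""
    content = [text_part(p) if isinstance(p, str) else image_part(p) for p in parts]
    return {"role": "user", "content": content}


# Placeholder bytes stand in for real image files read from disk.
message = interleaved_message(
    "Compare this chart",
    b"\x89PNG-first",
    "with this one and list the differences.",
    b"\x89PNG-second",
)
print([part["type"] for part in message["content"]])
# ['text', 'image_url', 'text', 'image_url']
```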

The E2B and E4B edge models go further: they feature native audio input for speech recognition and understanding (source: Google), enabling full voice-driven agents that operate completely offline on mobile devices.

For developers building document intelligence tools, visual QA systems, or accessibility applications, this multimodal depth — at edge scale — is unprecedented in open-weight AI.

On-Device AI: Why the Edge Matters

Running AI at the edge means more than saving on cloud costs. It means a fundamentally different relationship between your application and your data.

Consider the tradeoffs between cloud-dependent and on-device AI:

Cloud AI:

- Every API call costs money

- Data leaves your network on every request

- Latency on every interaction

- Dependent on internet connectivity

- Subject to rate limits and provider policy changes

On-Device AI with Google Gemma 4:

- One-time model download, unlimited local use

- Data stays private — never leaves the device

- No network round-trip, so latency depends only on local hardware

- Fully functional offline

- You own and control the entire stack

With Google Gemma 4, developers can go beyond chatbots to build agents and autonomous AI use cases running directly on-device, enabling multi-step planning, autonomous action, offline code generation, and audio-visual processing, all without specialized fine-tuning (source: Google Developers).

According to Google Developers, platform support spans mobile (Android and iOS, with system-wide access via Android AICore), desktop and web (Windows, Linux, macOS via Metal, and native browser execution via WebGPU), and IoT and robotics (Raspberry Pi 5 and Qualcomm Dragonwing IQ8, which also powers Arduino VENTUNO Q).

This is the broadest hardware coverage ever offered by an open-weight model family at this capability level.

Google Gemma 4 vs. Closed Models: The Case for Open Weights

Why choose Google Gemma 4 over proprietary models like GPT-4o or Gemini 1.5 Pro? The answer depends on your priorities, but the case for open weights has never been stronger.

Advantages of Google Gemma 4 over closed models:

- Data sovereignty: Your inputs and outputs never leave your infrastructure, meeting strict compliance requirements for healthcare, legal, and finance sectors.

- Near-zero marginal cost: With no per-token pricing, millions of additional inferences add only power and hardware upkeep.

- Customizability: Developers can fine-tune any variant using Vertex AI Training Clusters, from the E2B model for edge tasks to the 31B dense model for complex enterprise orchestration (source: Google Cloud).

- No vendor lock-in: The Apache 2.0 license allows commercial use, modification, and redistribution, subject only to its attribution and notice conditions.

- Benchmark performance: The 31B and 26B variants deliver scores once reserved for much larger proprietary systems, while the edge models outperform larger models from the Gemma 3 generation (source: CometAPI).

- Offline capability: Mission-critical applications in aviation, defense, industrial IoT, or remote environments can’t depend on cloud uptime.

- Transparency: Google Gemma 4 undergoes the same rigorous safety evaluations as the proprietary Gemini models (source: Google AI), with published model cards and evaluation benchmarks.

The one meaningful trade-off: closed models from major providers may have more recent training data and broader tool integrations out of the box. For most production use cases, however, Google Gemma 4’s function-calling architecture fills that gap effectively.

How to Deploy Google Gemma 4

Option 1: Ollama (Easiest — Local Desktop)

Ollama is the fastest path to running Google Gemma 4 locally. Install Ollama, pull the model, and run it — no GPU required for the smaller variants.

```bash
ollama pull gemma4:e4b
ollama run gemma4:e4b
```

This gives you a local API endpoint compatible with OpenAI-format clients.
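For reference, a minimal client for that local endpoint might look like the sketch below. It uses only the Python standard library and targets Ollama’s OpenAI-compatible chat route on its default port, 11434. The model tag is taken from the commands above; the function names and the 120-second timeout are illustrative assumptions.

```python
import json
import urllib.request

# Ollama's default local address with its OpenAI-compatible chat route.
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"


def build_request(prompt: str, model: str = "gemma4:e4b") -> urllib.request.Request:
    """Build an OpenAI-format chat request for the local server (no network access yet)."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_request(prompt), timeout=120) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the Ollama server running and the model pulled, calling `chat("Explain mixture-of-experts in two sentences.")` should return the model’s reply as a string. Nothing leaves the machine, since the request goes to `localhost`.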

Option 2: Google AI Edge Gallery (Mobile and IoT)

The Google AI Edge Gallery app allows building and experimenting with AI experiences that run entirely on-device. It can generate structured code and control device settings with natural language, all running offline on a phone (source: DEV Community).

The Agent Skills feature provides modular tool packages, each of which gives the model a new capability without bloating the system prompt. Built-in skills include Wikipedia lookups, interactive maps, QR code generation, and mood tracking. Custom skills can be loaded from the community-featured gallery, via a URL, or by importing from a local file (source: DEV Community).

Option 3: Google Cloud (Enterprise Scale)

Gemma 4 is available on Cloud Run using a prebuilt vLLM container with GPU support, and Vertex AI offers managed endpoints with fine-tuning capabilities for enterprise deployments (source: Google Cloud). The Agent Development Kit (ADK) provides the orchestration framework for building production agents on top of either target.

Option 4: LM Studio / llama.cpp (Power Users)

For developers who want full control, Google Gemma 4 weights are available in GGUF format, compatible with llama.cpp, LM Studio, and Jan. Quantized versions of the E2B model run on consumer GPUs with as little as 4GB of VRAM.

Real-World Use Cases for Google Gemma 4

The combination of agentic capabilities, edge deployment, and multimodal processing opens up a new class of applications:

Healthcare: Patient-intake assistants that process clinical notes, images, and lab results entirely on-premises, which supports HIPAA compliance by avoiding cloud data exposure.

Field Operations: Offline AI assistants for technicians, inspectors, or first responders who need reliable AI in areas without internet connectivity.

Developer Tooling: High-quality offline code generation turns your workstation into a local-first AI code assistant (source: Google), with no subscription required and no code leaving your machine.

Education: Multilingual tutoring applications running on low-cost devices. Gemma 4 supports building experiences that transform paragraphs or videos into concise summaries or flashcards for studying, or transform data into interactive visualizations or graphs (source: Google Developers).

Enterprise Automation: By pairing Google Gemma 4’s multi-step planning capabilities with the GKE Agent Sandbox, developers can safely execute LLM-generated code and tool calls within highly isolated, Kubernetes-native environments that offer sub-second cold starts and up to 300 sandboxes per second (source: Google Cloud).

IoT and Robotics: Smart sensors, industrial monitors, and autonomous vehicles that make decisions at the edge using real-time visual and audio data.

Limitations to Know Before Deploying

No model is perfect. Before building on Google Gemma 4, be aware of these constraints:

- Training data cutoff: The training data cutoff is January 2025, meaning the model has no real-time knowledge without external tools (source: CometAPI). For applications requiring current information, pair Gemma 4 with a web search tool or RAG pipeline.

- Audio scope: Audio support is limited to speech, not music, and video input is capped at 60 seconds (source: CometAPI).

- Hallucination risk: Like all large language models, Gemma 4 can generate plausible-sounding but incorrect information. Use the built-in thinking mode and implement output verification for high-stakes applications.

- Hardware minimums: To run the smallest Gemma 4 model, you need at least 4GB of RAM; the largest may require up to 19GB (source: LM Studio).

- Application-level safety: While Google applies rigorous safety filtering during training, developers are responsible for adding application-specific guardrails for their deployment context.
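The training-cutoff limitation above is usually addressed by retrieval-augmented generation (RAG): fetch relevant documents first, then place them in the prompt. The sketch below shows the idea with a deliberately naive keyword-overlap retriever. A production pipeline would typically use embeddings and a vector index instead, and all names and sample documents here are hypothetical.

```python
from typing import List


def score(query: str, document: str) -> int:
    """Count distinct query words that also appear in the document (case-insensitive)."""
    query_words = set(query.lower().split())
    document_words = set(document.lower().split())
    return len(query_words & document_words)


def retrieve(query: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Return the top_k documents with the highest keyword overlap."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: List[str], top_k: int = 2) -> str:
    """Assemble a prompt that grounds the model in the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base with facts newer than the model's training data.
docs = [
    "The office closes at 6 pm on fridays",
    "Bananas are yellow",
    "Support tickets are answered within one business day",
]
print(build_prompt("office hours on fridays", docs, top_k=1))
```

The resulting string is what would be sent to the model as the user message, so the answer draws on current documents rather than on what the model memorized during training.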

Conclusion: The Open-Weight Era Has Arrived

Google Gemma 4 represents a genuine inflection point in the history of open AI. For the first time, a fully open-weight model family can compete with frontier closed models on benchmarks, run autonomous multi-step agents natively, process text, images, video, and audio, and deploy across everything from a Raspberry Pi to an enterprise GPU cluster — all under a license that gives you complete ownership.

For developers, the message is clear: you no longer need to choose between capability and control. Google Gemma 4 gives you both (source: Medium).

Whether you’re a solo developer building a local coding assistant, an enterprise architect designing a sovereign AI infrastructure, or a researcher pushing the boundaries of on-device intelligence, Google Gemma 4 is the foundation to build on. The cloud is optional. The capability is not.

Frequently Asked Questions (FAQ)

1. What is this new AI model and why is it significant?

This latest AI model family represents a shift toward more accessible and flexible artificial intelligence. Unlike traditional systems that rely heavily on cloud infrastructure, it allows developers to run powerful AI directly on their own devices. This reduces dependency on external services and gives organizations more control over how their applications function.

Its significance lies in combining high-level reasoning capabilities with efficient performance. Developers can now build advanced applications without needing massive infrastructure, making AI more practical for everyday use cases.

2. How is this different from traditional AI models?

Most traditional AI systems are closed and require API access, meaning users must send data to external servers for processing. In contrast, this model allows local execution, giving developers the freedom to customize and deploy it as needed.

Another key difference is its ability to handle multi-step tasks. Instead of just answering questions, it can plan actions, execute them, and adjust based on outcomes. This makes it far more useful for real-world automation.

3. Can it work without an internet connection?

Yes, one of the biggest advantages is offline functionality. Once installed on a device, it can perform tasks without needing constant internet access. This is especially useful in environments where connectivity is limited or unreliable.

Running AI locally also enhances privacy, since sensitive data does not need to be transmitted to external servers. This makes it suitable for industries where data security is critical.

4. What are the main use cases?

The range of applications is broad. It can be used to build intelligent assistants, automate repetitive workflows, and analyze complex datasets. Developers can also use it for code generation, document processing, and customer interaction systems.

In addition, its ability to operate on edge devices opens up possibilities in areas like IoT, robotics, and field operations. This flexibility makes it valuable across multiple industries.

5. What does “agentic” capability mean in simple terms?

Agentic capability refers to the ability of a system to act independently toward a goal. Instead of waiting for step-by-step instructions, it can break a task into smaller parts, execute them in order, and adjust as needed.

This allows for more dynamic and intelligent behavior. For example, instead of just answering a query, the system can gather information, process it, and deliver a complete solution.

6. What kind of hardware is needed?

Hardware requirements vary depending on the size of the model being used. Smaller versions can run on everyday devices such as laptops or even smartphones, while larger versions may require more powerful systems like GPUs.

The availability of optimized and compressed versions makes it easier to run on consumer-grade hardware, making advanced AI more accessible to a wider audience.

7. Is it suitable for commercial use?

Yes, it is designed to be flexible for both personal and commercial applications. Businesses can integrate it into their products, customize it for specific needs, and deploy it at scale without heavy licensing restrictions.

This makes it an attractive option for startups and enterprises alike, especially those looking to reduce operational costs while maintaining control over their technology.

8. Are there any limitations to consider?

Like any AI system, it is not perfect. It may occasionally produce inaccurate or incomplete results, especially when dealing with complex or ambiguous inputs. It also does not automatically stay updated with real-time information unless connected to external data sources.

Developers need to implement validation mechanisms and oversight processes to ensure reliable performance, particularly in high-stakes applications.

9. How can someone get started?

Getting started typically involves selecting the appropriate model version and installing it using available tools or platforms. Many frameworks make it easy to run the model locally or integrate it into existing systems.

Once set up, developers can experiment with different use cases, refine workflows, and gradually scale their applications based on performance and requirements.

10. What does this mean for the future of AI?

This approach signals a broader shift toward decentralized and accessible AI. By enabling powerful capabilities to run locally, it reduces reliance on centralized systems and opens the door for more innovative applications.

As the technology continues to evolve, it is likely to play a major role in shaping how AI is built, deployed, and used across industries. The focus will move from just access to AI toward how effectively it is implemented and integrated into real-world solutions.