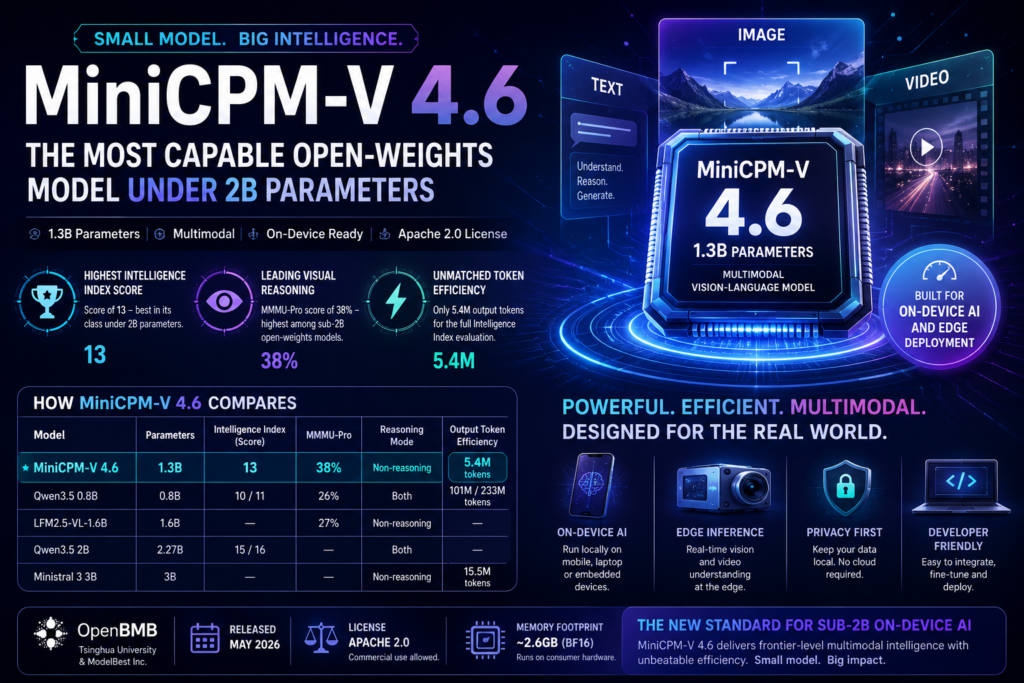

MiniCPM-V 4.6 is a 1.3-billion-parameter vision-language model from OpenBMB that outperforms every open-weights model under 2B parameters on the Artificial Analysis Intelligence Index — including models that use nearly twice as many parameters. If you’re evaluating small language models for on-device AI, edge deployment, or multimodal applications, this release changes your shortlist.

Released in May 2026 under an Apache 2.0 license, MiniCPM-V 4.6 achieves an Intelligence Index score of 13 — the highest for any open-weights model in its size class — while also leading sub-2B models in visual reasoning benchmarks. Here’s what that means for developers, researchers, and enterprises building with constrained compute.

What Is MiniCPM-V 4.6?

MiniCPM-V 4.6 is a dense, multimodal vision-language model with 1.3 billion total parameters, developed by OpenBMB — a collaboration between Tsinghua University’s NLP Lab and Beijing-based ModelBest Inc.

The “V” in the name signals its vision capabilities. Unlike text-only small language models, MiniCPM-V 4.6 accepts text, image, and video as input and produces text output — making it one of the rare sub-2B models capable of native video understanding.

OpenBMB has been building the MiniCPM series since 2022 with a focus on parameter efficiency: squeezing frontier-level capabilities into models that run on laptops, mobile devices, and edge hardware. MiniCPM-V 4.6 is the latest and most capable iteration in that mission.

The model’s weights are publicly available on Hugging Face under the Apache 2.0 license, meaning commercial use is permitted without royalty obligations.

Key Technical Specifications

| Specification | MiniCPM-V 4.6 |

|---|---|

| Total Parameters | 1.3B (dense) |

| Architecture | Dense (not MoE) |

| Context Window | 262,144 tokens |

| Input Modalities | Text, Image, Video |

| Output Modality | Text |

| Precision | BF16 |

| License | Apache 2.0 |

| Intelligence Index Score | 13 |

| MMMU-Pro Score | 38% |

| Output Tokens (Intelligence Index Run) | 5.4M |

| Developer | OpenBMB (Tsinghua University / ModelBest) |

| Release Date | May 2026 |

Why MiniCPM-V 4.6 Redefines the Sub-2B Category

Intelligence Index Performance: The Highest in Its Class

What is the Artificial Analysis Intelligence Index? It’s a composite benchmark that measures general reasoning, instruction-following, knowledge, and language quality across a standardized suite of tasks. Higher scores indicate broader capability.

MiniCPM-V 4.6 scores 13 on the index — the highest score recorded for any open-weights model below 2 billion parameters. For context:

- Qwen3.5 0.8B (Non-reasoning) scores 10 — three points behind MiniCPM-V 4.6, despite being a well-regarded sub-1B model.

- Qwen3.5 2B (Non-reasoning) scores 15 — two points ahead, but requires 1.7× the parameters (2.27B).

This positioning is what benchmark analysts call a “Pareto-optimal” point: MiniCPM-V 4.6 delivers more intelligence per parameter than any comparable open-weights model at this scale.

Multimodal Visual Reasoning: Leading the Sub-2B Pack

What does MiniCPM-V 4.6 score on visual benchmarks? On MMMU-Pro — a rigorous multi-discipline multimodal understanding test — MiniCPM-V 4.6 achieves 38%, the highest score for any open-weights model under 2B parameters.

For comparison:

- LFM2.5-VL-1.6B: 27%

- Qwen3.5 0.8B (Non-reasoning): 26%

This gap is significant. A 12-percentage-point lead over the next contender on MMMU-Pro is not a marginal improvement — it’s a category-level differentiation. And because MiniCPM-V 4.6 also supports video input (not just images), it opens use cases that competitors at this scale simply cannot address.

Token Efficiency: A Standout Feature for On-Device AI

Why does token efficiency matter for small models? On-device AI and edge deployments are constrained by latency, battery life, and compute budgets. A model that produces fewer output tokens to complete the same task runs faster, cheaper, and longer on limited hardware.

MiniCPM-V 4.6 used just 5.4 million output tokens to complete the full Artificial Analysis Intelligence Index evaluation — the lowest count measured for any open-weights model under 4B parameters scoring 10 or above on the Index.

Compare that to its closest competitors:

- Qwen3.5 0.8B (Non-reasoning): 101 million output tokens (~19× more)

- Qwen3.5 0.8B (Reasoning): 233 million output tokens (~43× more)

- Ministral 3 3B (the next most efficient): 15.5 million output tokens (~3× more)

This is MiniCPM-V 4.6’s most underappreciated advantage. Raw intelligence scores attract headlines, but in production environments — especially on-device AI deployments where every token costs compute — efficiency can be the deciding factor.

MiniCPM-V 4.6 vs. Comparable Models: Side-by-Side

| Model | Parameters | Intelligence Index | MMMU-Pro | Reasoning Mode | Output Token Efficiency |

|---|---|---|---|---|---|

| MiniCPM-V 4.6 | 1.3B | 13 | 38% | Non-reasoning | 5.4M tokens |

| Qwen3.5 0.8B | 0.8B | 10 (Non-reasoning) / 11 (Reasoning) | 26% | Both | 101M / 233M tokens |

| LFM2.5-VL-1.6B | 1.6B | — | 27% | Non-reasoning | — |

| Exaone 4.0 1.2B | 1.2B | ~10 | — | Non-reasoning | — |

| Qwen3.5 2B | 2.27B | 15 (Non-reasoning) / 16 (Reasoning) | — | Both | — |

| Ministral 3 3B | 3B | — | — | Non-reasoning | 15.5M tokens |

Reading the table: MiniCPM-V 4.6 occupies a unique position — top intelligence score in the sub-2B category, top visual reasoning score in the sub-2B category, and the lowest output token count for efficient inference among competitive models under 4B parameters.

Who Should Use MiniCPM-V 4.6?

MiniCPM-V 4.6 is purpose-built for deployment scenarios where compute is constrained and multimodal capability is a requirement. It is an excellent fit for:

- On-device AI applications on mobile, laptop, or embedded hardware where cloud API calls are impractical or cost-prohibitive

- Edge inference pipelines in manufacturing, logistics, or healthcare where images or video frames need real-time analysis without server round-trips

- Researchers and academics building multimodal systems with limited GPU budgets who want a strong vision-language baseline

- Developers prototyping multimodal features who need a lightweight, commercially licensed model to iterate quickly

- Privacy-sensitive applications where sending image or video data to external APIs is not permissible and local inference is required

- Resource-constrained fine-tuning scenarios where the base model’s efficiency means faster experimentation cycles

- Education and personal projects that benefit from Apache 2.0 licensing and a model small enough to run on consumer hardware

Limitations to Understand Before Deploying MiniCPM-V 4.6

Being the strongest model in its size class doesn’t mean MiniCPM-V 4.6 is without trade-offs. Developers should be aware of the following constraints:

Knowledge recall is limited. MiniCPM-V 4.6 scores -85 on AA-Omniscience, the Artificial Analysis knowledge recall benchmark. This is consistent with other sub-2B non-reasoning models (Qwen3.5 0.8B Non-reasoning scores -89; Exaone 4.0 1.2B scores -83), but it confirms that the model has a narrow factual knowledge base. Tasks requiring broad encyclopedic recall or deep domain expertise will underperform relative to larger models.

It is a non-reasoning model. MiniCPM-V 4.6 does not implement chain-of-thought reasoning at inference time. For tasks that require step-by-step logical decomposition — complex math, multi-step coding problems, or nuanced causal analysis — a reasoning-capable model will outperform it.

No confirmed API providers at launch. As of May 2026, no major cloud inference providers have confirmed hosted endpoints for MiniCPM-V 4.6. Users will need to self-host via Hugging Face or local frameworks like llama.cpp, ollama, or transformers.

It is a non-MoE dense model. All 1.3B parameters are active on every forward pass. While this simplifies deployment and avoids the routing overhead of Mixture-of-Experts architectures, it means there is no “selective compute” efficiency during inference beyond the model’s inherent token efficiency.

How to Access MiniCPM-V 4.6

MiniCPM-V 4.6 is available now through the following channels:

Hugging Face Hub — The model weights, model card, and usage documentation are hosted at the OpenBMB organization page on Hugging Face. Search for openbmb/MiniCPM-V to find the MiniCPM-V 4.6 1.3B Instruct repository. The Apache 2.0 license permits commercial and non-commercial use without restriction.

Local inference frameworks — Because the model is dense at 1.3B parameters in BF16 precision, it requires approximately 2.6 GB of VRAM or RAM for loading. This places it within reach of most modern consumer GPUs (8GB VRAM or above) and even some CPU-only inference setups with sufficient system RAM. Compatible inference backends include:

- Hugging Face

transformers(Python, most flexible) llama.cpp(C++, optimized for CPU and consumer GPU inference)ollama(Docker-friendly, easy local deployment)mlx(for Apple Silicon, if converted to MLX format)

Fine-tuning — The Apache 2.0 license and parameter-efficient architecture make MiniCPM-V 4.6 well-suited for supervised fine-tuning (SFT) on domain-specific datasets. Teams with specialized image or video classification tasks can fine-tune on commodity hardware.

Frequently Asked Questions About MiniCPM-V 4.6

What makes MiniCPM-V 4.6 different from other small language models? MiniCPM-V 4.6 combines three advantages rarely found together at the sub-2B scale: top-tier general intelligence (score of 13 on the AI Intelligence Index), native multimodal support for text, image, and video input, and exceptional token efficiency (5.4M tokens to complete the full Intelligence Index benchmark). No other open-weights model under 2B parameters matches all three simultaneously.

Can MiniCPM-V 4.6 run on a laptop or smartphone? Yes, with appropriate tooling. At 1.3B parameters in BF16, the model requires roughly 2.6 GB of memory. Modern laptops with integrated GPU memory sharing or 8+ GB RAM can run inference using frameworks like llama.cpp or ollama. Smartphone deployment requires quantized versions (e.g., INT4 or INT8), which are expected to be released by the community on Hugging Face.

Is MiniCPM-V 4.6 free for commercial use? Yes. The model is released under the Apache 2.0 license, which explicitly permits commercial use, modification, and redistribution without royalty fees, provided license notices are preserved.

How does MiniCPM-V 4.6 handle video input? Video input at the 1B parameter scale is described by OpenBMB as “uncommon.” MiniCPM-V 4.6 supports it natively, though performance on long-form video understanding will be constrained by the model’s context window (262K tokens) and the inherent knowledge limitations of a sub-2B model.

Who built MiniCPM-V 4.6? OpenBMB, a collaboration founded in 2022 by Tsinghua University’s NLP Lab and ModelBest Inc., a Beijing-based AI company. The organization has a track record of releasing capable small language models in the MiniCPM family.

The Bottom Line: A New Benchmark for Compact Multimodal Intelligence

The race to build better artificial intelligence models has long been dominated by scale. For years, the industry narrative suggested that stronger performance could only come from adding more parameters, increasing training data, and expanding compute budgets. This assumption pushed the market toward increasingly large systems that required specialized hardware, expensive cloud infrastructure, and dedicated engineering teams just to deploy and maintain them. While those large models remain valuable for advanced reasoning, enterprise-scale workflows, and broad knowledge tasks, they are not always practical for real-world deployment environments where latency, cost, privacy, and hardware constraints matter.

This is where compact models are beginning to reshape the conversation.

A major shift is now taking place in AI development. Instead of asking how large a model can become, developers and businesses are increasingly asking how much capability can be compressed into a smaller footprint without sacrificing real-world usability. That shift is not theoretical anymore. Compact multimodal systems are proving they can handle increasingly complex tasks while remaining efficient enough for local inference, embedded systems, and consumer-grade hardware.

This release represents an important milestone in that transition.

What makes this model stand out is not just that it performs well for its size, but that it changes expectations for what small models can realistically achieve. Historically, smaller systems have often been treated as lightweight alternatives—useful for simple chatbots, narrow classification tasks, or educational experimentation, but rarely serious contenders for production-grade multimodal workloads. That perception is becoming outdated.

This model demonstrates that compact architectures can now deliver strong general intelligence, visual understanding, and efficient inference in a single deployable package. That combination matters more than raw benchmark numbers because practical AI adoption is rarely about isolated scores. It is about whether a system can be integrated into products, deployed reliably, and operated within business constraints.

For many teams, the real deployment bottlenecks are no longer purely about model quality. They are operational.

Cloud dependency introduces recurring API costs, data transfer concerns, rate limits, and latency overhead. In regulated industries or privacy-sensitive environments, routing image or video data through external infrastructure may not even be an option. A locally deployable model avoids many of these issues by enabling inference directly on hardware controlled by the developer or organization.

This has implications across industries.

In healthcare settings, visual data often contains highly sensitive information. Keeping inference local can reduce compliance concerns while improving response times. In manufacturing environments, edge systems need near-instant analysis of camera feeds, defect detection, or process monitoring without relying on unstable network connections. In logistics, warehouse systems increasingly depend on real-time visual intelligence for inventory analysis, scanning, and operational automation.

Consumer applications benefit just as much.

Mobile AI assistants, offline productivity tools, smart cameras, document analysis apps, and educational software all require models that can function reliably without continuous internet access. Users increasingly expect AI capabilities to feel instantaneous. Delays caused by cloud round-trips degrade user experience, drain battery, and introduce dependency on external services.

A smaller model with strong multimodal performance solves many of these problems elegantly.

Another important factor is cost efficiency. Large models are often impressive in demonstrations but expensive in sustained operation. Organizations testing AI products quickly discover that inference cost scales with usage, and cloud-based architectures can become financially inefficient as adoption grows. Local deployment changes the economics by shifting cost from recurring usage to hardware provisioning and optimization.

That is especially attractive for startups, research labs, and mid-sized businesses with limited infrastructure budgets.

A compact model lowers the barrier to experimentation. Teams can prototype faster, fine-tune more affordably, and iterate without burning through API credits or provisioning enterprise GPUs. This accessibility expands who can participate in advanced AI development. Instead of limiting multimodal experimentation to well-funded labs, compact systems make deployment practical for smaller teams and independent developers.

The licensing model also matters.

Commercial restrictions have slowed adoption of many otherwise capable open models. Businesses evaluating open-source AI often face uncertainty around redistribution, monetization, or product integration. A permissive license removes much of this friction, allowing teams to test, adapt, and deploy with far greater confidence.

This creates strategic flexibility.

Organizations are no longer forced to choose between closed proprietary APIs and weak open alternatives. They can adopt a compact open model, customize it for domain-specific use cases, and retain infrastructure control. That matters not only for cost, but for long-term product ownership.

Of course, compact systems are not universal replacements.

There are still meaningful trade-offs. Smaller architectures naturally have limited world knowledge, narrower factual recall, and reduced reasoning depth compared to larger frontier systems. Tasks requiring deep research, complex coding workflows, advanced mathematical decomposition, or highly nuanced long-form reasoning will still favor larger or reasoning-optimized architectures.

That limitation should not be misunderstood as a weakness unique to this release. It is a reality of model scaling economics.

The more useful question is not whether a compact model can outperform a frontier system on every task—it cannot. The more relevant question is whether it is sufficient for the majority of practical workflows that need speed, multimodal understanding, local control, and affordable deployment.

In many cases, the answer is now yes.

That is what makes this release notable. It is not simply another incremental benchmark improvement or a smaller alternative for hobbyists. It represents a broader maturity point in the compact AI ecosystem. Small models are becoming legitimate deployment choices rather than fallback options.

This trend is likely to accelerate.

As hardware optimization improves, quantization techniques become more accessible, and developer tooling matures, compact multimodal systems will continue gaining adoption. The future of AI will not be defined solely by increasingly large centralized systems. It will also include distributed intelligence running across laptops, phones, embedded devices, cameras, industrial equipment, and local servers.

That future requires models built with efficiency as a first-class objective.

This release aligns closely with that direction. It combines practical deployment characteristics with competitive multimodal performance, making it one of the clearest signals yet that compact AI has entered a new phase of relevance.

For developers, this means reevaluating assumptions.

A small model no longer automatically means compromise. In the right deployment context, a compact multimodal architecture may be the more rational engineering decision than a larger, more expensive alternative. Performance must always be evaluated relative to constraints, not in isolation.

This is ultimately why the release matters.

It is less about a single benchmark win and more about what that win represents: a growing proof point that intelligent, efficient, and locally deployable multimodal systems are no longer aspirational. They are here, practical, and increasingly competitive.

For teams building the next generation of AI-powered products, that changes the evaluation landscape significantly.

The era of assuming “bigger is always better” is beginning to crack. What replaces it is a more nuanced reality where capability, efficiency, deployability, and cost all matter together.

And in that new equation, compact multimodal systems are no longer the underdogs. They are becoming the practical standard.