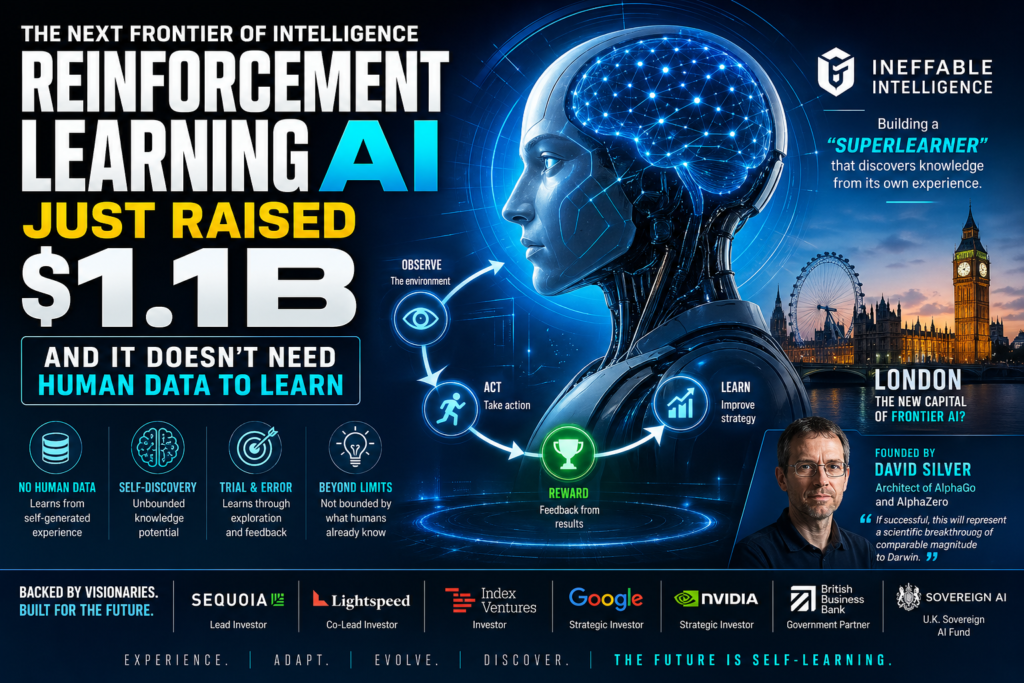

The next frontier of artificial intelligence may not need a single human-written word to become smarter. Ineffable Intelligence, a London-based AI lab founded by former DeepMind researcher David Silver, closed a $1.1 billion funding round in April 2026 — betting that reinforcement learning AI can surpass the capabilities of today’s large language models by learning entirely from its own experience.

This is not a minor technical tweak. It’s a fundamental rethink of how intelligence is built.

What Is Ineffable Intelligence?

Ineffable Intelligence is a British AI startup founded by David Silver, one of the world’s foremost experts in reinforcement learning. The company launched its public website in April 2026 alongside the announcement of its $1.1 billion Series A raise at a $5.1 billion valuation — making it an instant “pentacorn.”

Its stated mission: build a “superlearner” — an AI system capable of discovering knowledge and skills from its own experience, without relying on human-generated data.

That last phrase is what sets it apart from virtually every major AI system deployed today.

What Does “AI That Learns Without Human Data” Actually Mean?

Short answer: It means building an AI that discovers knowledge the same way a child explores a room — by interacting, failing, adjusting, and improving — rather than by reading millions of human-authored books and websites.

Today’s most capable AI systems, including ChatGPT, Gemini, and Claude, are built on a paradigm called supervised learning. They are trained on enormous datasets of human-generated text, code, and images. The AI learns patterns in that data and becomes skilled at replicating and recombining them.

Reinforcement learning AI works differently. Instead of learning from a static dataset, it learns by taking actions in an environment, observing the outcomes, and updating its behavior based on feedback — often defined as a reward signal. There’s no need for a human to label what’s “correct.” The system figures that out on its own.(reinforcement learning AI, AI without human data, superlearner AI, David Silver AI startup, AlphaZero successor )

Reinforcement Learning vs. Supervised Learning: A Clear Comparison

| Feature | Supervised Learning (LLMs) | Reinforcement Learning AI |

|---|---|---|

| Data source | Human-generated text/images | Self-generated experience |

| Learning mechanism | Pattern matching on labeled data | Trial-and-error with reward signals |

| Knowledge ceiling | Limited by training data | Potentially unbounded |

| Human oversight needed | High (data curation, labeling) | Lower (reward function design) |

| Best at | Language tasks, summarization | Strategy, discovery, reasoning |

| Example systems | GPT-4, Claude, Gemini | AlphaGo, AlphaZero, Ineffable’s “superlearner” |

The promise of reinforcement learning AI, at its most ambitious, is that it isn’t bounded by the limits of what humans already know. If the reward function is well-designed, the system can discover strategies, solutions, and knowledge that no human has yet articulated.

David Silver: The Mind Behind the Mission

If there is anyone credentialed to pursue this vision, it is David Silver.

Silver spent more than a decade at Google DeepMind, where he led the reinforcement learning research team. During that time, he was centrally involved in developing some of the most stunning demonstrations of machine intelligence ever witnessed:

- AlphaGo — the first AI to defeat a professional human player at the board game Go, widely considered one of the most complex strategy games in existence.

- AlphaZero — perhaps Silver’s most celebrated work. AlphaZero was given only the rules of chess, shogi, and Go. Within 24 hours of self-play, it surpassed every previously existing computer program — and all human grandmasters. No human games were used for training. No human strategies were fed in. It discovered everything on its own.

Silver is also a professor at University College London. He has described Ineffable Intelligence as “his life’s work.”

The ambition is stated plainly on the company’s website: “If successful, this will represent a scientific breakthrough of comparable magnitude to Darwin: where his law explained all Life, our law will explain and build all Intelligence.”

The $1.1B Funding Round: Who’s Betting Big and Why

Ineffable Intelligence’s raise is one of the largest for any AI startup at such an early stage. The round was led by Sequoia Capital and Lightspeed Venture Partners, two of Silicon Valley’s most prestigious venture firms. But the investor list reads like a who’s-who of both private capital and sovereign power:

Key Investors at a Glance

| Investor | Type | Significance |

|---|---|---|

| Sequoia Capital | Venture Capital | Round lead; backed Google, Apple, OpenAI |

| Lightspeed Venture Partners | Venture Capital | Round co-lead; major AI portfolio |

| Index Ventures | Venture Capital | Europe’s top VC, London-based |

| Strategic | DeepMind’s parent company | |

| Nvidia | Strategic | World’s leading AI chip maker |

| British Business Bank | Government | U.K. public financial institution |

| Sovereign AI | Government Fund | U.K.’s new sovereign AI venture fund |

The inclusion of Google and Nvidia signals strategic conviction — both companies have direct stakes in the trajectory of reinforcement learning AI as a field. Nvidia’s chips power most AI training runs; Google’s DeepMind is Silver’s former employer and the birthplace of AlphaZero.

Perhaps most notable is the participation of Sovereign AI, the U.K.’s newly launched national venture fund for artificial intelligence. This is a government-backed vote of confidence in Ineffable’s approach — and in Britain’s ambitions as an AI superpower.

The Superlearner Concept Explained

What is a “superlearner”? In Ineffable Intelligence’s framing, a superlearner is an AI agent that can discover any knowledge domain from scratch — not by reading about it, but by experiencing it.

The concept draws directly on the success of AlphaZero. That system was given a set of rules and a goal (win the game), and it bootstrapped its own expertise through millions of games played against itself. A superlearner would apply this same principle more broadly — potentially to science, mathematics, engineering, medicine, and beyond.

The core claim is this: human-generated data is a ceiling. The sum of all human knowledge is vast, but it is finite, biased, and sometimes wrong. A reinforcement learning AI that generates its own experience is constrained by none of those limitations. It can explore areas of knowledge space that no human has mapped.

This is the bet Ineffable Intelligence is making with $1.1 billion in backing.

Why This Matters: The Known Limits of Large Language Models

To understand why reinforcement learning AI is attracting this level of capital and attention, it helps to understand what current AI systems cannot do well.

Large language models like GPT-5, Claude, and Gemini are extraordinarily capable at tasks involving language: summarizing documents, writing code, answering questions, translating text. But they have well-documented limitations:

- They hallucinate — they generate plausible-sounding but factually incorrect information because they are pattern-matching, not reasoning from first principles.

- They are bounded by training data — an LLM cannot know what it hasn’t been trained on, and its knowledge is frozen at a cutoff date.

- They don’t truly discover — they recombine existing human knowledge rather than generating novel insight.

- They require massive human-curated datasets — building a competitive LLM today requires billions of dollars in data collection, labeling, and curation.

Reinforcement learning AI addresses each of these in principle. A system learning from direct experience doesn’t rely on text corpora, doesn’t inherit human biases in the same way, and can — at least theoretically — discover genuinely new knowledge.

The question is whether Ineffable Intelligence can translate that theoretical potential into a real, deployable system. That remains unproven. But the investors apparently believe the odds are worth a $5.1 billion bet.

London’s Moment: A New AI Capital Is Taking Shape

Ineffable Intelligence’s raise is not happening in a vacuum. It is part of a broader, accelerating trend: London is establishing itself as a serious global hub for frontier AI research.

Several forces are converging:

- DeepMind’s legacy: Google acquired DeepMind in 2014, but the lab has remained rooted in London. Over a decade, it has trained a generation of world-class AI researchers — many of whom are now founding their own companies.

- The “DeepMind mafia”: Multiple former DeepMind researchers are now at the helm of well-funded startups. Silver at Ineffable Intelligence. Tim Rocktäschel co-founding Recursive Superintelligence (reportedly valued at up to $1 billion). This alumni network is becoming a gravitational force.

- U.K. government investment: The launch of Sovereign AI and the British Business Bank’s participation in this round signal that the U.K. government is actively trying to anchor frontier AI development on home soil.

- Big Tech scouting: Jeff Bezos’ AI lab, Project Prometheus, is reportedly in talks to establish office space near Google’s London AI hub.

London is beginning to look less like a secondary AI market and more like a genuine peer to San Francisco.

Honest Caveats: What We Don’t Know Yet

Intellectual honesty requires acknowledging what remains uncertain.

The superlearner is theoretical. Ineffable Intelligence has announced a vision and raised capital against it. It has not yet demonstrated a system that does what it claims. The gap between AlphaZero (mastering a bounded game with clear rules) and a general superlearner (discovering knowledge across open-ended domains) is enormous.

Reinforcement learning has hard problems. Designing reward functions for complex, real-world environments is notoriously difficult. Poorly designed rewards lead to systems that “game” the metric in unintended ways. Scaling these methods beyond games and puzzles has proven challenging for the entire field.

The valuation is extraordinary for pre-product. A $5.1 billion valuation with no deployed product reflects the market’s hunger for bets on foundational AI — not evidence of commercial traction.

The Darwin comparison is audacious. Comparing your potential breakthrough to the theory of evolution is the kind of claim that either ages very well or becomes an embarrassing footnote. History will decide which.

None of this invalidates Silver’s vision. It contextualizes it.

What This Means for the Future of AI

Whether or not Ineffable Intelligence achieves its specific goals, its emergence signals something important about where the frontier of artificial intelligence is moving.

The current paradigm — scaling LLMs on ever-larger human datasets — is showing signs of diminishing returns. The next leap may require a different kind of machine learning altogether. Reinforcement learning AI, with its capacity for self-directed discovery, is one of the most credible candidates for what comes next.

Here’s what to watch for:

- Early demos: Will Ineffable release any proof-of-concept demonstrations of its superlearner? Even partial results would be scientifically significant.

- Competitor responses: How will DeepMind, OpenAI, and Anthropic respond to a well-funded, focused push on reinforcement learning as an alternative to LLMs?

- Regulatory attention: A self-directed AI that discovers knowledge without human oversight raises governance questions that regulators haven’t fully grappled with yet.

- The London effect: Will Ineffable’s raise trigger a further acceleration of talent and capital into the U.K. AI ecosystem?

The company may be one of the most important AI bets of the decade — or it may prove that the problem is harder than anyone expected. Either outcome will teach the field something valuable.

Key Takeaways

- Ineffable Intelligence raised $1.1B at a $5.1B valuation to build a “superlearner” powered by reinforcement learning AI.

- Founded by David Silver, architect of AlphaZero and former head of DeepMind’s RL team.

- The core thesis: reinforcement learning AI can discover knowledge without human data, transcending the limits of large language models.

- Backed by Sequoia, Lightspeed, Google, Nvidia, and the U.K. government’s Sovereign AI fund.

- The company is unproven at scale, but its vision represents one of the most credible alternatives to the LLM paradigm.

- London is emerging as a serious global hub for frontier AI, fueled by DeepMind’s alumni network and government support.

Frequently Asked Questions

What is reinforcement learning AI? Reinforcement learning AI is a type of machine learning in which an AI system learns by interacting with an environment, taking actions, and receiving feedback in the form of rewards or penalties — without relying on human-labeled training data.

How is Ineffable Intelligence different from OpenAI or Anthropic? OpenAI and Anthropic build large language models trained primarily on human-generated text. Ineffable Intelligence is pursuing a reinforcement learning AI approach, where the system learns from self-generated experience rather than existing human data.

Who is David Silver? David Silver is a British AI researcher and professor at University College London. He spent over a decade at Google DeepMind, where he led the reinforcement learning team and co-developed AlphaGo and AlphaZero — AI systems that achieved superhuman performance in board games through pure self-play.

What is a “superlearner”? Ineffable Intelligence’s term for an AI agent capable of discovering knowledge across any domain by learning from its own experience — analogous to how AlphaZero mastered chess without studying human games, but applied to open-ended real-world knowledge.

Why do investors believe in this approach? AlphaZero’s success demonstrated that reinforcement learning AI can achieve superhuman performance in complex domains without human data. The theory is that scaling this approach beyond games could produce AI systems with genuinely novel capabilities — not bounded by the limits of existing human knowledge.

Conclusion

The rise of reinforcement learning AI marks a pivotal shift in how we think about intelligence, both artificial and human. For years, the dominant paradigm in artificial intelligence has relied heavily on human-generated data—massive datasets of text, images, and code that models learn to mimic and recombine. While this approach has produced remarkable systems, it has also revealed clear limitations. Models constrained by existing knowledge can only extrapolate so far. This is where reinforcement learning AI introduces a fundamentally different trajectory—one that is not bounded by what humans already know, but instead driven by what machines can discover on their own.

At the heart of this transformation is the idea that intelligence can emerge through interaction, experimentation, and feedback. Rather than passively absorbing information, reinforcement learning AI actively engages with environments, learns from consequences, and refines its strategies over time. This approach mirrors how humans and animals learn in the real world, making it a more natural and potentially more powerful pathway toward general intelligence. The concept of a “superlearner” pushes this idea even further, suggesting a future where AI systems can independently explore complex domains such as science, medicine, and engineering without needing human instruction at every step.

However, it is important to remain grounded in reality. While the promise of reinforcement learning AI is immense, the challenges are equally significant. Designing effective reward systems, scaling learning to open-ended environments, and ensuring safe, aligned behavior are all unresolved problems. The leap from mastering structured games to navigating the complexity of the real world is not trivial. This means that while the vision is compelling, execution will determine whether it becomes a true breakthrough or remains an ambitious experiment.

From an industry perspective, the massive investment flowing into this space signals strong belief in its potential. When top venture firms, technology giants, and governments align behind a single idea, it reflects more than hype—it reflects a strategic bet on the future. The growing focus on reinforcement learning AI also suggests that the AI landscape is entering a new phase, where innovation is no longer just about scaling models, but about redefining how they learn altogether.

Looking ahead, the evolution of reinforcement learning AI could reshape not just technology, but entire industries and knowledge systems. If successful, it may unlock capabilities that go beyond automation and into true discovery—where machines generate insights that humans have never conceived. At the same time, it will raise important questions about control, ethics, and the role of human oversight in a world where machines can learn independently.

In the end, this moment represents both an opportunity and a test. The future of AI may not be determined solely by how much data we feed into systems, but by how effectively we enable them to learn on their own. And in that future, reinforcement learning AI could very well be the foundation upon which the next generation of intelligence is built.