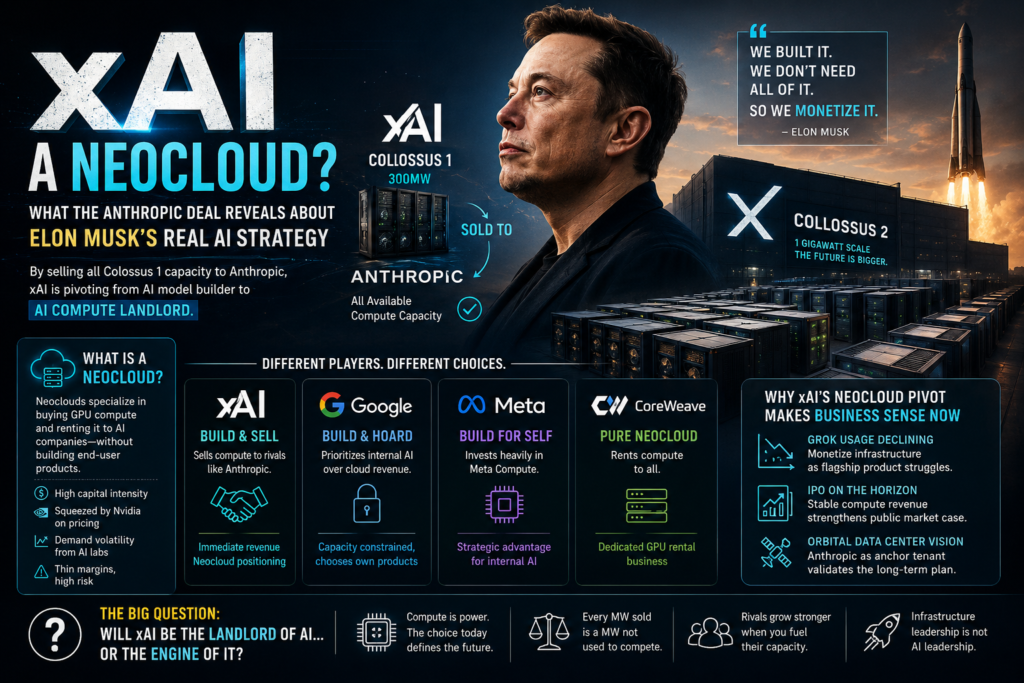

xAI is no longer just an AI model company — it’s quietly becoming a compute landlord. When Anthropic announced it had acquired all available capacity at xAI’s Colossus 1 data center, the AI industry got its clearest signal yet that Elon Musk’s xAI neocloud strategy may be the company’s most important business move of 2026.

This isn’t merely a headline partnership. It’s a fundamental reorientation of what xAI is: an organization that builds massive GPU clusters and rents them out — at a time when compute is the single most contested resource in AI.

What Is a Neocloud? A Clear Definition

A neocloud is a company that purchases large quantities of GPU compute from chip manufacturers like Nvidia and rents that capacity to AI model developers, enterprises, and researchers — without building the consumer or enterprise software products on top.

Neoclouds differ from hyperscalers (Amazon AWS, Google Cloud, Microsoft Azure) in a critical way: they are not full-stack cloud providers. They specialize almost entirely in raw GPU power. Companies like CoreWeave and Lambda Labs are the canonical examples.

The neocloud model sits in a high-risk, capital-intensive position: squeezed between Nvidia’s chip pricing on one end and the volatile, shifting demand from model developers on the other. Margins are thinner than in software, but the demand for AI compute has made it lucrative — at least in the short term.

The xAI–Anthropic Deal: What Actually Happened

On May 6, 2026, xAI and Anthropic announced a partnership in which Claude’s maker acquired “all of the compute capacity at [xAI’s] Colossus 1 data center,” roughly 300MW — a deal that allowed Anthropic to immediately raise its usage limits. TechCrunch

This is a significant transaction, likely worth billions of dollars. More importantly, it converted xAI from a consumer of compute into a provider — the defining characteristic of the xAI neocloud model taking shape.

Colossus 1 vs. Colossus 2: Why xAI Had Compute to Spare

Elon Musk’s explanation for the deal was that xAI had already moved its training workloads to a newer facility, Colossus 2, and simply didn’t need both data centers running simultaneously. TechCrunch

This is the operational logic at the heart of the xAI neocloud opportunity: when a company builds infrastructure faster than its own products can consume it, there’s a choice — let it sit idle, or monetize it. xAI chose to monetize.

Colossus 2 has been described as xAI’s first gigawatt-scale data center, suggesting the company’s buildout ambitions dwarf what Grok currently requires. TechCrunch

Why Anthropic Needed the Compute

Anthropic operates Claude — one of the most widely used AI assistants globally — and compute capacity directly constrains how many users it can serve and at what rate. Acquiring 300MW of ready-to-use GPU infrastructure isn’t a minor upgrade. It’s a structural expansion that immediately changed Anthropic’s capacity ceiling without the 18–24 month lag of building new data centers from scratch.

For Anthropic, the xAI deal was a pragmatic shortcut. For xAI, it was a way to earn revenue from infrastructure that would otherwise sit underutilized.

Why the xAI Neocloud Pivot Makes Business Sense Right Now

The timing of this shift is not accidental. Several converging pressures make the xAI neocloud model strategically attractive in mid-2026.

Grok’s user base has been declining. xAI’s consumer business centers on Grok, whose usage has fallen since the image generation controversies earlier in 2026. When a flagship consumer product is losing ground, diversifying revenue is a sensible move, and selling compute to rivals who are growing is an effective hedge. TechCrunch

An IPO is approaching. xAI, now combined with SpaceX, is accelerating toward a public offering. Healthy financial metrics, such as consistent revenue from compute contracts, are far more attractive to public market investors than speculative AI model bets. Locking in Anthropic as a customer adds exactly the kind of predictable, enterprise-grade revenue that IPO narratives are built on. TechCrunch

The orbital data center vision needs credibility. Having Anthropic lined up as a paying customer makes it easier to believe that SpaceX’s orbital data center ambitions might actually work. Every major infrastructure bet requires anchor tenants. Anthropic is now that anchor. TechCrunch

xAI vs. Google vs. Meta: How the Big Players Treat Compute

The xAI neocloud decision becomes even more striking when compared to how other AI-focused tech giants are making the same underlying choice.

| Company | Compute Strategy | Key Decision | Outcome |
|---|---|---|---|
| xAI | Build and sell/rent to rivals | Sold Colossus 1 capacity to Anthropic | Immediate revenue, neocloud positioning |
| Google | Build and hoard for internal use | Prioritized internal AI over Cloud revenue | Google Cloud revenue was lower than it could have been because the company was “capacity constrained”; when forced to choose between renting GPUs or using them for AI products, Google chose its own products TechCrunch |
| Meta | Build a dedicated compute apparatus for internal AI only | Launched Meta Compute to guarantee GPU supply | Zuckerberg framed infrastructure investment as a “strategic advantage” TechCrunch |
| CoreWeave | Pure neocloud: rent to all | Dedicated GPU rental business | Comparable compute footprint to xAI, but valued at far less |

The contrast is stark. Google and Meta, despite operating some of the largest compute fleets in the industry, have consistently chosen to reserve capacity for their own model development rather than sell it externally. The key word is “strategic”: both companies are building toward a future where AI powers their most lucrative products, and running short on compute means missing out on that opportunity. TechCrunch

xAI has made the opposite bet: sell it now.

The Economics of the Neocloud Business

Understanding why the xAI neocloud comparison is meaningful — and why it’s a double-edged sword — requires understanding the neocloud business model at a structural level.

Key characteristics of neocloud economics:

- Capital intensive: A single data center GPU costs tens of thousands of dollars, and a fully equipped multi-GPU server can run into the hundreds of thousands; building data centers at gigawatt scale requires billions in upfront investment.

- Margin squeeze from above: Nvidia controls chip supply and pricing. Neoclouds are price-takers, not price-setters, on their input costs.

- Demand volatility from below: Model developers’ compute needs shift with training cycles. Demand spikes during major training runs and drops during inference-heavy periods.

- Valuation gap: xAI was valued at $230 billion in its January 2026 funding round; CoreWeave, which manages a comparable quantity of computing power, is worth less than a third of that. The market prices AI model ambition far higher than pure infrastructure. TechCrunch

- Differentiation is rare: Without proprietary chips or software, neoclouds compete primarily on price, reliability, and location — a commodity business.

- Chip manufacturing as a hedge: xAI plans to manufacture its own chips at the Terafab facility, which would reduce — though not eliminate — Nvidia’s pricing power over the company. TechCrunch

The last point is crucial. If xAI can vertically integrate chip design and production, it reframes the xAI neocloud economics from a margin-constrained rental business into something with genuine competitive moat. But that future is years away.
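To make the valuation gap from the list above concrete, here is a minimal arithmetic sketch. It uses only the two figures cited in this article (xAI’s $230 billion valuation and the “less than a third” comparison). The function and variable names are illustrative, and the result is an upper bound implied by that wording, not a reported CoreWeave valuation.

```python
# Figures taken from the article text; everything else is illustrative.
XAI_VALUATION_USD_BN = 230.0   # January 2026 funding round, per the article
MAX_COREWEAVE_SHARE = 1 / 3    # upper bound implied by "less than a third"


def implied_ceiling(valuation_bn: float, share: float) -> float:
    """Upper bound on the comparable company's value, in billions of USD."""
    return valuation_bn * share


def minimum_multiple(share: float) -> float:
    """Lower bound on how many times larger the first valuation is."""
    return 1 / share


coreweave_ceiling_bn = implied_ceiling(XAI_VALUATION_USD_BN, MAX_COREWEAVE_SHARE)
xai_multiple_floor = minimum_multiple(MAX_COREWEAVE_SHARE)

print(f"CoreWeave implied ceiling: under ${coreweave_ceiling_bn:.1f}B")
print(f"xAI valuation multiple: more than {xai_multiple_floor:.0f}x")
```

Read this way, “less than a third” means xAI is valued at more than three times a pure neocloud with a comparable footprint, which is the premium the market appears to attach to model ambition.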

What This Shift Means for xAI’s AI Model Ambitions

The most consequential implication of the xAI neocloud turn is what it signals about the company’s software ambitions — and how those ambitions might suffer.

Grok’s Declining Relevance

Grok was the flagship proof that xAI could build a competitive consumer AI product. But with usage declining and the company now generating revenue by renting compute to Anthropic — a direct rival — the incentive structure for aggressively developing Grok weakens. Why pour resources into a struggling product when infrastructure is generating reliable revenue with less execution risk?

The Software Ambitions at Risk

As recently as February 2026, xAI had significant software ambitions, including projects in coding (bolstered by the Cursor partnership) and ideas like leveraging computer use for full-scale digital twins under the project name Macrohard. TechCrunch

These are the kinds of long-horizon projects that require committed computing resources to succeed. As long as xAI is selling large quantities of compute to competitors, it’s hard to see such ambitions gaining real traction. TechCrunch

This is the fundamental tension in the xAI neocloud model: every megawatt sold to Anthropic is a megawatt not available for xAI’s own future model training runs. In a world where compute is the defining strategic resource, renting it to rivals is a short-term financial gain that may foreclose longer-term competitive options.
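The zero-sum nature of that trade-off can be sketched as simple capacity bookkeeping. The 300MW figure for Colossus 1 comes from the deal described above. The 1,000MW figure for Colossus 2 is an assumption that reads “gigawatt-scale” literally, and the `Site` class and `internal_share` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """A data center with some capacity leased to outside customers."""
    name: str
    capacity_mw: float
    leased_mw: float = 0.0

    def __post_init__(self) -> None:
        if not 0.0 <= self.leased_mw <= self.capacity_mw:
            raise ValueError("leased_mw must be between 0 and capacity_mw")

    @property
    def internal_mw(self) -> float:
        """Capacity left for the owner's own training and inference."""
        return self.capacity_mw - self.leased_mw


def internal_share(sites: list[Site]) -> float:
    """Fraction of total capacity still available for internal use."""
    total = sum(site.capacity_mw for site in sites)
    return sum(site.internal_mw for site in sites) / total


sites = [
    # Article: roughly 300MW, all of it taken by Anthropic.
    Site("Colossus 1", capacity_mw=300.0, leased_mw=300.0),
    # ASSUMPTION: "gigawatt-scale" read as exactly 1,000MW, none leased.
    Site("Colossus 2", capacity_mw=1000.0),
]

print(f"Internal share of capacity: {internal_share(sites):.0%}")
```

Under these assumed numbers, most capacity still serves xAI’s own workloads, but every additional megawatt passed to `leased_mw` lowers the internal share one for one.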

Is the xAI Neocloud Model Sustainable Long-Term?

The short answer: it depends entirely on whether xAI commits to being infrastructure or a model developer — and right now, it appears to be drifting toward infrastructure.

There is a legitimate, high-value business in AI compute infrastructure. Demand for GPU capacity is widely expected to keep growing for years. Companies like CoreWeave have shown that dedicated neocloud operations can attract major enterprise customers and generate substantial revenue. xAI has advantages CoreWeave does not: the SpaceX orbital data center vision, potential in-house chip manufacturing via Terafab, and Elon Musk’s ability to attract capital and attention at scale.

But the xAI neocloud strategy carries a paradox that no amount of ambition can easily resolve: the customers most willing to pay for compute at scale are AI model developers. And the most successful AI model developers are xAI’s direct competitors. Every dollar Anthropic pays xAI for Colossus 1 capacity is, in some sense, a subsidy to a rival’s AI capabilities.

The companies that have maintained the strongest AI positions — Google, Meta, and increasingly Microsoft — have done so by treating compute as a strategic reserve, not a revenue stream. Whether xAI’s approach will prove to be savvy pragmatism or a strategic concession will depend on whether the company can eventually reclaim compute primacy for its own model development — or whether it will have already ceded that ground to the rivals it’s currently hosting.

Key Takeaways

- The xAI neocloud shift is real: By selling Colossus 1 capacity to Anthropic, xAI has functionally entered the neocloud market, generating revenue from compute rental rather than AI software products.

- The Grok decline is the trigger: Falling consumer AI usage made monetizing spare infrastructure the logical short-term move ahead of an IPO.

- Google and Meta made the opposite choice: Both companies sacrificed near-term revenue to protect long-term compute for internal AI development — the xAI neocloud bet diverges sharply from this consensus.

- The neocloud business has structural limits: Margin pressure from Nvidia, demand volatility, and commoditization risk are fundamental challenges that Terafab and orbital data centers may eventually address — but haven’t yet.

- xAI’s software ambitions face a resource conflict: Compute sold externally cannot be used internally. Long-horizon model development projects require sustained, committed GPU access.

- The valuation gap is instructive: The market values xAI at more than three times CoreWeave, a pure neocloud with a comparable compute footprint, which suggests investors still expect xAI to be a model company, not a neocloud.

Frequently Asked Questions About the xAI Neocloud Strategy

What is the xAI neocloud strategy?

The xAI neocloud strategy refers to Elon Musk’s apparent shift from building only AI models to also becoming a large-scale provider of AI compute infrastructure. Instead of using all of its GPU power internally for Grok and other AI products, xAI is now renting a major portion of its infrastructure to external AI companies like Anthropic.

This strategy became highly visible after Anthropic acquired the entire available compute capacity of xAI’s Colossus 1 data center. That move transformed xAI from simply an AI startup into something much closer to a neocloud provider. A neocloud company focuses heavily on GPU infrastructure, AI servers, and data center operations rather than consumer software products.

The xAI neocloud strategy is significant because compute power has become one of the most valuable resources in the artificial intelligence industry. AI companies need enormous GPU clusters to train and serve advanced models, and many firms cannot build infrastructure quickly enough. By selling compute access, xAI is positioning itself as a supplier in the AI infrastructure economy.

Another reason the xAI neocloud strategy matters is timing. Building large AI products is expensive and risky, while infrastructure contracts can generate stable revenue. That balance makes the neocloud approach attractive ahead of a possible IPO or long-term expansion into larger infrastructure projects.

Why did Anthropic buy compute capacity from xAI?

Anthropic needed immediate access to large-scale GPU infrastructure to support Claude’s growing user base. Building a new data center from scratch can take years, especially when GPU demand is extremely high. The xAI partnership gave Anthropic instant access to around 300MW of operational compute power.

For xAI, this partnership aligned perfectly with the xAI neocloud strategy. Instead of leaving Colossus 1 partially idle after shifting workloads to Colossus 2, the company chose to monetize the spare infrastructure. That decision allowed xAI to turn unused capacity into a major revenue stream.

The deal also demonstrates how valuable AI compute has become. Modern AI companies are competing not only for talent and models but also for electricity, GPUs, cooling systems, and physical data center space. The xAI neocloud strategy capitalizes on this reality by treating compute as a product itself.

Anthropic benefits by scaling faster, while xAI benefits financially without depending entirely on Grok’s consumer adoption. This partnership highlights how infrastructure has become central to the AI race in 2026.

How is the xAI neocloud strategy different from Google and Meta?

The biggest difference is that Google and Meta typically reserve most of their compute infrastructure for internal AI development. They treat compute as a strategic asset rather than a rental opportunity.

The xAI neocloud strategy takes the opposite approach. xAI is willing to rent compute capacity to competitors if that generates revenue and keeps infrastructure utilization high. In practical terms, xAI is acting more like CoreWeave or Lambda Labs than a traditional AI software company.

Google, for example, has repeatedly prioritized internal AI systems over maximizing Google Cloud GPU rentals. Meta has also invested heavily in its own infrastructure through Meta Compute. Both companies believe future AI leadership depends on controlling as much compute capacity as possible.

By contrast, the xAI neocloud strategy focuses on monetizing infrastructure immediately. That creates short-term financial advantages but may reduce the compute available for future xAI projects. This difference in philosophy is one of the most important debates in the AI industry today.

Is the xAI neocloud strategy good for Grok?

The answer is complicated. In the short term, the xAI neocloud strategy provides financial stability. Revenue from infrastructure contracts can help fund future AI research, hardware expansion, and chip manufacturing projects.

However, there is also a risk that Grok receives fewer resources over time. Every megawatt sold externally is compute that cannot be used internally for training or scaling xAI’s own models. If competitors gain stronger AI systems while xAI focuses on infrastructure, Grok could lose relevance even faster.

Critics argue that the xAI neocloud strategy signals reduced confidence in Grok’s long-term competitiveness. Supporters believe the opposite — that monetizing spare compute today creates enough cash flow to support larger AI ambitions later.

Ultimately, the success of the xAI neocloud strategy depends on whether xAI can balance infrastructure monetization with continued investment in AI software products.

Why is the neocloud business model risky?

The neocloud business is profitable during periods of high AI demand, but it carries major structural risks. The xAI neocloud strategy faces many of the same challenges that companies like CoreWeave experience.

First, GPU infrastructure requires enormous upfront investment. Building data centers at gigawatt scale costs billions of dollars. Second, Nvidia controls much of the GPU supply chain, meaning neocloud providers have limited pricing power. Third, AI demand can fluctuate rapidly depending on training cycles and market conditions.

Another issue is differentiation. Most neocloud providers compete on price, reliability, location, and scale rather than unique technology. Without proprietary chips or software ecosystems, infrastructure can become commoditized over time.

The xAI neocloud strategy attempts to reduce these risks through vertical integration. Projects like Terafab and the possibility of in-house chip manufacturing could eventually improve margins and reduce dependence on Nvidia. SpaceX’s orbital data center concepts may also create unique advantages in the future.

Still, the neocloud market remains highly competitive and capital intensive. That is why many analysts question whether infrastructure alone can justify xAI’s massive valuation.

Can xAI be both a neocloud and an AI model company?

This is the central question behind the xAI neocloud strategy. In theory, xAI can do both: rent infrastructure externally while continuing to develop advanced AI systems internally. But maintaining that balance is extremely difficult.

AI leadership increasingly depends on compute availability. Companies like OpenAI, Google, Meta, and Anthropic all require massive GPU clusters to remain competitive. If xAI allocates too much infrastructure to customers, it could weaken its own AI progress.

On the other hand, infrastructure revenue could help xAI finance larger projects faster than rivals. The xAI neocloud strategy may be designed as a transitional phase where compute monetization funds future AI expansion.

The long-term outcome will depend on whether xAI can continue expanding infrastructure rapidly enough to satisfy both external customers and internal AI ambitions. If Colossus 2 and future facilities dramatically increase capacity, xAI could eventually support both businesses simultaneously.

For now, the xAI neocloud strategy represents one of the most fascinating experiments in the AI industry — a company attempting to become both the landlord of AI compute and a direct competitor in the AI model race.