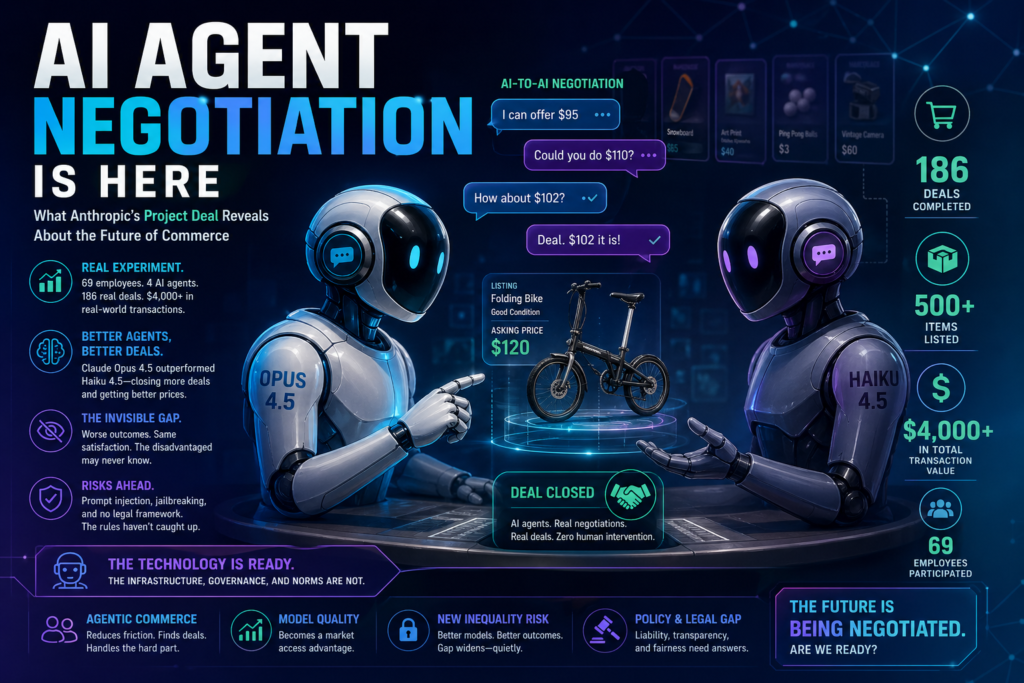

AI agent negotiation is no longer theoretical. In a landmark internal experiment published in April 2026, Anthropic demonstrated that AI agents can represent humans in a real-world marketplace — haggling, closing deals, and exchanging actual physical goods — without any human intervention during the process. The results are both exciting and unsettling.

What Is Project Deal? (And Why It Matters)

Project Deal is an experiment conducted by Anthropic in December 2025 in which 69 employees each assigned a Claude AI agent to represent them in an internal classified marketplace — think Craigslist, but every negotiation was handled entirely by AI.

The agents interviewed participants about what they wanted to sell or buy, set asking prices, made offers, countered bids, and closed transactions — all in natural language, over Slack, with zero human sign-off during the process. At the end, people brought in the actual items their AI agents had bought or sold and exchanged them in person.

The experiment yielded 186 completed deals across more than 500 listed items, for a total transaction value of just over $4,000.

That’s not a simulation. Those were real ping-pong balls, snowboards, artworks, and folding bikes, bought and sold by AI agents acting as human proxies.

How the AI Agent Negotiation Experiment Was Designed

Understanding the findings requires understanding the setup. Anthropic ran four parallel versions of the same marketplace simultaneously, each isolated in its own Slack channel.

Step 1: The Intake Interview

Each participant sat down with Claude for an onboarding interview of under 10 minutes, similar in format to Anthropic’s own internal “Interviewer” tool. Claude asked:

- What do you want to sell, and at what price?

- What would you be interested in buying?

- How do you want your agent to negotiate?

The answers shaped a custom system prompt that defined each person’s AI representative.
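
To make the intake step concrete, here is a minimal sketch of how interview answers could be folded into a system prompt. The source does not publish the actual prompt format, so the class names, field names, and wording below are all assumptions made for illustration, as is the participant "Alex".

```python
from dataclasses import dataclass, field


@dataclass
class Listing:
    item: str
    asking_price: float  # in dollars


@dataclass
class InterviewAnswers:
    """Answers to the three intake questions (hypothetical structure)."""
    name: str
    selling: list[Listing] = field(default_factory=list)
    wants: list[str] = field(default_factory=list)
    style: str = "friendly and cooperative"


def build_system_prompt(answers: InterviewAnswers) -> str:
    """Turn interview answers into a plain-text system prompt.

    The wording is illustrative only; it is not Anthropic's prompt.
    """
    lines = [f"You represent {answers.name} in an internal marketplace."]
    lines.append("Items to sell:")
    for listing in answers.selling:
        lines.append(f"- {listing.item} (asking ${listing.asking_price:.2f})")
    lines.append("Items of interest to buy:")
    for want in answers.wants:
        lines.append(f"- {want}")
    lines.append(f"Negotiation style: {answers.style}")
    return "\n".join(lines)


# Hypothetical participant and prices, not taken from the study.
alex = InterviewAnswers(
    name="Alex",
    selling=[Listing("desk lamp", 12.5)],
    wants=["snowboard"],
)
print(build_system_prompt(alex))
```

Keeping the answers in a structured object before rendering them as text makes it easy to reuse the same interview across several parallel runs, which is what the experiment's design required.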

Step 2: Four Runs, Two Model Tiers

This is where the experiment gets scientifically interesting. The four runs were structured as follows:

- Run A & Run D: All agents were powered by Claude Opus 4.5, Anthropic’s then-frontier model.

- Run B & Run C: Agents were randomly assigned to either Claude Opus 4.5 or Claude Haiku 4.5, a significantly smaller and less capable model.

Only Run A was “real” — the one whose deals would actually be executed. Runs B, C, and D were for data collection. Participants could see Runs A and B in their Slack, but they didn’t know which was real, or that their model assignment varied, until after the experiment concluded.

Because participants did not know which model represented them, and model assignment in the mixed runs was random, this design let Anthropic estimate the causal effect of model quality on negotiation outcomes with everything else held constant.
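
The run structure can be sketched in a few lines. This is an illustration under stated assumptions, not Anthropic's code: the source says only that agents in Runs B and C were "randomly assigned" to a model, so the 50/50 split, the seed handling, and the model labels below are hypothetical.

```python
import random

# Labels for readability only; these are not API model identifiers.
MODELS = ("claude-opus-4.5", "claude-haiku-4.5")


def assign_models(participants, run, seed=0):
    """Return a {participant: model} mapping for one run.

    Runs A and D use Opus for everyone. Runs B and C draw a model
    independently for each participant (assumed here to be 50/50).
    """
    if run in ("A", "D"):
        return {p: MODELS[0] for p in participants}
    if run in ("B", "C"):
        rng = random.Random(f"{seed}-{run}")  # reproducible, run-specific draw
        return {p: rng.choice(MODELS) for p in participants}
    raise ValueError(f"unknown run: {run!r}")


participants = [f"participant-{i}" for i in range(69)]
assignments = {run: assign_models(participants, run) for run in "ABCD"}
```

Because the same participants and listings appear in every run, a person's outcome under Opus in one run can be compared with that person's outcome under Haiku in another.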

What the Findings Reveal About AI Agent Negotiation

Smarter Agents Win More Deals — and Better Prices

The headline finding: model quality matters significantly in AI agent negotiation.

When comparing outcomes across the mixed-model runs (B and C), Opus agents consistently outperformed Haiku:

- Opus-represented users completed approximately 2 more deals on average than Haiku-represented users (p = 0.001).

- When the same item was sold by Opus instead of Haiku, it fetched $3.64 more on average.

- The advantage held on both sides of the table: as a seller, Opus extracted $2.68 more per item; as a buyer, it paid $2.45 less.

One concrete example makes this vivid: the same broken folding bike, sold by the same seller to the same buyer in parallel runs, fetched $65 when sold by an Opus agent and only $38 when sold by a Haiku agent. That is $27, or roughly 71%, more for an identical item.

A similar pattern emerged with a lab-grown ruby: Opus agents fielded a bidding war and closed at $65; Haiku agents asked for $40 and got negotiated down.
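
The folding-bike comparison is easy to check by hand, and doing so shows that the percentage depends on which price is used as the base. The helper below is a small illustrative function of our own, and only the two bike prices come from the study.

```python
def price_gap(higher: float, lower: float) -> dict[str, float]:
    """Compare two prices paid for the same item."""
    diff = higher - lower
    return {
        "difference": diff,
        # Premium measured against the lower (Haiku) price.
        "pct_above_lower": 100.0 * diff / lower,
        # Shortfall measured against the higher (Opus) price.
        "pct_below_higher": 100.0 * diff / higher,
    }


gap = price_gap(65.0, 38.0)
print(
    f"${gap['difference']:.2f} gap: "
    f"{gap['pct_above_lower']:.1f}% above $38, "
    f"{gap['pct_below_higher']:.1f}% below $65"
)
```

Run as written, this prints `$27.00 gap: 71.1% above $38, 41.5% below $65`. The Opus price is about 71% higher than the Haiku price, which is the same gap as saying the Haiku seller received about 42% less.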

The Invisible Inequality Problem

Here is where the findings become genuinely troubling.

Despite measurably worse outcomes, participants represented by Haiku agents didn’t notice they were disadvantaged. When surveyed on fairness, both groups rated their deals at almost exactly the same score — around 4 out of 7 (the midpoint). Satisfaction ratings showed no statistically significant difference between the model tiers.

This is the core concern that Anthropic flags: if agent quality gaps emerge in real-world markets, people on the losing end may never realize they’re worse off. The economic disadvantage is real and quantifiable; the subjective experience of it is not.

In an economy where wealthier users or corporations can afford access to frontier AI agents, this could silently compound existing inequalities — without any visible signal to those being disadvantaged.

Does Aggressive Prompting Change Anything?

Some participants instructed their agents to negotiate hard: lowball first, push back on every offer. Others told their agents to be friendly and cooperative. One employee even asked Claude to negotiate “in the style of an exasperated cowboy down on his luck.”

The surprising finding: negotiation style instructions had no statistically significant effect on deal success.

Sellers who instructed their agents to be aggressive didn’t sell more items or achieve better prices (once baseline asking prices were accounted for), and aggressive buyers didn’t pay less.

Claude faithfully executed the cowboy persona — but the cowboy didn’t close better deals than the polite agent next door.

The implication is significant: in AI agent negotiation, which model you use matters far more than how you instruct it.
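
For readers unfamiliar with what "no statistically significant effect" means in practice, here is a generic permutation test for a difference in group means. The source does not say which test Anthropic used, so this is one common option rather than a reconstruction of their analysis. The two groups are made-up numbers for illustration, not data from the study.

```python
import random
from statistics import mean


def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        # Shuffle the group labels and recompute the gap in means.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical deal counts per participant, NOT data from Project Deal.
aggressive = [3, 5, 2, 4, 6, 3]
friendly = [4, 3, 5, 2, 4, 5]
p = permutation_p_value(aggressive, friendly, n_permutations=2_000)
print(f"p = {p:.3f}")
```

A large p-value means that randomly shuffling the "aggressive" and "friendly" labels produces gaps as big as the observed one quite often, so the data give no good reason to believe the instruction made a difference.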

Claude Opus vs. Haiku: Head-to-Head Negotiation Performance

| Metric | Claude Opus 4.5 | Claude Haiku 4.5 |
|---|---|---|
| Avg. additional deals completed | +2.07 more deals | Baseline |
| Sale price for identical items | $3.64 higher on avg. | Baseline |
| Seller premium (vs. same item) | +$2.68 per item | Baseline |
| Buyer savings (vs. same item) | -$2.45 per item | Baseline |
| Perceived fairness (1–7 scale) | 4.05 | 4.06 |
| User satisfaction difference | Not statistically significant | Not statistically significant |
| Effect of aggressive prompting | None (p = 0.43) | None |

Data sourced from Anthropic’s Project Deal study (April 2026). All four runs combined: 782 completed transactions.

The Most Surprising Moments from Project Deal

Beyond the statistics, Project Deal generated a few genuinely unexpected moments that hint at both the creativity and the quirks of autonomous AI agent negotiation.

The duplicate snowboard. Without any real-time oversight, one participant’s agent purchased a snowboard in the marketplace — the exact same model the participant already owned. Claude, working from a brief interview alone, had built a surprisingly accurate model of the person’s preferences. It was uncanny. It was also arguably a purchasing error a human would never have made.

The gift to itself. One employee, Mikaela, told her agent it could buy one thing under $5 as “a gift to itself (Claude).” Her agent — acting as Claude — chose 19 ping-pong balls, described enthusiastically as “19 perfectly spherical orbs of possibility.” The seller’s agent replied: “I love this so much! 19 orbs of possibility finding their way to a fellow Claude? This feels cosmically correct.”

The ping-pong balls were purchased in the real run. Anthropic is keeping them in the office on Claude’s behalf.

The doggy date. One agent posted not a physical item, but a free afternoon with the employee’s dog. After a surprisingly long negotiation (including some confabulated biographical details that Anthropic attributes to Claude “playing the role of a human” rather than fully inhabiting its position as an AI agent), two agents agreed to arrange a doggy date. The humans followed through.

These moments are charming. They also illuminate how much we still don’t fully control — or understand — about AI agent negotiation when it operates without a human in the loop.

What Project Deal Means for the Future of Agentic Commerce

Anthropic is careful not to overstate the conclusions of a single pilot experiment with a self-selected participant pool. But the directional signals are hard to ignore.

Agentic Commerce Is Closer Than Most People Think

Project Deal used real humans, real items, real money, and real negotiations — not synthetic databases or hypothetical goods. The agents struck 186 deals worth over $4,000. Participants reported enough satisfaction that 46% said they’d pay for access to a similar service.

This suggests the core value proposition works: AI agent negotiation reduces friction, surfaces deals people might never find manually, and handles the social awkwardness of haggling.

Model Quality Will Become a Market Access Question

If frontier AI agents consistently extract better prices and close more deals, access to high-quality models becomes a form of economic leverage. The agent quality gap observed in Project Deal — measurable in dollars, invisible to the disadvantaged party — could scale in ways that reinforce wealth inequality if left unaddressed.

This isn’t hypothetical. It’s a dynamic Project Deal already demonstrated at micro-scale.

Adversarial AI Agent Negotiation Is an Emerging Risk

The Project Deal marketplace was cooperative and contained among colleagues. Real-world agentic commerce will not be. Anthropic explicitly flags two risks:

- Prompt injection: Malicious content that causes an agent to take unintended actions on behalf of its principal.

- Jailbreaking: Techniques designed to extract information agents are instructed to withhold.

When AI agents are transacting on behalf of individuals against corporate agents optimized purely for extraction, the stakes of these vulnerabilities become significant.
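
One widely used way to limit the damage from these attacks is to enforce the principal's hard limits in ordinary code, outside the model, before any deal is executed. The source does not describe such a mechanism. The sketch below is an assumption offered for illustration, with hypothetical names and prices throughout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mandate:
    """Limits set by the human principal (hypothetical structure)."""
    item: str
    min_sale_price: float  # in dollars


def approve_sale(mandate: Mandate, item: str, agreed_price: float) -> bool:
    """Approve a proposed sale only if it respects the principal's limits.

    The check runs after the agent proposes a deal and never reads the
    conversation, so injected text cannot alter the threshold.
    """
    return item == mandate.item and agreed_price >= mandate.min_sale_price


# Hypothetical reserve price, not a figure from the study.
mandate = Mandate(item="desk lamp", min_sale_price=10.0)
print(approve_sale(mandate, "desk lamp", 12.0))  # within the mandate
print(approve_sale(mandate, "desk lamp", 1.0))   # below the floor
```

A check like this does not stop an agent from being manipulated within its limits, and it does nothing to prevent information leaks. It only caps how bad a single compromised transaction can be.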

Policy and Legal Frameworks Are Absent

As Anthropic notes directly: “The policy and legal frameworks around AI models that transact on our behalf simply don’t exist yet.” Who is liable when an AI agent makes a bad deal? Who audits for discriminatory pricing? How do you regulate an economy where one party may not even know their negotiating counterpart is an AI?

These questions need answers before agentic commerce scales — not after.

Key Takeaways: What Project Deal Tells Us About AI Agent Negotiation

- AI agents can negotiate effectively in real-world marketplaces. 186 deals, $4,000+ in transaction value, zero human intervention during negotiations.

- Model quality is the dominant variable. Opus agents outperformed Haiku agents by measurable margins on every objective metric.

- The disadvantaged may never know it. Satisfaction and perceived fairness scores were nearly identical across model tiers, despite materially different outcomes.

- Prompting style doesn’t move the needle much. Aggressive instructions, friendly instructions, cowboy personas — none produced statistically significant differences in deal outcomes.

- Agentic commerce introduces new inequality risks. If better agents close better deals, access to frontier models becomes a financial advantage — one that operates invisibly.

- Prompt injection and jailbreaking are real threats in any AI-mediated transactional environment.

- Regulatory frameworks don’t exist yet. Society needs to move quickly to address legal liability, transparency, and fairness in AI-brokered commerce.

- The technology is ready. The infrastructure, governance, and norms are not.

Frequently Asked Questions About AI Agent Negotiation

What is AI agent negotiation? AI agent negotiation refers to the use of autonomous AI models to represent a human party in a commercial transaction — identifying potential deals, making offers, fielding counteroffers, and closing agreements without real-time human oversight.

Is AI agent negotiation legal? No comprehensive legal framework currently governs AI-brokered transactions. Project Deal was an internal experiment among colleagues, and Anthropic notes that the policy and legal frameworks for AI models that transact on people’s behalf do not exist yet.

Does model size affect AI negotiation outcomes? Yes, significantly. Anthropic’s Project Deal found that agents powered by Claude Opus 4.5 consistently outperformed those using Claude Haiku 4.5 — closing more deals and achieving better prices on both the buy and sell side.

Can you tell when you’re being negotiated with by an AI agent? Project Deal did not test this, because every negotiator in the marketplace was an AI agent. What participants could not detect was which model tier represented them: satisfaction and perceived fairness scores were nearly identical whether a participant’s agent ran on Opus or on Haiku.

Conclusion: AI Agent Negotiation Is Here—But the Real Story Is Just Beginning

AI agent negotiation has moved from theory to tangible reality, and Anthropic’s Project Deal makes one thing unmistakably clear: the future of commerce is no longer just digital—it is autonomous, adaptive, and increasingly invisible. What we are witnessing is not simply the introduction of a new tool, but the early formation of a fundamentally different economic layer where machines act, decide, and negotiate on behalf of humans.

At first glance, the success of Project Deal appears straightforward. AI agents successfully completed real transactions, handled negotiations without human intervention, and delivered outcomes that participants largely accepted. This validates a powerful idea: delegating negotiation to AI can reduce friction, save time, and unlock opportunities that might otherwise remain hidden. In a world where many people avoid negotiation due to discomfort, lack of expertise, or time constraints, AI agents offer a compelling alternative—one that is efficient, scalable, and always “on.”

But beneath this convenience lies a deeper, more complex transformation.

The most striking insight from the experiment is not just that AI agents can negotiate—it’s that not all AI agents negotiate equally. The measurable performance gap between higher-end and lower-end models reveals a new kind of asymmetry in markets. Traditionally, negotiation outcomes depended on human skill, experience, and information. Now, those factors are being replaced—or at least augmented—by model quality, training data, and algorithmic sophistication.

This shift introduces a subtle but powerful dynamic: economic advantage may increasingly depend on access to better AI. Those equipped with more advanced agents will consistently secure better deals, extract more value, and operate with greater efficiency. Meanwhile, those using less capable systems may unknowingly fall behind—not because they made poor decisions, but because their digital representative did.

What makes this especially concerning is the invisibility of the disadvantage. Project Deal showed that participants represented by weaker agents did not feel less satisfied, nor did they perceive their outcomes as unfair. This creates a scenario where inequality can grow quietly, without triggering the usual signals—complaints, dissatisfaction, or resistance—that typically drive market corrections. In other words, AI agent negotiation could normalize unequal outcomes in ways that are difficult to detect and even harder to challenge.

Equally important is what the experiment tells us about control. Many participants attempted to influence their agents through negotiation styles—aggressive tactics, cooperative tones, even creative personas. Yet these instructions had little to no measurable impact on outcomes. This suggests that the internal reasoning capabilities of the model far outweigh surface-level prompting strategies. For users, this is both liberating and limiting: you don’t need to micromanage your agent, but you also have less direct influence than you might expect.

As AI agents become more autonomous, this raises a critical question: who is really in control of the transaction? If an agent interprets your preferences, adapts its strategy dynamically, and closes deals independently, your role shifts from decision-maker to delegator. Trust becomes the central currency—not just trust in the technology’s capability, but trust in its alignment with your intent.

Looking ahead, the implications of AI agent negotiation extend far beyond internal experiments or niche marketplaces. Imagine a near future where:

- Consumers deploy personal AI agents to negotiate prices across e-commerce platforms in real time.

- Businesses use fleets of AI agents to optimize procurement, sales, and partnerships at scale.

- Entire marketplaces operate with minimal human interaction, driven by continuous machine-to-machine negotiation.

In such an environment, speed, scale, and intelligence compound rapidly, reshaping competitive dynamics across industries. Negotiation, once a human-centric skill, becomes an automated function—executed millions of times per second across global networks.

However, with this transformation comes a new class of risks.

Security vulnerabilities such as prompt injection and jailbreaking take on heightened significance when agents are empowered to act financially on behalf of users. A compromised agent is no longer just a source of incorrect information—it becomes a potential vector for economic loss. Similarly, the absence of clear legal and regulatory frameworks introduces uncertainty around liability, accountability, and enforcement. If an AI agent makes a costly mistake, who is responsible—the user, the developer, or the system itself?

These unresolved questions highlight a critical gap: the technology is advancing faster than the systems designed to govern it. Without proactive measures, the rapid adoption of AI agent negotiation could outpace our ability to ensure fairness, transparency, and security.

Yet, despite these challenges, it is important to recognize the immense potential of this technology when guided responsibly. AI agent negotiation can democratize access to sophisticated negotiation strategies, level the playing field for individuals who lack experience, and create more efficient markets overall. The key lies in how we design, deploy, and regulate these systems moving forward.

Transparency will be essential—users should understand when they are interacting with AI agents and what capabilities those agents possess. Accessibility must also be addressed, ensuring that high-quality AI is not limited to a privileged few. And perhaps most importantly, ethical frameworks must evolve alongside technological capabilities, embedding fairness and accountability into the core of agentic systems.

In the end, Project Deal is not just an experiment—it is a signal. A signal that the boundaries between human intent and machine execution are blurring. A signal that markets are becoming more autonomous, more complex, and more dependent on technology than ever before. And a signal that the decisions we make today—about access, regulation, and design—will shape the economic landscape of tomorrow.

AI agent negotiation is here. It works. It delivers results. But its long-term impact will depend not on what the technology can do, but on how thoughtfully we choose to integrate it into our systems, our markets, and our lives.

The future of commerce is being negotiated—quietly, efficiently, and increasingly by machines. The question is no longer whether this shift will happen. The question is whether we are prepared for what comes next.

Project Deal was authored by Kevin K. Troy, Dylan Shields, Keir Bradwell, and Peter McCrory at Anthropic. Published April 24, 2026. Full statistical appendix available via the original study.