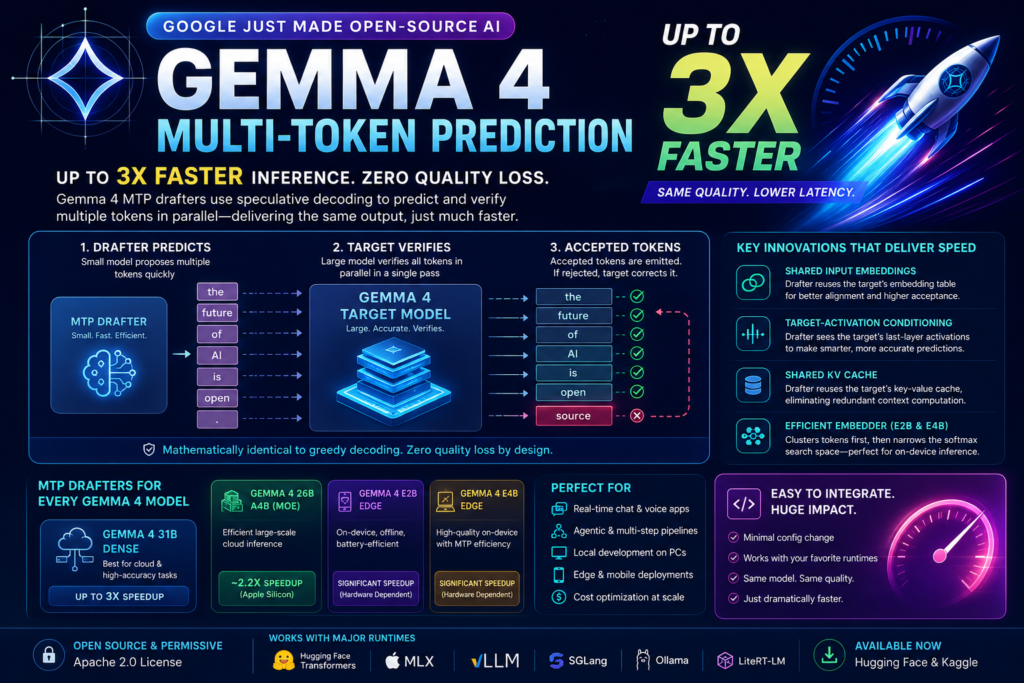

Google’s Gemma 4 multi-token prediction drafters can triple inference speed without sacrificing a single token of output quality — here’s exactly how they work and why it matters for every developer deploying LLMs in production.

If you’ve ever waited too long for a large language model to finish a response — in a chatbot, a coding assistant, or an agentic pipeline — you’ve personally felt the problem that Google just solved. On May 5, 2026, Google released Multi-Token Prediction (MTP) drafters for the entire Gemma 4 model family, delivering up to a 3x speedup without any degradation in output quality or reasoning logic. Google

This isn’t a new model. The underlying Gemma 4 weights are unchanged. What Google shipped is a smarter, faster inference path — one that exploits idle GPU compute to predict multiple tokens at once. The result is dramatically lower latency, lower cloud bills, and a better experience for end users. Let’s break down what Gemma 4 multi-token prediction actually is, how it achieves its speed gains, and what this means for the open-source AI ecosystem.

What Is Gemma 4 Multi-Token Prediction?

Gemma 4 multi-token prediction is an inference optimization technique that pairs each full Gemma 4 target model with a small, tightly coupled “drafter” model. Instead of generating one token per forward pass — the standard autoregressive approach — the drafter rapidly proposes several candidate tokens in advance, and the large target model verifies them all in a single parallel pass.

The key insight: verification is much cheaper than generation from scratch. When the drafter’s predictions are good (i.e., accepted by the target), the system effectively produces multiple tokens in the time it would normally take to produce one. If the target model rejects a drafted token, it still produces the correct token for that position, ensuring that step is not wasted. Google AI

This foundational technique is called speculative decoding, and it has existed since 2022. What makes Gemma 4’s implementation novel is how tightly integrated the drafter is with the target — a design that maximizes acceptance rates and therefore maximizes real-world speedup.

How MTP Drafters Work: Speculative Decoding Explained

The Drafter-Target Architecture

The technique decouples token generation from verification by pairing two models: a lightweight drafter and a heavy target model. Here’s the pipeline in plain terms: marktechpost

- The drafter (small, fast) predicts several tokens ahead autoregressively — in the time it takes the large target model to process just one token.

- The target model (large, accurate) verifies all the drafted tokens in a single parallel forward pass.

- Accepted tokens are emitted all at once. If a token is rejected, the target corrects it and the drafter resumes from that corrected point.

- The output is mathematically identical to greedy decoding from the target alone — there is zero quality regression by design.

The wall-clock gain comes from the ratio of accepted tokens per target forward pass. The higher the acceptance rate, the closer to the theoretical maximum speedup you get.

Three Key Architectural Innovations

Gemma 4’s MTP drafters are not independent small models — they are tightly coupled to the target. Three architectural choices make this work: NYU Shanghai RITS

1. Shared Input Embeddings The drafter reuses the target model’s embedding table instead of learning its own. This means the drafter starts from the same semantic representation as the target, which inherently improves prediction alignment and acceptance rates.

2. Target-Activation Conditioning The drafter concatenates the target model’s last-layer activations with token embeddings and down-projects the result, so it builds directly on representations the target has already computed. In effect, the drafter can “see” what the target was thinking — making its predictions far more likely to be accepted. NYU Shanghai RITS

3. Shared KV Cache Drafters reuse the target’s key-value cache rather than rebuilding context, which removes the dominant prefill cost in long-context generation. For applications with long system prompts or conversation histories, this is a significant advantage. NYU Shanghai RITS

For the on-device edge models (E2B and E4B), an additional innovation applies: an efficient embedder that clusters tokens in advance so the model first predicts a likely cluster, then restricts the final softmax calculation to tokens within it. This cuts the most expensive computation step on memory-constrained mobile hardware.

Measured Speedups: What the Numbers Actually Mean

By using Multi-Token Prediction (MTP) drafters, Gemma 4 models reduce latency bottlenecks and achieve improved responsiveness for developers. Google’s headline figure is up to 3x faster inference — but the practical speedup depends on your hardware and workload. Google

The realized speedup varies sharply with hardware and batch size — about 2.2x on Apple Silicon for the MoE model, and around 1.72x for community EAGLE3 drafters — so the practical move is to benchmark on your own workload before assuming the full 3x figure. claypier

Here’s what drives the variance:

- Batch size matters. At batch size 1 (single-user inference), memory bandwidth is the bottleneck and speculative decoding delivers peak relative gains. At large batch sizes, the GPU is already busy and the marginal benefit shrinks.

- MoE models have a caveat. The 26B A4B Mixture-of-Experts target activates only a subset of experts per token, but verifying a multi-token draft can require additional experts to be loaded from memory — partially offsetting the drafting gain at low batch sizes.

- The quality guarantee holds universally. Because the target model does all verification, accepted tokens are bit-for-bit identical to greedy decoding from the target alone. There is no tradeoff to calibrate. NYU Shanghai RITS

Which Gemma 4 Models Get MTP Drafters?

Google DeepMind shipped MTP drafter models paired with four Gemma 4 variants: the 31B dense flagship, the 26B A4B Mixture-of-Experts model, and the on-device E2B and E4B edge models. Each drafter is published as a standalone checkpoint on Hugging Face and Kaggle. NYU Shanghai RITS

| Gemma 4 Variant | Type | Best For | MTP Speedup (Typical) |

|---|---|---|---|

| Gemma 4 31B | Dense | Cloud, data center, high-accuracy tasks | Up to 3x |

| Gemma 4 26B A4B | Mixture-of-Experts (MoE) | Efficient large-scale cloud inference | ~2.2x (Apple Silicon) |

| Gemma 4 E2B | Edge (on-device) | Mobile, offline, battery-constrained | Significant; exact HW-dependent |

| Gemma 4 E4B | Edge (on-device) | High-quality on-device with MTP efficiency | Significant; exact HW-dependent |

All four drafters ship under the same Apache 2.0 open-source license as the base Gemma 4 models — meaning you can use, modify, and deploy them commercially with no restrictions.

Real-World Use Cases That Benefit Most

The performance profile of Gemma 4 multi-token prediction makes it especially valuable in specific deployment scenarios:

Real-Time Chat and Voice Applications Latency is the enemy of conversational AI. A 2–3x speedup in token generation translates directly to a snappier user experience. Developers can reduce latency dramatically for near real-time chat, voice communication applications, and agentic workflows. AlternativeTo

Agentic and Multi-Step Pipelines In agentic systems where a model calls tools, evaluates results, and generates follow-up reasoning steps, inference happens dozens or hundreds of times per task. Cutting each step’s latency by 2–3x compounds aggressively into faster end-to-end task completion.

Local Development on Consumer Hardware The new inference process allows large Gemma 4 models to run efficiently on personal computers and consumer GPUs for seamless, offline coding and planning. Developers who previously found the 31B model too slow for interactive use can now treat it as a practical daily driver. AlternativeTo

Edge and Mobile Deployments For edge devices, the optimized output speed conserves battery life, benefiting the use of E2B and E4B models. Generating fewer GPU cycles per response is directly proportional to saved battery. Mobile AI applications — offline translation, local document Q&A, on-device coding assistants — become meaningfully more responsive. AlternativeTo

Cost Optimization at Scale For teams running Gemma 4 in the cloud, faster inference means fewer GPU-seconds per request. With no change in output quality, this is essentially a free infrastructure cost reduction. At high query volumes, the savings compound into significant budget impact.

How to Get Started With Gemma 4 MTP Drafters

Getting started requires loading two models together — the target and the drafter — then configuring your runtime to use speculative decoding. The good news: the runtimes that already serve Gemma 4 — Hugging Face Transformers, MLX, vLLM, SGLang, Ollama, and LiteRT-LM — pick up the drafters with minimal configuration. NYU Shanghai RITS

Here’s a quick-start checklist:

- Download the drafter checkpoint from Hugging Face (e.g.,

google/gemma-4-E2B-it-assistant) or Kaggle alongside your existing target model - Load both models in your runtime — the target (e.g.,

google/gemma-4-E2B-it) and the assistant drafter in parallel - Enable speculative decoding in your runtime config — in Hugging Face Transformers this is a one-line argument addition

- Set your draft length (number of tokens to propose per step) — Google recommends tuning this for your acceptance rate; higher draft lengths have more potential upside but more wasted compute if acceptance is low

- Benchmark on your workload — run latency and throughput tests under your actual batch sizes and prompt lengths before pushing to production

- Try the mobile experience via Google AI Edge Gallery for Android or iOS if you’re targeting on-device E-series deployments

- Check the Apache 2.0 license — confirm your deployment context is covered (it almost certainly is)

How Gemma 4 Multi-Token Prediction Compares to the Broader Open-Source Ecosystem

Speculative decoding is not new to the open-source AI world. Several major model families have explored related techniques:

Llama, Qwen, and DeepSeek already train MTP-aware variants, but ship without official drafter checkpoints; community drafters exist but are uneven in quality. A polished Apache-2.0 drafter release sets a baseline that other vendors will likely match. NYU Shanghai RITS

What distinguishes the Gemma 4 multi-token prediction release is the combination of:

- First-party quality — Google built and validated these drafters specifically for each Gemma 4 variant, meaning acceptance rates are maximized by design

- Architectural coupling — shared embeddings, activations, and KV cache are not features you get with a community-built third-party drafter

- Permissive licensing — Apache 2.0 from day one, no restrictions on commercial use

- Runtime breadth — out-of-the-box support across six major inference runtimes plus mobile, eliminating integration friction

The contrast with community-built EAGLE3 drafters is instructive: the EAGLE3 approach is architecturally sound but yields roughly 1.72x speedup in comparable settings, compared to Google’s reported 3x for the purpose-built MTP drafters. That delta reflects the value of first-party architectural integration.

What This Means for the Future of LLM Inference

The Gemma 4 multi-token prediction release signals a broader shift in how the AI industry thinks about model efficiency. The frontier is no longer purely about training smarter models — it’s about deploying existing models smarter.

The release comes just weeks after Gemma 4 surpassed 60 million downloads and directly targets one of the most persistent pain points in deploying large language models: the memory-bandwidth bottleneck that slows token generation regardless of hardware capability. marktechpost

The memory-bandwidth problem is architectural: modern GPUs have enormous compute capacity, but moving model weights from memory to compute cores is slow. Standard autoregressive decoding applies the same heavyweight computation to trivial token predictions (“words” after “Actions speak louder than…”) as it does to complex logical inferences. MTP drafters fix this by exploiting the predictability structure of language — letting a cheap model handle the easy tokens and reserving expensive target-model computation for verification and difficult predictions.

As models grow larger and more capable, inference efficiency will only become more critical. The pattern established by Gemma 4 multi-token prediction — tight drafter-target coupling with shared representations — is likely to become standard practice across the open-source and commercial AI ecosystems.

For developers and organizations deploying LLMs in 2026, the message is clear: faster inference is now available, it’s free (same license, same model quality), and integrating it is a configuration change rather than an engineering project. The question is no longer whether to use Gemma 4 multi-token prediction — it’s how quickly you can ship it.

Key Takeaways

- Gemma 4 multi-token prediction pairs a small drafter with the full Gemma 4 target to propose and verify multiple tokens per forward pass

- The technique delivers up to 3x faster inference with zero quality loss — accepted tokens are bit-for-bit identical to standard decoding

- Four Gemma 4 variants are covered: the 31B Dense, 26B A4B MoE, and the on-device E2B and E4B edge models

- All MTP drafters are available under the Apache 2.0 license on Hugging Face and Kaggle

- Compatible runtimes include Hugging Face Transformers, MLX, vLLM, SGLang, Ollama, LiteRT-LM, and Google AI Edge Gallery

- Real speedup varies by hardware and batch size — benchmark on your specific workload before assuming peak figures

- This release sets a new standard for open-source inference optimization that competitors will need to match

Frequently Asked Questions (FAQ)

What is Gemma 4 multi-token prediction?

Gemma 4 multi-token prediction is an advanced inference optimization technique introduced for Google’s Gemma 4 model family. Instead of generating one token at a time like traditional autoregressive decoding, the system uses a lightweight “drafter” model to predict multiple future tokens simultaneously. The larger target model then verifies those predictions in parallel.

This process dramatically improves inference speed while maintaining the exact same output quality. Because the target model still validates every accepted token, the generated responses remain mathematically identical to standard greedy decoding. Developers benefit from lower latency, faster responses, and reduced GPU costs without retraining or modifying the base model itself.

How does speculative decoding improve LLM inference speed?

Speculative decoding accelerates LLM inference by separating token drafting from token verification. A smaller, faster model predicts several tokens ahead, while the larger model checks them in one pass instead of generating every token individually.

In standard inference, each new token requires a full forward pass through the large model. That becomes expensive for large language models with billions of parameters. With Gemma 4 multi-token prediction, multiple accepted tokens can be emitted after a single verification pass, reducing the total computational workload.

This approach is especially effective for predictable text patterns where the drafter’s guesses are frequently correct. The higher the acceptance rate, the larger the speed improvement.

Does Gemma 4 multi-token prediction reduce output quality?

No. One of the biggest advantages of Gemma 4 multi-token prediction is that it preserves output quality completely. Unlike approximation-based acceleration techniques, speculative decoding guarantees that the verified output remains identical to what the original target model would have generated on its own.

If the drafter predicts an incorrect token, the target model simply rejects it and generates the correct one instead. This means developers do not need to trade accuracy for performance. The reasoning ability, coding quality, and conversational accuracy of Gemma 4 remain unchanged.

That quality guarantee is a major reason why speculative decoding is becoming one of the most important inference optimizations in modern AI systems.

Which Gemma 4 models support MTP drafters?

Google released MTP drafters for multiple Gemma 4 variants, including:

- Gemma 4 31B Dense

- Gemma 4 26B A4B MoE

- Gemma 4 E2B Edge

- Gemma 4 E4B Edge

These models target different deployment scenarios ranging from cloud-scale inference to mobile and offline AI applications. The edge-focused E-series models also include additional efficiency optimizations for battery-constrained devices.

All official MTP drafter checkpoints are available under the Apache 2.0 license, allowing commercial use and integration into production systems.

What are the real-world benefits of Gemma 4 multi-token prediction?

The real-world impact of Gemma 4 multi-token prediction goes beyond benchmark numbers. Faster inference directly improves user experience in applications such as AI chatbots, coding assistants, voice interfaces, AI agents, and document analysis systems.

For businesses running LLMs in production, speculative decoding reduces GPU usage and cloud infrastructure costs. Lower latency also means AI applications feel more responsive and interactive for end users.

On mobile and edge devices, the optimization helps conserve battery life while improving response speed. Developers building offline AI tools or on-device assistants can now deploy larger models more efficiently than before.

As AI adoption grows, inference optimization techniques like MTP are becoming just as important as model training improvements.

Is Gemma 4 multi-token prediction open source?

Yes. Google released the Gemma 4 MTP drafters under the permissive Apache 2.0 open-source license. Developers can freely download, modify, fine-tune, and deploy the models commercially without restrictive licensing concerns.

The drafters are compatible with major AI runtimes including Hugging Face Transformers, vLLM, MLX, Ollama, SGLang, LiteRT-LM, and Google AI Edge tools. This broad compatibility makes integration straightforward for both startups and enterprise AI teams.

The open-source release also strengthens the broader AI ecosystem by making advanced inference acceleration techniques accessible to developers worldwide.