OpenAI Daybreak is a new cybersecurity initiative that embeds AI-powered vulnerability detection, threat modeling, and patch validation directly into the software development lifecycle — shifting security from a reactive afterthought into a proactive engineering discipline. Launched in May 2026, it represents the most significant expansion of OpenAI’s security ambitions to date.

If you work in enterprise security, developer tooling, or software architecture, OpenAI Daybreak is worth understanding now — not after it goes fully public.

What Is OpenAI Daybreak?

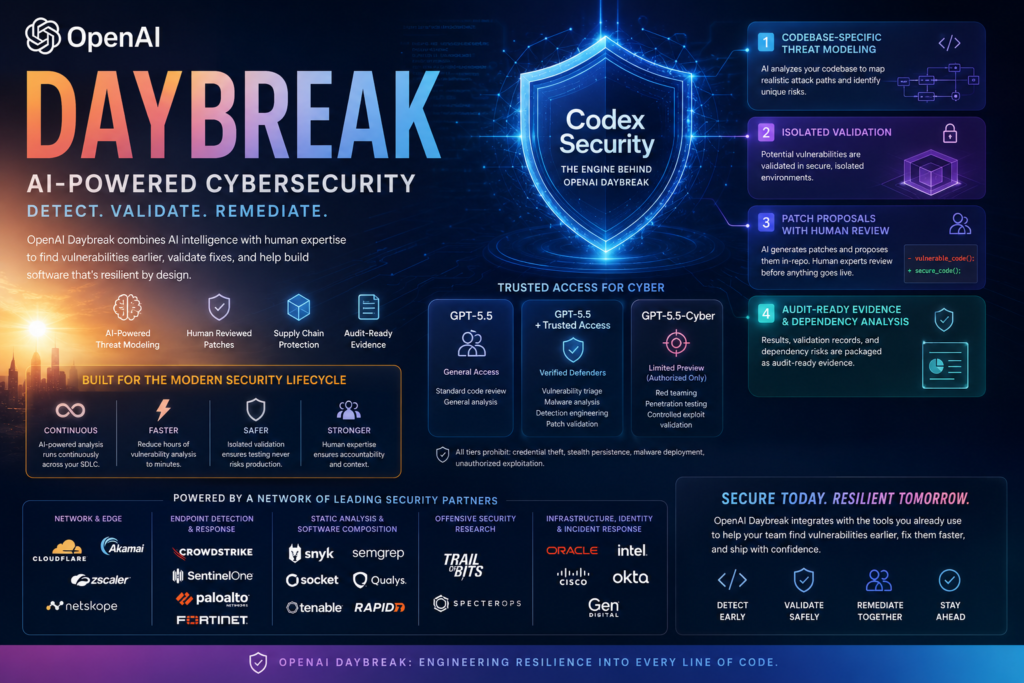

Definition: OpenAI Daybreak is a cybersecurity program that combines OpenAI’s frontier AI models with Codex Security — its agentic coding system — and a network of more than 20 security partners to help organizations find, validate, and remediate software vulnerabilities earlier in the development cycle.

Expansion: Daybreak is not a brand-new product built from scratch. It is a substantial repositioning of Codex Security, OpenAI’s application security agent that launched in March 2026. Where Codex Security debuted as a developer-facing tool for context-aware vulnerability scanning, OpenAI Daybreak evolves it into a full enterprise security platform — complete with enterprise-grade threat modeling, dependency risk analysis, isolated patch validation, and audit-ready evidence logs.

The core philosophical shift behind OpenAI Daybreak is deliberate: rather than treating vulnerability remediation as something that happens after an exploit surfaces, the initiative treats resilience as a design property that must be engineered in from the start. As OpenAI frames it, the goal is software that is “resilient to vulnerabilities by design — not patched reactively after exploits are identified in the wild.”

How OpenAI Daybreak Works: The Technical Workflow

OpenAI Daybreak’s operational pipeline consists of four distinct stages, each of which can feed results into existing security toolchains rather than requiring teams to abandon their current infrastructure.

Stage 1 — Codebase-Specific Threat Modeling

Rather than applying generic vulnerability checklists, Codex Security ingests an organization’s actual repository and constructs a threat model specific to that codebase. It maps realistic attack paths — authentication bypasses, injection points, privilege escalation vectors — based on the real structure of the code, not an abstract template.

This matters because most organizations’ threat models are derived from industry-standard frameworks (OWASP, MITRE ATT&CK) that describe categories of risk but cannot account for the specific logic, third-party integrations, or configuration quirks of a given system. OpenAI Daybreak addresses that gap directly.

Stage 2 — Isolated Validation

Likely vulnerabilities are confirmed in isolated environments that are sandboxed from production systems. This means that the validation step itself cannot introduce risk into a live environment — a key concern with any AI-driven security tooling that might inadvertently execute exploit paths during testing.

Stage 3 — Patch Proposals with Human Review

Codex Security generates patches and proposes them directly within the repository, with scoped access controls and account-level monitoring active throughout. Crucially, no patch is applied autonomously — all proposals go to human reviewers before deployment. OpenAI is explicit that OpenAI Daybreak is not positioned as a fully autonomous remediation platform.

This human-in-the-loop architecture is a deliberate design choice, not a limitation. It preserves accountability, ensures that context-specific judgment is applied before any change reaches production, and satisfies the governance requirements that enterprise security and compliance teams operate under.
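The review gate described above can be illustrated with a small state machine. This is a minimal sketch under stated assumptions, not OpenAI's implementation: the names `PatchProposal`, `PatchState`, `review`, and `apply` are hypothetical and do not come from any Daybreak or Codex Security API. It shows only the invariant the article describes, that a patch cannot be applied unless a human has approved it.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class PatchState(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"
    APPLIED = "applied"


@dataclass
class PatchProposal:
    """Hypothetical record for an AI-generated patch awaiting human review."""
    finding_id: str
    diff: str
    state: PatchState = PatchState.PROPOSED
    reviewer: Optional[str] = None
    history: List[str] = field(default_factory=list)

    def review(self, reviewer: str, approve: bool) -> None:
        # A proposal can be reviewed exactly once, while it is still pending.
        if self.state is not PatchState.PROPOSED:
            raise ValueError(f"cannot review a patch in state {self.state.value}")
        self.reviewer = reviewer
        self.state = PatchState.APPROVED if approve else PatchState.REJECTED
        self.history.append(f"{self.state.value} by {reviewer}")

    def apply(self) -> None:
        # The gate: only a human-approved patch may move to APPLIED.
        if self.state is not PatchState.APPROVED:
            raise PermissionError("patch has not been approved by a human reviewer")
        self.state = PatchState.APPLIED
        self.history.append("applied")
```

Calling `apply()` on a freshly proposed patch raises `PermissionError`; only after `review("alice", approve=True)` does `apply()` succeed. The `history` list keeps a simple trail of who approved what.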

Stage 4 — Audit-Ready Evidence and Dependency Analysis

Beyond first-party code review, OpenAI Daybreak covers the software supply chain layer — analyzing third-party packages and open-source dependencies for known and emerging risks. Results, validation records, and proposed patches are packaged as audit-ready evidence that flows back into an organization’s existing security and compliance systems, creating a traceable record of remediation activity over time.
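One common way to make a remediation record traceable over time is a hash-chained log, where each entry commits to the one before it. The sketch below is an assumption about how such evidence could be structured, not a description of Daybreak's actual evidence format. The function names and the event fields are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: Dict, prev_hash: str) -> str:
    # Serialize deterministically so the same content always hashes the same.
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_evidence(log: List[Dict], event: Dict) -> Dict:
    """Append an event (finding, validation result, patch decision) to the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict]) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], entry["prev_hash"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor who holds the final hash can rerun `verify_chain` to confirm that earlier findings, validation results, and patch decisions have not been edited after the fact.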

According to OpenAI, this pipeline can reduce hours of vulnerability analysis to minutes. The company attributes the efficiency gains to more effective token utilization within Codex Security’s reasoning engine.

The Three-Tier Model Architecture: Trusted Access for Cyber

OpenAI Daybreak does not run on a single AI model. Access is gated through a framework called Trusted Access for Cyber, which segments capabilities into three tiers based on the verified identity and role of the requesting organization.

| Model Tier | Access Level | Primary Use Cases |
|---|---|---|
| GPT-5.5 | General (all users) | Standard code review, general-purpose analysis |
| GPT-5.5 + Trusted Access | Verified defenders | Vulnerability triage, malware analysis, detection engineering, patch validation |
| GPT-5.5-Cyber | Limited preview (authorized only) | Red teaming, penetration testing, controlled exploit validation |

The tiered structure is a direct response to the dual-use reality of frontier AI in cybersecurity. A model capable of reasoning deeply about vulnerabilities, attack paths, and exploit conditions is inherently more dangerous if accessed without proper authorization. GPT-5.5-Cyber — the most capable tier for security research — is gated behind identity verification, scoped access controls, and human review requirements precisely because its capabilities map closely to what a sophisticated threat actor would want to abuse.

Across all tiers, several capabilities are explicitly prohibited: credential theft, stealth persistence mechanisms, malware deployment, and unauthorized exploitation.
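The tier table and the prohibited-capability list can be read as a simple policy lookup. The sketch below encodes them that way. It is illustrative only: `Tier`, `USE_CASES`, and `is_allowed` are hypothetical names, and the rule that a higher tier inherits the use cases of lower tiers is an assumption that the source does not state.

```python
from enum import Enum
from typing import Dict, FrozenSet, List


class Tier(Enum):
    GENERAL = "GPT-5.5"
    TRUSTED = "GPT-5.5 + Trusted Access"
    CYBER = "GPT-5.5-Cyber"


# Prohibited across all tiers, per the article.
PROHIBITED: FrozenSet[str] = frozenset({
    "credential theft",
    "stealth persistence",
    "malware deployment",
    "unauthorized exploitation",
})

# Primary use cases per tier, taken from the table above.
USE_CASES: Dict[Tier, FrozenSet[str]] = {
    Tier.GENERAL: frozenset({"standard code review", "general-purpose analysis"}),
    Tier.TRUSTED: frozenset({
        "vulnerability triage", "malware analysis",
        "detection engineering", "patch validation",
    }),
    Tier.CYBER: frozenset({
        "red teaming", "penetration testing", "controlled exploit validation",
    }),
}

# ASSUMPTION: tiers are cumulative, lowest to highest.
ORDER: List[Tier] = [Tier.GENERAL, Tier.TRUSTED, Tier.CYBER]


def is_allowed(tier: Tier, use_case: str) -> bool:
    """Check a requested use case against the tier policy."""
    if use_case in PROHIBITED:
        return False  # no tier unlocks a prohibited capability
    granted = set()
    for t in ORDER[: ORDER.index(tier) + 1]:
        granted |= USE_CASES[t]
    return use_case in granted
```

Under these assumptions, `is_allowed(Tier.GENERAL, "penetration testing")` is `False`, and `is_allowed(Tier.CYBER, "malware deployment")` is also `False`, because the prohibition check runs before any tier lookup.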

OpenAI Daybreak vs. Traditional Security Tooling: A Comparison

How does OpenAI Daybreak position itself relative to the categories of tooling security teams already use? The table below summarizes the key distinctions.

| Capability | Traditional SAST/DAST Tools | Penetration Testing Services | OpenAI Daybreak |
|---|---|---|---|
| Threat model scope | Generic rule-based | Engagement-specific (manual) | Codebase-specific (AI-generated) |
| Vulnerability discovery speed | Fast (automated scans) | Slow (manual, periodic) | Fast (continuous, AI-driven) |
| Patch generation | Not typically included | Recommendations only | AI-generated, human-reviewed patches |
| Supply chain coverage | Limited (some SCA tools) | Out of scope | Integrated dependency analysis |
| Audit evidence | Log exports | Written reports | Audit-ready evidence fed to existing systems |
| Human review requirement | Not required | Inherent (manual process) | Mandatory before patch application |
| Integration model | Standalone or CI/CD plugin | One-time engagement | Designed to feed into existing toolchains |

The most significant differentiation is not speed or coverage — it is the combination of codebase-aware threat modeling with patch generation and supply chain analysis in a single continuous workflow. Traditional SAST tools can identify categories of vulnerability quickly, but they cannot reason about whether a specific code path in a specific application constitutes an exploitable attack vector in the way a human security researcher would. That reasoning gap is precisely what Codex Security, and by extension OpenAI Daybreak, is designed to close.

The Partner Ecosystem: Why the Network Matters

OpenAI Daybreak launched with more than 20 security partners, each occupying a distinct layer of the security stack. This is not a token partner list — it reflects a deliberate strategy to position OpenAI Daybreak as an orchestration layer that feeds into tooling organizations already trust, rather than as a replacement for those tools.

Network Edge and Traffic: Cloudflare, Akamai, Zscaler, Netskope

Endpoint Detection and Response: CrowdStrike, SentinelOne, Palo Alto Networks, Fortinet

Static Analysis and Software Composition: Snyk, Semgrep, Socket, Qualys, Tenable, Rapid7

Offensive Security Research: Trail of Bits, SpecterOps

Infrastructure, Identity, and Incident Response: Oracle, Intel, Cisco, Okta, Gen Digital

The logic connecting these partners: OpenAI Daybreak’s outputs — vulnerability reports, proposed patches, audit evidence, dependency risk alerts — need somewhere to land. If those outputs cannot flow into the SIEM, the SOAR platform, the CI/CD pipeline, or the endpoint detection tool that a security team already uses daily, the initiative becomes an additional workflow rather than an integrated one. The partner network is the integration surface.
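The "somewhere to land" idea can be sketched as a small router that forwards each output type to whatever tools have registered for it. Everything here is hypothetical: `OutputRouter`, the output kind strings, and the sink callables stand in for real SIEM, SOAR, or CI/CD connectors, none of which are documented in the source.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Sink = Callable[[Dict], None]  # any callable that accepts one output payload


class OutputRouter:
    """Hypothetical fan-out of security outputs to existing tools."""

    def __init__(self) -> None:
        self._sinks: DefaultDict[str, List[Sink]] = defaultdict(list)

    def register(self, kind: str, sink: Sink) -> None:
        # kind is an output type such as "vulnerability_report" or "audit_evidence".
        self._sinks[kind].append(sink)

    def dispatch(self, kind: str, payload: Dict) -> int:
        """Deliver payload to every sink registered for kind; return the count."""
        sinks = self._sinks.get(kind, [])
        for sink in sinks:
            sink(payload)
        return len(sinks)
```

A return value of `0` from `dispatch` is the failure mode the paragraph above warns about: an output with no registered destination becomes a separate workflow that someone has to check by hand.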

Why OpenAI Daybreak Launched Now: The Competitive and Dual-Use Context

OpenAI Daybreak arrived roughly a month after Anthropic announced Project Glasswing and Claude Mythos, its security-focused AI model. Mozilla used Claude Mythos to discover 271 previously unknown vulnerabilities in the Firefox codebase — a concrete demonstration of what frontier AI can accomplish at scale in vulnerability discovery.

That context matters for understanding the timing. The capabilities that make AI useful for defensive security — deep reasoning about code structure, systematic enumeration of attack paths, automated hypothesis generation — are the same capabilities that make it potentially dangerous in the wrong hands. Both OpenAI and Anthropic are navigating this dual-use tension publicly and deliberately.

OpenAI’s response in OpenAI Daybreak is structural: tiered access with identity verification, mandatory human review before any patch reaches production, and explicit restrictions on the most sensitive capabilities. Rather than treating the dual-use risk as a reason to restrict access broadly, the initiative uses it as the justification for a trust framework that expands access in proportion to each organization’s level of verification.

This is a meaningful departure from how AI capabilities have typically been released in consumer contexts. OpenAI Daybreak treats cybersecurity AI more like controlled access to a regulated resource than like a general-purpose API endpoint.

What Security Teams Should Do Right Now

OpenAI Daybreak is not fully public yet. Organizations must request a vulnerability scan or contact OpenAI’s sales team, with broader deployment across industry and government partners planned for the coming weeks. That window — between announcement and general availability — is useful preparation time.

Here is what security and engineering leaders should do in the near term:

- Audit your current threat modeling process. If your organization’s threat models are derived from generic frameworks without codebase-specific analysis, document that gap. OpenAI Daybreak directly addresses it, and quantifying the gap will help build the business case for adoption.

- Inventory your software supply chain exposure. Dependency risk analysis is a core Daybreak capability. Organizations that lack visibility into their third-party package landscape will benefit most from this layer.

- Identify your Trusted Access eligibility. The more capable model tiers — particularly GPT-5.5 with Trusted Access — require verification as a legitimate defender. Begin that process early if your team handles vulnerability triage, malware analysis, or detection engineering.

- Map your existing toolchain integrations. Daybreak is designed to feed into tooling you already have, not replace it. Knowing which SIEM, SOAR, and CI/CD platforms your team depends on will determine how cleanly Daybreak outputs can be absorbed.

- Review the restricted-use policy across all model tiers. Credential theft, stealth persistence, malware deployment, and unauthorized exploitation are explicitly prohibited. Before requesting GPT-5.5-Cyber access, confirm that your red team and penetration testing activities are formally authorized and scoped.

- Watch for CI/CD pipeline integrations and audit-ready evidence logs as early signals of enterprise readiness in the weeks following the broader rollout.
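For the supply chain inventory step in the list above, a Python team can get a first-pass view of its installed dependencies from the standard library alone. This sketch is independent of Daybreak and covers only the current Python environment. The function name `installed_packages` is chosen here for illustration.

```python
from importlib import metadata
from typing import Dict, Optional


def installed_packages() -> Dict[str, Optional[str]]:
    """Map each installed distribution name to its version, sorted by name."""
    inventory: Dict[str, Optional[str]] = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with broken or missing metadata
            inventory[name] = dist.version
    # Case-insensitive sort makes the output stable and easy to diff.
    return dict(sorted(inventory.items(), key=lambda item: item[0].lower()))


if __name__ == "__main__":
    for name, version in installed_packages().items():
        print(f"{name}=={version}")
```

The output lists what is actually installed, including transitive dependencies, which is often a longer list than the packages a team declared directly. It does not cover other ecosystems, container images, or vendored code, so treat it as a starting point for the inventory rather than a complete one.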

Frequently Asked Questions About OpenAI Daybreak

What is the difference between OpenAI Daybreak and Codex Security? Codex Security is the underlying agentic system that powers OpenAI Daybreak. It launched in March 2026 as a research preview for context-aware vulnerability detection. OpenAI Daybreak is the initiative that expands Codex Security’s scope from a developer tool into an enterprise security platform, adding the partner network, tiered model access, and audit-ready evidence capabilities.

Does OpenAI Daybreak autonomously apply patches? No. All patch proposals generated by OpenAI Daybreak require human review before application. OpenAI has been explicit that the platform is not positioned as a fully autonomous remediation system.

Who can access GPT-5.5-Cyber? GPT-5.5-Cyber is currently in limited preview and is restricted to authorized organizations with verified use cases in red teaming, penetration testing, and controlled exploit validation. Access requires going through OpenAI’s Trusted Access for Cyber verification process.

Is OpenAI Daybreak available now? Not fully. As of May 2026, organizations must request a vulnerability scan or contact OpenAI sales. Broader deployment with industry and government partners is expected in the coming weeks.

How does OpenAI Daybreak handle the dual-use risk of AI in cybersecurity? Through the Trusted Access for Cyber framework — tiered model access based on verified identity, scoped access controls, account-level monitoring, and explicit restrictions on capabilities that could enable offensive attacks.

Key Takeaways

The emergence of advanced security automation marks a major turning point in how organizations think about protecting software systems. For years, most engineering teams have operated with a security model that is fundamentally reactive: developers build features, products get shipped, vulnerabilities are later discovered through audits, bug bounty programs, incident reports, or real-world attacks, and only then do teams begin remediation. This cycle has persisted because traditional security tooling has generally been optimized for identifying known patterns rather than understanding the deeper behavioral logic of an application.

What is changing now is not simply the addition of another scanning layer or a more sophisticated static analysis engine. The broader shift is toward systems capable of reasoning about software architecture, dependencies, attack surfaces, and likely exploit paths in a way that more closely resembles how experienced security researchers think. This matters because software vulnerabilities are rarely isolated issues. They often emerge from interactions between business logic, authentication design, infrastructure decisions, third-party integrations, and human assumptions embedded in code over time.

A more intelligent security workflow fundamentally changes where and how risk is addressed. Instead of waiting until a codebase reaches a maturity stage where issues become expensive to fix, organizations can surface structural weaknesses much earlier. This has meaningful downstream effects. Engineering teams spend less time firefighting production issues. Security teams can allocate resources toward strategic risk reduction instead of endless alert triage. Compliance teams gain cleaner evidence trails for audits and regulatory reviews.

Another major implication is the growing importance of context-aware analysis. Legacy tools are often highly effective at catching well-known vulnerability categories but struggle to understand whether a flagged issue is practically exploitable within the real environment. This creates alert fatigue, one of the most persistent pain points in enterprise security. Teams are flooded with warnings, many of which lack urgency or operational relevance. Over time, this erodes trust in the tooling itself.

By contrast, systems capable of evaluating actual code relationships and execution logic can help reduce noise while increasing signal quality. That does not eliminate false positives entirely—no serious security professional expects perfection—but it can meaningfully improve prioritization. A smaller number of high-confidence findings is often more valuable than thousands of generic alerts spread across repositories.

The human role remains essential, and this is an important distinction. Security automation is becoming more powerful, but full autonomy is neither realistic nor desirable for high-stakes environments. Software systems are deeply contextual. A patch that is technically correct may still introduce performance regressions, compatibility issues, workflow disruptions, or hidden business risks. Human review remains critical because security is never purely a technical problem; it is an operational and organizational one as well.

This hybrid model—automation for speed and scale, humans for judgment and accountability—is likely to become the dominant architecture moving forward. Organizations that understand this balance will be better positioned than those seeking fully hands-off automation or those resisting automation entirely.

Supply chain risk is another area receiving long-overdue attention. Modern software is rarely built entirely in-house. Most applications depend heavily on open-source packages, third-party APIs, container images, libraries, SDKs, and cloud services. This interconnected ecosystem accelerates development, but it also introduces layers of inherited risk that many organizations only partially understand.

Recent years have shown how fragile these dependencies can be. A vulnerability in a single package can cascade across thousands of organizations globally. As software ecosystems become more modular, dependency visibility is no longer optional. Security maturity increasingly depends on understanding not just what an organization builds, but what it builds on top of.

The integration aspect is equally important. New security platforms often fail not because they lack capability, but because they create operational friction. Security teams already work across a fragmented stack of tools: code repositories, CI/CD pipelines, ticketing systems, SIEM platforms, endpoint protection suites, compliance dashboards, and incident response frameworks. Any new system that demands teams abandon existing infrastructure is unlikely to see widespread adoption.

The more successful model is orchestration rather than replacement. Security outputs must flow naturally into existing workflows. Findings should appear where developers already work. Evidence should integrate with audit systems already in place. Recommendations should fit within current deployment and review practices. Adoption is as much about workflow compatibility as technical sophistication.

There is also a broader strategic dimension to consider: the dual-use nature of advanced security intelligence. Tools capable of identifying weaknesses, modeling attack paths, and validating exploitability are inherently powerful. Their value to defenders is obvious, but the same reasoning capabilities can be attractive to malicious actors if released without meaningful controls.

This introduces a governance challenge unlike most conventional developer tooling. Security-focused intelligence cannot be treated as just another general-purpose utility. Access controls, identity verification, scoped permissions, monitoring, and review requirements are becoming foundational design principles rather than optional enterprise add-ons.

This reflects a wider trend in technology governance. As systems become more capable, access models become more nuanced. Rather than universal openness or blanket restriction, organizations are experimenting with tiered access structures that align capability exposure with trust and verification. This is particularly relevant in domains like cybersecurity, where the difference between legitimate research and harmful misuse can be narrow.

For enterprise leaders, the strategic takeaway is clear: security is increasingly becoming an engineering discipline embedded throughout the software lifecycle rather than a specialized checkpoint added late in development. Teams that continue treating security as an isolated function may struggle to keep pace with both threat complexity and software velocity.

Investment priorities are likely to evolve accordingly. Organizations will place greater emphasis on secure development workflows, repository-aware analysis, dependency intelligence, automated evidence generation, and tighter collaboration between engineering and security teams.

For developers, this evolution may initially feel like increased oversight, but in practice it has the potential to reduce friction. When vulnerabilities are surfaced earlier with clearer remediation guidance, developers can resolve issues while code context is still fresh. This is far less disruptive than emergency fixes triggered by post-deployment incidents or external disclosures.

For security practitioners, the change is even more significant. Much of traditional security work has historically been constrained by scale limitations. Human teams simply cannot manually analyze every code path, dependency update, configuration drift, or infrastructure change in modern software environments. Intelligent automation expands capacity, enabling teams to focus on strategic analysis, architecture decisions, incident preparedness, and advanced threat research.

Ultimately, the deeper story is not about one platform or announcement. It is about the continuing convergence of software engineering, security operations, and machine reasoning. The organizations that adapt fastest will likely be those that stop viewing security as a defensive cost center and begin treating resilience as a built-in property of software quality.

In the years ahead, the competitive advantage will not belong solely to teams that ship faster. It will increasingly belong to those that can ship confidently, maintain visibility across growing complexity, and reduce risk without sacrificing development speed. That balance—speed with resilience—is becoming one of the defining operational challenges of modern software organizations.